The NVIDIA GeForce GTX 660 Review: GK106 Fills Out The Kepler Family

by Ryan Smith on September 13, 2012 9:00 AM EST

Meet The GeForce GTX 660

For virtual launches it’s often difficult for us to acquire reference clocked cards since NVIDIA doesn’t directly sample the press with reference cards, and today’s launch of the GeForce GTX 660 is one of those times. The problem stems from the fact that NVIDIA’s partners are hesitant to offer reference clocked cards to the press since they don’t want to lose to factory overclocked cards in benchmarks, which is an odd (but reasonable) concern.

For today’s launch we were able to get a reference clocked card, but in order to do so we had to agree not to show the card or name the partner who supplied the card. As it turns out this isn’t a big deal since the card we received is for all practical purposes identical to NVIDIA’s reference GTX 660, which NVIDIA has supplied pictures of. So let’s take a look at the “reference” GTX 660.

The reference GTX 660 is in many ways identical to the GTX 670, which comes as no great surprise given the similar size of their PCBs, which in turn allows NVIDIA to reuse the same cooler with little modification. Like the GTX 670, the reference GTX 660 is 9.5” long, with the PCB itself making up just 6.75” of that length while the blower and its housing make up the rest. The size of retail cards will vary between these two lengths, as partners like EVGA will be implementing their own blowers similar to NVIDIA’s, while other partners like Zotac will be using open air coolers not much larger than the reference PCB itself.

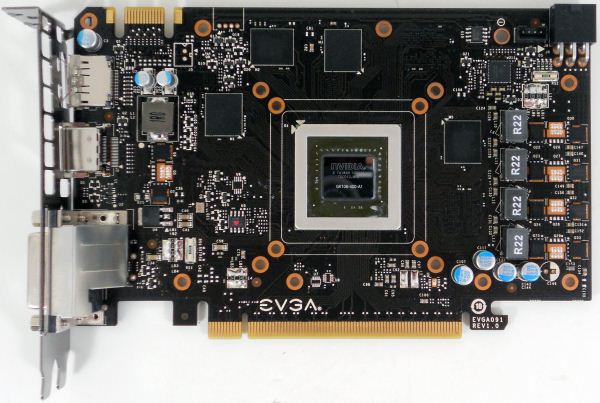

Breaking open one of our factory overclocked GTX 660s (specifically, our EVGA 660 SC, which uses the NV reference PCB), we can see that while the GTX 670 and GTX 660 are superficially similar on the outside, the PCBs themselves are quite different. The biggest change here is that while the 670 PCB made the unusual move of putting the VRM circuitry towards the front of the card, the GTX 660 PCB once more puts it on the far side. With the GTX 670 this was a design choice to get the PCB down to 6.75”, whereas the GTX 660 requires so little VRM circuitry in the first place that it’s no longer necessary to put that circuitry at the front of the card to find the necessary space.

Looking at the GK106 GPU itself, we can see that not only is the GPU smaller than GK104, but the entire GPU package has been reduced in size as well. Meanwhile, not that it makes any functional difference, but GK106 is a bit more rectangular than GK104.

Moving on to the GTX 660’s RAM, we find something quite interesting. Up until now NVIDIA and their partners have regularly used Hynix 6GHz GDDR5 memory modules, with that specific RAM showing up on every GTX 680, GTX 670, and GTX 660 Ti we’ve tested. The GTX 660 meanwhile is the very first card we’ve seen that’s equipped with Samsung’s 6GHz GDDR5 memory modules, marking the first time we’ve seen non-Hynix memory on a GeForce GTX 600 card. Truth be told, though it has no technical implications, we’ve seen so many Hynix equipped cards from both AMD and NVIDIA that it’s refreshing to see that there is in fact more than one GDDR5 supplier in the marketplace.

For the 2GB GTX 660, NVIDIA has outfitted the card with eight 2Gb memory modules, 4 on the front and 4 on the rear. Oddly enough there aren’t any vacant RAM pads on the 2GB reference PCB, so it’s not entirely clear what partners are doing for their 3GB cards; presumably there’s a second reference PCB specifically built to house the 12 memory modules needed for 3GB cards.
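As a quick check of the module counts above: GDDR5 module density is quoted in gigabits (Gb) while card capacity is quoted in gigabytes (GB), so the only conversion needed is 8 bits per byte. The sketch below is purely illustrative; the function name `card_capacity_gb` is our own invention and not anything from NVIDIA.

```python
def card_capacity_gb(module_count: int, module_density_gbit: int) -> float:
    """Total card memory in gigabytes, given the number of memory
    modules and the density of each module in gigabits."""
    bits_per_byte = 8
    return module_count * module_density_gbit / bits_per_byte


# Reference 2GB card: eight 2Gb modules.
print(card_capacity_gb(8, 2))
# 3GB partner cards: twelve 2Gb modules, per the text.
print(card_capacity_gb(12, 2))
```

The same arithmetic shows why a 3GB card cannot be built on a PCB with only eight pads without moving to denser modules.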

Elsewhere we can find the GTX 660’s sole PCIe power socket on the rear of the card, responsible for supplying the other 75W the card needs. As for the front of the card, here we can find the card’s one SLI connector, which like previous generation mainstream video cards supports up to 2-way SLI.
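The power arithmetic behind that “other 75W” can be sketched as follows. This assumes the PCIe slot itself supplies up to 75W, which is what the wording above implies; the constant and function names are ours and are only for illustration.

```python
# Assumption: the PCIe x16 slot provides up to 75W on its own.
SLOT_POWER_W = 75
# From the text: the card's sole PCIe power socket supplies 75W.
AUX_SOCKET_POWER_W = 75


def max_board_power_w(aux_sockets: int = 1) -> int:
    """Upper bound on power available to the card: slot power plus
    the power delivered by each auxiliary PCIe power socket."""
    return SLOT_POWER_W + aux_sockets * AUX_SOCKET_POWER_W


# One auxiliary socket, as on the reference GTX 660.
print(max_board_power_w())
```

This is only a ceiling on what can be delivered to the card, not a measurement of what the card actually draws.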

Finally, looking at display connectivity we once more see the return of NVIDIA’s standard GTX 600 series display configuration. The reference GTX 660 is equipped with 1 DL-DVI-D port, 1 DL-DVI-I port, 1 full size HDMI 1.4 port, and 1 full size DisplayPort 1.2. Like GK104 and GK107, GK106 can drive up to 4 displays, meaning all 4 ports can be put into use simultaneously.

147 Comments

chizow - Tuesday, September 18, 2012

Where did I call you an idiot? You took issue with my response to rarson, who fits my profile as someone who continuously ignores or is unable to understand some very simple concepts backed by mounds of evidence and historical data.

Then he has the gall to question my ability to understand certain concepts? Of course I have trouble understanding opinions founded on stupidity. Unless you have the same problems, why would you take offense?

CeriseCogburn - Thursday, November 29, 2012

Here, I'll call him an idiot and a liar.

He's an idiot and a liar.

He's been one forever.

It will never change.

At least David's butt is smack full of his lipstick, and poor Goliath is rich as can be and the one still standing and alive.

I guess Galidou sucked too hard now David (amd) is almost dead.

Poor Galidou, supporting the underdog under its jockstrap just hasn't worked out at all.

I have a feeling David's paramour might be a bit "upset" again, and again, and again, and again, and again.

Did the idiot get anything correct?

Were his corrections to his incorrect comments that he corrected not needed anyway, since even after the corrections he issued to himself he was still wrong?

I'll answer that.

YES.

Galidou - Monday, September 17, 2012

http://www.techpowerup.com/reviews/NVIDIA/GeForce_...

20% more performance than last gen for the same price a year and a half later isn't a big deal either. Sure, you win on thermal and consumption constraints.

You don't even know me personally and still you have to insult my intelligence, that's what fanboys do... and that's far worse than lacking judgement in my opinion.

I admit that AT LAUNCH the 7970 was worse than the gtx 280 compared to last gen parts but you have to consider what's coming out too. And we all know they have this kind of information, and estimation of the performance of the part for the price.

So right, they should have priced the 7970 at 400$, but that would have started another war with Nvidia (which already sued AMD for price fixing between them), so this price might just reflect the return to normal for both companies. No more 4870 BIG DEAL, back to normal, not because AMD wants to price it BADLY but because they have been sued to do so....

Galidou - Monday, September 17, 2012

You get the first shot on new technology, you price it higher, you lower the price when the new stuff comes out. Same laws for both companies. 4870 was an unknown mistake, the chip wasn't out and the preliminary tests showed it performing way less than when it launched.

It was a precipitated launch. Prices had been fixed WAY before the final product. With driver enhancements and such the 4870 performed WAY above what AMD was hoping for, it was a surprise to them. They couldn't play too much with the price because it was already out in the media for a while. Shit happens, they have been sued for being lucky with their final products for price fixing and next gen cards HAD to go up in price, breaking the amazing deal they sold for.

chizow - Tuesday, September 18, 2012

"I admit that AT LAUNCH the 7970 was worse than the gtx 280 compared to last gen parts but you have to consider what's coming out too."

Finally, now was that so hard?

Galidou - Monday, September 17, 2012

Worst increase in performance, no, gtx 680 is 20-25% faster on average than gtx 580. Biggest increase in price, sure, but do you know anything about price fixing between AMD and Nvidia? Yep, the prices are fixed by both companies.

Even if they were sued just before the days of radeon 4870 and gtx 280 (thus explaining in part why the price of the 4870 wasn't adjusted to Nvidia, because they were forbidden to and were being checked) they continue to do that.

Galidou - Monday, September 17, 2012

While speaking about all that, the pricing of the 4870 and 7970, do you really know everything around that? Because it seems not when you are arguing, you just seem to put everything on the shoulders of a company, not knowing any of the background.

Do you know the price of the 4870 was already decided and it was in correlation with Nvidia's 9000 series performance? That the 4870 was supposed to compete against 400$ cards and not win, and the 4850 supposed to compete against 300$ series cards and not win? You heard right, the 9k series, not the GTX 2xx.

The results even just before the cards came out were already ''known''. The real things were quite different with the final product and last driver enhancements. The performance of the card was actually a surprise, AMD never thought it was supposed to compete against the gtx 280, because they already knew the performance of the latter and that it was ''unattainable'' considering the size of the thing. Life is full of surprises you know.

Do you know that after that, Nvidia sued AMD/ATI for price fixing, asking for more communication between launches and fewer ''surprises''? Yes, they SUED them because they had a nice surprise... AMD couldn't play with prices too much because they were already published by the media and it was not supposed to compete against the gtx2xx series. They had hoped that at 300$ it would ''compete'' against the gtx260 and not win against it, thus justifying the price of the things at launch. And here you are saying it's a mistake, launching insults at me, telling me I have a low intelligence and showing you're a know it all....

Do you know that this price fixing obligation is the reason for the pricing of the 7970? I bet AMD would have loved to price the latter at 400$ and could have done it, but it would have resulted in another war and more suing from Nvidia, which wanted to price its gtx 680 at 500$ 3 months later. So to not break their consumers' joy, they communicate A LOT more than before so everyone is happy, except now it hurts AMD because you compare to last gen and it makes things seem like less of a deal. But with things back to normal we will be able to compare last gen after the refreshed radeon 7xxx parts and new gen after that.

Nvidia the ''giant'' suing companies on the limit of ''extinction'', nice image indeed. Imagine the rich bankers starting to sue people in the streets, and they are the ones you defend so vigorously. If they are that rich, do you rightly think the gtx 280 was well priced even considering it was double the last generation... It just means one thing, they could sell their card for less money but instead they sue the other company to take more money from our pockets, nice image.... very nice..... But that doesn't mean I won't buy an Nvidia card, I just won't defend them as vigorously as you do.... For every Goliath, we need a David, and I prefer David over Goliath.... even if I admire the strength of the latter....

Galidou - Monday, September 17, 2012

I was wrong, Nvidia didn't sue AMD, both companies were sued for price fixing, but things are back now. Anyway, all this stuff is taking way too much of my time. You have your way of seeing things as facts, I have my way of seeing things as my opinion. I'll give you the benefit of the doubt because you're so much more intelligent than me, and I don't care about the ultimate truth as I don't believe in such a thing.

Being sued back in 2008 in the times they were working on gtx2xx and 4870 series might explain the lack of information on each other and the reason why they couldn't play with the price once they knew the surprise. They were probably forbidden to adjust price based on each other's performance for the benefit of the consumer. But the surprise of that SO small chip performing sometimes better than a gpu 110% bigger was a real shock for the small company.

CeriseCogburn - Wednesday, September 19, 2012

You truly are an estrogen doused total licker bleeding red that no tamp can ever stop.

Thanks for the pathetic entertainment.

Now you may whine some more in your sensitive little girl voice.

Galidou - Thursday, September 20, 2012

Wow, chizow's acolyte is back. I guess it's his troll name and when he can't stand it anymore he logs in with CeriseCogburn to insult people so Chizow's name remains clean.

Who's whining? When I read you, it seems that's all you can do, whine whine whine.... Read everything you ever wrote in the last 6 months and that's ALL you do, insulting people and whining.... look in the mirror dude.