Building the 2012 AnandTech SMB / SOHO NAS Testbed

by Ganesh T S on September 5, 2012 6:00 PM EST - Posted in IT Computing, Storage, NAS

Motherboard

A number of vendors compete in the dual-processor workstation motherboard market. At the time of the build, LGA 2011 Xeons had already been introduced, and we decided to focus on boards supporting those processors. Since we wanted to devote one physical disk and one network interface to each VM, it was essential that the board have enough PCIe slots for multiple quad-port server NICs as well as enough native SATA ports. For our build, we chose the Asus Z9PE-D8 WS motherboard, which uses the SSI EEB form factor.

Based on the C602 chipset, this dual LGA 2011 motherboard supports 8 DIMMs and has 7 PCIe 3.0 slots. The lanes can be organized as either 2 x16 + 1 x16 + 1 x8 or 4 x8 + 1 x16 + 1 x8. All the slots are physically 16 lanes wide. The Intel C602 chipset provides two 6 Gbps SATA ports and eight 3 Gbps SATA ports. A Marvell PCIe 9230 controller provides four extra 6 Gbps ports, for a total of 14 SATA ports. This allows us to devote two ports to the host OS of the workstation and one port to each of the twelve planned VMs. The Z9PE-D8 WS motherboard also has two GbE ports based on the Intel 82574L. Two Gigabit LAN controllers are not going to be sufficient for all our VMs. We will address this issue further down in the build.
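The port arithmetic above can be checked with a short sketch. The constant and function names are illustrative. The four-ports-per-NIC figure follows from the quad-port cards mentioned earlier, and the resulting card count is only a derivation from the one-interface-per-VM goal, not a number stated in this section.

```python
import math

# Figures taken from the text above.
CHIPSET_SATA_6G = 2   # C602, 6 Gbps
CHIPSET_SATA_3G = 8   # C602, 3 Gbps
MARVELL_SATA_6G = 4   # Marvell 9230, 6 Gbps
HOST_SATA_PORTS = 2   # reserved for the host OS
PLANNED_VMS = 12
ONBOARD_GBE = 2       # Intel 82574L ports

# Assumption for illustration: each add-in server NIC exposes four ports.
PORTS_PER_NIC = 4


def sata_budget():
    """Return (ports available, ports required) for the planned layout."""
    available = CHIPSET_SATA_6G + CHIPSET_SATA_3G + MARVELL_SATA_6G
    required = HOST_SATA_PORTS + PLANNED_VMS
    return available, required


def quad_port_nics_needed(vms=PLANNED_VMS, ports_per_nic=PORTS_PER_NIC):
    """Number of add-in NICs needed to give every VM its own port."""
    return math.ceil(vms / ports_per_nic)


if __name__ == "__main__":
    available, required = sata_budget()
    print(f"SATA ports available: {available}, required: {required}")
    print(f"Onboard GbE ports: {ONBOARD_GBE}, VMs needing a port: {PLANNED_VMS}")
    print(f"Quad-port NICs for one port per VM: {quad_port_nics_needed()}")
```

The SATA budget comes out exactly even, which is why the onboard Marvell controller matters for this layout, while the two onboard GbE ports fall well short of one per VM.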

The motherboard also has 4 USB 3.0 ports, thanks to an ASMedia USB 3.0 controller. The Marvell SATA-to-PCIe bridge and the ASMedia USB 3.0 controller are connected to the 8 PCIe lanes in the C602. All the PCIe 3.0 lanes come from the processors. Asus also provides support for SSD caching (where any installed SSD can be used as a cache for frequently accessed data, without any size limitations) in the motherboard. The Z9PE-D8 WS also has a Realtek ALC898 HD audio codec, but neither SSD caching nor onboard audio is relevant to our build.

CPUs

One of the main goals of the build was to ensure low power consumption. At the same time, we wanted to run twelve VMs simultaneously. In order to ensure smooth operation, each VM needs at least one vCPU allocated exclusively to it. The Xeon E5-2600 family (Sandy Bridge-EP) has CPUs with core counts ranging from 2 to 8, with TDPs from 60 W to 150 W. Each core has two threads. Keeping in mind the number of VMs we wanted to run, we specifically looked at the 6 and 8 core variants, as two of those processors would give us 12 and 16 cores. Within these, we restricted ourselves to the low power variants. These included the hexa-core E5-2630L (60 W TDP) and the octa-core E5-2648L / E5-2650L (70 W TDP).

CPU decisions for machines meant to run VMs usually have to be made after taking the requirements of the workload into consideration. In our case, the workload for each VM involved IOMeter and Intel NASPT (more on these in the software infrastructure section). Both of these programs tend to be I/O-bound rather than CPU-bound, and can run reliably on even Pentium 4 processors. Therefore, the per-core performance of the three processors was not a factor that we were worried about.

Out of the three processors, we decided to go ahead with the hexa-core Xeon E5-2630L. The cores run at 2 GHz, but can Turbo up to 2.5 GHz when just one core is active. Each core has a 256 KB L2 cache, with a common 15 MB L3. With a TDP of just 60W, it enabled us to focus on energy efficiency. Two Xeon E5-2630Ls (a total of 120W TDP) enabled us to proceed with our plan to run 12 VMs concurrently.
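The selection reasoning can be summarized in a small sketch. It is a simplification, not the procedure actually followed: it assumes one physical core per VM (the text only requires one dedicated vCPU per VM) and treats TDP as the only tie-breaker, since per-core performance was not a concern. The class and function names are illustrative.

```python
from dataclasses import dataclass

SOCKETS = 2
PLANNED_VMS = 12


@dataclass(frozen=True)
class Cpu:
    model: str
    cores: int
    tdp_watts: int


# Low-power candidates and figures as listed in the text.
CANDIDATES = [
    Cpu("E5-2630L", cores=6, tdp_watts=60),
    Cpu("E5-2648L", cores=8, tdp_watts=70),
    Cpu("E5-2650L", cores=8, tdp_watts=70),
]


def pick_lowest_tdp(candidates, vms=PLANNED_VMS, sockets=SOCKETS):
    """Pick the lowest-TDP part that still offers one core per VM."""
    # Assumption: one physical core is dedicated to each VM.
    viable = [c for c in candidates if c.cores * sockets >= vms]
    return min(viable, key=lambda c: c.tdp_watts)


choice = pick_lowest_tdp(CANDIDATES)
total_cores = choice.cores * SOCKETS
total_tdp = choice.tdp_watts * SOCKETS
print(f"{choice.model}: {total_cores} cores, {total_tdp} W total TDP")
```

Under these assumptions all three parts meet the core requirement, so the 60 W part wins on power alone.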

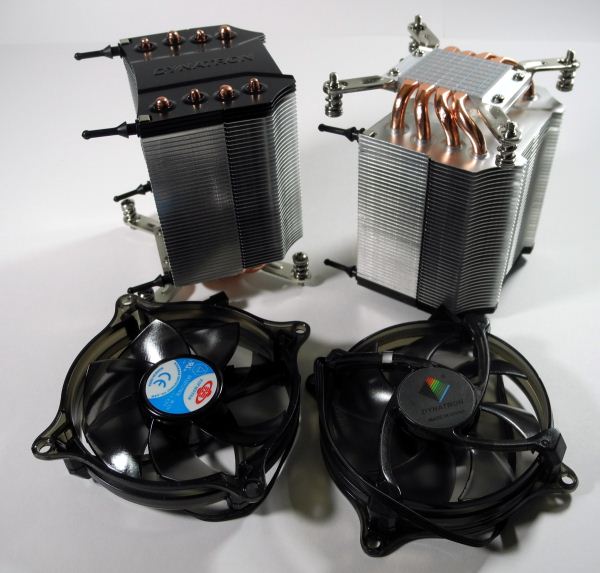

Coolers

The choice of coolers for the processors is dictated by the chassis used for the build. At the start of the build, we decided to go with a tower desktop configuration. Asus recommended the Dynatron R17 for use with the Z9PE-D8 WS, and we went ahead with their suggestion.

The R17 coolers are designed for LGA 2011 sockets in rackmount chassis of 3U and above, as well as tower desktops and workstations. They are made of aluminium fins with four copper heat pipes. Thermal compound is pre-applied at the base. Installation of the R17s was quite straightforward, but care had to be taken to ensure that the side meant to mount the cooler’s fans didn’t face the DIMM slots on the Z9PE-D8 WS.

The fans on the R17 operate between 1000 and 2500 rpm, and consume between 0.96 W and 3 W at these speeds. Noise levels are respectable and range from 17 dBA to 32 dBA. The R17 has the ability to cool CPUs with up to 160 W TDP. The Dynatron R17s kept the 60 W E5-2630Ls between 45 °C and 55 °C even under our full workloads.

74 Comments


waldo - Wednesday, September 5, 2012 - link

Some of the biggest problems I have found in running my small business related to NAS's is file integrity under load. Is there a way to see if they have file integrity issues under load? Not just i/o or response times.

Also, it would be interesting to see how their "feature set" holds up under load, as all of the NAS's purport to offer a variety of additional services other than purely file access/storage. Or is that only applicable to your lengthier reviews?

Lastly, most of these nas's don't have version tracking or something similar, so in a media setup, it would be interesting to see how they handle accessing the same file at the same time....can they serve it multiple times to multiple clients?

waldo - Wednesday, September 5, 2012 - link

One last thought...it would be interesting to see free nas or some other DIY as an alternative.

Peanutsrevenge - Wednesday, September 5, 2012 - link

Top marks! Bet it was satisfying when the SSH script was complete, just press this button and .....

tygrus - Thursday, September 6, 2012 - link

How big a NAS can you test ? Does the server slow it down when testing 7+ virtual client load against NAS ? What is the client/host CPU usage, host system_cpu%, VM overhead ?

Please test the server by running simultaneous tests to multiple NAS. Compare 6 clients alone to 1 NAS at a time with 2 sets of 6 clients to 2 NAS (or 3x4, 4x3). Is there any difference ? Is the test affected by the testbed CPU speed (try using a faster CPU eg. E5-2670) ? Can you test 16 or 24 clients (1 server) with 2 VM / SSD. Might need more RAM ? Now we are getting less SMB / SOHO and more enterprise :)

jwcalla - Thursday, September 6, 2012 - link

I got a bit of a chuckle out of G.Skill sending you non-ECC RAM.

ganeshts - Thursday, September 6, 2012 - link

That is OK for our application :) We aren't running this workstation in a 'production' environment.

bobbozzo - Thursday, September 6, 2012 - link

Curious, would ECC RAM use noticeably more power?

extide - Thursday, September 6, 2012 - link

Why would they bother with ECC ram? Totally un-needed for this application..

bsd228 - Monday, September 10, 2012 - link

ECC is absolutely needed for this application - Data integrity matters, not just data throughput.

bobbozzo - Thursday, September 6, 2012 - link

Page 2, in the sentence "Out of the three processors, we decided to go ahead with the hexa-core Xeon E5-2630L"

The URL in the HREF has a space in it, and therefore doesn't work.

Thanks for the article!