The GeForce GTX 660 Ti Review, Feat. EVGA, Zotac, and Gigabyte

by Ryan Smith on August 16, 2012 9:00 AM EST

That Darn Memory Bus

Among the entire GTX 600 family, the GTX 660 Ti’s one unique feature is its memory controller layout. NVIDIA built GK104 with 4 memory controllers, each 64 bits wide, giving the entire GPU a combined memory bus width of 256 bits. These memory controllers are tied into the ROPs and L2 cache, with each controller forming part of a ROP partition containing 8 ROPs (or rather 1 ROP unit capable of processing 8 operations), 128KB of L2 cache, and the memory controller. To disable any of those things means taking out a whole ROP partition, which is exactly what NVIDIA has done.

The impact on the ROPs and the L2 cache is rather straightforward – render operation throughput is reduced by 25% and there’s 25% less L2 cache to store data in – but the loss of the memory controller is a much tougher concept to deal with. This goes for both NVIDIA on the design end and for consumers on the usage end.

256 is a nice power-of-two number. For video cards with power-of-two memory bus widths, it’s very easy to equip them with a similarly power-of-two memory capacity such as 1GB, 2GB, or 4GB of memory. For various minor technical reasons (mostly the sanity of the engineers), GPU manufacturers like sticking to power-of-two memory busses. And while this is by no means a true design constraint in video card manufacturing, there are ramifications for deviating from it.

The biggest consequence of deviating from a power-of-two memory bus is that under normal circumstances this leads to a card’s memory capacity not lining up with the bulk of the cards on the market. To use the GTX 500 series as an example, NVIDIA had 1.5GB of memory on the GTX 580 at a time when the common Radeon HD 5870 had 1GB, giving NVIDIA a 512MB advantage. Later on however the common Radeon HD 6970 had 2GB of memory, leaving NVIDIA behind by 512MB. This also had one additional consequence for NVIDIA: they needed 12 memory chips where AMD needed 8, which generally inflates the bill of materials more than the price of higher speed memory in a narrower design does. This ended up not being a problem for the GTX 580 since 1.5GB was still plenty of memory for 2010/2011 and the high pricetag could easily absorb the BoM hit, but this is not always the case.

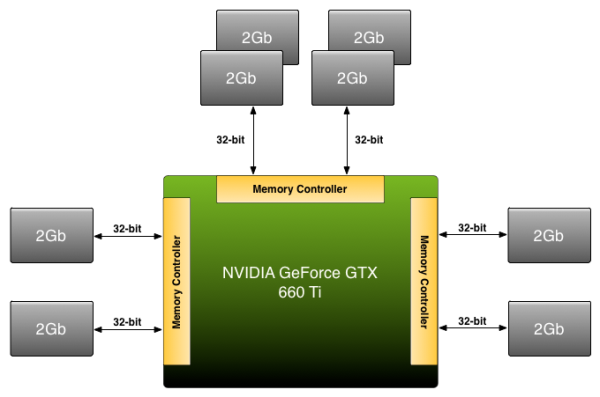

Because NVIDIA has disabled a ROP partition on GK104 in order to make the GTX 660 Ti, they’re dropping from a power-of-two 256bit bus to an off-size 192bit bus. Under normal circumstances this means that they’d need to either reduce the amount of memory on the card from 2GB to 1.5GB, or double it to 3GB. The former is undesirable for competitive reasons (AMD has 2GB cards below the 660 Ti and 3GB cards above) not to mention the fact that 1.5GB is too small for a $300 card in 2012. The latter on the other hand incurs the BoM hit as NVIDIA moves from 8 memory chips to 12 memory chips, a scenario that the lower margin GTX 660 Ti can’t as easily absorb, not to mention how silly it would be for a GTX 680 to have less memory than a GTX 660 Ti.

Rather than take either of the usual routes, NVIDIA is going to take a 3rd route of their own: put 2GB of memory on the GTX 660 Ti anyhow. By putting more memory on one controller than on the other two – in effect breaking the symmetry of the memory banks – NVIDIA can have 2GB of memory attached to a 192bit memory bus. This is a technique that NVIDIA has had available to them for quite some time, but it’s also something they rarely pull out, using it only when necessary.

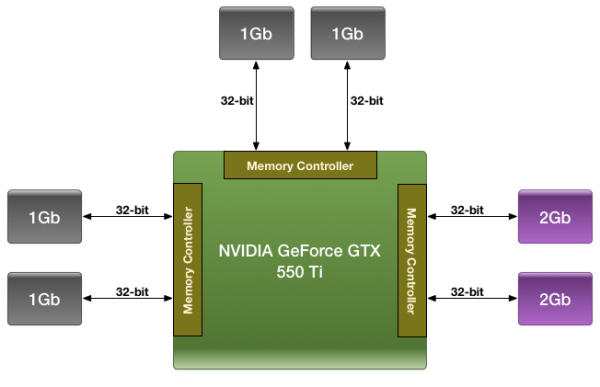

We were first introduced to this technique with the GTX 550 Ti in 2011, which had a similarly sized 192bit memory bus. By using a mix of 2Gb and 1Gb modules, NVIDIA could outfit the card with 1GB of memory rather than the 1.5GB or 768MB that a 192bit memory bus would typically dictate.

For the GTX 660 Ti in 2012 NVIDIA is once again going to use their asymmetrical memory technique in order to outfit the card with 2GB of memory on a 192bit bus, but they’re going to be implementing it slightly differently. Whereas the GTX 550 Ti mixed memory chip density in order to get 1GB out of 6 chips, the GTX 660 Ti will mix up the number of chips attached to each controller in order to get 2GB out of 8 chips. Specifically, there will be 4 chips instead of 2 attached to one of the memory controllers, while the other controllers will continue to have 2 chips. Doing it this way allows NVIDIA to use the same Hynix 2Gb chips they already use in the rest of the GTX 600 series, with the only high-level difference being the width of the bus connecting them.
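The chip arithmetic above can be checked with a short sketch. The function names are hypothetical, and the GTX 550 Ti layout (which controller carries the 2Gb chips) is an assumption made for illustration; the article only states that the card mixed 2Gb and 1Gb modules to reach 1GB with 6 chips.

```python
# Sketch: per-controller capacity from the chips attached to each controller.
# Chip densities are in megabits (2Gb = 2048 Mbit, 1Gb = 1024 Mbit).
MBIT_PER_MB = 8


def controller_capacities_mb(layout_mbit):
    """layout_mbit holds one list of chip densities (Mbit) per memory controller."""
    return [sum(chips) // MBIT_PER_MB for chips in layout_mbit]


def summarize(layout_mbit):
    """Split total capacity into the part every controller can match and the rest."""
    caps = controller_capacities_mb(layout_mbit)
    total = sum(caps)
    symmetric = min(caps) * len(caps)  # portion that could be spread evenly
    return {
        "per_controller_mb": caps,
        "total_mb": total,
        "symmetric_mb": symmetric,
        "leftover_mb": total - symmetric,
    }


# GTX 660 Ti as described: 8 x 2Gb chips, 4 on one controller and 2 on each of the others.
GTX_660_TI = [[2048] * 4, [2048] * 2, [2048] * 2]

# GTX 550 Ti: 6 chips mixing 2Gb and 1Gb parts; the placement here is an assumption.
GTX_550_TI = [[2048] * 2, [1024] * 2, [1024] * 2]

if __name__ == "__main__":
    print("GTX 660 Ti:", summarize(GTX_660_TI))
    print("GTX 550 Ti:", summarize(GTX_550_TI))
```

For the GTX 660 Ti layout this gives 1.5GB that all three controllers can share evenly, plus 512MB that exists only on the controller with 4 chips.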

Of course at a low-level it’s more complex than that. In a symmetrical design with an equal amount of RAM on each controller it’s rather easy to interleave memory operations across all of the controllers, which maximizes performance of the memory subsystem as a whole. However complete interleaving requires that kind of a symmetrical design, which means it’s not quite suitable for use on NVIDIA’s asymmetrical memory designs. Instead NVIDIA must start playing tricks. And when tricks are involved, there’s always a downside.

The best case scenario is always going to be that the entire 192bit bus is in use by interleaving a memory operation across all 3 controllers, giving the card 144GB/sec of memory bandwidth (192bit * 6GHz / 8). But that can only be done at up to 1.5GB of memory; the final 512MB of memory is attached to a single memory controller. This invokes the worst case scenario, where only one 64-bit memory controller is in use, reducing memory bandwidth to a much more modest 48GB/sec.
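As a quick check on those two figures, here is a minimal sketch of the same arithmetic. The function name is hypothetical, and the 6GHz figure is treated as an effective data rate of 6 gigabits per second per pin.

```python
def peak_bandwidth_gb_per_sec(bus_width_bits, data_rate_gbps_per_pin):
    """Peak bandwidth in GB/sec: pins times per-pin rate, divided by 8 bits per byte."""
    return bus_width_bits * data_rate_gbps_per_pin / 8


# All three 64-bit controllers in use (the interleaved 1.5GB region).
best_case = peak_bandwidth_gb_per_sec(192, 6.0)

# Only one 64-bit controller in use (the final 512MB).
worst_case = peak_bandwidth_gb_per_sec(64, 6.0)

if __name__ == "__main__":
    print(f"best case:  {best_case:.0f} GB/sec")
    print(f"worst case: {worst_case:.0f} GB/sec")
```

The worst case is exactly one third of the best case, since bandwidth scales linearly with the number of controllers servicing a request.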

How NVIDIA spreads out memory accesses will have a great deal of impact on when we hit these scenarios. In the past we’ve tried to divine how NVIDIA is accomplishing this, but even with the compute capability of CUDA, memory appears to be too far abstracted for us to test any specific theories. And because NVIDIA is continuing to label the internal details of their memory bus a competitive advantage, they’re unwilling to share the details of its operation with us. Thus we’re largely dealing with a black box here, one where poking and prodding doesn’t produce much in the way of meaningful results.

As with the GTX 550 Ti, all we can really say at this time is that the performance we get in our benchmarks is the performance we get. Our best guess remains that NVIDIA is interleaving the lower 1.5GB of address space while pushing the last 512MB of address space into the larger memory bank, but we don’t have any hard data to back it up. For most users this shouldn’t be a problem (especially since GK104 is so wishy-washy at compute), but it remains that there’s always a downside to an asymmetrical memory design. With any luck one day we’ll find that downside and be able to better understand the GTX 660 Ti’s performance in the process.
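To make that guess concrete, here is a purely illustrative sketch of such a mapping. Nothing in it comes from NVIDIA: the 256-byte interleave stride, the controller numbering, and the function name are all hypothetical, and the real hardware may behave quite differently.

```python
MB = 1024 * 1024

NUM_CONTROLLERS = 3
INTERLEAVED_BYTES = 1536 * MB   # lower 1.5GB, spread across all controllers
TOTAL_BYTES = 2048 * MB         # 2GB card
STRIDE_BYTES = 256              # hypothetical interleave granularity
LARGE_CONTROLLER = 0            # hypothetical index of the controller with 4 chips


def controller_for(address):
    """Return the controller that would service a byte address under this guess."""
    if not 0 <= address < TOTAL_BYTES:
        raise ValueError("address outside the 2GB range")
    if address < INTERLEAVED_BYTES:
        # Round-robin consecutive stride-sized blocks across the controllers.
        return (address // STRIDE_BYTES) % NUM_CONTROLLERS
    # Everything above 1.5GB lands on the single larger memory bank.
    return LARGE_CONTROLLER


if __name__ == "__main__":
    for addr in (0, 256, 512, 768, INTERLEAVED_BYTES, TOTAL_BYTES - 1):
        print(hex(addr), "-> controller", controller_for(addr))
```

Under a scheme like this, a large sequential read below 1.5GB keeps all three controllers busy, while one above 1.5GB queues entirely on a single controller.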

313 Comments

CeriseCogburn - Saturday, August 25, 2012 - link

The 660Ti has a bios SUPER roxxor feature...in the MSI version.. ROFL !! hahaha

http://www.techpowerup.com/reviews/MSI/GTX_660_Ti_...

It seems that MSI has added some secret sauce, no other board partner has, to their card's BIOS. One indicator of this is that they raised the card's default power limit from 130 W to 175 W, which will certainly help in many situations.

The card essentially uses the same power as other cards, but is faster - leading to improved performance per Watt.

Overclocking works great as well and reaches the highest real-life performance, despite not reaching the lowest GPU clock. This is certainly an interesting development. We will, hopefully, see more board partners pick up this change.

ROFL HAHAHAAHAAAAAAAAAAA

So this is the one you want now Galidou.

" Pros: This thing is pretty amazing. Tried running Skyrim on Ultra, 2k textures, and 14 other visual mods. With this card, I ran it all with no lagg at all, with a temp under 67. Love it. "

http://www.newegg.com/Product/Product.aspx?Item=N8...

Galidou - Tuesday, September 4, 2012 - link

Gigabyte did the same, the board power is up to 180 watts if you tweak it, and still, with both overclocked (my wife's Gigabyte 660 Ti OC and my Sapphire 7950 OC), the 7950 wins hands down at 3 monitor resolution. How can you still be trying to explain things when the only side of the medal you can speak of is Nvidia? Sorry, I see the good of both while you can't say a good thing about AMD. Both of my computers use Intel overclocked Sandy Bridge/Ivy Bridge K CPUs. I'm no AMD fan, but I can recognize I did the right thing: I did my research, having BOTH freaking cards in HANDS and testing them side by side with my 3570k @ 4,6ghz.

My 7950 wins @ 3 monitors in skyrim EASILY, you can't say anything to that because you ain't got both cards in hands. Geez, will you freaking understand some day. And no I ain't got any freaking problem with my drivers... And I paid the 7950 the same price than the gtx 660 ti. EXACT same price. 319$ before taxes.

Geez it's complicated when arguing with you because you ain't open to any opinions/facts other than: AMD IS CRAP, NVIDIA WINS EVERYTHING, AMD IS CRAP, NVIDIA WINS EVERYTHING, HERE'S MY LINK TO A WEBSITE THAT SHOWS THE 660TI WINNING AGAINST A 7970 AT EVERYTHING EVEN 6 MONITORS LOOK LOOK LOOK.

TheJian - Friday, August 24, 2012 - link

I was speaking to their finances. If you see in one of my other posts, I believed they deserved 20bil from Intel, but courts screwed them. That is part of what I meant. They deserved their profits and more. Tough to get profits when Intel is stealing them basically by blocking your products at every end. No comment was directed at "dumb" employees. I said it was hard to overcome, not easy. Also that they had the crown for 3 years and weren't allowed to get just desserts. I'm sorry you didn't get that from the posts. I like AMD. I just fear they're on their last financial leg. I've owned their stock 4 times over the last 10 years. There doesn't look like there will be a 5th is all I'm saying. I speak from a stock/company financial position sometimes since I've bought both and follow their income statements. I'm sure they're all great people that work there, no comment on them (besides management's mishandling of Dirk Meyer, ATI overpurchase).

felipetga - Thursday, August 16, 2012 - link

I have been holding to upgrade my GTX 460 256bits. I wonder if this card will be bottlenecked by my C2Q 9550 @ 3.6ghz....

dishayu - Thursday, August 16, 2012 - link

It won't. You need to SLI/CF 2 top end cards for the processor to be a bottleneck.

tipoo - Thursday, August 16, 2012 - link

Only on some games, but the majority aren't as CPU intensive as they are GPU intensive, so it would still be a nice upgrade for you.

Jamahl - Thursday, August 16, 2012 - link

Do you realise that the majority of 660 Ti's being benchmarked at other techsites are overclocked vs the stock Radeons?

Biorganic - Thursday, August 16, 2012 - link

Exactly this. Anyone who follows these respective cards, 7950:670, 7970:680 etc knows that the AMD alternatives have excellent overclocking potential. All these reviews are comparing high clocked GTX vs stock or very conservatively boosted AMD cards. I can get my 7950 to 1000 MHz on stock voltage. That will destroy this toy they call a TI. Sorry but the results seem a bit biased.

Ryan Smith - Thursday, August 16, 2012 - link

"Sorry but the results seem a bit biased."

Just so we're clear, are you talking about our article, or articles on other sites?

If it's the former, in case you've missed it, we are explicitly testing a reference clocked GTX 660 Ti in the form of Zotac's card at reference clocks (this is hardware identical to their official reference clocked model).

mwildtech - Thursday, August 16, 2012 - link

Biased?? This guy is an idiot. Anandtech is the least biased tech site on the interwebs. Ryan - awesome review! keep up the good work.