The next-gen MacBook Pro with Retina Display Review

by Anand Lal Shimpi on June 23, 2012 4:14 AM EST

Driving the Retina Display: A Performance Discussion

As I mentioned earlier, there are quality implications of choosing the higher-than-best resolution options in OS X. At 1680 x 1050 and 1920 x 1200 the screen is drawn with 4x the number of pixels (3360 x 2100 and 3840 x 2400 respectively), elements are scaled appropriately, and the result is downscaled to 2880 x 1800. The quality impact is negligible, however, especially if you actually need the added real estate. As you’d expect, there is also a performance penalty.
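To put rough numbers on that, here is a quick back-of-the-envelope sketch in Python. It assumes the scaled modes simply render at 2x in each dimension before being downscaled to the panel, and that the default setting corresponds to a 1440 x 900 workspace; the function names are mine and purely illustrative.

```python
# Rough pixel math for the rMBP's scaled modes.
# Assumption: each mode is rendered at 2x in both dimensions,
# then downscaled to the native 2880 x 1800 panel.

PANEL = (2880, 1800)  # native panel resolution


def backing_store(width, height, scale=2):
    """Return the size of the off-screen render target for a given mode."""
    return width * scale, height * scale


def megapixels(width, height):
    """Return the pixel count in millions."""
    return width * height / 1e6


if __name__ == "__main__":
    panel_mp = megapixels(*PANEL)
    for mode in [(1440, 900), (1680, 1050), (1920, 1200)]:
        w, h = backing_store(*mode)
        mp = megapixels(w, h)
        print(f"{mode[0]} x {mode[1]} -> rendered at {w} x {h} "
              f"({mp:.2f}MP, {mp / panel_mp:.2f}x the panel)")
```

The 1920 x 1200 setting works out to a 3840 x 2400 render target, or roughly 9.2MP, all of which has to be drawn and then scaled down on every update.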

At the default setting, both Intel’s HD 4000 and NVIDIA’s GeForce GT 650M already have to render and display far more pixels than either GPU was ever intended to handle. At the 1680 and 1920 settings, however, the GPUs are doing more work than even their high-end desktop counterparts are used to. In writing this article it finally dawned on me exactly what has been happening at Intel over the past few years.

Steve Jobs set a path to bringing high resolution displays to all of Apple’s products, likely beginning several years ago. There was a period of time when Apple kept hiring ex-ATI/AMD Graphics CTOs, first Bob Drebin and then Raja Koduri (although less public, Apple also hired chief CPU architects from AMD and ARM among other companies - but that’s another story for another time). You typically hire smart GPU guys if you’re building a GPU; the alternative is to hire them if you need to be able to work with existing GPU vendors to deliver the performance necessary to fulfill your dreams of GPU dominance.

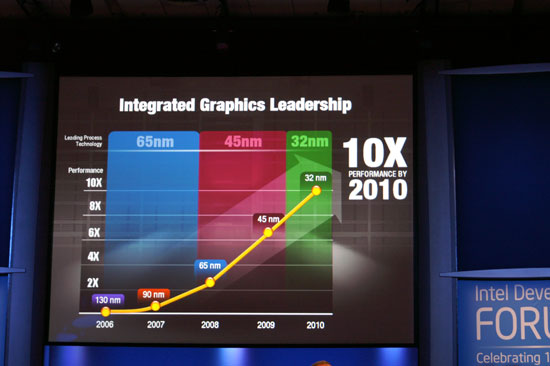

In 2007 Intel promised to deliver a 10x improvement in integrated graphics performance by 2010.

In 2009 Apple hired Drebin and Koduri.

In 2010 Intel announced that the curve had shifted. Instead of 10x by 2010 the number was now 25x. Intel’s ramp was accelerated, and it stopped providing updates on just how aggressive it would be in the future. Paul Otellini’s keynote from IDF 2010 gave us all a hint of what was to come (emphasis mine):

But there has been a fundamental shift since 2007. Great graphics performance is required, but it isn't sufficient anymore. If you look at what users are demanding, they are demanding an increasingly good experience, robust experience, across the spectrum of visual computing. Users care about everything they see on the screen, not just 3D graphics. And so delivering a great visual experience requires media performance of all types: in games, in video playback, in video transcoding, in media editing, in 3D graphics, and in display. And Intel is committed to delivering leadership platforms in visual computing, not just in PCs, but across the continuum.

Otellini’s keynote would set the tone for the next few years of Intel’s evolution as a company. Even after this keynote Intel made a lot of adjustments to its roadmap, heavily influenced by Apple. Mobile SoCs got more aggressive on the graphics front as did their desktop/notebook counterparts.

At each IDF I kept hearing about how Apple was the biggest motivator behind Intel’s move into the GPU space, but I never really understood the connection until now. The driving factor wasn’t just the demands of current applications, but rather a dramatic increase in display resolution across the lineup. It’s why Apple has been at the forefront of GPU adoption in its iDevices, and it’s why Apple has been pushing Intel so very hard on the integrated graphics revolution. If there’s any one OEM we can thank for having a significant impact on Intel’s roadmap, it’s Apple. And it’s just getting started.

Sandy Bridge and Ivy Bridge were both good steps for Intel, but Haswell and Broadwell are the designs that Apple truly wanted. As fond as Apple has been of using discrete GPUs in notebooks, it would rather get rid of them if at all possible. For many SKUs Apple has already done so. Haswell and Broadwell will allow Apple to bring integration to even some of the Pro-level notebooks.

To be quite honest, the hardware in the rMBP isn’t enough to deliver a consistently smooth experience across all applications. At 2880 x 1800 most interactions are smooth, but things like zooming windows or scrolling on certain web pages are clearly sub-30fps. At the higher scaled resolutions, since the GPU has to render as much as 9.2MP, even UI performance can be sluggish. There’s simply nothing that can be done at this point - Apple is pushing the limits of the hardware we have available today, far beyond what any other OEM has done. Future iterations of the Retina Display MacBook Pro will have faster hardware with embedded DRAM that will help mitigate this problem. But there are other limitations: many elements of screen drawing are still done on the CPU, and because CPUs are largely serial architectures, their ability to scale performance with dramatically higher resolutions is limited.

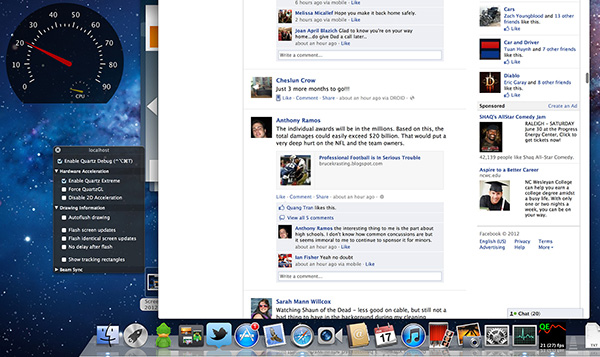

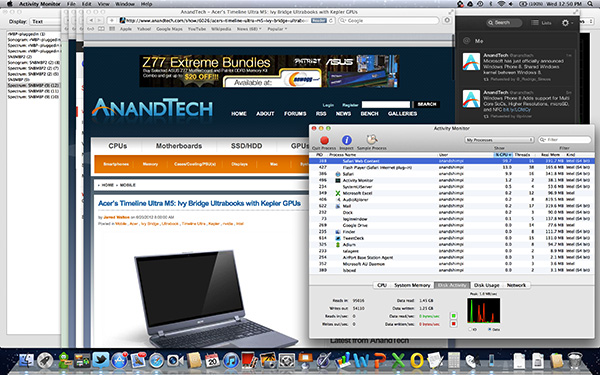

Some elements of drawing in Safari for example aren’t handled by the GPU. Quickly scrolling up and down on the AnandTech home page will peg one of the four IVB cores in the rMBP at 100%:

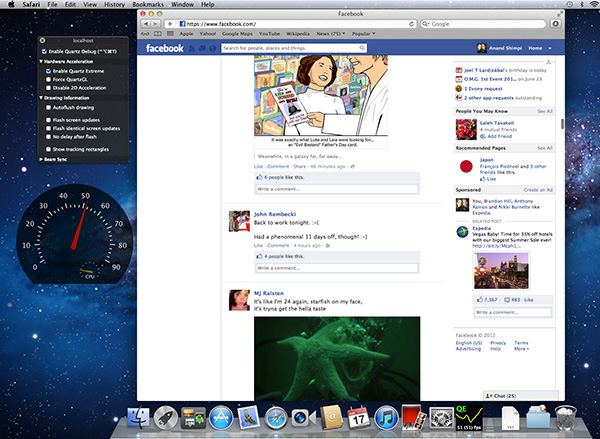

The GPU has an easy time with its part of the process but the CPU’s workload is borderline too much for a single core to handle. Throw a more complex website at it and things get bad quickly. Facebook combines a lot of compressed images with text - every single image is decompressed on the CPU before being handed off to the GPU. Combine that with other elements that are processed on the CPU and you get a recipe for choppy scrolling.

To quantify exactly what I was seeing I measured frame rate while scrolling as quickly as possible through my Facebook news feed in Safari on the rMBP as well as my 2011 15-inch High Res MacBook Pro. While last year’s MBP delivered anywhere from 46 - 60 fps during this test, the rMBP hovered around 20 fps (18 - 24 fps was the typical range).

Scrolling in Safari on a 2011, High Res MBP - 51 fps

Scrolling in Safari on the rMBP - 21 fps
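For a sense of what those frame rates mean per frame, the sketch below converts them to frame times and to a crude pixels-per-second figure. It assumes the 2011 High Res MBP panel is 1680 x 1050 and that every frame redraws the full screen, which overstates the real work since scrolling reuses a lot of content; treat it as an illustration rather than a measurement.

```python
# Frame-time and throughput arithmetic for the scrolling test.
# Assumptions: 2011 High Res MBP = 1680 x 1050, rMBP = 2880 x 1800,
# and a full-screen redraw every frame (a simplification).


def frame_time_ms(fps):
    """Milliseconds available per frame at a given frame rate."""
    return 1000.0 / fps


def pixels_per_second(width, height, fps):
    """Pixels pushed per second if every frame redraws the whole screen."""
    return width * height * fps


if __name__ == "__main__":
    tests = {
        "2011 High Res MBP": (1680, 1050, 51),
        "rMBP": (2880, 1800, 21),
    }
    print(f"60 fps budget: {frame_time_ms(60):.1f} ms per frame")
    for name, (w, h, fps) in tests.items():
        mpps = pixels_per_second(w, h, fps) / 1e6
        print(f"{name}: {frame_time_ms(fps):.1f} ms per frame, "
              f"~{mpps:.0f} MP/s")
```

By this rough measure the rMBP is actually moving somewhat more pixels per second than last year's machine; it just has nearly 3x as many to move per frame, so each frame takes far longer than the 60 fps budget allows.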

Remember, at 2880 x 1800 there are simply more pixels to push and more work to be done by both the CPU and the GPU. It’s even worse in those applications that have higher quality assets: the CPU now has to decode images at 4x the resolution of what it’s used to. Future CPUs will take this added workload into account, but it’ll take time to get there.
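The 4x figure is easy to see with a little arithmetic. The sketch below assumes a decoded image occupies 4 bytes per pixel (8-bit RGBA) and uses a hypothetical 600 x 400 image as the example; neither number comes from a real page.

```python
# How much more data the CPU produces when decoding a 2x ("Retina") asset.
# Assumptions: 4 bytes per decoded pixel (8-bit RGBA), and a hypothetical
# 600 x 400 image standing in for a typical web asset.

BYTES_PER_PIXEL = 4


def decoded_bytes(width, height, bytes_per_pixel=BYTES_PER_PIXEL):
    """Size of the uncompressed bitmap the CPU hands off to the GPU."""
    return width * height * bytes_per_pixel


if __name__ == "__main__":
    standard = decoded_bytes(600, 400)
    retina = decoded_bytes(600 * 2, 400 * 2)  # 2x in each dimension
    print(f"standard asset: {standard / 1e6:.2f} MB decoded")
    print(f"2x asset:       {retina / 1e6:.2f} MB decoded "
          f"({retina // standard}x the data)")
```

Doubling each dimension quadruples the number of pixels, so the decode work and the amount of data passed to the GPU both scale by roughly the same factor.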

The good news is Mountain Lion provides some relief. At WWDC Apple mentioned the next version of Safari is ridiculously fast, but it wasn’t specific about why. It turns out that Safari leverages Core Animation in Mountain Lion and is more GPU accelerated as a result. Facebook is still a challenge because of the mixture of CPU decoded images and a standard web page, but the experience is a bit better. Repeating the same test as above I measured anywhere from 20 - 30 fps while scrolling through Facebook on ML’s Safari.

Whereas I would consider the rMBP experience under Lion to be borderline unacceptable, everything is significantly better under Mountain Lion. Don’t expect buttery smoothness across the board; you’re still asking a lot of the CPU and GPU, but it’s a lot better.

471 Comments

KoolAidMan1 - Friday, July 6, 2012 - link

Please, MBAs have always had good CPUs, and what is happening now with Ivy Bridge is nothing new.

I get it, in your world, Ivy Bridge is magically low when it is in an Apple laptop, got it.

Your argument is undermined because you have none, and your name calling only nails down how desperate you are.

Spunjji - Tuesday, June 26, 2012 - link

Idiot.

KoolAidMan1 - Saturday, June 23, 2012 - link

16:9 display, who cares?

Ohhmaagawd - Saturday, June 23, 2012 - link

"Rubbish, there are plenty of other companies who are far more innovative than Apple whose machines look basic in comparison - Sony's older Z series had a very high resolution 13.1in 1080p screen, blu-ray writer, quad SSDs in RAID 0, integrated and discrete graphics card and the fastest of the dual core i7's while still smaller and lighter than Apple's 13in machines and that was a couple of years ago. Apple aren't even close to touching most of its technology and probably never will."

how is any of that innovative? Quad SSDs/RAID 0 is pretty cool - i'll get them that. But other than that? I looked at these things. They have freaking VGA ports. They look like decent machines with above average designs, but that's about it.

So what's innovative about apple laptops? mag safe. glass trackpads that don't suck (no one else makes a useable trackpad IMO). unibody aluminum case. magnetic latch system is unmatched. even the little prongs on the small power supply are nicer than anything else I've seen. ability to sleep and wake up :) (I still haven't used a Windows laptop that consistently can do this). backlit keyboard. thunderbolt connector (first on mac) allows you to realistically use only two connections - thunderbolt for display/data and power. first to have ultra thin laptops (Air). and now the retina display.

OCedHrt - Sunday, June 24, 2012 - link

As others answered, the VGA ports are there because many projectors still use VGA and the target is upper management and enthusiasts. This comes from Japan's management hierarchy. Expect Sony to refresh with a dongle of some kind in the future now that Apple doesn't have an exclusive on thunderbolt.

Ohhmaagawd - Sunday, June 24, 2012 - link

Thunderbolt was never mac exclusive: http://www.pcmag.com/article2/0,2817,2380954,00.as...

And any company could have used display port as apple did previously.

Answer to the projector prob is a dongle (that's what I do). Or buy a decent projector. Or better yet - just get an HDTV.

Spunjji - Tuesday, June 26, 2012 - link

Oh sure, buy a decent projector for every client you're visiting on your business trips. Problem solved!

Dumbass.

vegemeister - Monday, July 2, 2012 - link

>They have freaking VGA ports.

Er, how is this a problem?

kmmatney - Sunday, June 24, 2012 - link

Too bad Sony doesn't have the balls to make a 16:10 display.

ramb0 - Monday, June 25, 2012 - link

yeah sure. The Finger Swipe security feature is probably the best innovation outside of Apple. I mean, that feature totally took off. It's amazing Apple hasn't caught on yet. I guess they're too busy innovating features that people actually give a fuck about. And by "people" i'm talking about the majority, not little nit pick wankers like you.