NVIDIA GeForce GTX 690 Review: Ultra Expensive, Ultra Rare, Ultra Fast

by Ryan Smith on May 3, 2012 9:00 AM EST

Power, Temperature, & Noise

As always, we’re wrapping up our look at a video card’s stock performance with a look at power, temperature, and noise. More so than even single GPU cards, this is perhaps the most important set of metrics for a multi-GPU card. Poor cooling that results in high temperatures or ridiculous levels of noise can quickly sink a multi-GPU card’s chances. Ultimately with a fixed power budget of 300W or 375W, the name of the game is dissipating that heat as quietly as you can without endangering the GPUs.

GeForce GTX 600 Series Voltages

| Ref GTX 690 Boost Load | Ref GTX 680 Boost Load | Ref GTX 690 Idle |
| --- | --- | --- |
| 1.175v | 1.175v | 0.987v |

It’s interesting to note that the GPU voltages on GTX 680 and GTX 690 are identical; both idle at 0.987v, and both max out at 1.175v for the top boost bin. It would appear that NVIDIA’s binning process for the GTX 690 is looking almost exclusively at leakage; they don’t need to find chips that operate at a lower voltage, they merely need chips that don’t waste too much power.

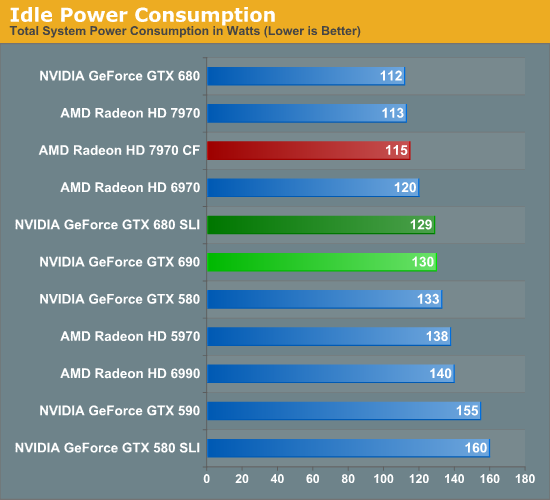

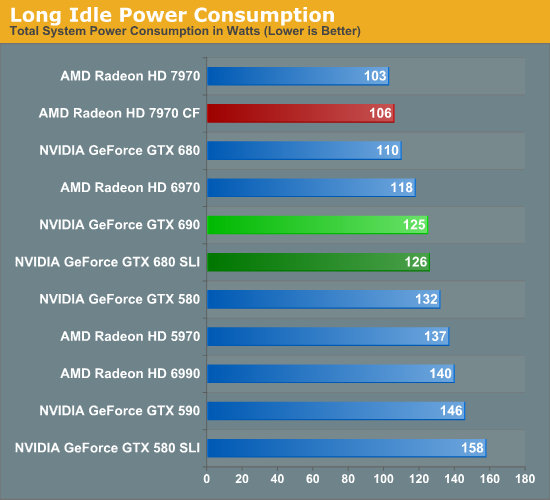

NVIDIA has progressively brought down their idle power consumption and it shows. Where the GTX 590 would draw 155W at the wall at idle, we’re drawing 130W with the GTX 690. For a single GPU NVIDIA’s idle power consumption is every bit as good as AMD’s, however they don’t have any way of shutting off the 2nd GPU like AMD does, meaning that the GTX 690 still draws more power at idle than the 7970CF. Being able to shut off that 2nd GPU really mitigates one of the few remaining disadvantages of a dual-GPU card, and it’s a shame NVIDIA doesn’t have something like this.

Long idle power consumption merely amplifies this difference. Now NVIDIA is running 2 GPUs while AMD is running 0, which means the GTX 690 has us pulling 19W more at the wall than the 7970CF while doing absolutely nothing.

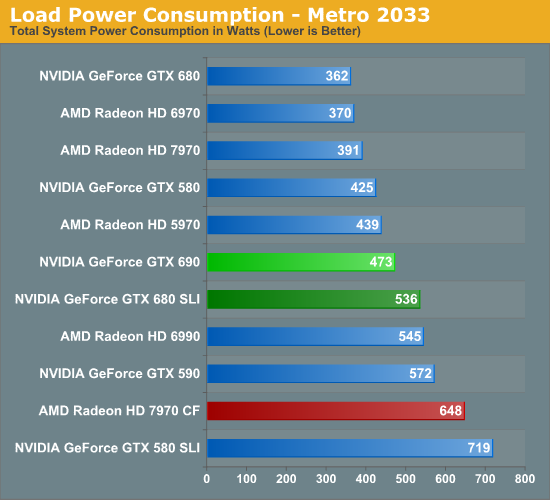

Thanks to NVIDIA’s binning, the load power consumption of the GTX 690 looks very good here. Under Metro we’re drawing 63W less at the wall compared to the GTX 680 SLI, even though we’ve already established that performance is within 5%. The gap with the 7970CF is even larger; the 7970CF may have a performance advantage, but it comes at a cost of 175W more at the wall.

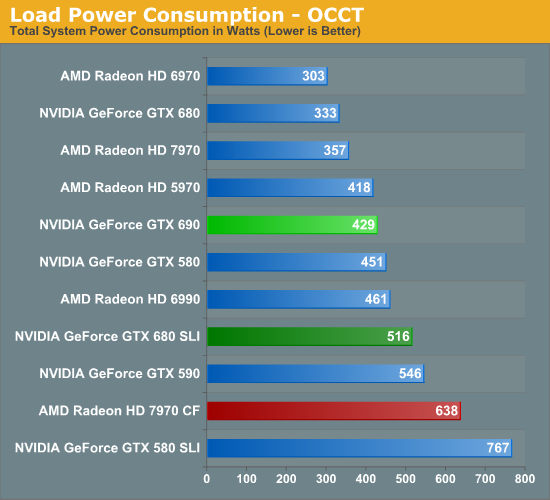

OCCT power is much the same story. Here we’re drawing 429W at the wall, an incredible 87W less than the GTX 680 SLI. In fact a GTX 690 draws less power than a single GTX 580. That is perhaps the single most impressive statistic you’ll see today. Meanwhile compared to the 7970CF the difference at the wall is 209W. The true strength of multi-GPU cards is their power consumption relative to multiple cards, and thanks to NVIDIA’s ability to get the GTX 690 so very close to the GTX 680 SLI the GTX 690 is absolutely sublime here.
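The deltas quoted above can be turned into implied totals and percentage savings with a little arithmetic. The sketch below uses only the three OCCT figures from the text (429W, 87W, and 209W); the function name and dictionary layout are illustrative rather than part of our test tooling. Since these are whole-system measurements at the wall, the percentages describe the test system as a whole, not the cards in isolation.

```python
# OCCT wall-power figures quoted in the text (whole system, in watts).
GTX_690_WATTS = 429
DELTA_VS_690 = {
    "GTX 680 SLI": 87,   # the GTX 690 system draws 87W less
    "7970CF": 209,       # the GTX 690 system draws 209W less
}


def implied_totals(base_watts, deltas):
    """Return {config: (implied wall watts, percent saved by the base card)}."""
    results = {}
    for config, delta in deltas.items():
        total = base_watts + delta            # implied draw of the other setup
        percent_saved = 100.0 * delta / total  # saving relative to that setup
        results[config] = (total, round(percent_saved, 1))
    return results


if __name__ == "__main__":
    totals = implied_totals(GTX_690_WATTS, DELTA_VS_690)
    for config, (total, saved) in totals.items():
        print(f"{config}: {total}W at the wall, GTX 690 saves {saved}%")
```

By this arithmetic the GTX 680 SLI system is implied to draw 516W and the 7970CF system 638W, so the GTX 690 is saving roughly 17% and 33% respectively at the wall.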

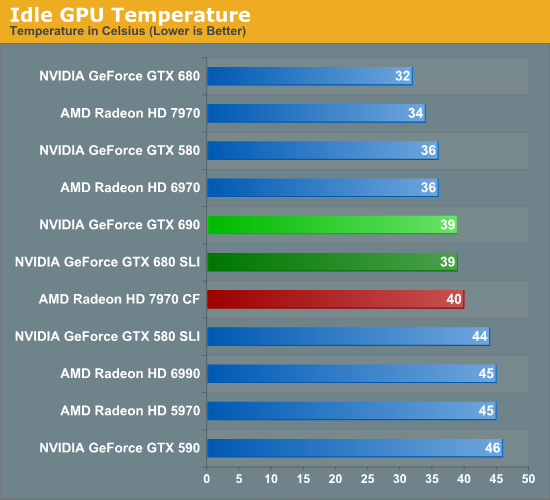

Moving on to temperatures, how well does the GTX 690 do? Quite well. Like all dual-GPU cards GPU temperatures aren’t as good as with single-GPU cards, but it’s also no worse than any dual-GPU setup. In fact of all the dual-GPU cards in our benchmark selection this is the coolest, beating even the GTX 590. Kepler’s low power consumption really pays off here.

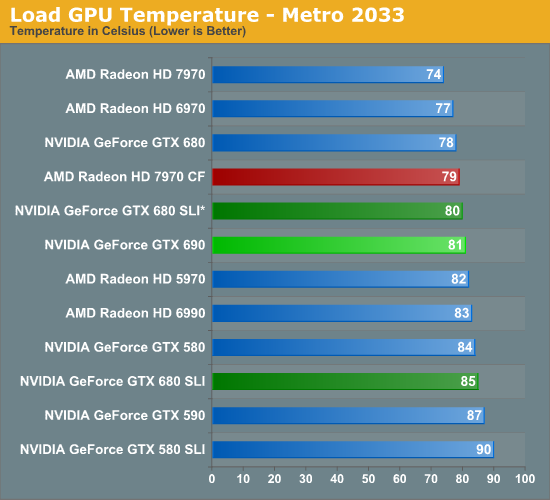

For load temperatures we’re going to split things up a bit. While our official testing protocol is to test with our video cards directly next to each other when doing multi-card configurations, we’ve gone ahead and tested the GTX 680 SLI both in an adjacent and spaced configuration, with the spaced configuration marked with a *.

When it comes to load temperatures the GTX 690 once again does well for itself. Under Metro it’s warmer than most single GPU cards, but only barely so. The difference from a GTX 680 is only 3C, and only 1C from a spaced GTX 680 SLI, while it’s 4C cooler than an adjacent GTX 680 SLI setup. Perhaps more importantly, Metro temperatures are 6C cooler than on the GTX 590.

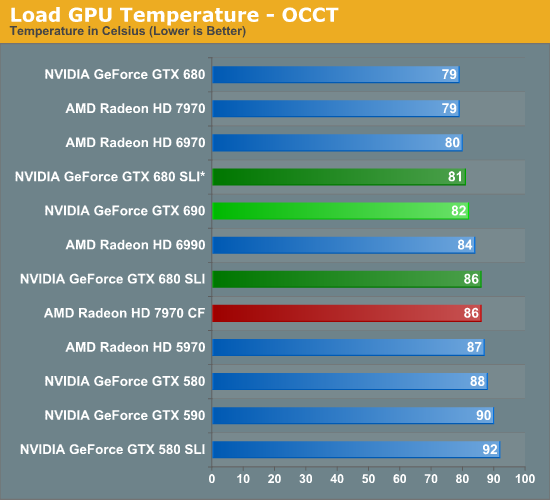

As for OCCT, the numbers are different but the story is the same. The GTX 690 is 3C warmer than the GTX 680, 1C warmer than a spaced GTX 680 SLI, and 4C cooler than an adjacent GTX 680 SLI. Meanwhile temperatures are now 8C cooler than the GTX 590 and even 6C cooler than the GTX 580.

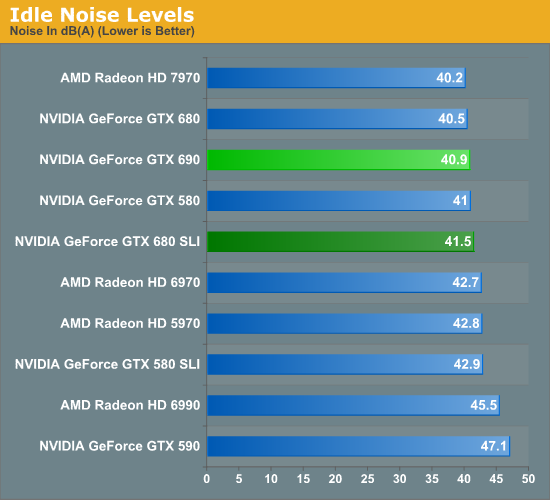

So the GTX 690 does well with power consumption and temperatures, but is there a noise tradeoff? At idle the answer is no; at 40.9dB it’s effectively as quiet as the GTX 680 and incredibly enough over 6dB quieter than the GTX 590. NVIDIA’s progress at idle continues to impress, even if they can’t shut off the second GPU.

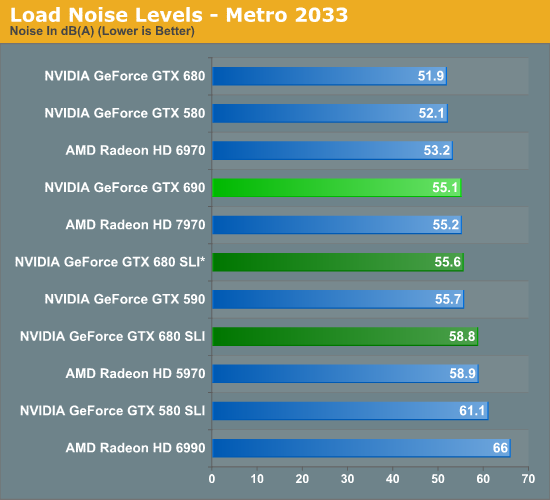

When NVIDIA was briefing us on the GTX 690 they said that the card would be notably quieter than even a GTX 680 SLI, which is quite the claim given how quiet the GTX 680 SLI really is. So out of all the tests we have run, this is perhaps the result we’ve been the most eager to get to. The results are simply amazing. The GTX 690 is quieter than a GTX 680 SLI alright; it’s quieter than a GTX 680 SLI whether the cards are adjacent or spaced. The difference with spaced cards is only 0.5dB under Metro, but it’s still a difference. Meanwhile with that 55.1dB noise level the GTX 690 is doing well against a number of other cards here, effectively tying the 7970 and beating out every other multi-GPU configuration on the board.

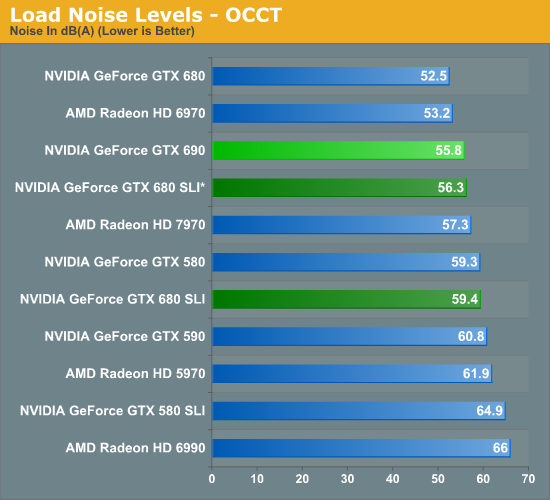

OCCT is even more impressive, thanks to a combination of design and the fact that NVIDIA’s power target system effectively serves as a throttle for OCCT. At 55.8dB it’s only a hair louder than under Metro, and it’s still a hair quieter than a spaced GTX 680 SLI setup. It’s also quieter than a 7970, a GTX 580, and every other multi-GPU configuration we’ve tested. The only cards it’s not quieter than are the GTX 680 and the 6970.
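Because decibels are logarithmic, small-looking gaps in these charts can hide large physical differences, and vice versa. As a rough sketch, the snippet below converts the gaps quoted in this section into sound power and sound pressure ratios using the standard decibel definitions. The helper names are illustrative, and perceived loudness does not track either ratio directly, so treat the output as context rather than as a measure of how much louder a card sounds.

```python
def db_to_power_ratio(delta_db):
    """Sound power ratio corresponding to a level difference in dB."""
    return 10 ** (delta_db / 10)


def db_to_pressure_ratio(delta_db):
    """Sound pressure ratio corresponding to a level difference in dB."""
    return 10 ** (delta_db / 20)


# Level differences taken from the text above.
GAPS_DB = {
    "idle vs GTX 590 (at least)": 6.0,
    "Metro vs spaced GTX 680 SLI": 0.5,
    "OCCT vs Metro on the GTX 690": round(55.8 - 55.1, 1),
}

if __name__ == "__main__":
    for label, gap in GAPS_DB.items():
        power = db_to_power_ratio(gap)
        pressure = db_to_pressure_ratio(gap)
        print(f"{label}: {gap}dB -> {power:.2f}x power, {pressure:.2f}x pressure")
```

A 6dB gap works out to roughly four times the sound power, which is why the idle improvement over the GTX 590 is so notable, while the 0.5dB gap under Metro is only about a 12% difference in sound power.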

All things considered, the GTX 690 is not that much quieter than the GTX 590 under gaming loads, but NVIDIA has improved things just enough that they can beat their own single-GPU cards in SLI. And at the same time the GTX 690 consumes significantly less power for what amounts to a temperature tradeoff of only a couple of degrees. The fact that the GTX 690 can’t quite reach the GTX 680 SLI’s performance may have been disappointing thus far, but after looking at our power, temperature, and noise data it’s a massive improvement on the GTX 680 SLI for what amounts to a very small gaming performance difference.

200 Comments

CeriseCogburn - Thursday, May 3, 2012

Keep laughing, this card cannot solid v-sync 60 at that "tiny panel" with only 4xaa in the amd fans revived favorite game crysis. Can't do it at 1920X guy.

I guess you guys all like turning down your tiny cheap cards settings all the time, even with your cheapo panels?

I mean this one can't even keep up at 1920X, gotta turn down the in game settings, keep the CP tweaked and eased off, etc.

What's wrong with you guys ?

What don't you get ?

nathanddrews - Thursday, May 3, 2012

Currently the only native 120Hz displays (true 120Hz input, not 60Hz frame doubling) are 1920x1080. If you want VSYNC @ 120Hz, then you need to be able to hit at least 120fps @ 1080p. Even the GTX690 fails to do that at maximum quality settings on some games...

CeriseCogburn - Thursday, May 3, 2012

It can't do 60 v-sync at 1920 in crysis, and that's only on 4xaa. These people don't own a single high end card, that's for sure, or something is wrong with their brains.

nathanddrews - Thursday, May 3, 2012

You must be talking about minimum fps, because on Page 5 the GTX690 is clearly averaging 85fps @1080p. Tom's Hardware (love 'em or hate 'em) has benchmarks with AA enabled and disabled. Maximum quality with AA disabled seems to be the best way to get 120fps in nearly every game @ 1080p with this card.

CeriseCogburn - Friday, May 4, 2012

You must be ignoring v-sync and stutter with frames that drop below 60, and forget 120 frames a sec. Just turn down the eye candy... on the 3 year old console ports, that are "holding us back"... at 1920X resolutions.

Those are the facts, combined with the moaning about ported console games.

Ignore those facts and you can rant and wide eye spew like others - now not only is there enough money for $500 card(s)/$1000dual, there's extra money for high end monitors when the current 1920X pukes out even the 690 and CF 7970 - on the old console port games.

Whatever, everyone can continue to bloviate that these cards destroy 1920X, until they look at the held back settings benches and actually engage their brains for once.

hechacker1 - Thursday, May 3, 2012

Well not if you want to do consistent 120FPS gaming. Then you need all the horsepower you can get. Hell my 6970 struggles to maintain 120FPS, and thus makes the game choppy, even though it's only dipping to 80fps or so.

So now that I have a 120FPS monitor, it's incredibly easy to see stutters in game performance.

Time for an upgrade (1080p btw).

Sabresiberian - Thursday, May 3, 2012

Actually, they use the 5760x1200 because most of us Anandtech readers prefer the 1920x1200 monitors, not because they are trying to play favorites.

CeriseCogburn - Thursday, May 3, 2012

Those monitors are very rare. Of course none of you have even one.

Traciatim - Thursday, May 3, 2012

My monitor runs 1920x1200, and I specifically went out of my way to get 16:10 instead of 16:9. You fail.

CeriseCogburn - Friday, May 4, 2012

Yes you went out of your way, why did you have to they are so common, I'm sure you did. In any case, since they are so rare the bias is still present here as shown.