NVIDIA GeForce GTX 690 Review: Ultra Expensive, Ultra Rare, Ultra Fast

by Ryan Smith on May 3, 2012 9:00 AM EST

Power, Temperature, & Noise

As always, we’re wrapping up our look at a video card’s stock performance with a look at power, temperature, and noise. More so than even single GPU cards, this is perhaps the most important set of metrics for a multi-GPU card. Poor cooling that results in high temperatures or ridiculous levels of noise can quickly sink a multi-GPU card’s chances. Ultimately with a fixed power budget of 300W or 375W, the name of the game is dissipating that heat as quietly as you can without endangering the GPUs.

GeForce GTX 600 Series Voltages

| Ref GTX 690 Boost Load | Ref GTX 680 Boost Load | Ref GTX 690 Idle |
| --- | --- | --- |
| 1.175V | 1.175V | 0.987V |

It’s interesting to note that the GPU voltages on the GTX 680 and GTX 690 are identical; both idle at 0.987V, and both max out at 1.175V for the top boost bin. It would appear that NVIDIA’s binning process for the GTX 690 is looking almost exclusively at leakage; they don’t need to find chips that operate at a lower voltage, they merely need chips that don’t waste too much power.

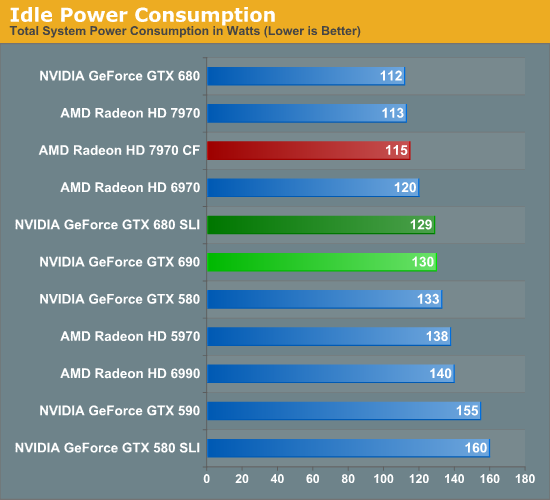

NVIDIA has progressively brought down their idle power consumption and it shows. Where the GTX 590 would draw 155W at the wall at idle, we’re drawing 130W with the GTX 690. For a single GPU, NVIDIA’s idle power consumption is every bit as good as AMD’s. However, NVIDIA doesn’t have any way of shutting off the 2nd GPU like AMD does, meaning that the GTX 690 still draws more power at idle than the 7970CF. Being able to shut off that 2nd GPU really mitigates one of the few remaining disadvantages of a dual-GPU card, and it’s a shame NVIDIA doesn’t have something like this.

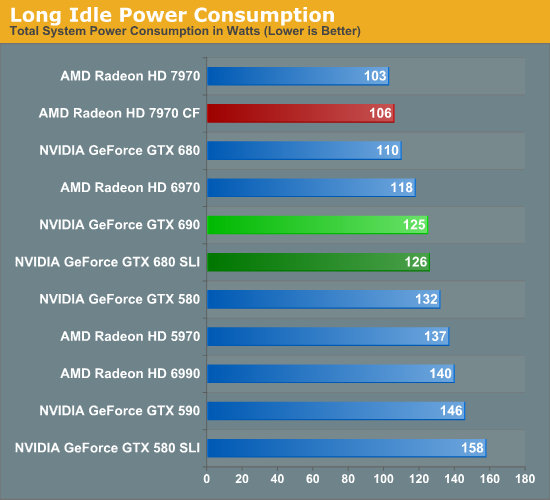

Long idle power consumption merely amplifies this difference. Now NVIDIA is running 2 GPUs while AMD is running 0, which means that with the GTX 690 we’re pulling 19W more at the wall while doing absolutely nothing.

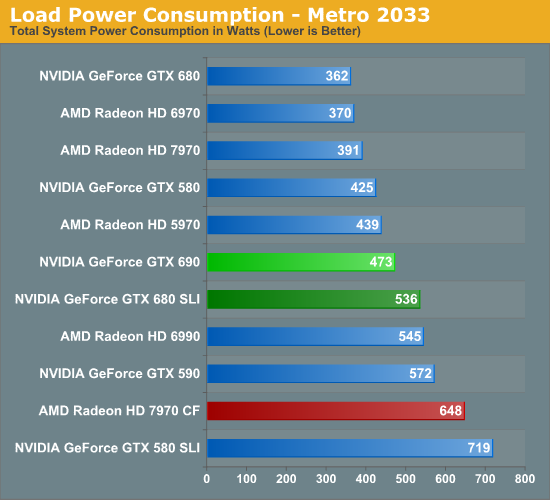

Thanks to NVIDIA’s binning, the load power consumption of the GTX 690 looks very good here. Under Metro we’re drawing 63W less at the wall compared to the GTX 680 SLI, even though we’ve already established that performance is within 5%. The gap with the 7970CF is even larger; the 7970CF may have a performance advantage, but it comes at a cost of 175W more at the wall.

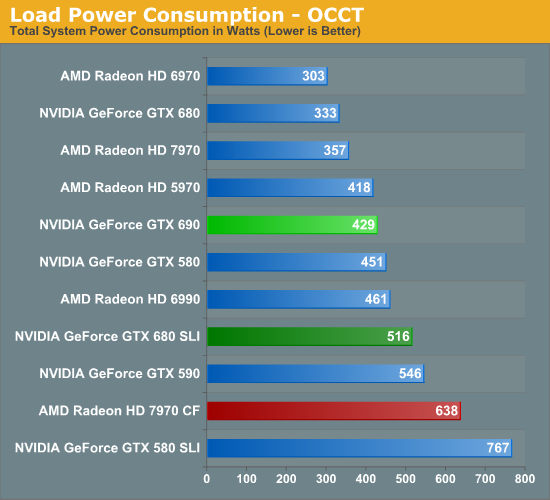

OCCT power is much the same story. Here we’re drawing 429W at the wall, an incredible 87W less than the GTX 680 SLI. In fact a GTX 690 draws less power than a single GTX 580. That is perhaps the single most impressive statistic you’ll see today. Meanwhile compared to the 7970CF the difference at the wall is 209W. The true strength of multi-GPU cards is their power consumption relative to multiple cards, and thanks to NVIDIA’s ability to get the GTX 690 so very close to the GTX 680 SLI the GTX 690 is absolutely sublime here.
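The OCCT comparison above is stated as one absolute reading plus two deltas, so the wall draw of the other two configurations can be backed out with simple arithmetic. The following is a minimal sketch that uses only the three numbers quoted in the paragraph; the variable and function names are illustrative, and the derived totals are implied by the text rather than read off the chart.

```python
# Wall-power figures quoted in the text for the OCCT test, in watts.
gtx690_w = 429        # GTX 690 system draw at the wall
delta_680_sli = 87    # the GTX 680 SLI draws this much more
delta_7970_cf = 209   # the 7970CF draws this much more

# Implied totals for the other two setups (derived, not measured here).
gtx680_sli_w = gtx690_w + delta_680_sli
hd7970_cf_w = gtx690_w + delta_7970_cf


def percent_saving(baseline_w, other_w):
    """Percentage by which baseline_w undercuts other_w."""
    return 100.0 * (other_w - baseline_w) / other_w


if __name__ == "__main__":
    print(f"GTX 680 SLI (implied): {gtx680_sli_w} W")
    print(f"7970CF (implied):      {hd7970_cf_w} W")
    print(f"GTX 690 vs 680 SLI: {percent_saving(gtx690_w, gtx680_sli_w):.1f}% less")
    print(f"GTX 690 vs 7970CF:  {percent_saving(gtx690_w, hd7970_cf_w):.1f}% less")
```

These are whole-system readings at the wall, so the percentages describe the test bed as a whole and not the cards in isolation.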

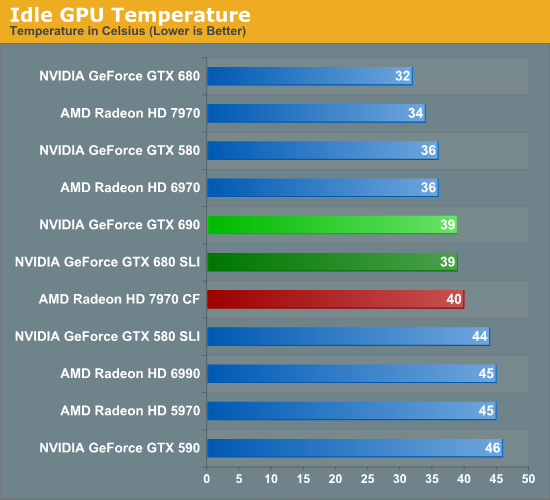

Moving on to temperatures, how well does the GTX 690 do? Quite well. Like all dual-GPU cards GPU temperatures aren’t as good as with single-GPU cards, but it’s also no worse than any dual-GPU setup. In fact of all the dual-GPU cards in our benchmark selection this is the coolest, beating even the GTX 590. Kepler’s low power consumption really pays off here.

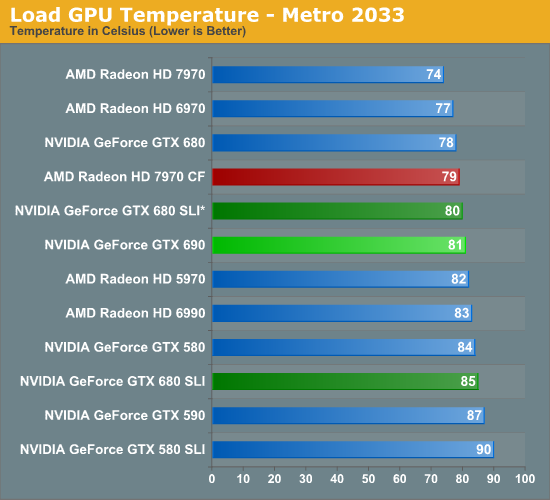

For load temperatures we’re going to split things up a bit. While our official testing protocol is to test with our video cards directly next to each other when doing multi-card configurations, we’ve gone ahead and tested the GTX 680 SLI both in an adjacent and spaced configuration, with the spaced configuration marked with a *.

When it comes to load temperatures the GTX 690 once again does well for itself. Under Metro it’s warmer than most single GPU cards, but only barely so. The difference from a GTX 680 is only 3C, 1C with a spaced GTX 680 SLI, and it’s 4C cooler than an adjacent GTX 680 SLI setup. Perhaps more important is that Metro temperatures are 6C cooler than on the GTX 590.

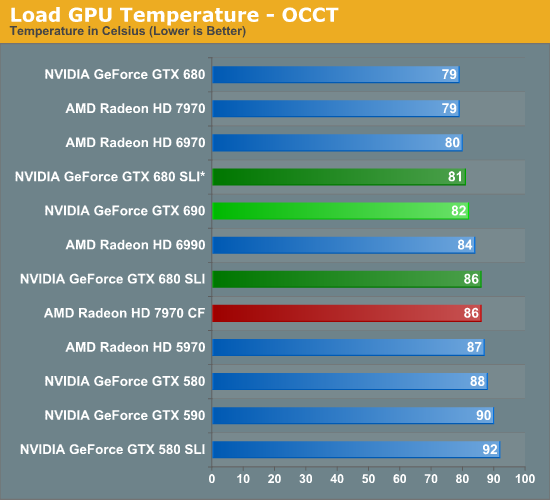

As for OCCT, the numbers are different but the story is the same. The GTX 690 is 3C warmer than the GTX 680, 1C warmer than a spaced GTX 680 SLI, and 4C cooler than an adjacent GTX 680 SLI. Meanwhile temperatures are now 8C cooler than the GTX 590 and even 6C cooler than the GTX 580.

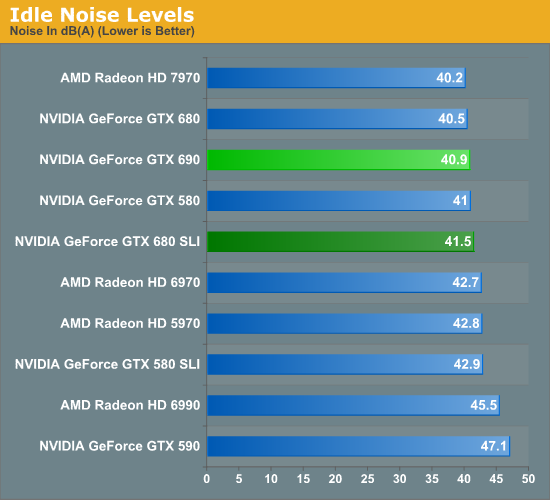

So the GTX 690 does well with power consumption and temperatures, but is there a noise tradeoff? At idle the answer is no; at 40.9dB it’s effectively as quiet as the GTX 680 and incredibly enough over 6dB quieter than the GTX 590. NVIDIA’s progress at idle continues to impress, even if they can’t shut off the second GPU.

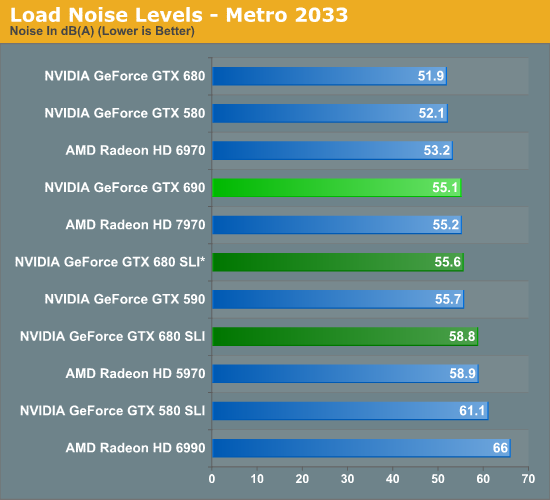

When NVIDIA was briefing us on the GTX 690 they said that the card would be notably quieter than even a GTX 680 SLI, which is quite the claim given how quiet the GTX 680 SLI really is. So out of all the tests we have run, this is perhaps the result we’ve been the most eager to get to. The results are simply amazing. The GTX 690 is quieter than a GTX 680 SLI alright; it’s quieter than a GTX 680 SLI whether the cards are adjacent or spaced. The difference with spaced cards is only 0.5dB under Metro, but it’s still a difference. Meanwhile with that 55.1dB noise level the GTX 690 is doing well against a number of other cards here, effectively tying the 7970 and beating out every other multi-GPU configuration on the board.
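Because decibels are logarithmic, the gaps quoted in this section (0.5dB against a spaced GTX 680 SLI under Metro, and over 6dB against the GTX 590 at idle) are easier to judge as ratios. The sketch below applies the standard decibel definitions; the function names are illustrative, and it says nothing about perceived loudness, which depends on weighting and frequency content.

```python
def power_ratio(delta_db):
    """Sound power (intensity) ratio for a level difference in dB."""
    return 10 ** (delta_db / 10)


def pressure_ratio(delta_db):
    """Sound pressure ratio for a level difference in dB."""
    return 10 ** (delta_db / 20)


if __name__ == "__main__":
    # Level differences taken from the text.
    for label, delta in [("vs spaced GTX 680 SLI, Metro", 0.5),
                         ("vs GTX 590, idle", 6.0)]:
        print(f"{label}: {delta} dB -> "
              f"power x{power_ratio(delta):.2f}, "
              f"pressure x{pressure_ratio(delta):.2f}")
```

Read this way, a 0.5dB gap is a small ratio while a 6dB gap is roughly a fourfold difference in sound power, which is why the idle result against the GTX 590 stands out far more than the Metro result against the spaced SLI setup.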

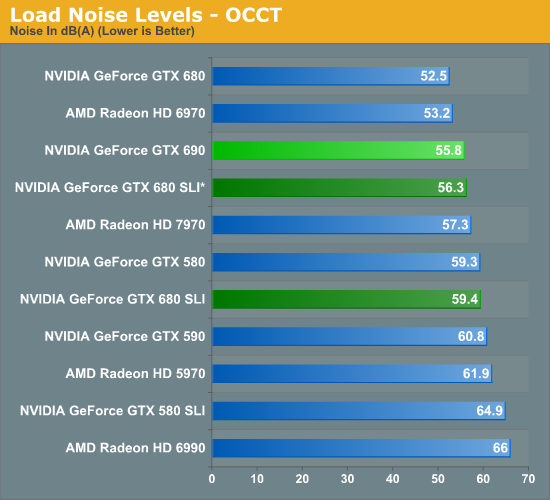

OCCT is even more impressive, thanks to a combination of design and the fact that NVIDIA’s power target system effectively serves as a throttle for OCCT. At 55.8dB it’s not only just a hair louder than under Metro, but it’s still a hair quieter than a spaced GTX 680 SLI setup. It’s also quieter than a 7970, a GTX 580, and every other multi-GPU configuration we’ve tested. The only cards it’s not quieter than are the GTX 680 and the 6970.

With all things considered the GTX 690 is not that much quieter than the GTX 590 under gaming loads, but NVIDIA has improved performance just enough that they can beat their own single-GPU cards in SLI. And at the same time the GTX 690 consumes significantly less power for what amounts to a temperature tradeoff of only a couple of degrees. The fact that the GTX 690 can’t quite reach the GTX 680 SLI’s performance may have been disappointing thus far, but after looking at our power, temperature, and noise data it’s a massive improvement on the GTX 680 SLI for what amounts to a very small gaming performance difference.

200 Comments


JPForums - Thursday, May 3, 2012 - link

Not mine. I'm running a 1920x1200 IPS.

1920x1200 is more common in the higher end monitor market.

A quick glance at newegg shows 16 1920x1200 models at 24" alone. (starting at $230)

Besides, I can't imagine many buy a $1000 video card and pair it with a single $200 display.

It makes more sense to me to check 1920x1200 performance than 1920x1080 for several reasons:

1) 1920x1200 splits the difference between 16x10 and 25x14 or 25x16 better than 1920x1080.

1680x1050 = ~1.7MP

1920x1080=~2MP

1920x1200=~2.3MP

2560*1440=~3.7MP

2560x1600=~4MP

2) People willing to spend $1000 for a video card are generally in a better position to get a nicer monitor. 1920x1200 monitors are more common at higher prices.

3) They already have three of them around to run 5760x1200. Why go get another monitor?
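The pixel counts listed under point 1 are easy to check. This is a minimal sketch using only the resolutions named in the comment; the helper name `megapixels` is illustrative and not something the commenter used.

```python
# Resolutions listed in point 1, as (width, height) in pixels.
RESOLUTIONS = [
    (1680, 1050),
    (1920, 1080),
    (1920, 1200),
    (2560, 1440),
    (2560, 1600),
]


def megapixels(width, height):
    """Total pixel count in millions of pixels."""
    return width * height / 1_000_000


if __name__ == "__main__":
    for w, h in RESOLUTIONS:
        print(f"{w}x{h}: {megapixels(w, h):.2f} MP")

    # Extra rendering load of 1920x1200 relative to 1920x1080.
    extra = megapixels(1920, 1200) / megapixels(1920, 1080) - 1
    print(f"1920x1200 has {extra:.1%} more pixels than 1920x1080")
```

The rounded figures in the comment are approximate, but the ordering and the gap between the two 1920-wide resolutions hold.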

Opinionated Side Points:

Movies transitioned to resolutions much wider than 1080P long ago. A little extra black space really makes no difference.

1920x1200 is a perfectly valid resolution. If Nvidia is having trouble with it, I want to know. When particular resolutions don't scale properly, it is probable that there is either a bug or shenanigans are at work in the more common resolutions.

I prefer using 1920x1200 as a starting point for moving to triple screen setups. I already think 1920x1080 looks squashed, so 5760x1080 looks downright flattened. Also 3240x1920 just doesn't look very surround to me (3600x1920 seems borderline surround).

CeriseCogburn - Saturday, May 5, 2012 - link

There are only 18 models available in all of newegg with 1920x1200 resolution - only 6 of those are under $400, and they are all over $300.

There are 242 models available in 1920x1080, with nearly 150 models under $300.

You people are literally a bad joke when it comes to even a tiny shred of honesty.

Lerianis - Sunday, May 6, 2012 - link

I don't know about the 'sadly' there in all honesty. I personally like 1920*1080 better than *1200, because nearly everything is done in the former resolution.

Stuka87 - Thursday, May 3, 2012 - link

Who buys a GTX690 to play on a 1080P display? Even a 680 is overkill for 1080. You can save a lot of money with a 7870 and still run everything out there.

vladanandtechy - Thursday, May 3, 2012 - link

Stuka i agree with you.....but when you buy such a card....you think in the future....5 maybe 6 years....and i can't guarantee that we will do gaming in 1080p then:)....

retrospooty - Thursday, May 3, 2012 - link

"Stuka i agree with you.....but when you buy such a card....you think in the future....5 maybe 6 years....and i can't gurantee that we will do gaming in 1080p then:)...."

I have to totally disagree with that. Anyone that pays $500+ for a video card is a certain "type" of buyer. That type of buyer will NEVER wait 5-6 years for an upgrade. That guy is getting the latest and greatest of every other generation, if not every generation of cards.

vladanandtechy - Thursday, May 3, 2012 - link

You shouldn't "totally disagree".......meet me...."the exception"....i am the type of buyer who is looking for the "long run"....but i must confess....if i could....i would be the type of buyer you describe....cya

orionismud - Thursday, May 3, 2012 - link

retrospooty and I mean you no disrespect, but if you're spending $500 and buying for the "long run," you're doing it wrong.

If you had spent $250, you could have 80% of the performance for 2.5 years, then spend another $250 and have 200% of the performance for the remaining 2.5 years.

von Krupp - Thursday, May 3, 2012 - link

Don't say that.

I bought two (2) HD 7970s on the premise that I'm not going to upgrade them for a good long while. At least four years, probably closer to six. I ran from 2005 to 2012 with a GeForce 7800GT just fine and my single core AMD CPU was actually the larger reason why I needed to move on.

Now granted, I also purchased a snazzy U2711 just so the power of these cards wouldn't go to waste (though I'm quite CPU-bound by this i7-3820), but I don't consider dropping AA in future titles to maintain performance to be that big of a loss; I already only run with 8x AF because, frankly, I'm too busy killing things to notice otherwise. I intend to drive this rig for the same mileage. It costs less for me to buy the best of the best at the time of purchase for $1000 and play it into the ground than it does to keep buying $350 cards to barely keep up every two years, all over a seven year duration. Since I now have this fancy 2560x1440 resolution and want to use it, the $250-$300 offerings don't cut it. And then, don't forget to adjust for inflation year over year.

So yes, I'm going to be waiting between 4 and 6 years to upgrade. Under certain conditions, buying the really expensive stuff is as much of an economical move as it is a power grab. Not all of us who build $3000 computers do it on a regular basis.

P.S. Thank you consoles for extending PC hardware life cycles. Makes it easier to make purchases.

Makaveli - Thursday, May 3, 2012 - link

lol agree let put a $500 videocard with a $200 TN panel at 1920x1080 umm ya no!