Mobile Ivy Bridge and ASUS N56VM Preview

by Jarred Walton on April 23, 2012 12:02 PM EST

Ivy Bridge HD 4000: Medium Quality Gaming Now Possible

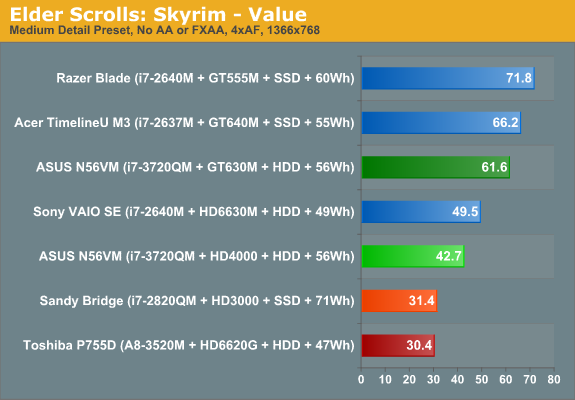

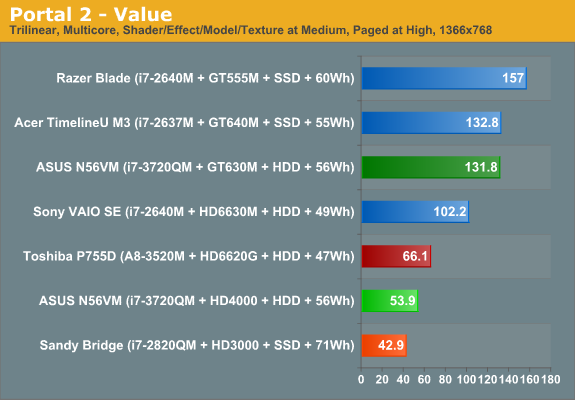

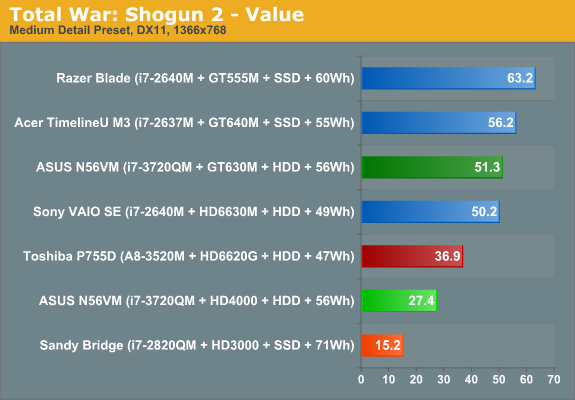

We’ve run a larger than normal set of games this time around. We’ll start with our current 2012 gaming suite, which we’ve discussed previously. The goal of our 2012 game tests is to get reasonable quality rather than bare minimum quality, so we’ve set the bar at around medium detail for our Value settings and high detail for our Mainstream settings. Along with the 2012 suite, we also ran all of the gaming tests from our 2011 suite at our medium detail settings.

We won’t provide a complete list of results here, but you can find those in Mobile Bench (including Mainstream and Enthusiast performance results, though not surprisingly HD 4000 falls well short of playability at those settings). What we will do is show how HD 4000 compares to HD 3000, HD 6620G (Llano A8), HD 6630M, and GT 640M.

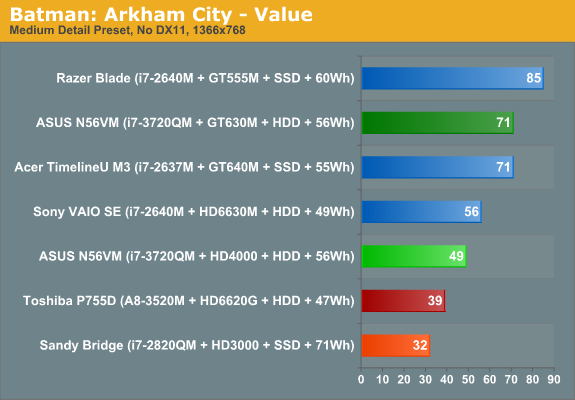

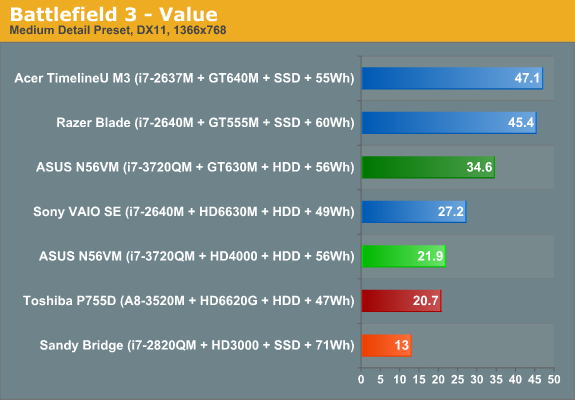

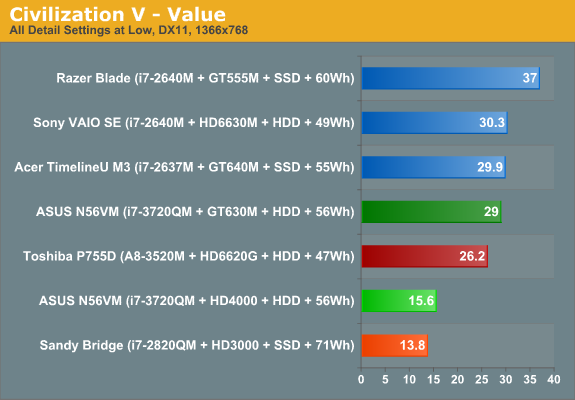

We don’t have a huge selection of laptop hardware (yet) for our 2012 gaming suite, and Ivy Bridge tends to place near the bottom of the Value gaming charts, but that’s only part of the story. Of the seven games we’ve selected for our current tests, two fail to deliver acceptable frame rates: Battlefield 3 and Civilization V. Civ5 is actually still playable at low frame rates, since it’s not a real-time game, but all things considered we’d still like to see >30 FPS. Battlefield 3 on the other hand is simply a beast—notice that Llano A8 along with Sony’s VAIO SE and Z2 with discrete GPUs all fail to break 30 FPS. If you’re into the multiplayer element of BF3, you’d really want a faster GPU; we’d suggest NVIDIA’s GT 555M or AMD’s HD 6730M as a more reasonable target to handle BF3 at our Value (medium) settings.

The remaining games all run acceptably, with the only possible exception being Portal 2. Many times during the game, when you’re looking through a portal the frame rate takes a substantial hit. This is something you’ll see to a lesser extent on other GPUs, but those GPUs already average well over 60 FPS so a 15-20 FPS drop isn’t that noticeable. HD 4000 unfortunately can drop below 30 FPS on some Portal 2 levels, which means despite the moderately high average frame rate it’s sometimes borderline unplayable. If you'd like to read more discussion of HD 4000 gaming potential, we'd also point you at Ryan's dissection of Ivy Bridge graphics performance on the desktop.

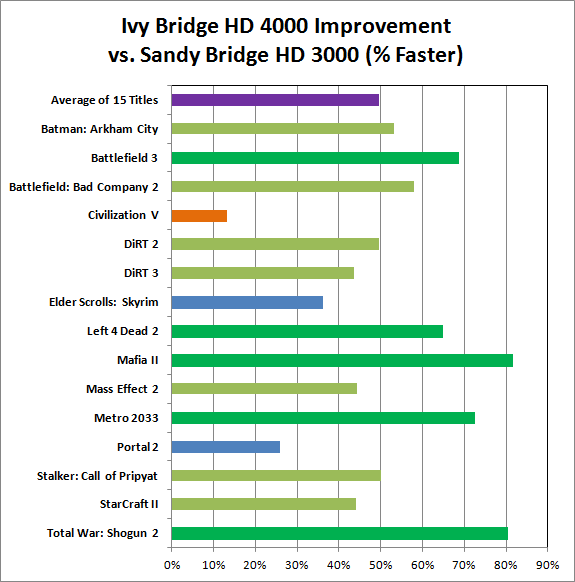

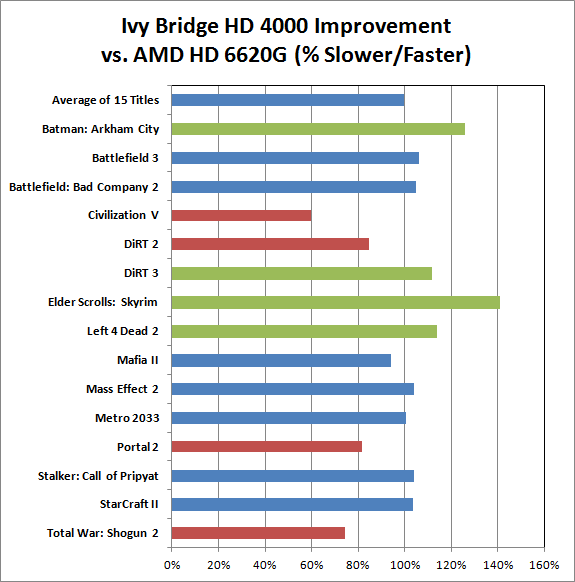

If we skip comparisons with all the faster discrete GPUs, things become quite a bit more interesting. We’re looking at fifteen games from the past two or three years, all run at medium detail 1366x768 settings. (Note that the benchmark we use in the above chart for Left 4 Dead 2 differs from our previous tests, as Valve broke backwards compatibility for their timedemo about five months back.) Comparing Sandy Bridge and Ivy Bridge, HD 4000 ends up being nearly 50% faster than HD 3000 on average. The only title in our suite that doesn’t see a substantial boost in performance is Civilization V.

We’ve speculated in the past that the problem is with Intel’s shader and/or geometry throughput, which might also explain the slowdowns we see in other titles (e.g. Portal 2 and Left 4 Dead 2 both suffer from frame rate dips in Intel’s IGPs). In a similar story, enabling tessellation in Deus Ex: Human Revolution absolutely killed frame rates on HD 4000—it’s perfectly playable with most of the quality settings enabled, but turn on tessellation and the frame rates plummet to less than half. Hopefully, Haswell’s IGP will get the geometry and shader processing capabilities it needs to push the rumored 40 EUs on the fastest chips. Still, Intel has really stepped up their commitment to graphics performance over the past several generations. That’s best illustrated by a comparison with the only other major IGP, AMD’s Llano:

We used two different sets of results for the above chart: one from the original Llano A8-3500M laptop AMD shipped us (for the 2011 games) and a second from the Toshiba Satellite P755D with A8-3520M. Red bars indicate games where AMD wins, green bars indicate Intel wins, and blue bars indicate ties (the two score within 10% of each other, with 100% being identical performance). Gathering all the gaming results together, Intel’s HD 4000 ends up offering a very similar experience to Llano A8 in overall gaming capability. On the desktop, Llano continues to enjoy a fairly sizeable lead over Ivy Bridge, but on laptops with much lower TDPs it's a different story.

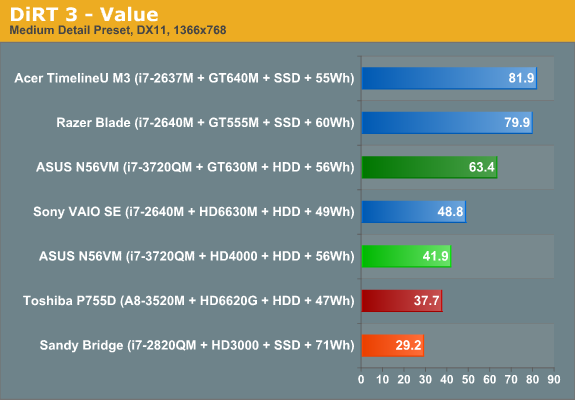

There are some titles where AMD pulls off substantial wins (Civ5, DiRT 2, Portal 2, and Total War: Shogun 2), and several titles where Intel takes a similarly large lead (Batman, DiRT 3, Left 4 Dead 2, and Skyrim). Neither platform will handle medium detail settings at 1366x768 for every game out there, but they’re both generally fast enough to handle most games. Here are the detailed numbers:

Core i7-3720QM vs. AMD A8-3500M/3520M Gaming Performance Comparison

| Game | HD 4000 (FPS) | HD 6620G (FPS) |
| --- | --- | --- |
| Total War: Shogun 2 | 27.4 | 36.9 |
| StarCraft II | 27.7 | 26.9 |
| Stalker: Call of Pripyat | 48.3 | 46.7 |
| Portal 2 | 53.9 | 66.1 |
| Metro 2033 | 26.9 | 26.9 |
| Mass Effect 2 | 43.6 | 42.1 |
| Mafia II | 28.5 | 30.4 |
| Left 4 Dead 2 | 40.2 | 35.5 |
| Elder Scrolls: Skyrim | 42.7 | 30.4 |
| DiRT 3 | 41.9 | 37.7 |
| DiRT 2 | 36.5 | 43.2 |
| Civilization V | 15.6 | 26.2 |
| Battlefield: Bad Company 2 | 39.0 | 37.3 |
| Battlefield 3 | 21.9 | 20.7 |
| Batman: Arkham City | 49.0 | 39.0 |
| Average of 15 Titles | 36.2 | 36.4 |
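For anyone who wants to check the tallies, the numbers in the table can be run through a short script. This is only a minimal sketch: the names `RESULTS`, `classify`, and `summarize` are ours, and we assume the 10% tie band is measured as the HD 4000 score divided by the HD 6620G score, which the text does not spell out.

```python
# FPS at medium detail, 1366x768, copied from the table: (HD 4000, HD 6620G)
RESULTS = {
    "Total War: Shogun 2": (27.4, 36.9),
    "StarCraft II": (27.7, 26.9),
    "Stalker: Call of Pripyat": (48.3, 46.7),
    "Portal 2": (53.9, 66.1),
    "Metro 2033": (26.9, 26.9),
    "Mass Effect 2": (43.6, 42.1),
    "Mafia II": (28.5, 30.4),
    "Left 4 Dead 2": (40.2, 35.5),
    "Elder Scrolls: Skyrim": (42.7, 30.4),
    "DiRT 3": (41.9, 37.7),
    "DiRT 2": (36.5, 43.2),
    "Civilization V": (15.6, 26.2),
    "Battlefield: Bad Company 2": (39.0, 37.3),
    "Battlefield 3": (21.9, 20.7),
    "Batman: Arkham City": (49.0, 39.0),
}

TIE_BAND = 0.10  # assumption: a ratio between 90% and 110% counts as a tie


def classify(intel_fps, amd_fps, band=TIE_BAND):
    """Return 'Intel', 'AMD', or 'tie' from the Intel/AMD frame rate ratio."""
    ratio = intel_fps / amd_fps
    if ratio > 1 + band:
        return "Intel"
    if ratio < 1 - band:
        return "AMD"
    return "tie"


def summarize(results):
    """Count wins and ties, and compute the per-GPU average frame rate."""
    counts = {"Intel": 0, "AMD": 0, "tie": 0}
    for intel_fps, amd_fps in results.values():
        counts[classify(intel_fps, amd_fps)] += 1
    n = len(results)
    intel_avg = sum(intel for intel, _ in results.values()) / n
    amd_avg = sum(amd for _, amd in results.values()) / n
    return counts, intel_avg, amd_avg


counts, intel_avg, amd_avg = summarize(RESULTS)
print(counts)
print(f"HD 4000: {intel_avg:.1f} FPS, HD 6620G: {amd_avg:.1f} FPS")
```

Under that assumption the script gives four wins for each side and seven ties, with averages of 36.2 and 36.4 FPS, which lines up with the games called out in the text.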

Granted, the A8-3500M/3520M aren't the fastest Llano parts, and the Llano systems we tested were both using DDR3-1333 memory. Give Llano an MX part and faster memory and performance should improve around 20% (5-10% for the RAM, and 10-15% for the CPU). Outside of a few titles, however, both solutions are playable on most of the same subset of games. And yes, we know AMD has Trinity coming out that should improve on Llano’s GPU performance; AMD has suggested it will be around 50% faster, but we can’t comment on Trinity performance right now—check back in a few weeks.
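As a rough check on the "around 20%" figure, here is the arithmetic behind combining the two estimated gains. It is a sketch only: whether the RAM and CPU gains simply add or compound is our assumption (we did not test an MX part), and the function names are hypothetical.

```python
def additive_gain(ram_gain, cpu_gain):
    """Combined gain if the two improvements simply add."""
    return ram_gain + cpu_gain


def compounded_gain(ram_gain, cpu_gain):
    """Combined gain if the two improvements multiply."""
    return (1 + ram_gain) * (1 + cpu_gain) - 1


# Ranges quoted in the text: 5-10% from faster RAM, 10-15% from the faster CPU.
low_add = additive_gain(0.05, 0.10)
high_add = additive_gain(0.10, 0.15)
low = compounded_gain(0.05, 0.10)
high = compounded_gain(0.10, 0.15)

print(f"additive:   {low_add:.1%} to {high_add:.1%}")
print(f"compounded: {low:.1%} to {high:.1%}")
```

Either way the range brackets 20%: roughly 15% to 25% if the gains add, and 15.5% to 26.5% if they compound.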

What About Drivers?

Of course, there’s another question that always comes up when we look at Intel’s IGP: driver quality. When Sandy Bridge launched we did a similar investigation and found that there were minor to moderate problems in four of the twenty games we tested. We trimmed our list down to 15 titles this time out, and if we only look at games where there was clearly some sort of driver problem we have two failures. The first isn’t quite as serious: StarCraft II appears to have a memory leak with the current HD 4000 drivers, as it ends up using nearly 4GB of RAM after 10 to 15 minutes of play before crashing to desktop. (I had this happen in a Versus AI match three out of three times in testing.) Prior to running out of memory, however, performance is generally playable, and HD 3000 doesn’t appear to have the same problem.

The second problem title is Battlefield 3. Almost everything renders properly, but the overlay of text (e.g. subtitles and some HUD elements) didn’t work for me, and navigating the menu also didn’t work properly in fullscreen mode. The mouse wouldn’t register on any of the buttons, so I had to switch to windowed mode, click on the menu settings I wanted to change (e.g. click on “Video” and then change the resolution and quality settings), apply, and then switch back to fullscreen mode. What’s more, BF3 would also lose the menu system entirely after loading a level, typically requiring a restart to bring it back. Performance is acceptable at minimum detail settings, but until the overlay/menu issues get sorted out BF3 isn’t what I would deem playable. (Note that I also experienced the same overlay/menu issues on HD 3000, so this appears to be a driver bug with Intel’s latest 8.15.10.2696 drivers.) I've uploaded a YouTube video showing the bug as well as how things should work.

[Update, 4/28/2012: It's not clear what the precise cause of the Battlefield 3 glitch was, but a 1.5GB patch just got pushed live via Origin some time in the past few days. With the patch in place and running the same Intel drivers, the bugs observed above are no longer present. Performance is still sub-30FPS, but for the single-player campaign you could probably manage in a pinch.]

Looking at the big picture, Ivy Bridge is still a very large step forward for Intel’s graphics division. You’ll note that I didn’t provide any examples of DX11 not working properly in recent games; that’s because as far as I can tell all the DX11 titles I tried rendered correctly. I’m sure there are other exceptions out there, but besides the above fifteen titles I also briefly loaded and played an additional eight games and found they all rendered properly in brief testing.

If you’re interested, the list of additional games I tried includes Rage, Super Street Fighter IV: Arcade Edition, Deus Ex: Human Revolution, Duke Nukem Forever, Dungeon Siege III, Far Cry 2, Just Cause 2, and The Witcher 2. Of these, only The Witcher 2 struggled to reach playable frame rates; even at minimum detail settings, in-game frame rates were typically in the high teens, though The Witcher 2 tends to perform poorly on NVIDIA and AMD mainstream mobile GPUs as well—it’s a bit of a graphics pig. So that brings the total number of games I tried on Ivy Bridge to 23, with only one game showing clear rendering issues, and a second experiencing periodic instability due to a memory leak. While not perfect, it’s another healthy step in the right direction.

49 Comments

JarredWalton - Tuesday, May 1, 2012

Ivy Bridge is technically capable of supporting three displays, but it needs three TMDS transceivers in the laptop (or on the desktop motherboard) to drive the displays simultaneously. Some laptop makers will likely save $0.25 or whatever by only including two, but others will certainly include the full triple head support.

JarredWalton - Thursday, May 10, 2012

Just a quick correction, in case anyone is wondering:

For triple displays, Ivy Bridge needs to run TWO of the displays off of DisplayPort, and the other can be LVDS/VGA/HDMI/DVI. I can tell you exactly how many laptops I've seen with dual DP outputs: zero. Anyway, it's an OEM decision, and I'm skeptical we'll see 2xDP any time soon.

JarredWalton - Tuesday, April 24, 2012

"I'm not sure what your point is, at all"? You cannot be serious. Either you have no understanding of thermodynamics, or you're just an anonymous Internet troll. I don't know what your problem is, rarson, but your comments on all the Ivy Bridge articles today are the same FUD with nothing to back it up.

Ivy Bridge specifications allow for internal temperatures of up to 100C, just like most other Intel chips. At maximum load the chip in the N56VM hits 89C, but it's doing that with the fan hardly running at all and generating almost no noise compared to other laptops. Is that so hard to understand? A dual-core Sandy Bridge i7-2640M in the VAIO SE hits higher temperatures while generating more noise. I guess that means Sandy Bridge is a hot chip in your distorted world view? But that would be wrong as well. The reality is that the VAIO SE runs hot and loud because of the way Sony designed the laptop, and the N56VM runs hot and quiet because of the way ASUS designed the laptop.

The simple fact is Ivy Bridge in this laptop runs faster than Sandy Bridge in other laptops, even at higher temperatures than some laptops that we've seen. There was a conscious decision to let internal CPU temperatures get higher instead of running the fans faster and creating more noise. If the fan were generating 40dB of noise, I can guarantee that the chip temperature wouldn't be 89C under load. Again, this is simple thermodynamics. Is that so difficult to understand?

How do we determine what Ivy Bridge temperatures are like "in general"? How do you know that it's a "hot chip"? You don't, so you're just pulling stuff out of the air and making blanket statements that have no substance. It seems you either work for AMD and think you're doing them a favor with these comments (you're not), or you have a vendetta against Intel and you're hoping to make people in general think Ivy Bridge is bad just because you say so (it's not).

mtoma - Tuesday, April 24, 2012

I really don't want to play dumb - but if I get an honest answer I'll be pleased: Jarred said that the panel used in Asus N56VM is an LG LP156WF1. OK - how can I find the display type in a specific laptop? I have a Lenovo T61 and... I need help. I want to know the manufacturer, display type, viewing angles. Thanks!

JarredWalton - Tuesday, April 24, 2012

I use Astra32 (www.astra32.com), a free utility that will usually report the monitor type. However, if the OEM chooses to overwrite the information in the LCD firmware, you'll get basically a meaningless code. You can also look at LaptopScreen.com and see if they have the information/screen you need (http://www.laptopscreen.com/English/model/IBM-Leno...

leovande321 - Wednesday, May 15, 2013

AUO 10.1 "SD + B101EVT03.2 1280X800 Matte Laptop Screen Grade A +

I hope to help you!!!

AUO BOE CMO CPT IVO 10.1 14.0 15.6 LED CCFL whoalresell

Wholesale Laptop Screens www.globalresell.com

Spunjji - Thursday, April 26, 2012

Calm down there. His comment is pointing out that measuring the temperatures of this laptop will tell you nothing about how hot mobile Ivy Bridge is as a platform. We need more information. It looks like it's not as cool as Intel marketing want everyone to believe, but we just don't know yet.

JarredWalton - Thursday, April 26, 2012

The real heart of the matter is that more performance (IVB) just got stuffed into less space. 22nm probably wasn't enough to dramatically reduce voltages and thus power, so the internal core temperatures are likely higher than SNB in many cases, even though maximum power draw may have gone down.

For the desktop, that's more of a concern, especially if you want to overclock. For a laptop, as long as the laptop doesn't get noisy and runs stable, I have no problem with the tradeoff being made, and I suspect it's only a temporary issue. By the time ULV and dual-core IVB ship, 22nm will be a bit more mature and have a few more kinks ironed out.

raghu78 - Wednesday, May 2, 2012

Even though you have mentioned that 45w Llano would have improved the gaming performance it would have been better to include such a configuration in your testing. Given that you were testing a 45w high end next gen core i7 product which itself skews the balance in Intel's favour given the vast difference in CPU processing capability the least you could have done was put a similar wattage AMD Llano SKU. The result would be that other than Batman and Skyrim the rest would all be better on HD 6620G. As they say "a picture is worth a thousand words". All your charts cannot be undone by a small note at the end of the charts. The damage has been done.

This is my opinion that objective comparisons can only be made under similar parameters. It's even more critical in the notebook market which has strict thermal restrictions. The desktop market is slightly less restrictive except for HTPCs which need 65w or lesser processors. When the comparisons for Trinity 35w are made it should be against 35w Ivybridge core i3 and core i5. By benching an ivybridge core i7 with a 45w rating and comparing with a Trinity 35w we aren't making a fair and objective comparison. Also the fact that the ivybridge core i7 and trinity are not in the same price segment makes things worse. I hope my comments are not taken negatively.