The Intel Ivy Bridge (Core i7 3770K) Review

by Anand Lal Shimpi & Ryan Smith on April 23, 2012 12:03 PM EST

Posted in: CPUs, Intel, Ivy Bridge

Quick Sync Image Quality & Performance

Intel obviously focused on increasing GPU performance with Ivy Bridge, but a side effect of that increased GPU performance is more compute available for Quick Sync. As you may recall, Sandy Bridge's secret weapon was an on-die hardware video transcode engine (Quick Sync), designed to keep Intel's CPUs competitive when faced with the onslaught of GPU computing applications. At the time, video transcode seemed to be the most likely candidate for significant GPU acceleration so the move made sense. Plus it doesn't hurt that video transcoding is an extremely popular activity to do with one's PC these days.

The power of Quick Sync lay in how it combined fixed function decode (and some encode) hardware with the on-die GPU's EU array. The combination resulted in some pretty incredible performance gains, not only over traditional software-based transcoding but also over the fastest GPU-based solutions.

Intel put to rest any concerns about image quality when Quick Sync launched, and thankfully the situation hasn't changed today with Ivy Bridge. In fact, you get a bit more flexibility than you had a year ago.

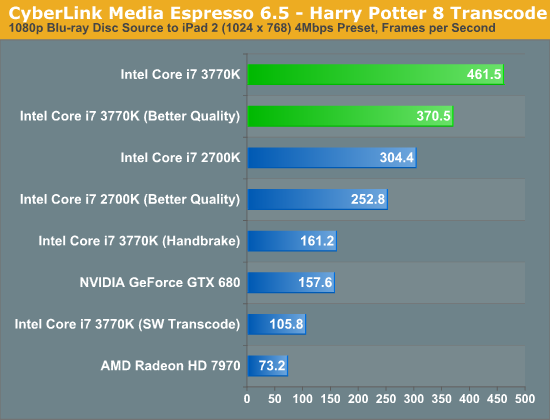

Intel's latest drivers now allow for a selectable tradeoff between image quality and performance when transcoding using Quick Sync. The option is exposed in Media Espresso and ultimately corresponds to an increase in average bitrate. To test image quality and performance, I took the last Harry Potter Blu-ray, stripped it of its DRM and used Media Espresso to make it playable on an iPad 2 (1024 x 768 preset).

In the case of our Harry Potter transcode, selecting the Better Quality option increased average bitrate from 3.86Mbps to 5.83Mbps. The resulting file size for the entire movie increased from 3.78GB to 5.71GB. Both options produced a good quality transcode. Picking one over the other really depends on how much time (and space) you have, as well as the screen size of the device you'll be watching it on. For most phone/tablet use I'd say the faster performing option is ideal.
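The bitrate and file size figures above can be cross-checked with simple arithmetic, since file size is roughly average bitrate times duration. The sketch below assumes decimal units (1 GB = 10^9 bytes, 1 Mbps = 10^6 bits/s) and that the quoted bitrates cover the whole file; neither is stated in the review, and the function names are illustrative only.

```python
def file_size_gb(avg_bitrate_mbps: float, duration_s: float) -> float:
    """Approximate output size in decimal gigabytes (assumes 1 GB = 1e9 bytes)."""
    total_bits = avg_bitrate_mbps * 1e6 * duration_s
    return total_bits / 8 / 1e9


def implied_duration_s(size_gb: float, avg_bitrate_mbps: float) -> float:
    """Duration in seconds implied by a file size and an average bitrate."""
    return size_gb * 1e9 * 8 / (avg_bitrate_mbps * 1e6)


# Figures quoted in the review for the two Quick Sync quality settings.
faster = implied_duration_s(size_gb=3.78, avg_bitrate_mbps=3.86)
better = implied_duration_s(size_gb=5.71, avg_bitrate_mbps=5.83)

print(f"Faster setting implies {faster / 60:.1f} minutes of video")
print(f"Better setting implies {better / 60:.1f} minutes of video")

# Predict the Better Quality file size from the duration implied by the faster run.
print(f"Predicted Better Quality size: {file_size_gb(5.83, faster):.2f} GB")
```

Both settings imply a running time of roughly 130 minutes, so the two pairs of figures are consistent with each other.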

| Intel Core i7 3770K (x86) | Intel Quick Sync (SNB) | Intel Quick Sync (IVB) | Intel Quick Sync, Better (IVB) | NVIDIA GeForce GTX 680 | AMD Radeon HD 7970 |
| --- | --- | --- | --- | --- | --- |
| original | original | original | original | original | original |

While AMD has yet to enable VCE in any publicly available software, NVIDIA's hardware encoder built into Kepler is alive and well. Cyberlink Media Espresso 6.5 will take advantage of the 680's NVENC engine, which is why we standardized on it here for these tests. Once again, Quick Sync's transcoding abilities are limited to applications like Media Espresso or ArcSoft's Media Converter; there's still no support in open source applications like Handbrake.

Compared to the output from Quick Sync, NVENC appears to produce a softer image. However, if you compare the NVENC output to what we got from the software/x86 path you'll see that the two are quite similar. It seems that Quick Sync, at least in this case, is sharpening/adding more noise beyond what you'd normally expect. I'm not sure I'd call it bad, but I need to do some more testing before I know whether or not it's a good thing.

The good news is that NVENC doesn't pose any of the horrible image quality issues that NVIDIA's CUDA transcoding path gave us last year. For getting videos onto your phone, tablet or game console I'd say the output of either of these options, NVENC or Quick Sync, is good enough.

Unfortunately, AMD's solution hasn't improved. The washed out images we saw last year, particularly in dark scenes prior to a significant change in brightness, are back again. While NVENC delivers acceptable image quality, AMD does not.

The performance story is unfortunately not much different from last year either. The chart below is average frame rate over the entire encode process.

Just as we saw with Sandy Bridge, Quick Sync continues to be an incredible way to get video content onto devices other than your PC. One thing I wanted to make sure of was that Media Espresso wasn't somehow holding x86 performance back to make the GPU accelerated transcodes seem much better than they actually are. I asked our resident video expert, Ganesh, to clone Media Espresso's settings in a Handbrake profile. We took the profile and performed the same transcode; the result is listed above as the Core i7 3770K (Handbrake). You will notice that the Handbrake x86/x264 path is definitely faster than Cyberlink's software path, by over 50%. However, even using Handbrake as a reference, Quick Sync transcodes over 2x faster.

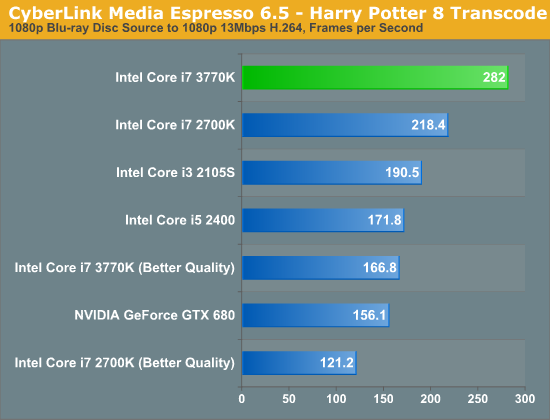

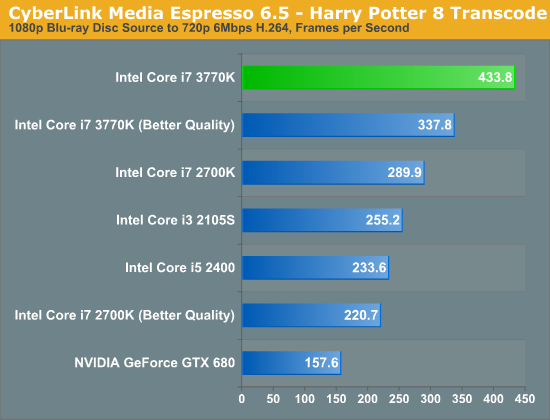

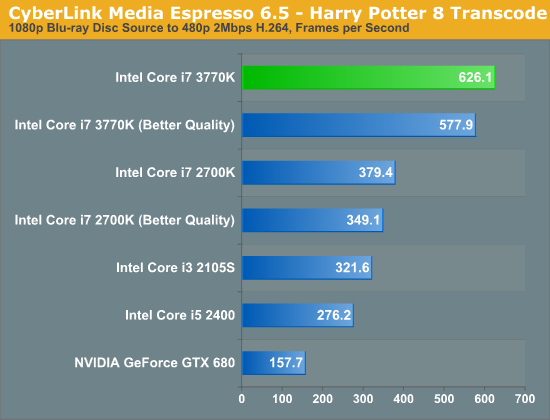

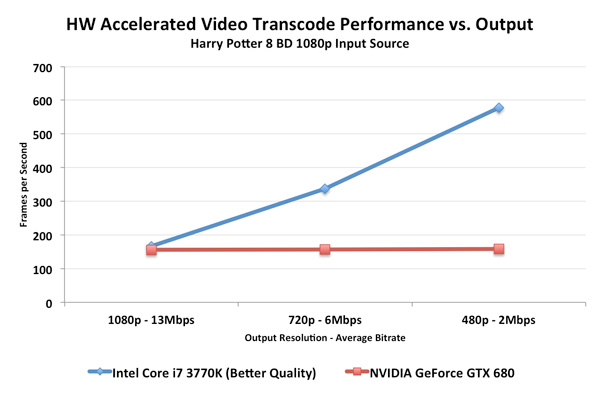

In the tests below I took the same source and varied the output quality with some custom profiles. I targeted 1080p, 720p and 480p at decreasing average bitrates to illustrate the relationship between compression demands and performance:

Unfortunately, NVENC performance does not scale like Quick Sync. When asked to preserve a good amount of data, NVENC and Quick Sync perform similarly, as in our 1080p/13Mbps test. However, ask for more aggressive compression ratios at lower resolution/bitrate targets, and the Intel solution quickly distances itself from NVIDIA. One theory is that NVIDIA's entropy encode block could be the limiting factor here.
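One rough way to quantify "compression demands" is bits per pixel: average bitrate divided by pixels per second. The sketch below applies it to the only targets with published numbers in this review (the 1080p/13Mbps test and the two iPad 2 preset results). The 24 fps frame rate is an assumption, as are the function name and the treatment of the quoted average bitrate as video-only.

```python
def bits_per_pixel(bitrate_mbps: float, width: int, height: int, fps: float = 24.0) -> float:
    """Average encoded bits spent per pixel per frame (fps is an assumed value)."""
    return bitrate_mbps * 1e6 / (width * height * fps)


# (bitrate in Mbps, width, height) for the targets quoted in the review.
targets = {
    "1080p @ 13Mbps": (13.0, 1920, 1080),
    "iPad 2 preset @ 3.86Mbps": (3.86, 1024, 768),
    "iPad 2 preset, Better @ 5.83Mbps": (5.83, 1024, 768),
}

for name, (mbps, width, height) in targets.items():
    print(f"{name}: {bits_per_pixel(mbps, width, height):.3f} bits/pixel")
```

A lower bits-per-pixel figure means the encoder has to discard more information per frame, which is the regime where the text above reports Quick Sync pulling ahead.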

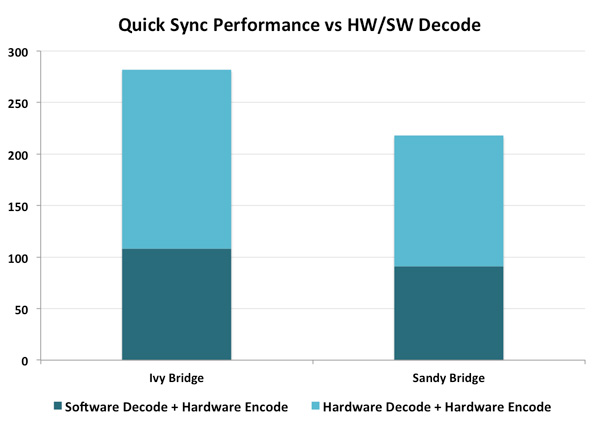

Ivy Bridge's improved Quick Sync appears to be aided both by an improved decoder and the HD 4000's faster/larger EU array. The graph below helps illustrate:

If we rely on software decoding but use Intel's hardware encode engine, Ivy Bridge is 18% faster than Sandy Bridge in this test (1080p 13Mbps output from BD source, same as above). If we turn on both hardware decode and encode, the advantage grows to 29%. More than half of the performance advantage in this case is due to the faster decode engine on Ivy Bridge.

173 Comments


wingless - Monday, April 23, 2012

I'll keep my 2600K.....just kidding

formulav8 - Monday, April 23, 2012

I hope you give AMD even more praise when Trinity is released Anand. IMO you way overblew how great Intels igp stuff. Its their 4th gen that can't even beat AMDs first gen. Just my opinion :p

Zstream - Monday, April 23, 2012

I agree..

dananski - Monday, April 23, 2012

As much as I like the idea of decent Skyrim framerates on every laptop, and even though I find the HD4000 graphics an interesting read, I couldn't care less about it in my desktop. Gamers will not put up with integrated graphics - even this good - unless they're on a tight budget, in which case they'll just get Llano anyway, or wait for Trinity. As for IVB, why can't we have a Pentium III sized option without IGP, or get 6 cores and no IGP?

Kjella - Tuesday, April 24, 2012

Strategy, they're using their lead in CPUs to bundle it with a GPU whether you want it or not. When you take your gamer card out of your gamer machine it'll still have an Intel IGP for all your other uses (or for your family or the second-hand market or whatever), that's one sale they "stole" from AMD/nVidia's low end. Having a separate graphics card is becoming a niche market for gamers. That's better for Intel than lowering the expectation that a "premium" CPU costs $300, if you bring the price down it's always much harder to raise it again...

Samus - Tuesday, April 24, 2012

As amazing this CPU is, and how much I'd love it (considering I play BF3 and need a GTX560+ anyway) I have to agree the GPU improvement is pretty disappointing... After all that work, Intel still can't even come close to AMD's integrated graphics. It's 75% of AMD's performance at best.

Cogman - Thursday, May 3, 2012

There is actually a good reason for both AMD and Intel to keep a GPU on their CPUs no matter what. That reason is OpenCV. This move makes the assumption that OpenCV or programming languages like it will eventually become mainstream. With a GPU coupled to every CPU, it saves developers from writing two sets of code to deal with different platforms.

froggr - Saturday, May 12, 2012

OpenCV is Open Computer Vision and runs either way. I think you're talking about OpenCL (Open Compute Language). and even that runs fine without a GPU. OpenCL can use all cores CPU + GPU and does not require separate code bases. OpenCL runs faster with a GPU because it's better parallellized.

frozentundra123456 - Monday, April 23, 2012

Maybe we could actually see some hard numbers before heaping so much praise on Trinity?? I will be convinced about the claims of 50% IGP improvements when I see them, and also they need to make a lot of improvements to Bulldozer, especially in power consumption, before it is a competitive CPU. I hope it turns out to be all the AMD fans are claiming, but we will see.

SpyCrab - Tuesday, April 24, 2012

Sure, Llano gives good gaming performance. But it's pretty much at Athlon II X4 CPU performance.