Intel's SSD 910: Finally a PCIe SSD from Intel

by Anand Lal Shimpi on April 12, 2012 3:00 AM EST

Posted in Storage, SSDs, Intel, Intel SSD 910

Solid state storage has managed to saturate the SATA interface just as quickly as new standards are introduced. The first generation of well-built MLC SSDs quickly bumped into the limits of 3Gbps SATA, as did the first generation of 6Gbps MLC SSDs. With hard drives nowhere near running out of headroom on a 6Gbps interface, it's clear that SSDs need to transition to an interface that can offer significantly higher bandwidth.

The obvious choice is PCI Express. A single PCIe 2.0 lane is good for 500MB/s of data upstream and downstream, for an aggregate of 1GB/s. Build a PCIe 2.0 x16 SSD and you're talking 8GB/s in either direction. The first PCIe 3.0 chipsets have already started shipping and they'll offer even higher bandwidth per lane (~1GB/s per lane, per direction).
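The lane math above is easy to reproduce. The short sketch below only multiplies the per-lane figures quoted in the text by the lane count; the table and function names are made up for illustration, the PCIe 3.0 value is the approximate ~1GB/s figure, and real-world throughput will be lower once protocol overhead is counted.

```python
# Per-lane, per-direction throughput in MB/s, as quoted in the text.
# The PCIe 3.0 entry is the approximate ~1GB/s figure, not an exact value.
PCIE_LANE_MBPS = {"2.0": 500, "3.0": 1000}


def pcie_bandwidth_mbps(gen: str, lanes: int) -> int:
    """Theoretical one-direction bandwidth of a PCIe link in MB/s."""
    return PCIE_LANE_MBPS[gen] * lanes


for gen, lanes in [("2.0", 1), ("2.0", 8), ("2.0", 16), ("3.0", 16)]:
    one_way = pcie_bandwidth_mbps(gen, lanes)
    print(f"PCIe {gen} x{lanes}: {one_way} MB/s per direction, "
          f"{2 * one_way} MB/s aggregate")
```

A PCIe 2.0 x8 link, the width Intel uses for the SSD 910, works out to 4GB/s in each direction by the same arithmetic.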

PCI Express is easily scalable and just as ubiquitous as SATA in modern systems, which makes it a natural fit for ultra high performance SSDs. While SATA Express will hopefully merge the two in a manner that preserves backwards compatibility for existing SATA drives, the server market needs solutions today.

In the past you needed a huge chassis to deploy an 8-core server, but thanks to Moore's Law you can cram a dozen high-performance x86 cores into a single 1U or 2U chassis. These high density servers are great for compute performance, but they do significantly limit per-server storage capacity. With the largest eMLC drives topping out at 400GB and SLC drives well below that, if you have high performance needs in a small rackmount chassis you need to look beyond traditional 2.5" drives.

Furthermore, if all you're going to do is combine a bunch of SAS/SATA drives behind a PCIe RAID controller it makes more sense to cut out the middleman and combine the two.

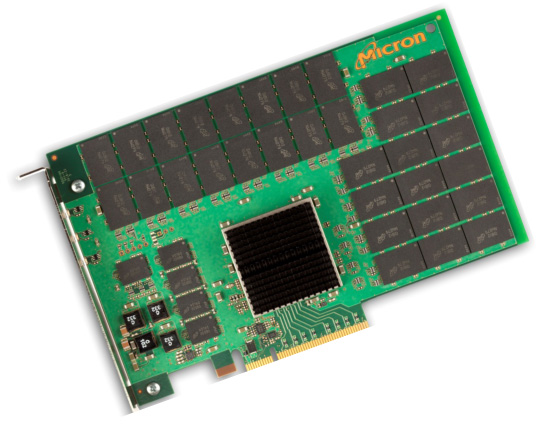

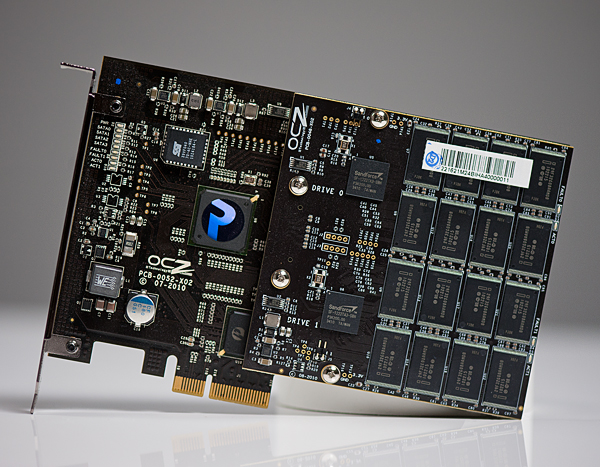

Micron's P320h

We've seen PCIe SSDs that do just that, including several from OCZ under the Z-Drive and RevoDrive brands. Although OCZ has delivered many iterations of PCIe SSDs at this point, they all still follow the same basic principle: combine independent SAS/SATA SSD controllers on a PCIe card with a SAS/SATA RAID controller of some sort. Eventually we'll see designs that truly cut out the middlemen and use native PCIe-to-NAND SSD controllers and a simple PCIe switch or lane aggregator. Micron has announced one such drive with the P320h. The NVMe specification is designed to support the creation of exactly this type of drive; however, we have yet to see any implementations of the spec.

Many companies have followed in OCZ's footsteps and built similar drives, but many share one thing in common: the use of SandForce controllers. If you're working with encrypted or otherwise incompressible data, SandForce isn't your best bet. There are also concerns about validation, compatibility and reliability of SF's controllers.

Intel's SSD 910

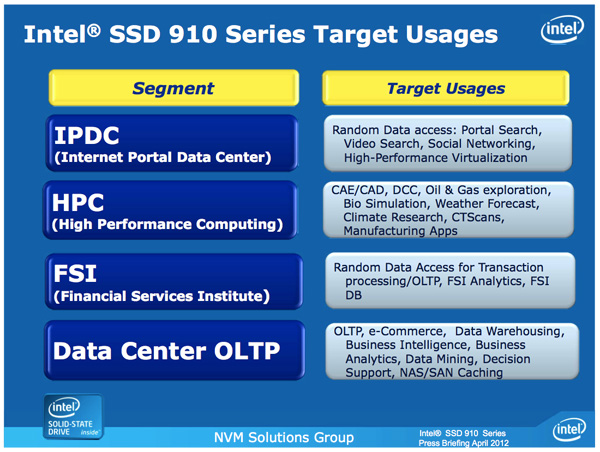

Similar to its move into the MLC SSD space, Intel is arriving late to the PCIe SSD game - but it hopes to gain marketshare on the back of good performance, competitive pricing and reliability.

The first member of the new PCIe family is the Intel SSD 910, consistent with Intel's 3-digit model number scheme.

| Enterprise SSD Comparison | Intel SSD 910 | Intel SSD 710 | Intel X25-E | Intel SSD 320 |
|---|---|---|---|---|
| Interface | PCIe 2.0 x8 | SATA 3Gbps | SATA 3Gbps | SATA 3Gbps |
| Capacities | 400 / 800GB | 100 / 200 / 300GB | 32 / 64GB | 80 / 120 / 160 / 300 / 600GB |
| NAND | 25nm MLC-HET | 25nm MLC-HET | 50nm SLC | 25nm MLC |
| Max Sequential Performance (Reads/Writes) | 2000 / 1000 MBps | 270 / 210 MBps | 250 / 170 MBps | 270 / 220 MBps |
| Max Random Performance (Reads/Writes) | 180K / 75K IOPS | 38.5K / 2.7K IOPS | 35K / 3.3K IOPS | 39.5K / 600 IOPS |
| Endurance (Max Data Written) | 7 - 14PB | 500TB - 1.5PB | 1 - 2PB | 5 - 60TB |
| Encryption | - | AES-128 | - | AES-128 |

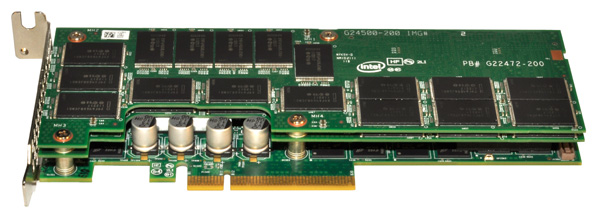

The 910 is a single-slot, half-height, half-length PCIe 2.0 x8 card with either 896GB or 1792GB of Intel's 25nm MLC-HET NAND. Part of the high endurance formula is extra NAND for redundancy as well as larger than normal spare area on the drive itself. Once those two things are accounted for, what remains is either 400GB or 800GB of available storage.
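To put the gap between raw and usable NAND in perspective, here is a minimal sketch that computes how much of the raw flash is held back for redundancy and spare area. It uses only the capacities quoted above and treats both raw and usable figures as being in the same units, which is a simplifying assumption; the function name is hypothetical.

```python
def reserved_fraction(raw_gb: float, usable_gb: float) -> float:
    """Fraction of raw NAND that is not exposed to the user."""
    return (raw_gb - usable_gb) / raw_gb


# Raw and usable capacities as quoted in the article.
for raw_gb, usable_gb in [(896, 400), (1792, 800)]:
    frac = reserved_fraction(raw_gb, usable_gb)
    print(f"{usable_gb}GB model: {raw_gb - usable_gb}GB of {raw_gb}GB "
          f"reserved ({frac:.1%})")
```

By this rough measure, more than half of the NAND on either model is set aside rather than exposed as storage.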

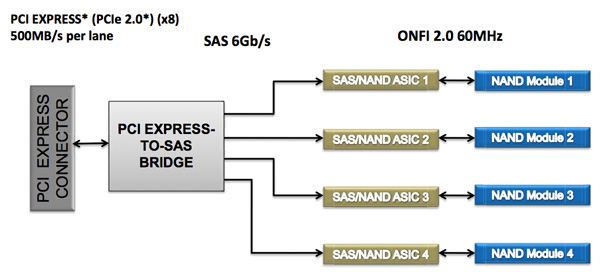

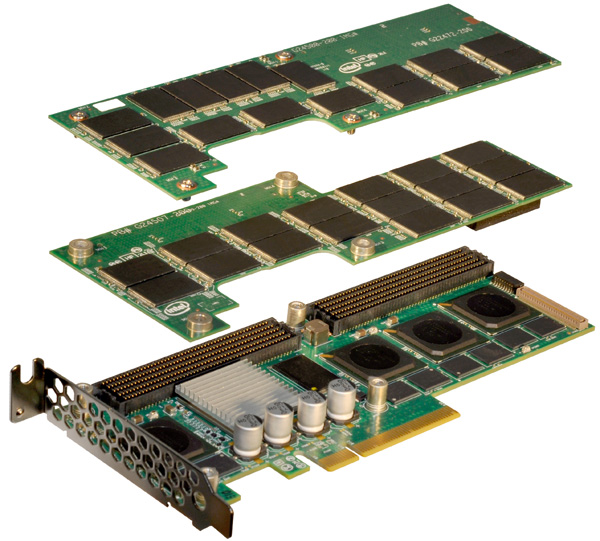

The 910's architecture is surprisingly simple. The solution is a layered design composed of two or three boards stacked on one another. The first PCB features either two or four SAS SSD controllers, jointly developed by Intel and Hitachi (the same controllers are used in Hitachi's Ultrastar SSD400M). These controllers are very similar to Intel's X25-M/G2/310/320 controller family but with a couple of changes. The client controller features a single CPU core, while the Intel/Hitachi controller features two cores (one managing the NAND side of the drive and the other managing the SAS interface). Both are 10-channel designs, although the 910's implementation features 14 NAND packages per controller.

In front of the controllers is an LSI 2008 SAS to PCIe bridge. There's no support for hardware RAID; each controller presents itself to the OS as a single drive with a 200GB (186GiB) capacity. You are free to use software RAID to aggregate the drives as you see fit, but by default you'll see either two or four physical drives appear.
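The two capacity figures for each drive come from the difference between decimal gigabytes (10^9 bytes) and binary gibibytes (2^30 bytes). As a quick sanity check, a 200 decimal-GB drive works out to roughly 186GiB; the helper name below is illustrative only.

```python
GB = 10**9   # decimal gigabyte, the unit drive vendors advertise
GIB = 2**30  # binary gibibyte, the unit many operating systems report


def gb_to_gib(gigabytes: float) -> float:
    """Convert decimal gigabytes to binary gibibytes."""
    return gigabytes * GB / GIB


per_drive = gb_to_gib(200)
print(f"One 200GB drive: {per_drive:.2f} GiB")
print(f"Four drives striped together: {4 * per_drive:.2f} GiB")
```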

The second PCB is home to 896GB of Intel's 25nm MLC-HET NAND, spread across 28 TSSOP packages. The third PCB is only present if you order the 800GB version, and it adds an extra 896GB of NAND (another 28 packages). Even in a fully populated three-board stack, the 910 only occupies a single PCIe slot.

The 910's TDP is set at 25W and requires cooling capable of moving air at 200 linear feet per minute for proper operation.

The use of LSI's 2008 SAS PCIe controller makes sense as there's widespread OS support for the controller; in many cases you won't even need to supply a 3rd party driver. The 910 isn't bootable, but I don't believe that's much of an issue as you're more likely to deploy a server with a small boot drive anyway. There's also no support for hardware encryption, a more unfortunate omission.

Intel's performance specs for the 910 are understandably awesome:

| Intel SSD 910 Performance Specs | 400GB | 800GB |
|---|---|---|
| Random 4KB Read (Up to) | 90K IOPS | 180K IOPS |
| Random 4KB Write (Up to) | 38K IOPS | 75K IOPS |
| Sequential Read (Up to) | 1000 MB/s | 2000 MB/s |
| Sequential Write (Up to) | 750 MB/s | 1000 MB/s |

Intel's specs come from aggregating performance across all controllers, but you're still looking at a great combination of performance and capacity. These numbers are applicable to both compressible and incompressible data.
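Since the quoted specs are aggregates, dividing them by the controller count gives a rough idea of what each individual 200GB drive contributes. The sketch below assumes the load is spread evenly across controllers, which Intel does not state; the dictionary layout and function name are invented for this example.

```python
# "Up to" figures from Intel's spec table; controller counts from the
# board description (two controllers on the 400GB card, four on the 800GB).
SPECS = {
    "400GB": {"controllers": 2, "read_iops": 90_000, "write_iops": 38_000,
              "seq_read_mbps": 1000, "seq_write_mbps": 750},
    "800GB": {"controllers": 4, "read_iops": 180_000, "write_iops": 75_000,
              "seq_read_mbps": 2000, "seq_write_mbps": 1000},
}


def per_controller(model: str) -> dict:
    """Split a model's aggregate specs evenly across its controllers."""
    spec = SPECS[model]
    count = spec["controllers"]
    return {name: value / count
            for name, value in spec.items() if name != "controllers"}


for model in SPECS:
    print(model, per_controller(model))
```

Read performance and random writes scale almost linearly with controller count, while the per-controller sequential write share is lower on the 800GB card (250 MB/s versus 375 MB/s), so the aggregate write figure is evidently not limited by the controllers alone.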

The 910 will ship with a software tool that allows you to get even more performance out of the drive (up to 1.5GB/s write speed) by increasing the board's operating power to 28W from 25W.

| Intel SSD 910 Endurance Ratings | 400GB | 800GB |
|---|---|---|
| 4KB Random Write | Up to 5PB | Up to 7PB |
| 8KB Random Write | Up to 10PB | Up to 14PB |

The 910 is rated for up to 2.5PB of 4KB or 3.5PB of 8KB random writes per NAND module (200GB).
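One way to make petabyte-scale endurance ratings more tangible is to express them as full-capacity overwrites. The sketch below does that for the whole-card ratings in the endurance table, using decimal units (1PB = 1,000,000GB). The per-day figure depends on a service life that the article does not give, so the five-year default is purely an assumption for illustration.

```python
GB_PER_PB = 1_000_000  # decimal units: 1PB = 1,000,000GB


def full_drive_writes(endurance_pb: float, capacity_gb: float) -> float:
    """Number of times the whole card can be overwritten within its rating."""
    return endurance_pb * GB_PER_PB / capacity_gb


def drive_writes_per_day(endurance_pb: float, capacity_gb: float,
                         years: float = 5.0) -> float:
    """Full-card writes per day; `years` is an assumed service life."""
    return full_drive_writes(endurance_pb, capacity_gb) / (years * 365)


# (capacity in GB, 4KB rating in PB, 8KB rating in PB) from the table.
for capacity_gb, pb_4k, pb_8k in [(400, 5, 10), (800, 7, 14)]:
    print(f"{capacity_gb}GB: {full_drive_writes(pb_4k, capacity_gb):,.0f} "
          f"full writes at 4KB, {full_drive_writes(pb_8k, capacity_gb):,.0f} "
          f"at 8KB, about {drive_writes_per_day(pb_8k, capacity_gb):.1f} "
          f"per day at 8KB over an assumed 5 years")
```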

The pricing is also fairly reasonable. The 400GB model carries a $1929 MSRP while the 800GB will set you back $3859; both come in below $5/GB. Samples are available today, with the first production units of Intel's SSD 910 available sometime in the first half of the year.

| Intel SSD 910 Pricing | 400GB | 800GB |
|---|---|---|
| MSRP | $1929 | $3859 |
| $ per GB | $4.8225 | $4.8238 |

I have to say that I'm pretty excited to see Intel's 910 in action. Intel's reputation as an SSD maker carries a lot of weight in the enterprise market already. The addition of a high-end PCIe solution will likely be well received by its existing customers and others who have been hoping for such a solution.

71 Comments


Sabresiberian - Thursday, April 12, 2012

A gibibyte isn't a base 2 number, but a number derived from powers of 2. Base 2 numbers only contain 1's and 0's.

http://en.wikipedia.org/wiki/Gibibyte

If you are going to correct someone and sneer at them for their usage ("at least do it correctly"), I suggest you know what you are talking about and spell correctly.

;)

Juddog - Thursday, April 12, 2012

7 - 14 PB is a sick amount of endurance for an SSD - it seems like everybody here is focusing on the IOPS but the endurance of 7 - 14 PB is just crazy for an SSD. Something like that would make an excellent longer-term investment; a lot of companies are still hesitant to jump into the SSD market for their servers because of the endurance issue.

The only reason I mention this is that I've personally spoken with infrastructure guys / DBA's that won't invest in SSDs because of the reliability factor - they want something that will take a constant beating in terms of IO at a constant rate.

SQLServerIO - Thursday, April 12, 2012

I'm very happy with the endurance. As a DBA who has had solid state in production systems for the last four years if you REALLY understand your write load you find that endurance of 1TB to 2TB is good enough for two to three years of heavy use. Even "heavy write" databases see 20% writes vs reads. Fusion-io rates some of their cards at 32PB of write endurance but do cost a bit more :)

FunBunny2 - Thursday, April 12, 2012

Folklore says that SSD death is more predictable than HDD, modulo infant mortality. Have you found this to be true?

rs2 - Thursday, April 12, 2012

I just love it when a ~$4000 component is considered to have "fairly reasonable" pricing.

FunBunny2 - Thursday, April 12, 2012

Compared to STEC or Fusion-io or Texas Memory or Violin, yeah, it is. Whether it's a relevant part to this site's gamers, is the other question.

Sabresiberian - Thursday, April 12, 2012

Exactly; the readers of Anandtech.com aren't all "gamers", Anandtech never pretended to be a "gamer" site, and many enthusiasts build for actual business and scientific work, not just playing games.

This thing isn't for me; I build as an "enthusiast gamer". The Revodrive is the best solution I know about, for my purposes and in PCIe form. This device is clearly intended for a different use (I was hoping it would be more like the Revodrive, but it's not). Not knowing much at all about building that kind of serious machine, I can hardly expect to comment knowledgeably on the price (except by paying attention to what others, who are knowledgeable, post here).

;)

Zstream - Thursday, April 12, 2012

So, the best solution would be to have a raid-1 setup with two regular spindle drives and move the "golden images" to this SSD?

quanstro - Thursday, April 12, 2012

if you compare against six 80gb ssd 320s, you would get a bigger drive (480gb), more iops (237k), more throughput (1440mb/s) and lower cost ($834). and if you need more capacity, you can add capacity in small hot-swappable increments.

the 320s would also be bootable and offer aes-128 and could fit on most any motherboard-based controller.

i'm not sure i see where a pci-e attached controller would offer a better solution than regular ssds yet.

ShieTar - Thursday, April 12, 2012

You forgot to mention the 3.6k writing iops of that solution. And the endurance that is lower by more than a factor of 100.

Also, I would expect a significant percentage of the potential customers to put more than one of these boards into one server. Server-Boards come with up to 7 PCIe x16 slots, and you cannot connect 42 ssd 320s to a motherboard controller. So you need to be putting SAS-Controllers into your PCIe slots anyways.

So, if nothing else you save yourself dozens of cables which can fail, and you save the space of the 42 SSDs, which helps with the cooling.