NVIDIA GeForce GTX 680 Review: Retaking The Performance Crown

by Ryan Smith on March 22, 2012 9:00 AM EST

Compute: What You Leave Behind?

As always, our final set of benchmarks is a look at compute performance. As we mentioned in our discussion of the Kepler architecture, GK104’s improvements seem to be compute-neutral at best and harmful to compute performance at worst. NVIDIA has made it clear that they are focusing first and foremost on gaming performance with GTX 680, and in the process are deemphasizing compute performance. Why? Let’s take a look.

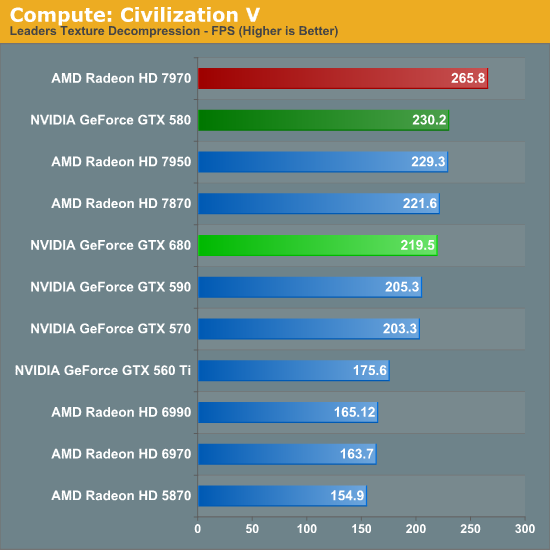

Our first compute benchmark comes from Civilization V, which uses DirectCompute to decompress textures on the fly. Civ V includes a sub-benchmark that exclusively tests the speed of its texture decompression algorithm by repeatedly decompressing the textures required for one of the game’s leader scenes. Note that this is a DX11 DirectCompute benchmark.

Remember when NVIDIA used to sweep AMD in Civ V Compute? Times have certainly changed. AMD’s shift to GCN has rocketed them to the top of our Civ V Compute benchmark. Meanwhile, in what’s probably the most realistic DirectCompute benchmark we have, the GTX 680 loses to the GTX 580, never mind the 7970. It’s not by much, mind you, but in this case the GTX 680, for all of its functional units and its core clock advantage, doesn’t have the compute performance to stand toe-to-toe with the GTX 580.

At first glance our initial assumptions would appear to be right: Kepler’s scheduler changes have weakened its compute performance relative to Fermi.

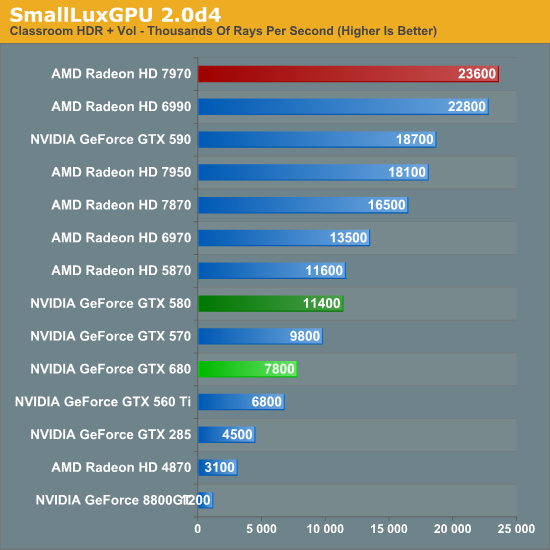

Our next benchmark is SmallLuxGPU, the GPU ray tracing branch of the open source LuxRender renderer. We’re now using a development build from the version 2.0 branch, and we’ve moved on to a more complex scene that hopefully will provide a greater challenge to our GPUs.

Civ V was bad; SmallLuxGPU is worse. At this point the GTX 680 can’t even compete with the GTX 570, let alone anything Radeon. In fact the GTX 680 has more in common with the GTX 560 Ti than with anything else.

On that note, since we weren’t going to significantly change our benchmark suite for the GTX 680 launch, NVIDIA had a solid hunch that we were going to use SmallLuxGPU in our tests, and addressed it specifically. Apparently NVIDIA has put absolutely no time into optimizing their now all-important Kepler compiler for SmallLuxGPU, choosing to focus on games instead. While that doesn’t make it clear how much of GTX 680’s performance is due to the compiler versus a general loss in compute performance, it does offer at least a slim hope that NVIDIA can improve their compute performance.

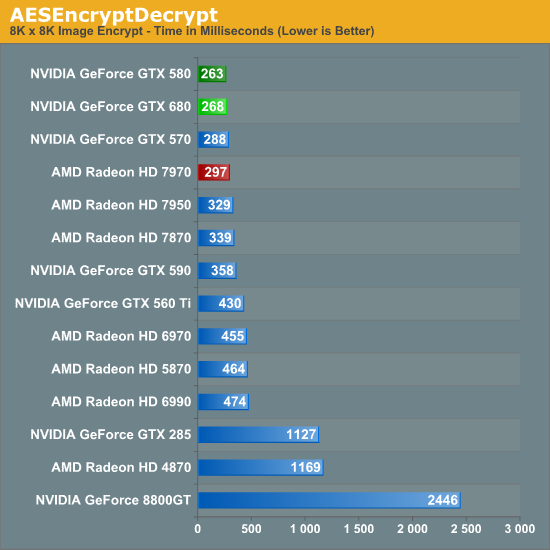

For our next benchmark we’re looking at AESEncryptDecrypt, an OpenCL AES encryption routine that encrypts/decrypts an 8K x 8K pixel square image file. The result of this benchmark is the average time to encrypt the image over a number of iterations of the AES cipher.
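The reported figure is a mean over repeated runs rather than a single timing. A minimal sketch of that averaging, assuming a host-side wall-clock timer; `average_time` and the toy workload are hypothetical illustrations, not code from the AESEncryptDecrypt sample, and the workload is not AES.

```python
import time
from typing import Callable


def average_time(workload: Callable[[], object], iterations: int) -> float:
    """Return the mean wall-clock time, in seconds, of `iterations` runs of `workload`.

    For a GPU kernel the workload must block until the device is done
    (for OpenCL, e.g. by calling clFinish on the command queue); otherwise
    the timer only measures how long it takes to enqueue the work.
    """
    if iterations <= 0:
        raise ValueError("iterations must be positive")
    total = 0.0
    for _ in range(iterations):
        start = time.perf_counter()
        workload()
        total += time.perf_counter() - start
    return total / iterations


# Hypothetical stand-in workload: XOR a 256 KiB buffer with a constant byte.
# It is NOT AES; it only gives the timer something to measure.
BUFFER = bytes(256 * 1024)


def toy_cipher() -> bytes:
    return bytes(b ^ 0x5A for b in BUFFER)


mean_s = average_time(toy_cipher, iterations=5)
print(f"mean time per run: {mean_s * 1000:.2f} ms")
```

Averaging over several iterations smooths out timer resolution and run-to-run noise, which matters when a single pass finishes in milliseconds.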

With our AES encryption benchmark, NVIDIA begins to recover. GTX 680 is still technically slower than GTX 580, but only marginally so. If nothing else it maintains NVIDIA’s general lead in this benchmark, and is the first sign that GTX 680’s compute performance isn’t all bad.

For our fourth compute benchmark we wanted to reach out and grab something for CUDA, given the popularity of NVIDIA’s proprietary API. Unfortunately we were largely met with failure, for much the same reasons as when the Radeon HD 7970 launched. Just as many OpenCL programs were hand-optimized and didn’t know what to do with the Southern Islands architecture, many CUDA applications didn’t know what to do with GK104 and its Compute Capability 3.0 feature set.

To be clear, NVIDIA’s “core” CUDA functionality remains intact; PhysX, video transcoding, etc. all work. But third-party applications are a much bigger issue. Among the CUDA programs that failed were NVIDIA’s own Design Garage (a GTX 480 showcase package), AccelerEyes’ GBENCH MatLab benchmark, and the latest Folding@Home client. Since our goal here is to stick to consumer/prosumer applications, reflecting the fact that the GTX 680 is a consumer card, we did somewhat limit ourselves by ruling out a number of professional CUDA applications, but there’s no reason to think compatibility would fare any better there.

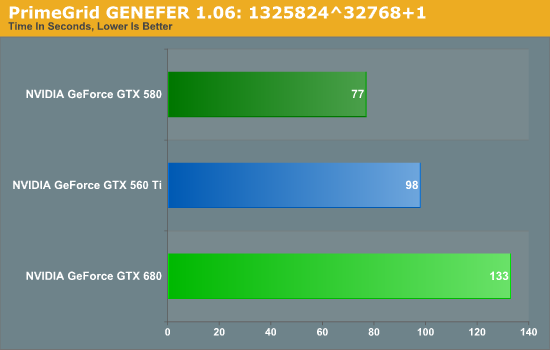

We ultimately started looking at distributed computing applications and settled on PrimeGrid, whose CUDA-accelerated GENEFER client worked with GTX 680. Interestingly enough, it primarily uses double precision math; whether this is a good thing or not is up to the reader, given the GTX 680’s anemic double precision performance.

Because it’s based around double precision math, the GTX 680 does rather poorly here, but the surprising bit is that it did so to a larger degree than we’d expect. The GTX 680’s FP64 performance is 1/24th its FP32 performance, compared to 1/8th on GTX 580 and 1/12th on GTX 560 Ti. Still, we would expect performance to at least hold steady relative to the GTX 560 Ti, given that the GTX 680 has more than double the FP32 compute performance to offset the larger FP64 gap.

Instead we found that the GTX 680 takes 35% longer, when on paper it should be 20% faster than the GTX 560 Ti (largely due to the difference in the core clock). This makes for yet another test where the GTX 680 can’t keep up with the GTX 500 series, be it due to the change in the scheduler, or perhaps the greater pressure on the still-64KB L1 cache. Regardless of the reason, it is becoming increasingly evident that NVIDIA has sacrificed compute performance to reach their efficiency targets for GK104, which is an interesting shift from a company that was so gung-ho about compute performance, and a slightly concerning sign that NVIDIA may have lost faith in the GPU Computing market for consumer applications.
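The on-paper expectation can be reproduced with peak-throughput arithmetic. This is a back-of-the-envelope sketch: the CUDA core counts and clocks below are the cards’ published reference specifications (GTX 680 at its 1006 MHz base clock, the Fermi cards at their shader clocks), added by us rather than taken from this page, and it assumes one fused multiply-add (2 FLOPs) per core per clock. The FP64 divisors are the ratios quoted above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Gpu:
    name: str
    cuda_cores: int
    clock_mhz: float  # GTX 680: base clock; Fermi cards: shader (hot) clock
    fp64_divisor: int  # FP64 rate = FP32 rate / fp64_divisor

    def fp32_gflops(self) -> float:
        # Peak assumes one FMA (2 FLOPs) per core per clock.
        return self.cuda_cores * self.clock_mhz * 2 / 1000

    def fp64_gflops(self) -> float:
        return self.fp32_gflops() / self.fp64_divisor


GTX_680 = Gpu("GTX 680", 1536, 1006, 24)
GTX_580 = Gpu("GTX 580", 512, 1544, 8)
GTX_560_TI = Gpu("GTX 560 Ti", 384, 1645, 12)

for gpu in (GTX_680, GTX_580, GTX_560_TI):
    print(f"{gpu.name}: {gpu.fp32_gflops():.0f} GFLOPS FP32, "
          f"{gpu.fp64_gflops():.0f} GFLOPS FP64")

fp32_ratio = GTX_680.fp32_gflops() / GTX_560_TI.fp32_gflops()
fp64_ratio = GTX_680.fp64_gflops() / GTX_560_TI.fp64_gflops()
print(f"GTX 680 vs GTX 560 Ti: {fp32_ratio:.2f}x FP32, {fp64_ratio:.2f}x FP64")
# -> GTX 680 vs GTX 560 Ti: 2.45x FP32, 1.22x FP64

# Measured: the GTX 680 took 35% longer (run-time ratio 1.35), while on paper
# it should need only 1 / fp64_ratio of the GTX 560 Ti's time.
shortfall = 1.35 * fp64_ratio
print(f"shortfall vs. paper: {shortfall:.2f}x")
# -> shortfall vs. paper: 1.65x
```

Peak-FLOPS arithmetic only sizes the gap; it says nothing about whether the scheduler change, L1 cache pressure, or something else is responsible for it.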

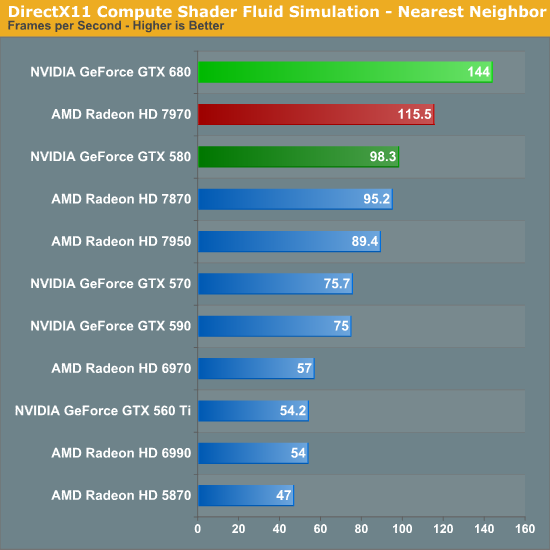

Our final benchmark once again looks at compute shader performance, this time through the Fluid simulation sample in the DirectX SDK. This program simulates the motion and interactions of a 16K-particle fluid using a compute shader, with a choice of several different algorithms. In this case we’re using an O(n^2) nearest neighbor method that is optimized by using shared memory to cache data.
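The shared-memory optimization is a tiling pattern: each thread group loads a block of particles into fast on-chip memory once, and every thread in the group then compares its own particle against that whole block before the next block is loaded. The sketch below emulates that loop structure on the CPU, assuming an SPH-style density sum with an unnormalized poly6-style weight; the function names, the 2D positions, the smoothing radius, and the tile size are illustrative assumptions, not the SDK sample’s code.

```python
import math
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def weight(r2: float, h2: float) -> float:
    # Unnormalized poly6-style kernel: nonzero only inside the smoothing radius.
    return (h2 - r2) ** 3 if r2 < h2 else 0.0


def density_naive(pos: Sequence[Point], h: float) -> List[float]:
    """Brute-force O(n^2): every particle reads every other particle directly."""
    h2 = h * h
    out = []
    for xi, yi in pos:
        rho = 0.0
        for xj, yj in pos:
            rho += weight((xi - xj) ** 2 + (yi - yj) ** 2, h2)
        out.append(rho)
    return out


def density_tiled(pos: Sequence[Point], h: float, tile: int = 256) -> List[float]:
    """Same O(n^2) work, reorganized the way a compute shader uses shared memory.

    Each pass of the outer loop plays the role of a thread group copying one
    tile of positions into group-shared memory; the inner loops are every
    thread then reading only that cached copy.
    """
    h2 = h * h
    out = [0.0] * len(pos)
    for start in range(0, len(pos), tile):
        shared = list(pos[start:start + tile])  # stand-in for groupshared memory
        for i, (xi, yi) in enumerate(pos):
            for xj, yj in shared:
                out[i] += weight((xi - xj) ** 2 + (yi - yj) ** 2, h2)
    return out


particles = [((i * 0.137) % 1.0, (i * 0.291) % 1.0) for i in range(64)]
naive = density_naive(particles, 0.2)
tiled = density_tiled(particles, 0.2, tile=16)
assert all(math.isclose(a, b) for a, b in zip(naive, tiled))
```

Tiling does not change the O(n^2) operation count. On a GPU its payoff is fewer global-memory reads, since each position is fetched once per thread group rather than once per thread.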

Redemption at last? In our final compute benchmark the GTX 680 finally shows that it can still succeed in some compute scenarios, taking a rather impressive lead over both the 7970 and the GTX 580. At this point it’s not particularly clear why the GTX 680 does so well here and only here, but the fact that this is a compute shader program as opposed to an OpenCL program may have something to do with it. NVIDIA needs solid compute shader performance for the games that use it; OpenCL and even CUDA performance however can take a backseat.

404 Comments


SlyNine - Sunday, March 25, 2012

Well, the drivers themselves can take more CPU power to run. But with a quad-core CPU the thought is laughable. Back in the single-CPU/core days it was actually an issue. And before DX9 (or 10), drivers were only capable of accessing single cores, I believe.

SlyNine - Sunday, March 25, 2012

Then look for an overclocked review. AnandTech is always going to do an out-of-the-box test for the first review. This is what they (AMD/NVIDIA) are promising you, nothing more.

papapapapapapapababy - Monday, March 26, 2012

USELESS! YESSS OMFG I can't wait to play the latest crappy Kinect port with this!... at 600,000,000 FPS and in 3-D! GTFO GUYS! REALLY...

Just put this ridiculously large, ugly, noisy, silly, overpriced toxic waste where it belongs: faaar away from me (sensible user), inside one bulky OnLive cloud server (and pushing Avatar 2 graphics, no HD PS2 ports).

henrikfm - Monday, March 26, 2012

Most monitors have a 60Hz refresh rate; you can't benefit from higher frame rates because only 60 frames per second are displayed.

Looking at the benchmarks at a resolution of 1920, the latest cards fail to deliver at least 60fps in 3 games: Crysis, Metro and BF3. In the first two games the HD7970 beats the GTX680; it only loses in BF3, where nVidia has a clear advantage (in my opinion AMD has to work on its drivers for BF3).

So, the GTX680 is faster when the speed really doesn't matter because you're already around 100fps. The guys who are running multiple monitors and higher resolutions will also have the money to buy multi-GPU setups, and that is another story.

Still, the GTX680 is the better card, but for $500 I would expect a card to deliver at least 60fps at 1920 in a 2008-released videogame like Crysis. Neither nVidia nor AMD can do that with a single GPU; it's disappointing.

gramboh - Monday, March 26, 2012

I'll agree about Metro, because there is a sequel (Last Light) coming out in Q1 2013 which will presumably be similar in the graphics department.

Crysis is irrelevant other than for benchmarking; who still plays it? The single-player campaign is entertaining for the eye candy once through (in 2008).

BF3 is the game that matters because of the MP component, people will be playing it for years to come. AMD really really has to improve performance on the 7950/7970 in BF3, I won't even consider buying it (vs. the 680) unless they can make up some significant ground.

CeriseCogburn - Tuesday, March 27, 2012

I just have to do it, sorry.

You forgot Shogun 2: Total War, the hardest game in this bench set, which Nvidia wins at all 3 resolutions.

You also forgot that average frame rates are not minimum frame rates, so you need an average far above 60 fps before you stop dipping below 60 fps.

Furthermore, not all the eye candy is cranked up, and cranking it drives the average and the dips even lower.

You got nothing right.

b3nzint - Monday, March 26, 2012

Back in the 7970 review, it had cool tech like PRT, MST hubs, DDMA and bla bla bla. Why doesn't the GTX 680 have sh** like that? Pardon my English. It's like this thing is built for only one purpose, and at that it's a success. Thanks

mpx - Monday, March 26, 2012

This new Nvidia card supposedly has an architecture that burdens the CPU with scheduling etc. It may mean that it requires a faster CPU than ATI cards to reach similar performance. And since fast CPUs are expensive, it may mean it's actually more expensive.

BoFox - Monday, March 26, 2012

The key word in your first sentence is "supposedly".

I see no evidence of this. It actually does far better in StarCraft 2, a game that already burdens the CPU. It also excels in Skyrim while still doing just fine in Civilization V, which are also the most CPU-intensive games out there.

BoFox - Monday, March 26, 2012

In SC2, which is a very CPU-dependent game, the card still does amazingly well against the rest. The same also goes for Skyrim, beating the red team by a whopping percentage.