NVIDIA GeForce GTX 680 Review: Retaking The Performance Crown

by Ryan Smith on March 22, 2012 9:00 AM EST

“How do you follow up on Fermi?” That’s the question we had going into NVIDIA’s press briefing for the GeForce GTX 680 and the Kepler architecture earlier this month. With Fermi NVIDIA not only captured the performance crown for gaming, but they managed to further build on their success in the professional markets with Tesla and Quadro. Though it was very clearly a rough start for NVIDIA, Fermi ended up doing quite well in the end.

So how do you follow up on Fermi? As it turns out, you follow it up with something that is in many ways more of the same. With a focus on efficiency, NVIDIA has stripped Fermi down to the core and then built it back up again, reducing power consumption and die size alike, all while maintaining most of the aspects we’ve come to know with Fermi. The end result is NVIDIA’s next generation GPU architecture: Kepler.

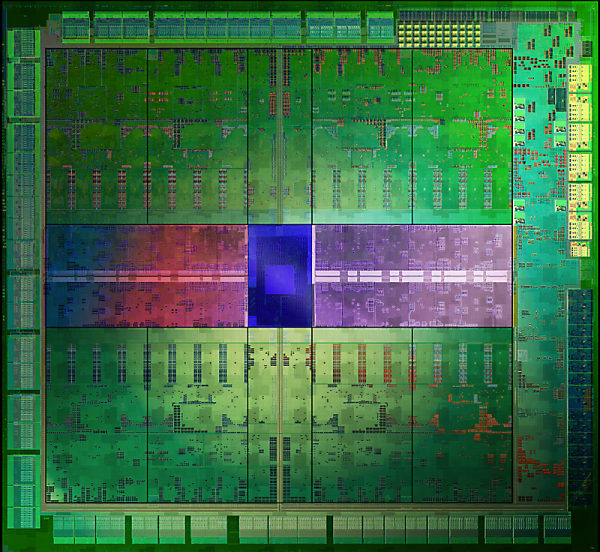

Launching today is the GeForce GTX 680, at the heart of which is NVIDIA’s new GK104 GPU, based on their equally new Kepler architecture. As we’ll see, not only has NVIDIA retaken the performance crown with the GeForce GTX 680, but they have done so in a manner truly befitting of their drive for efficiency.

| | GTX 680 | GTX 580 | GTX 560 Ti | GTX 480 |
| --- | --- | --- | --- | --- |
| Stream Processors | 1536 | 512 | 384 | 480 |
| Texture Units | 128 | 64 | 64 | 60 |
| ROPs | 32 | 48 | 32 | 48 |
| Core Clock | 1006MHz | 772MHz | 822MHz | 700MHz |
| Shader Clock | N/A | 1544MHz | 1644MHz | 1401MHz |
| Boost Clock | 1058MHz | N/A | N/A | N/A |
| Memory Clock | 6.008GHz GDDR5 | 4.008GHz GDDR5 | 4.008GHz GDDR5 | 3.696GHz GDDR5 |
| Memory Bus Width | 256-bit | 384-bit | 256-bit | 384-bit |
| Frame Buffer | 2GB | 1.5GB | 1GB | 1.5GB |
| FP64 | 1/24 FP32 | 1/8 FP32 | 1/12 FP32 | 1/12 FP32 |
| TDP | 195W | 244W | 170W | 250W |
| Transistor Count | 3.5B | 3B | 1.95B | 3B |
| Manufacturing Process | TSMC 28nm | TSMC 40nm | TSMC 40nm | TSMC 40nm |
| Launch Price | $499 | $499 | $249 | $499 |

Technically speaking Kepler’s launch today is a double launch. On the desktop we have the GTX 680, based on the GK104 GPU. Meanwhile in the mobile space we have the GT640M, which is based on the GK107 GPU. While NVIDIA is not like AMD in that they don’t announce products ahead of time, it’s a sure bet that we’ll eventually see GK107 move up to the desktop and GK104 move down to laptops in the future.

What you won’t find today however – and in a significant departure from NVIDIA’s previous launches – is Big Kepler. Since the days of the G80, NVIDIA has always produced a large 500mm²+ GPU to serve both as a flagship GPU for their consumer lines and the fundamental GPU for their Quadro and Tesla lines, and has always launched with that big GPU first. At 294mm² GK104 is not Big Kepler, and while NVIDIA doesn’t comment on unannounced products, somewhere in the bowels of NVIDIA Big Kepler certainly lives, waiting for its day in the sun. As such this is the first NVIDIA launch where we’re not in a position to talk about the ramifications for Tesla or Quadro, or really for that matter what NVIDIA’s peak performance for this generation might be.

Anyhow, we’ll jump into the full architectural details of GK104 in a bit, but let’s quickly talk about the specs first. Unlike Fermi or AMD’s GCN, Kepler is not a brand new architecture. To be sure there are some very important changes, but at a high level the workings of Kepler have not significantly changed compared to Fermi. With Kepler what we’re ultimately looking at is a die shrunk distillation of Fermi, and in the case of GK104 that’s specifically a distillation of GF114 rather than GF110.

Starting from the top, GTX 680 features a fully enabled GK104 GPU – unlike the first generation of Fermi products there are no shenanigans with disabled units here. This means GTX 680 has 1536 CUDA cores, a massive increase from GTX 580 (512) and GTX 560 Ti (384). Note however that NVIDIA has dropped the shader clock with Kepler, opting instead to double the number of CUDA cores to achieve the same effect, so while 1536 CUDA cores is a big number it’s really only twice the number of cores of GF114 as far as performance is concerned. Joining those 1536 CUDA cores are 32 ROPs and 128 texture units; the number of ROPs is effectively unchanged from GF114, while the number of texture units has been doubled. Meanwhile on the memory and cache side of things GTX 680 features a 256-bit memory bus coupled with 512KB of L2 cache.

As for clockspeeds, GTX 680 will introduce a few wrinkles courtesy of Kepler. As we mentioned before, the shader clock is gone in Kepler, with everything now running off of the core clock (or as NVIDIA likes to put it, the graphics clock). At the same time Kepler introduces the Boost Clock – effectively a turbo clock for the GPU – so we still have a third clock to pay attention to. With that said, GTX 680 ships at a base clock of 1006MHz and a boost clock of 1058MHz. On the memory side of things NVIDIA has finally managed to fully hammer out their memory controller, allowing NVIDIA to ship with a memory clock of 6.008GHz.

Taken altogether, on paper GTX 680 has roughly 195% the shader performance, 260% the texture performance, 87% of the ROP performance, and 100% of the memory bandwidth of GTX 580. Or as compared to its more direct ancestor the GTX 560 Ti, GTX 680 has 244% of the shader performance, 244% of the texture performance, 122% of the ROP performance, and 150% of the memory bandwidth of GTX 560 Ti. Compared to GTX 560 Ti NVIDIA has effectively doubled every aspect of their GPU except for ROP performance, which is the one area where NVIDIA believes they already have enough performance.
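The on-paper comparison above can be reproduced from the spec table. The following is a minimal sketch, not NVIDIA's or our benchmark methodology: it assumes theoretical throughput scales as unit count times clock, uses base clocks only (the boost clock is ignored), and treats the GTX 680's shader clock as equal to its core clock. The `Card` class and `paper_ratios` function are illustrative names introduced here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Card:
    cores: int          # stream processors (CUDA cores)
    tex_units: int
    rops: int
    core_mhz: float
    shader_mhz: float   # Kepler has no separate shader clock, so this equals core_mhz
    mem_ghz: float      # effective GDDR5 data rate
    bus_bits: int


# Figures copied from the spec table.
GTX_680 = Card(1536, 128, 32, 1006, 1006, 6.008, 256)
GTX_580 = Card(512, 64, 48, 772, 1544, 4.008, 384)
GTX_560_TI = Card(384, 64, 32, 822, 1644, 4.008, 256)


def paper_ratios(new: Card, old: Card) -> dict[str, float]:
    """Theoretical throughput of `new` relative to `old` (1.0 means parity)."""
    return {
        "shader": (new.cores * new.shader_mhz) / (old.cores * old.shader_mhz),
        "texture": (new.tex_units * new.core_mhz) / (old.tex_units * old.core_mhz),
        "rop": (new.rops * new.core_mhz) / (old.rops * old.core_mhz),
        "memory": (new.bus_bits * new.mem_ghz) / (old.bus_bits * old.mem_ghz),
    }


for name, old in (("GTX 580", GTX_580), ("GTX 560 Ti", GTX_560_TI)):
    ratios = paper_ratios(GTX_680, old)
    print(name, {k: f"{v:.1%}" for k, v in ratios.items()})
```

Under these assumptions the results land within a percentage point of the figures quoted above; the small differences come down to whether the percentages are rounded or truncated.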

On the power front, GTX 680 has a few different numbers to contend with. NVIDIA’s official TDP is 195W, though as with the GTX 500 series they still consider this an average number rather than a true maximum. The second number is the boost target, which is the highest power level that GPU Boost will turbo to; that number is 170W. Finally, while NVIDIA doesn’t publish an official idle TDP, the GTX 680 should have an idle TDP of around 15W. Overall GTX 680 is targeted at a power envelope somewhere between GTX 560 Ti and GTX 580, though it’s closer to the former than the latter.

As for GK104 itself, as we’ve already mentioned GK104 is a smaller than average GPU for NVIDIA, with a die size of 294mm². This is roughly 89% the size of GF114, or compared to GF110 a mere 56% of the size. Inside that 294mm² NVIDIA packs 3.5B transistors thanks to TSMC’s 28nm process, only 500M more than GF110 and largely explaining why GK104 is so small compared to GF110. Or to once again make a comparison to GF114, this is 1550M (79%) more than GF114, which makes the fact that GK104 doubles most of GF114’s functional units all the more surprising. With Kepler NVIDIA is going to be heavily focusing on efficiency, and this is one such example of Kepler’s efficiency in action.
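As a rough illustration of the die-size argument, the sketch below works out transistor density from the numbers given here. It takes transistor counts from the spec table and back-derives the GF114 and GF110 die areas from the quoted 89% and 56% size fractions, so those two areas are approximations implied by rounded percentages, not published die sizes. All names in the code are illustrative.

```python
GK104_AREA_MM2 = 294.0

# Transistor counts in millions, from the spec table.
TRANSISTORS_M = {"GK104": 3500, "GF110": 3000, "GF114": 1950}

# GK104's die size as a fraction of each older die, as quoted in the text.
AREA_FRACTION = {"GF114": 0.89, "GF110": 0.56}


def implied_area_mm2(chip: str) -> float:
    """Die area implied by the quoted size fractions (GK104's own area is given)."""
    if chip == "GK104":
        return GK104_AREA_MM2
    return GK104_AREA_MM2 / AREA_FRACTION[chip]


def density_m_per_mm2(chip: str) -> float:
    """Millions of transistors per square millimetre."""
    return TRANSISTORS_M[chip] / implied_area_mm2(chip)


def extra_transistors(chip: str) -> tuple[int, float]:
    """Transistors GK104 has beyond `chip`: (millions, fraction of `chip`'s count)."""
    delta = TRANSISTORS_M["GK104"] - TRANSISTORS_M[chip]
    return delta, delta / TRANSISTORS_M[chip]


for chip in TRANSISTORS_M:
    print(f"{chip}: ~{implied_area_mm2(chip):.0f} mm², "
          f"{density_m_per_mm2(chip):.1f}M transistors/mm²")
```

With these inputs GK104 comes out at roughly twice the transistor density of either 40nm part, which is about what a full-node shrink from 40nm to 28nm would suggest; `extra_transistors` gives the transistor deltas directly from the table's 3.5B, 3B, and 1.95B figures.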

Last but not least, let’s talk about pricing and availability. GTX 680 is the successor to GTX 580 and NVIDIA will be pricing it accordingly, with an MSRP of $500. This is the same price that the GTX 580 and GTX 480 launched at back in 2010, and while it’s consistent for an x80 video card it’s effectively a conservative price given GK104’s die size. NVIDIA does need to bring their pricing in at the right point to combat AMD, but they’re in no more of a hurry than AMD to start any price wars, so it’s conservative pricing all around for the time being.

NVIDIA’s competition of course is AMD’s recently launched Radeon HD 7970 and 7950. With those cards priced at $550 and $450 respectively, the GTX 680 sits right in between them in terms of pricing. However with regard to gaming performance the GTX 680 is generally more than a match for the 7970, which is going to leave AMD in a tough spot. AMD’s partners do have factory overclocked cards, but those only close the performance gap at the cost of an even wider price gap. NVIDIA has priced the GTX 680 to undercut the 7970, and that’s exactly what will be happening today.

As for availability, we’re told that it should be similar to past high end video card launches, which is to say it will be touch and go. As with any launch NVIDIA has been stockpiling cards but it’s still a safe bet that GTX 680 will sell out in the first day. Beyond the initial launch it’s not clear whether NVIDIA will be able to keep up with demand over the next month or so. NVIDIA has been fairly forthcoming to their investors about how 28nm production is going, and while yields have been acceptable TSMC doesn’t have enough wafers to satisfy all of their customers at once, so NVIDIA is still getting fewer wafers than they’d like. Until very recently AMD’s partners have had a difficult time keeping the 7970 in stock, and it’s likely it will be the same story for NVIDIA’s partners.

404 Comments

CeriseCogburn - Sunday, March 25, 2012 - link

They get to show amd "catching up" so they like it. They get to try to puke out Kepler's 2G ram and make amd's 3G shine, so they "can't resist" - and when frame rates fall below playable, "they all of a sudden" "don't care", even when the puking attempt fails. They haven't been able to resist since the 580 w 1.5G vs 2G 6950/6970 it was a great blame the low ram game for any changes.

Then they checked 6950 1G 2G and 2G was slower...but so what.

Now 2G Kepler has put the ram lie to rest even in triple monitor gaming... but any lesser win or loss or slimming margin can still be blamed on that, it gets people "buying the amd card" and they get real frustrated here when they can't figure out why Nvidia is winning when they don't believe it should be. It's always expressed in the article how shocked they are. So ram is a convenient scapegoat. It's always used a "future proofing" notion as well, though no evidence has ever surfaced for that.

_vor_ - Sunday, March 25, 2012 - link

What's with all the nerdrage? Do you work for NVIDIA?

formulav8 - Sunday, March 25, 2012 - link

Get over yourself already. NVidia doesn't even like You. Can't believe how people feel about a stinking stupid corporation.

CeriseCogburn - Tuesday, March 27, 2012 - link

It's not about a corporation it's about facts guy. Facts mean my friends and my readers get the best they can get for the buck they are paying.

Just because amd is behind and therefore lies are told, does not mean the truth should not shine through !

The truth shall shine through !

AnnonymousCoward - Sunday, March 25, 2012 - link

Personally, I don't care if the card has 64kB of RAM. Or 8 million stream processors. Performance, cost, power, and noise are what matter.

And back to my point: performance in the 20-50fps range at 2560x1600 4xAA is meaningless and not a criteria for judgment.

CeriseCogburn - Tuesday, March 27, 2012 - link

I never disagreed with that point I merely explained why things are done in such and such a way while ignoring other things.

It's not difficult at all.

Zephyr66z0r - Sunday, March 25, 2012 - link

Well I understand 'some' of the tech behind the GTX680 one thing stands out. 256bit bus width, when you see that with nvidia its along the lines of GTX560.... so does that mean there's going be a 384bit (mid-high) or 512bit(high-enth, 256bit + 256bit + 2 GPU) card/s coming out?

I can't wait, anyone done SLi with it yet?

dmnwlv - Sunday, March 25, 2012 - link

First off, I think nVidia has done a good job with the new GTX680.

However I do not need a game that is already running at 100+ frames to be even faster.

It needs to be fast at where it counts - games that are still running slow at 60 fps and below.

For this, of 3 relevant games, nVidia is faster at just one of them. Experience (if you also remember) has shown that the results could be very different once frames for some settings/games hit below 60fps.

Hence I cannot agree with all the big f about GTX680 is so much faster fuss.

You guys are led by the heart (much alike ati fanboys you used to call) than the brain.

And all other compute tests are non-relevant to me (and majority of you to be honest).

gramboh - Monday, March 26, 2012 - link

What about a little game (that several million people play) called Battlefield 3? NV has a massive lead with the GTX 680 over the 7970/7950. AT only benches single player, but the game is even more punishing in 64 player multiplayer. Having a smooth framerate at max detail with 4X AA/16X AF is a big competitive advantage and makes the game significantly more enjoyable.

Kind of disappointed the card isn't faster in Witcher 2, which I think has the best graphics of a single player game.

CeriseCogburn - Tuesday, March 27, 2012 - link

Have all of you people repeating that FUD forgotten Shogun 2 Total War ?

It's the hardest game in the bench set according to anandtech...

How is it that THE HARDEST GAME that Nvidia swept top to bottom at every resolution is suddenly and completely forgotten about, while we hear these other FUD declarations ?

How does that work, just repeat what some other mistaken fudder spewed ?