The Apple iPad Review (2012)

by Vivek Gowri & Anand Lal Shimpi on March 28, 2012 3:14 PM EST

The A5X SoC

The ridiculousness of the new iPad begins at its heart: the A5X SoC.

The A5X breaks Apple's longstanding tradition of debuting its next smartphone SoC in the iPad first. I say this with such certainty because the A5X is an absolute beast of an SoC. As it's implemented in the new iPad, the A5X under load consumes more power than an entire iPhone 4S.

In many ways the A5X is a very conservative design, while in others it's absolutely pushing the limits of what had been previously done in a tablet. Similar to the A5 and A4 before it, the A5X is still built on Samsung's 45nm LP process. Speculation about a shift to 32nm or even a move to TSMC was rampant this go around. I'll admit I even expected to see a move to 32nm for this chip, but Apple decided that 45nm was the way to go.

Why choose 45nm over smaller, cooler running options that are on the table today? Process maturity could be one reason. Samsung has yet to ship even its own SoC at 32nm, much less one for Apple. It's quite possible that Samsung's 32nm LP simply wasn't ready/mature enough for the sort of volumes Apple needed for an early 2012 iPad launch. The fact that there was no perceivable slip in the launch timeframe of the new iPad (roughly 12 months after its predecessor) does say something about how early 32nm readiness was communicated to Apple. Although speculation is quite rampant about Apple being upset enough with Samsung to want to leave for TSMC, the relationship on the foundry side appears to be good from a product delivery standpoint.

Another option would be that 32nm was ready but Apple simply opted against using it. Companies arrive at different conclusions as to how aggressive they need to be on the process technology side. For example, ATI/AMD was typically more aggressive on adopting new process technologies while NVIDIA preferred to make the transition once all of the kinks were worked out. It could be that Apple is taking a similar approach. Wafer costs generally go up at the start of a new process node. Combine that with lower yields and strict design rules, and there's no guarantee that you'd actually save any money by moving to a new process technology, at least not easily or initially. The associated risk of something going wrong might have been one that Apple wasn't willing to accept.

CPU Specification Comparison

| CPU | Manufacturing Process | Cores | Transistor Count | Die Size |
| --- | --- | --- | --- | --- |
| Apple A5X | 45nm | 2 | ? | 163mm² |
| Apple A5 | 45nm | 2 | ? | 122mm² |
| Intel Sandy Bridge 4C | 32nm | 4 | 995M | 216mm² |
| Intel Sandy Bridge 2C (GT1) | 32nm | 2 | 504M | 131mm² |
| Intel Sandy Bridge 2C (GT2) | 32nm | 2 | 624M | 149mm² |
| NVIDIA Tegra 3 | 40nm | 4+1 | ? | ~80mm² |
| NVIDIA Tegra 2 | 40nm | 2 | ? | 49mm² |

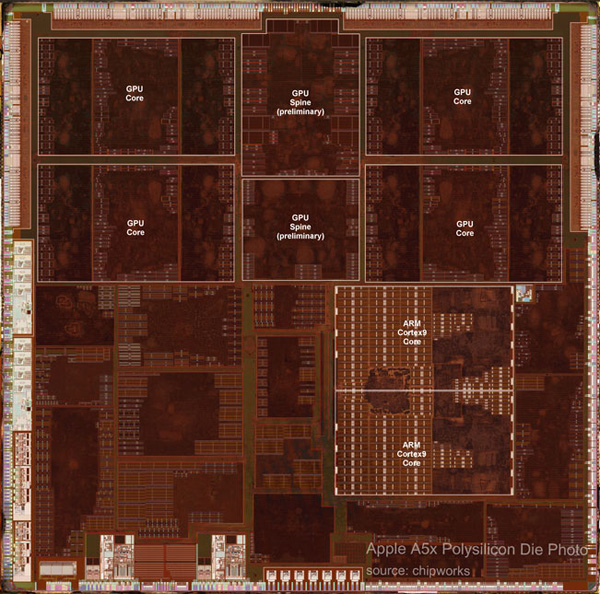

Whatever the reasoning, the outcome is significant: the A5X is approximately 2x the size of NVIDIA's Tegra 3, and even larger than a dual-core Sandy Bridge desktop CPU. Its floorplan is below:

Courtesy: Chipworks
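The size comparison can be checked with a few lines of arithmetic. The snippet below is only a sketch: the die areas are copied from the comparison table, the Tegra 3 figure is approximate, and the names `die_size_mm2` and `size_ratio` are made up for illustration.

```python
# Die sizes in mm^2, copied from the CPU specification comparison table.
die_size_mm2 = {
    "Apple A5X": 163,
    "Apple A5": 122,
    "Intel Sandy Bridge 2C (GT2)": 149,
    "NVIDIA Tegra 3": 80,  # the table lists this as approximate (~80mm^2)
}


def size_ratio(chip: str, baseline: str) -> float:
    """Return how many times larger `chip` is than `baseline` by die area."""
    return die_size_mm2[chip] / die_size_mm2[baseline]


for baseline in ("NVIDIA Tegra 3", "Intel Sandy Bridge 2C (GT2)", "Apple A5"):
    ratio = size_ratio("Apple A5X", baseline)
    print(f"A5X vs {baseline}: {ratio:.2f}x the die area")
```

By these numbers the A5X is a little over 2x the area of Tegra 3, about 9% larger than the bigger dual-core Sandy Bridge die, and roughly a third larger than the A5. Die area alone says nothing about transistor count, since the chips are built on different process nodes.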

From the perspective of the CPU, not much has changed with the A5X. Apple continues to use a pair of ARM Cortex A9 cores running at up to 1.0GHz, each with MPE/NEON support and a shared 1MB L2 cache. While it's technically possible for Apple to have ramped up CPU clocks in pursuit of higher performance (A9 designs have scaled up to 1.6GHz on 4x-nm processes), Apple has traditionally been very conservative on CPU clock frequency. Higher clocks require higher voltages (especially on the same process node), and because dynamic power scales roughly with frequency times the square of voltage, power consumption rises much faster than clock speed.
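To put rough numbers on the clock versus power tradeoff, here is a minimal sketch using the textbook approximation for dynamic CMOS power, P ≈ C·V²·f. The 20% voltage increase is an assumed figure for illustration only, not a measured A5X value, and leakage power is ignored.

```python
def relative_dynamic_power(freq_ratio: float, voltage_ratio: float) -> float:
    """Dynamic power relative to a baseline, using P ~ C * V^2 * f.

    Switched capacitance C is assumed unchanged (same design, same process),
    so it cancels out of the ratio.
    """
    return freq_ratio * voltage_ratio ** 2


# Hypothetical scenario: raise the CPU clock from 1.0GHz to 1.6GHz.
freq_ratio = 1.6 / 1.0

# Case 1: voltage held constant (the best case).
same_voltage = relative_dynamic_power(freq_ratio, 1.0)

# Case 2: voltage raised by an assumed 20% to sustain the higher clock.
higher_voltage = relative_dynamic_power(freq_ratio, 1.2)

print(f"1.6x clock, same voltage: {same_voltage:.2f}x dynamic power")
print(f"1.6x clock, +20% voltage: {higher_voltage:.2f}x dynamic power")
```

Under these assumptions a 60% clock increase costs about 2.3x the dynamic power once the voltage bump is included, which is why a frequency increase is far from free on an unchanged process node.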

ARM Cortex A9 Based SoC Comparison

| | Apple A5X | Apple A5 | TI OMAP 4 | NVIDIA Tegra 3 |
| --- | --- | --- | --- | --- |
| Manufacturing Process | 45nm LP | 45nm LP | 45nm LP | 40nm LPG |
| Clock Speed | Up to 1GHz | Up to 1GHz | Up to 1GHz | Up to 1.5GHz |
| Core Count | 2 | 2 | 2 | 4+1 |
| L1 Cache Size | 32KB/32KB | 32KB/32KB | 32KB/32KB | 32KB/32KB |
| L2 Cache Size | 1MB | 1MB | 1MB | 1MB |
| Memory Interface to the CPU | Dual Channel LP-DDR2 | Dual Channel LP-DDR2 | Dual Channel LP-DDR2 | Single Channel LP-DDR2 |
| NEON Support | Yes | Yes | Yes | Yes |

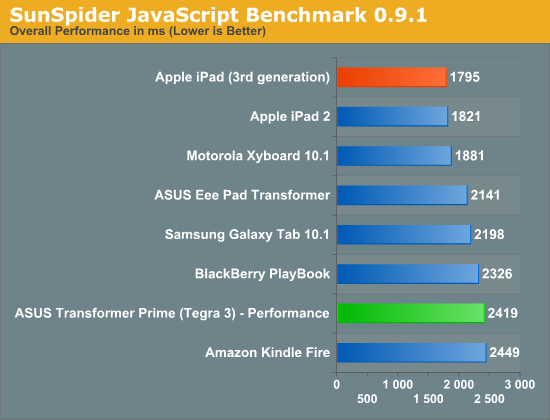

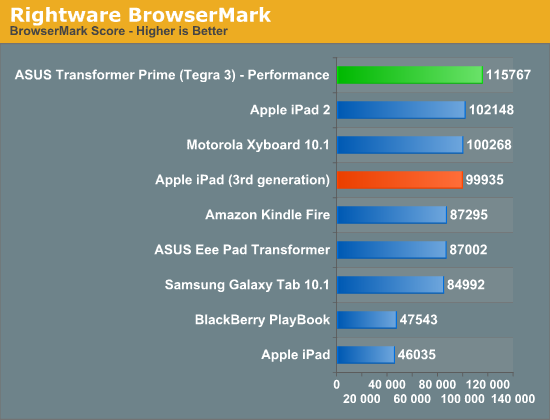

With no change on the CPU side, CPU performance remains identical to the iPad 2. This means everything from web page loading to non-gaming app interactions is no faster than it was last year:

JavaScript performance remains unchanged, as you can see from both the BrowserMark and SunSpider results above. Despite the CPU clock disadvantage compared to the Tegra 3, Apple does have the advantage of an extremely efficient and optimized software stack in iOS. Safari recently received an update that improved its JavaScript engine, which is why we see competitive performance here.

Geekbench has been updated with Android support, so we're able to do some cross platform comparisons here. Geekbench is a suite composed of completely synthetic, low-level tests—many of which can execute entirely out of the CPU's L1/L2 caches.

Geekbench 2

| | Apple iPad (3rd gen) | ASUS TF Prime | Apple iPad 2 | Motorola Xyboard 10.1 |
| --- | --- | --- | --- | --- |
| Integer Score | 688 | 1231 | 684 | 883 |
| Blowfish ST | 13.2 MB/s | 23.3 MB/s | 13.2 MB/s | 17.6 MB/s |
| Blowfish MT | 26.3 MB/s | 60.4 MB/s | 26.0 MB/s | - |
| Text Compress ST | 1.52 MB/s | 1.58 MB/s | 1.51 MB/s | 1.63 MB/s |
| Text Compress MT | 2.85 MB/s | 3.30 MB/s | 2.83 MB/s | 2.93 MB/s |
| Text Decompress ST | 2.08 MB/s | 2.00 MB/s | 2.09 MB/s | 2.11 MB/s |
| Text Decompress MT | 3.20 MB/s | 3.09 MB/s | 3.27 MB/s | 2.78 MB/s |
| Image Compress ST | 4.09 Mpixels/s | 5.56 Mpixels/s | 4.08 Mpixels/s | 5.42 Mpixels/s |
| Image Compress MT | 8.12 Mpixels/s | 21.4 Mpixels/s | 7.98 Mpixels/s | 10.5 Mpixels/s |
| Image Decompress ST | 6.70 Mpixels/s | 9.37 Mpixels/s | 6.67 Mpixels/s | 9.18 Mpixels/s |
| Image Decompress MT | 13.2 Mpixels/s | 20.3 Mpixels/s | 13.0 Mpixels/s | 17.9 Mpixels/s |
| Lua ST | 257.2 Knodes/s | 417.9 Knodes/s | 257.0 Knodes/s | 406.9 Knodes/s |
| Lua MT | 512.3 Knodes/s | 1500 Knodes/s | 505.6 Knodes/s | 810.0 Knodes/s |
| FP Score | 920 | 2223 | 915 | 1514 |
| Mandelbrot ST | 279.5 MFLOPS | 334.8 MFLOPS | 279.0 MFLOPS | 328.9 MFLOPS |
| Mandelbrot MT | 557.0 MFLOPS | 1290 MFLOPS | 550.3 MFLOPS | 648.0 MFLOPS |
| Dot Product ST | 221.9 MFLOPS | 477.5 MFLOPS | 221.5 MFLOPS | 455.2 MFLOPS |
| Dot Product MT | 438.9 MFLOPS | 1850 MFLOPS | 439.4 MFLOPS | 907.4 MFLOPS |
| LU Decomposition ST | 217.5 MFLOPS | 171.4 MFLOPS | 214.6 MFLOPS | 177.9 MFLOPS |
| LU Decomposition MT | 434.2 MFLOPS | 333.9 MFLOPS | 437.4 MFLOPS | 354.1 MFLOPS |
| Primality ST | 177.3 MFLOPS | 175.6 MFLOPS | 178.0 MFLOPS | 172.9 MFLOPS |
| Primality MT | 321.5 MFLOPS | 273.2 MFLOPS | 316.9 MFLOPS | 220.7 MFLOPS |
| Sharpen Image ST | 1.68 Mpixels/s | 3.87 Mpixels/s | 1.68 Mpixels/s | 3.86 Mpixels/s |
| Sharpen Image MT | 3.35 Mpixels/s | 9.85 Mpixels/s | 3.32 Mpixels/s | 7.52 Mpixels/s |
| Blur Image ST | 666.0 Kpixels/s | 1.62 Mpixels/s | 664.8 Kpixels/s | 1.58 Mpixels/s |
| Blur Image MT | 1.32 Mpixels/s | 6.25 Mpixels/s | 1.31 Mpixels/s | 3.06 Mpixels/s |
| Memory Score | 821 | 1079 | 829 | 1122 |
| Read Sequential ST | 312.0 MB/s | 249.0 MB/s | 347.1 MB/s | 364.1 MB/s |
| Write Sequential ST | 988.6 MB/s | 1.33 GB/s | 989.6 MB/s | 1.32 GB/s |
| Stdlib Allocate ST | 1.95 Mallocs/sec | 2.25 Mallocs/sec | 1.95 Mallocs/sec | 2.2 Mallocs/sec |
| Stdlib Write | 2.90 GB/s | 1.82 GB/s | 2.90 GB/s | 1.97 GB/s |
| Stdlib Copy | 554.6 MB/s | 1.82 GB/s | 564.5 MB/s | 1.91 GB/s |
| Stream Score | 331 | 288 | 335 | 318 |
| Stream Copy | 456.4 MB/s | 386.1 MB/s | 466.6 MB/s | 504 MB/s |
| Stream Scale | 380.2 MB/s | 351.9 MB/s | 371.1 MB/s | 478.5 MB/s |
| Stream Add | 608.8 MB/s | 446.8 MB/s | 654.0 MB/s | 420.1 MB/s |
| Stream Triad | 457.7 MB/s | 463.7 MB/s | 437.1 MB/s | 402.8 MB/s |

Almost entirely across the board NVIDIA delivers better CPU performance, either as a result of having more cores, having higher clocked cores or due to an inherent low-level Android advantage. Prioritizing GPU performance over a CPU upgrade is nothing new for Apple, and in the case of the A5X Apple could really only have one or the other—the new iPad gets hot enough and draws enough power as it is; Apple didn't need an even more power hungry set of CPU cores to make matters worse.
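One way to separate the core-count effect from the per-core effect is to look at how much each device gains when going from the single-threaded (ST) to the multi-threaded (MT) version of a test. The sketch below copies three rows from the Geekbench 2 table. The `results` structure and the `mt_scaling` helper are illustrative names, not part of Geekbench.

```python
# (single-threaded, multi-threaded) results copied from the Geekbench 2 table.
results = {
    "Lua (Knodes/s)": {
        "Apple iPad (3rd gen)": (257.2, 512.3),
        "ASUS TF Prime": (417.9, 1500.0),
    },
    "Mandelbrot (MFLOPS)": {
        "Apple iPad (3rd gen)": (279.5, 557.0),
        "ASUS TF Prime": (334.8, 1290.0),
    },
    "Dot Product (MFLOPS)": {
        "Apple iPad (3rd gen)": (221.9, 438.9),
        "ASUS TF Prime": (477.5, 1850.0),
    },
}


def mt_scaling(single_threaded: float, multi_threaded: float) -> float:
    """Speedup of the multi-threaded run over the single-threaded run."""
    return multi_threaded / single_threaded


for test_name, devices in results.items():
    for device, (st, mt) in devices.items():
        print(f"{test_name:22s} {device:22s} {mt_scaling(st, mt):.2f}x MT scaling")
```

In these three tests the iPad scales by just under 2x, as expected of two cores, while the Tegra 3 based TF Prime scales by roughly 3.6x to 3.9x. The TF Prime also leads in the single-threaded runs, so its advantage here comes from both faster cores and more of them.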

Despite the stagnation on the CPU side, most users would be hard pressed to call the iPad slow. Apple does a great job of prioritizing responsiveness of the UI thread, and the entire iOS UI is GPU accelerated, resulting in a very smooth overall experience. There's definitely a need for faster CPUs to enable some more interesting applications and usage models. I suspect Apple will fulfill that need with the A6 in the 4th generation iPad next year. That being said, in most applications I don't believe the iPad feels slow today.

I mention most applications because there are some iOS apps that are already pushing the limits of what's possible today.

iPhoto: A Case Study in Why More CPU Performance is Important

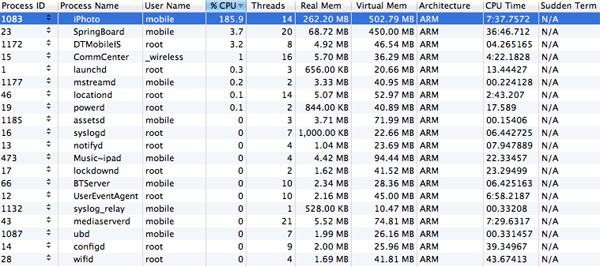

In our section on iPhoto we mentioned just how frustratingly slow the app can be when attempting to use many of its editing tools. In profiling the app it becomes abundantly clear why it's slow. Despite iPhoto being largely visual, it's extremely CPU bound. For whatever reason, simply having iPhoto open is enough to eat up an entire CPU core.

Use virtually any of the editing tools and you'll see 50 to 95% utilization of the remaining, unused core. The screenshot below is what I saw during use of the saturation brush:

The problem is not only that the two A9s aren't fast enough to deal with the needs of iPhoto, but also that anything that needs to get done in the background while you're using iPhoto is going to suffer as well. This is most obvious when you look at how long it takes for UI elements within iPhoto to respond when you're editing. It's very rare that we see an application behave like this on iOS. Even Infinity Blade only uses a single core most of the time, but iPhoto is a real exception.

I have to admit, I owe NVIDIA an apology here. While I still believe that quad-cores are mostly unnecessary for current smartphone/tablet workloads, iPhoto is a very tangible example of where Apple could have benefitted from having four CPU cores on A5X. Even an increase in CPU frequency would have helped. In this case, Apple had much bigger fish to fry: figuring out how to drive all 3.1M pixels on the Retina Display.

234 Comments


doobydoo - Saturday, March 31, 2012 - link

Lucian Armasu, you talk the most complete nonsense of anyone I've ever seen on here.

The performance is not worse, by any stretch of the imagination, and lets remember that the iPad 2 runs rings around the Android competition graphically anyway. If you want to run the same game at the same resolution, which wont look worse, at all (it would look exactly the same) it will run at 2x the FPS or more (up-scaled). Alternatively, for games for which it is beneficial, you can quadruple the quality and still run the game at perfectly acceptable FPS, since the game will be specifically designed to run on that device. Attempting anything like that quality on any other tablet is not only impossible by virtue of their inferior screens, they don't have the necessary GPU either.

In other words, you EITHER have a massive improvement in quality, or a massive improvement in performance, over a device (iPad 2) which was still the fastest performing GPU tablet even a year after it came out. The game developers get to make this decision - so they just got 2 great new options on a clearly much more powerful device. To describe that as not worth an upgrade is quite frankly ludicrous, you have zero credibility from here on in.

thejoelhansen - Wednesday, March 28, 2012 - link

Hey Anand,

First of all - thank you so much for the quality reviews and benchmarks. You've helped me build a number of servers and gaming rigs. :)

Secondly, I'm not sure I know what you mean when you state that "Prioritizing GPU performance over a CPU upgrade is nothing new for Apple..." (Page 11).

The only time I can remember Apple doing so is when keeping the 13" Macbook/ MBPs on C2Ds w/ Nvidia until eventually relying on Intel's (still) anemic "HD" graphics... Is that what you're referring to?

I seem to remember Apple constantly ignoring the GPU in favor of CPU upgrades, other than that one scenario... Could be mistaken. ;)

And again - thanks for the great reviews! :)

AnnonymousCoward - Wednesday, March 28, 2012 - link

"Retina Display" is a stupid name. Retinas sense light, which the display doesn't do.

xype - Thursday, March 29, 2012 - link

GeForce is a stupid name, as the video cards don’t have anything to do with influencing the gravitational acceleration of an object or anything close to that.

Retina Display sounds fancy and is lightyears ahead of "QXGA IPS TFT Panel" when talking about it. :P

Sabresiberian - Thursday, March 29, 2012 - link

While I agree that "Retina Display" is a cool enough sounding name, and that's pretty much all you need for a product, unless it's totally misleading, it's not an original use of the phrase. The term has been used in various science fiction stories and tends to mean a display device that projects an image directly onto the retina.

I always thought of "GeForce" as being an artist's licensed reference to the cards being a Force in Graphics, so the name had a certain logic behind it.

;)

seapeople - Tuesday, April 3, 2012 - link

Wait, so "Retina Display" gets you in a tizzy but "GeForce" makes perfect sense to you? You must have interesting interactions in everyday life.

ThreeDee912 - Thursday, March 29, 2012 - link

It's basically the same concept with Gorilla Glass or UltraSharp displays. It obviously doesn't mean that Corning makes glass out of gorillas, or that Dell will cut your eyes out and put them on display. It's just a marketing name.

SR81 - Saturday, March 31, 2012 - link

Funny I always believed the "Ge" was short for geometry. Whatever the case you can blame the name on the fans who came up with it.

tipoo - Thursday, March 29, 2012 - link

iPad is a stupid name. Pads collect blood from...Well, never mind. But since when are names always literal?

doobydoo - Saturday, March 31, 2012 - link

What would you call a display which had been optimised for use by retinas?

Retina display.

They aren't saying the display IS a retina, they are saying it is designed with retinas in mind.

The scientific point is very clear and as such I don't think the name is misleading at all. The point is the device has sufficient PPI at typical viewing distance that a person with typical eyesight wont be able to discern the individual pixels.

As it happens, strictly speaking, the retina itself is capable of discerning more pixels at typical viewing distance than the PPI of the new iPad, but the other elements of the human eye introduce loss in the quality of the image which is then ultimately sent on to the brain. While scientifically this is a distinction, to end consumers it is a distinction without a difference, so the name makes sense in my opinion.