AMD Radeon HD 7870 GHz Edition & Radeon HD 7850 Review: Rounding Out Southern Islands

by Ryan Smith on March 5, 2012 12:01 AM EST

Battlefield 3

Its popularity aside, Battlefield 3 may be the most interesting game in our benchmark suite for a single reason: it’s the first AAA DX10+ game. It’s been 5 years since the launch of the first DX10 GPUs, and 3 whole process node shrinks later we’re finally at the point where games use DX10’s functionality as a baseline rather than an addition. Not surprisingly, BF3 is one of the best-looking games in our suite, but as with past Battlefield games that beauty comes at a high performance cost.

For anyone keeping score, we reran all of our numbers after the recent Battlefield 3 Radeon HD 7000 series performance patch, and the results are virtually identical. While we don’t have official confirmation, we believe that DICE switched to a different FXAA codepath; however, this doesn’t seem to have affected the performance of the 7000 series, which is either a testament to AMD’s shader compiler or proof that the overhead from FXAA is very low in the first place.

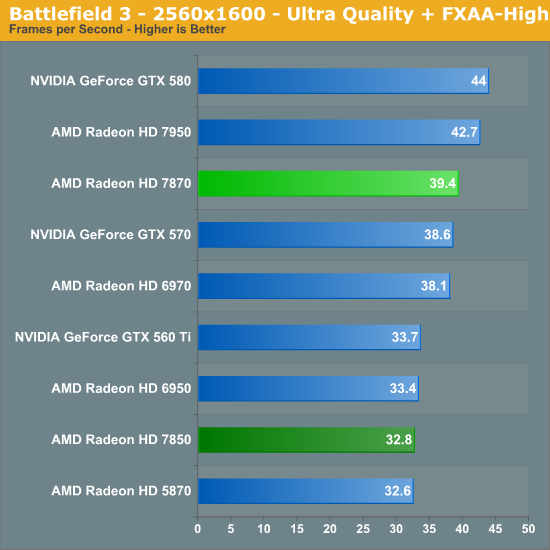

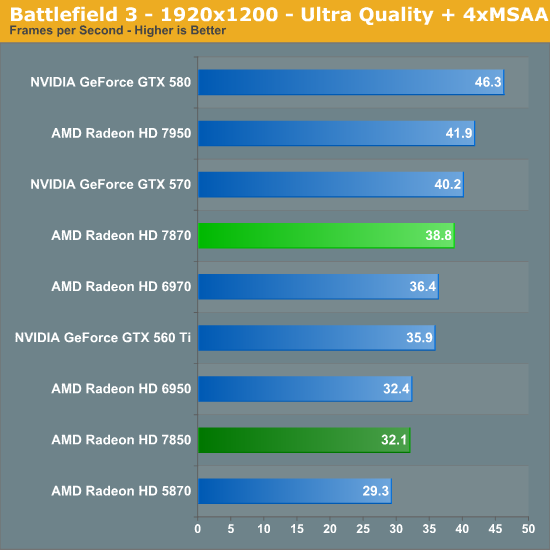

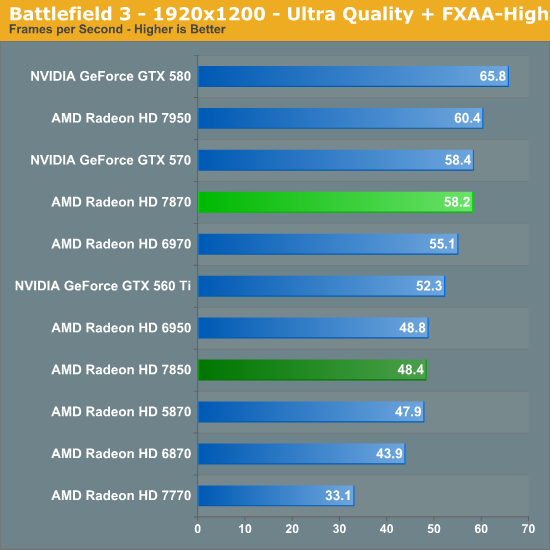

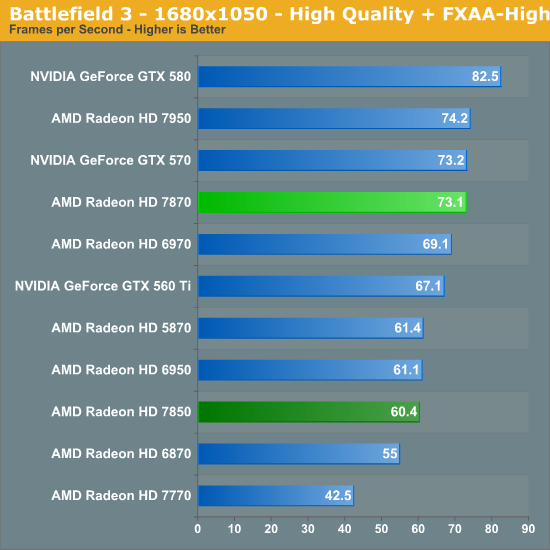

In any case, while AMD’s BF3 performance has improved since the 7970 launch, it’s still one of their weaker games. The 7870 can hang with the GTX 570 at 1920, but the 7850 once more falls behind the GTX 560 Ti. The 7850 in particular just isn’t doing very well here; it would be necessary to drop the settings or the resolution to get fluid gameplay out of BF3. At the same time, however, we do see some further evidence of the impact of having 2GB of VRAM, as both 7800 cards improve relative to NVIDIA’s cards at 2560.

Meanwhile, compared to the 6900 series, the 7800 series takes another small lead. At 19x12 without MSAA the 7870 leads the 6970 by 5%, while the 7850 is effectively tied with the 6950. It’s interesting to note, however, that relative to the 7950 the 7870 is doing very well here, trailing AMD’s faster card by less than 4%. With the same basic frontend and the same number of ROPs, the 7870’s 1GHz core clock can significantly eat into the 7950’s performance lead whenever the 7950 is primarily bound by either of those two rendering stages.
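The clock argument above can be illustrated with some simple peak-throughput arithmetic. This is a minimal sketch, not our benchmark methodology. It assumes AMD's reference specifications (32 ROPs on both cards, 1280 stream processors at 1000MHz for the 7870, 1792 stream processors at 800MHz for the 7950); those figures do not come from the charts above. It also ignores memory bandwidth, drivers, and everything else a real game exercises. The `Card` class and its method names are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Card:
    """Hypothetical container for a few reference GPU specs."""
    name: str
    rops: int      # render output units: pixels written per clock
    shaders: int   # stream processors
    core_mhz: int  # reference core clock in MHz

    def fill_rate_gpix(self) -> float:
        # Peak pixel fill rate in Gpixels/s: one pixel per ROP per clock.
        return self.rops * self.core_mhz / 1000.0

    def shader_tflops(self) -> float:
        # Peak single-precision rate in TFLOPS: one FMA (2 FLOPs) per shader per clock.
        return self.shaders * 2 * self.core_mhz / 1_000_000.0


# Assumed reference specifications, not measured values.
HD7870 = Card("Radeon HD 7870", rops=32, shaders=1280, core_mhz=1000)
HD7950 = Card("Radeon HD 7950", rops=32, shaders=1792, core_mhz=800)

if __name__ == "__main__":
    rop_ratio = HD7870.fill_rate_gpix() / HD7950.fill_rate_gpix()
    shader_ratio = HD7950.shader_tflops() / HD7870.shader_tflops()
    print(f"If ROP-bound, the 7870 leads by {rop_ratio - 1:.0%}")
    print(f"If shader-bound, the 7950 leads by {shader_ratio - 1:.0%}")
```

On paper the 7950 has about 12% more shader throughput, while the 7870 has about 25% more ROP throughput (32 vs. 25.6 Gpixels/s). A game that leans on the ROPs or frontend can therefore close most of the gap between the two cards, which is consistent with the small deficit we see in BF3.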

173 Comments


Kaboose - Monday, March 5, 2012

The 7870 beats the GTX 570 and is about even with the GTX 580, uses 150 watts less power at load, is quieter and cooler, and draws more than 23 watts less at idle. How is this a disappointment? The only disappointment I see is the price, which is the result of no competition from Nvidia.

Kiste - Monday, March 5, 2012

A new generation of GPUs used to give us a whole hell of a lot more performance at any given price point. The current AMD stuff does not, and that is a disappointment.

Case in point: you even have to talk these things up by basically saying "oh, well, at least they draw less power".

Kaboose - Monday, March 5, 2012

Dropping power consumption by over 50% is something of a gimmick? Dropping load temps by 14°C compared to the GTX 570 is not significant? 14°C is a fairly large difference; it gives more headroom for overclocking as well as a cooler system overall. When Nvidia releases Kepler and we have both companies on 28nm, then we can (hopefully) see some competition in price. In my opinion the 7870 at $325 would be a great card right now. Once Kepler is out, $285-300 I think would be nice. I agree it is overpriced right now, however.

If Nvidia releases Kepler and gives us a LOT more performance over last generation, then I will concede that the 7xxx series is a failure. However, from the way AMD is behaving it doesn't appear Kepler is going to do much in terms of raw performance either.

Kiste - Monday, March 5, 2012

While reducing power consumption might not be a gimmick, it is the result of the new process node and thus not particularly impressive in itself, especially when you more or less keep the performance the same as with the previous generation.

I'm still not impressed, sorry. Price/performance plain and simply sucks ass with these cards, barely beating the stuff that's on the market right now in that regard.

And even with the high-end SI cards there's barely any performance boost compared to what's already been on the market for months.

Sure, less power draw is nice. I won't complain about it, but if a brand new generation of GPUs comes out and I am not even one little bit compelled to upgrade from my aging, heavily overclocked GTX570, then something is clearly wrong here.

Exodite - Monday, March 5, 2012

We'll just have to wait and see what Nvidia provides when they finally decide to put competition on the market, won't we?

I'd happily agree that the 7900-series isn't as high-performing as I'd like, and that the 7700-series is too expensive.

From the reviews I've read so far, the 7800-series, the 7850 especially, is pretty much the perfect card ATM.

Low power, low noise, cool, 2GB VRAM and runs between a 560 Ti and 570 in performance.

It's definitely the card I'd recommend to anyone at this point, especially given that we'll see better coolers than AMD's atrocities once we get retail versions.

Iketh - Monday, March 5, 2012

You must live in a cold climate. You're happy with a heavily overclocked 570?? I live in FL, and that card increases my power bill by $30-$70 each month over my 6870 during 3 of the 4 seasons, and I'm talking from experience. Do you have any idea how hard an A/C has to work in a small 2-bedroom house to counter the blast of heat from an overclocked gaming rig??

If you live in a hot climate, test it for yourself.

You don't compare just the power draw of the cards themselves....

Kiste - Monday, March 5, 2012

I'm not quite sure if you're actually expecting a serious answer to that kind of hyperbolic drivel.

Jamahl - Monday, March 5, 2012

You really don't get it, do you? These cards REALLY DO heat up rooms. Where do you think the heat goes? Ever heard of the law of conservation of energy?

londiste - Monday, March 5, 2012

Oh damn, I need to get two of those, maybe they'll reduce my heating bill in winter :)

Kiste - Monday, March 5, 2012

Writing "really do" in capital letters doesn't make it any less ridiculous a statement.

My whole PC (GPU, OCed CPU, 4 HDDs) draws slightly more than 300W under typical gaming loads. You can't "heat up a room" with that, much less with just the GPU.