The Xeon E5-2600: Dual Sandy Bridge for Servers

by Johan De Gelas on March 6, 2012 9:27 AM EST. Posted in IT Computing, Virtualization, Xeon, Opteron, Cloud Computing

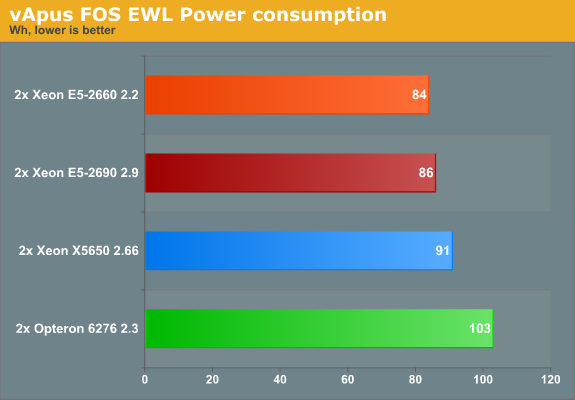

Measuring Real-World Power Consumption

The Equal Workload (EWL) version of vApus FOS is very similar to our previous vApus Mark II "Real-world Power" test. To create a real-world "equal workload" scenario, we throttle the number of users in each VM to a point where you typically get somewhere between 20% and 80% CPU load on a modern dual-CPU server. The number of requests is the same for each system, hence "equal workload". The CPU load is typically around 30-50%, with peaks up to 65% (for more info see here). At the end of the test, the load drops to a low 10%, which is ideal for the machine to boost to higher CPU clocks (Turbo) and race to idle.

We used the "Balanced" power policy and enabled C-states, as the current ESXi settings make poor use of the C6 capabilities of the latest Opterons and Xeons.

First let's check out the response times.

vApus FOS response times (ms)

| CPU | PhpBB1 | PhpBB2 | MySQL OLAP | Zimbra |
|---|---|---|---|---|
| AMD Opteron 6276 | 101 | 30 | 3.8 | 41 |
| AMD Opteron 6174 | 118 | 41 | 3.8 | 45 |
| Intel Xeon X5650 | 45 | 18 | 2.4 | 29 |
| Intel Xeon E5-2660 | 41 | 18 | 2.5 | 25 |
| Intel Xeon E5-2690 | 27 | 14 | 2.3 | 23 |
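As a rough illustration of how to read these numbers, the following Python sketch normalizes every response time to one baseline CPU. The values are copied from the response time table; the `relative_to` helper and the choice of the Xeon E5-2690 as baseline are assumptions made for illustration and are not part of the vApus tooling.

```python
# Response times in milliseconds, copied from the vApus FOS table.
response_ms = {
    "AMD Opteron 6276":   {"PhpBB1": 101, "PhpBB2": 30, "MySQL OLAP": 3.8, "Zimbra": 41},
    "AMD Opteron 6174":   {"PhpBB1": 118, "PhpBB2": 41, "MySQL OLAP": 3.8, "Zimbra": 45},
    "Intel Xeon X5650":   {"PhpBB1": 45,  "PhpBB2": 18, "MySQL OLAP": 2.4, "Zimbra": 29},
    "Intel Xeon E5-2660": {"PhpBB1": 41,  "PhpBB2": 18, "MySQL OLAP": 2.5, "Zimbra": 25},
    "Intel Xeon E5-2690": {"PhpBB1": 27,  "PhpBB2": 14, "MySQL OLAP": 2.3, "Zimbra": 23},
}


def relative_to(baseline, table=response_ms):
    """Divide each CPU's response time by the baseline CPU's, per workload.

    A ratio above 1.0 means the CPU responds more slowly than the baseline.
    (Hypothetical helper, for illustration only.)
    """
    base = table[baseline]
    return {
        cpu: {workload: ms / base[workload] for workload, ms in times.items()}
        for cpu, times in table.items()
    }


if __name__ == "__main__":
    for cpu, row in relative_to("Intel Xeon E5-2690").items():
        cells = "  ".join(f"{workload}: {ratio:.2f}x" for workload, ratio in row.items())
        print(f"{cpu:<20} {cells}")
```

For example, the Opteron 6276 PhpBB1 entry works out to 101 / 27, or roughly 3.7 times the response time of the Xeon E5-2690 under the same workload.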

It's worth noting that enabling the C-states in ESXi improves the performance/watt ratio of the Opteron 6276 quite a bit. Not only is the power consumption lower (see below), but enabling C6 allows higher turbo clocks, which in turn benefits response times. Compared to our previous test (standard out of the box "Balanced") all response times improve by 10% except for MySQL (which is already very low).

Even with that improvement, however, it is not enough to beat the Xeon E5. The Xeon E5 delivers extremely low response times...

... while sipping very little power, despite being run inside a feature rich server. Kudos to Intel for a job very well done.

81 Comments


JohanAnandtech - Wednesday, March 7, 2012 - link

Argh. You are absolutely right. I reversed all divisions. I am fixing this as we type. Luckily this does not alter the conclusion: LS-DYNA does not scale with clockspeed very well.

alpha754293 - Wednesday, March 7, 2012 - link

I think that I might have an answer for you as to why it might not scale well with clock speed.

When you start a multiprocessor LS-DYNA run, it goes through a stage where it decomposes the problem (through a process called recursive coordinate bisection (RCB)).

This decomposition phase is done every time you start the run, and it only runs on a single processor/core. So, suppose that you have a dual-socket server where the processors say...are hitting 4 GHz. That can potentially be faster than say if you had a four-socket server, but each of the processors are only 2.4 GHz.

In the first case, you have a small number of really fast cores (and so it will decompose the domain very quickly), whereas in the latter, you have a large number of much slower cores, so the decomposition will happen slowly, but it MIGHT be able to solve the rest of it slightly faster (to make up for the difference) just because you're throwing more hardware at it.

Here's where you can do a little more experimenting if you like.

Using the pfile (command line option/flag 'p=file'), not only can you control the decomposition method, but you can also tell it to write the decomposition to a file.

So had you had more time, what I would have probably done is written out the decompositions for all of the various permutations you're going to be running. (n-cores, m-number of files.)

When you start the run, instead of having to decompose the problem over and over again each time it starts, you just use the decomposition that has already been done (once). That way, you would be testing PURELY the solving part of the run, rather than from beginning to end. That isn't to say that the results you've got are bad (it's good data), but it should help to take more variables out of the equation when it comes to why it doesn't scale well with clock speed. (It should.)
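A minimal sketch of the trade-off described in the comment above, assuming the decomposition runs on a single core and the solve phase scales perfectly across all cores. The work amounts, core counts, and the `run_time` helper are hypothetical values chosen for illustration; they are not LS-DYNA measurements.

```python
def run_time(serial_work, parallel_work, cores, clock_ghz):
    """Return (decomposition_time, solve_time) in arbitrary units.

    Assumptions (illustrative only): the decomposition uses one core,
    the solve phase scales perfectly with core count, and both phases
    scale linearly with clock speed.
    """
    decomposition = serial_work / clock_ghz
    solve = parallel_work / (cores * clock_ghz)
    return decomposition, solve


# Hypothetical workload, in arbitrary "GHz-seconds" of work.
SERIAL_WORK = 100.0
PARALLEL_WORK = 10_000.0

# Hypothetical systems: the clock speeds follow the comment,
# the core counts are assumed.
systems = {
    "2 sockets, 16 cores @ 4.0 GHz": (16, 4.0),
    "4 sockets, 32 cores @ 2.4 GHz": (32, 2.4),
}

if __name__ == "__main__":
    for name, (cores, clock) in systems.items():
        decomp, solve = run_time(SERIAL_WORK, PARALLEL_WORK, cores, clock)
        print(f"{name}: decomposition {decomp:.1f}, solve {solve:.1f}, "
              f"total {decomp + solve:.1f}")
```

With these made-up inputs, the 4 GHz system finishes the decomposition sooner while the 32-core system finishes the solve phase sooner. Reusing a stored decomposition corresponds to dropping the first term, so that only the solve time is compared.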

IntelUser2000 - Tuesday, March 6, 2012 - link

Please refrain from creating flamebait in your posts. Your post is almost like spam, almost no useful information is there. If you are going to love one side, don't hate the other.

Alexko - Tuesday, March 6, 2012 - link

It's not "like spam", it's just plain spam at this point. A little ban + mass delete combo seems to be in order, just to clean up this thread, and probably others.

ultimav - Wednesday, March 7, 2012 - link

My troll meter is reading off the charts with this guy. Reading between the lines, he's actually a hardcore AMD fan trying to come across as the Intel version of Sharikou to paint Intel fans in a bad light. Pretty obvious actually.

JohanAnandtech - Wednesday, March 7, 2012 - link

We had to mass delete his posts as they indeed did not contain any useful info and were full of insults. The signal to noise ratio has been good the last years, so we must keep it that way.

Inteluser2000, Alexko, Ultimav, tipoo: thx for helping to keep the tone civil here. Appreciate it.

- Johan.

tipoo - Wednesday, March 7, 2012 - link

And thank you for removing that stuff.

tipoo - Tuesday, March 6, 2012 - link

We get it. Don't spam the whole place with the same post.

tipoo - Tuesday, March 6, 2012 - link

No, he's just a rational person. I don't care which company you like; if you say the same thing 10 times in one article, someone's sure to get annoyed, and with justification.

MySchizoBuddy - Tuesday, March 6, 2012 - link

I'm again requesting that when you do the benchmarks, please include a performance-per-watt metric along with stress testing by running Folding@home for 48 hours straight.