The Xeon E5-2600: Dual Sandy Bridge for Servers

by Johan De Gelas on March 6, 2012 9:27 AM EST - Posted in

- IT Computing

- Virtualization

- Xeon

- Opteron

- Cloud Computing

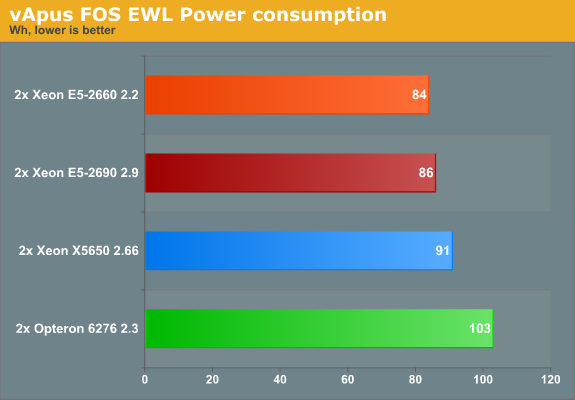

Measuring Real-World Power Consumption

The Equal Workload (EWL) version of vApus FOS is very similar to our previous vApus Mark II "Real-world Power" test. To create a real-world "equal workload" scenario, we throttle the number of users in each VM to a point where you typically get somewhere between 20% and 80% CPU load on a modern dual CPU server. The number of requests is the same for each system, hence "equal workload". The CPU load is typically around 30-50%, with peaks up to 65%. At the end of the test, the load drops to a low 10%, which is ideal for the machine to boost to higher CPU clocks (Turbo) and race to idle.

We used the "Balanced" power policy and enabled C-states, as the current ESXi settings make poor use of the C6 capabilities of the latest Opterons and Xeons.

First let's check out the response times.

vApus FOS Response Times (ms)

| CPU | PhpBB1 | PhpBB2 | MySQL OLAP | Zimbra |
| --- | --- | --- | --- | --- |
| AMD Opteron 6276 | 101 | 30 | 3.8 | 41 |
| AMD Opteron 6174 | 118 | 41 | 3.8 | 45 |
| Intel Xeon X5650 | 45 | 18 | 2.4 | 29 |
| Intel Xeon E5-2660 | 41 | 18 | 2.5 | 25 |
| Intel Xeon E5-2690 | 27 | 14 | 2.3 | 23 |

It's worth noting that enabling the C-states in ESXi improves the performance/watt ratio of the Opteron 6276 quite a bit. Not only is the power consumption lower (see below), but enabling C6 allows higher turbo clocks, which in turn benefits response times. Compared to our previous test (standard out of the box "Balanced") all response times improve by 10% except for MySQL (which is already very low).

Even that improvement, however, is not enough to beat the Xeon E5. The Xeon E5 delivers extremely low response times...

... while sipping very little power, despite being run inside a feature rich server. Kudos to Intel for a job very well done.

81 Comments


alpha754293 - Tuesday, March 6, 2012 - link

Thanks for running those. Are those results with HTT or without?

If you can write a little more about the run settings that you used (with/without HTT, number of processes), that would be great.

Very interesting results though.

It would have been interesting to see what the power consumption and total energy consumption numbers would be for these runs (to see if having the faster processor would really be that beneficial).

Thanks!

alpha754293 - Tuesday, March 6, 2012 - link

I should work with you more to get you running some Fluent benchmarks as well. But, yes, HPC simulations DO take a VERY long time. And we beat the crap out of our systems on a regular basis.

jhh - Tuesday, March 6, 2012 - link

This is the most interesting part to me, as someone interested in high network I/O. With the packets going directly into cache, as long as they get processed before they get pushed out by subsequent packets, the packet processing code doesn't have to stall waiting for the packet to be pulled from RAM into cache. Potentially, the packet never needs to be written to RAM at all, avoiding using that memory capacity. In the other direction, web servers and the like can produce their output without ever putting the results into RAM.

meloz - Tuesday, March 6, 2012 - link

I wonder if this Data Direct I/O Technology has any relevance to audio engineering? I know that latency is a big deal for those guys. In the past I have read some discussion on latency at gearslutz, but the exact science is beyond me. Perhaps future versions of protools and other professional DAWs will make use of Data Direct I/O Technology.

Samus - Tuesday, March 6, 2012 - link

wow. 20MB of on-die cache. that's ridiculous.

PwnBroker2 - Tuesday, March 6, 2012 - link

don't know about the others but not ATT. still using AMD even on the new workstation upgrades but then again IBM does our IT support, so who knows for the future. the new xeon processors are beasts anyways, just wondering what the server price point will be.

tipoo - Tuesday, March 6, 2012 - link

"AMD's engineers probably the dumbest engineers in the world because any data in AMD processor is not processed but only transferred to the chipset."...What?

tipoo - Tuesday, March 6, 2012 - link

Think you've repeated that enough for one article?

tipoo - Wednesday, March 7, 2012 - link

Like the Ivy bridge comments, just for future readers note that this was a reply to a deleted troll and no longer applies.

IntelUser2000 - Tuesday, March 6, 2012 - link

Johan, you got the percentage numbers for LS-Dyna wrong. You said for the first one: the Xeon E5-2660 offers 20% better performance, the 2690 is 31% faster. It is interesting to note that LS-Dyna does not scale well with clockspeed: the 32% higher clockspeed of the Xeon E5-2690 results in only a 14% speed increase.

E5-2690 vs Opteron 6276: +46% (621/426)

E5-2660 vs Opteron 6276: +26% (621/492)

E5-2690 vs E5-2660: +15% (492/426)

In the conclusion you said the E5 2660 is "56% faster than X5650, 21% faster than 6276, and 6C is 8% faster than 6276"

Actually...

LS Dyna Neon:

E5-2660 vs X5650: +77% (872/492)

E5-2660 vs 6276: +26% (621/492)

E5-2660 6C vs 6276: +9% (621/570)

LS Dyna TVC:

E5-2660 vs X5650: +78% (10833/6072)

E5-2660 vs 6276: +35% (8181/6072)

E5-2660 6C vs 6276: +13% (8181/7228)

It's funny how you got the % numbers for your conclusions. It's merely the ratio of lower number vs higher number multiplied by 100.
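
The percentage arithmetic in the comment above can be checked with a short sketch. It assumes the quoted figures (621, 492, 426) are LS-Dyna run times where lower is better, which the comment implies but does not state outright. The function names are hypothetical and chosen only for illustration.

```python
def speedup_percent(slower_time: float, faster_time: float) -> float:
    """Percent by which the faster system outperforms the slower one.

    Assumes both arguments are run times, so a lower value is better.
    """
    return (slower_time / faster_time - 1.0) * 100.0


def time_reduction_percent(slower_time: float, faster_time: float) -> float:
    """Percent less time the faster system needs (not the same as 'percent faster')."""
    return (1.0 - faster_time / slower_time) * 100.0


# Figures quoted in the comment; assumed to be run times (lower is better).
opteron_6276, e5_2660, e5_2690 = 621, 492, 426

print(round(speedup_percent(opteron_6276, e5_2690)))         # 46
print(round(speedup_percent(opteron_6276, e5_2660)))         # 26
print(round(speedup_percent(e5_2660, e5_2690)))              # 15
print(round(time_reduction_percent(opteron_6276, e5_2660)))  # 21
print(round(time_reduction_percent(opteron_6276, e5_2690)))  # 31
```

The first three values match the +46%, +26% and +15% given in the comment. The last two land close to the 20% and 31% quoted from the article, which suggests (as an inference, not something the source confirms) that those figures describe a reduction in run time rather than a speedup.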