A Look at Enterprise Performance of Intel SSDs

by Anand Lal Shimpi on February 8, 2012 6:36 PM EST

Posted in: Storage, IT Computing, SSDs, Intel

Measuring How Long Your Intel SSD Will Last

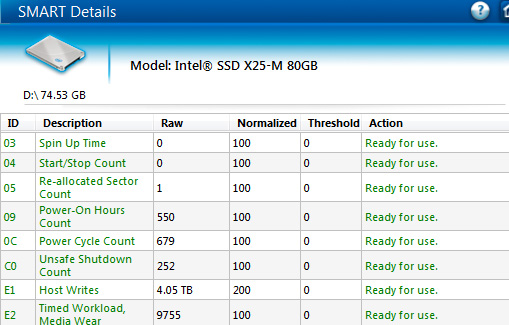

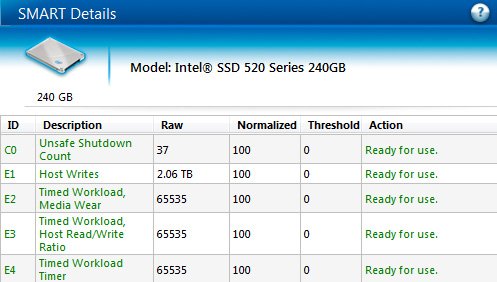

Earlier in this review I talked about Intel's SMART attributes that allow you to accurately measure write amplification for a given workload. If you fire up Intel's SSD Toolbox or any tool that allows you to monitor SMART attributes you'll notice a few fields of interest. I mentioned these back in our 710 review, but the most important for our investigations here are E2 (226) and E4 (228):

The raw value of attribute E2, when divided by 1024, gives you an accurate report of the amount of wear on your NAND, as a percentage, since the last timer reset. In this case we're looking at an Intel X25-M G2 (the earliest drive to support E2 reporting) whose E2 value is at 9755. Dividing that by 1024 gives us 9.526% (the field is only accurate to three decimal places).
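A minimal sketch of that arithmetic, using the X25-M G2 value quoted above. The function name `wear_percent` is a hypothetical helper and is not part of any Intel or smartmontools API.

```python
def wear_percent(e2_raw: int) -> float:
    """Convert the raw E2 (226) value into percent NAND wear since the last timer reset."""
    return e2_raw / 1024


# Raw E2 value read from the X25-M G2 discussed in the text.
print(f"{wear_percent(9755):.3f}%")  # prints 9.526%
```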

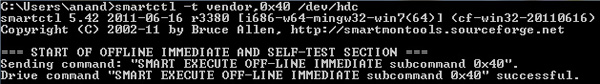

I mentioned that this data is only accurate since the last timer reset; that's where the value stored in E4 comes into play. By issuing a SMART EXECUTE OFFLINE IMMEDIATE subcommand 40h to the drive you'll reset the timer in E4 and the data stored in E2 and E3. The data in E2/E3 will then reflect the wear incurred since you reset the timer, giving you a great way of measuring write amplification for a specific workload.

How do you reset the E4 timer? I've always used smartmontools to do it. Download the appropriate binary from SourceForge and execute the following command:

smartctl -t vendor,0x40 /dev/hdX

where hdX is the drive whose counter you're trying to reset (e.g. hda, hdb, hdc).

Doing so will reset the E4 counter to 65535. The counter then begins at 0 and counts up in minutes. After the first 60 minutes you'll get valid data in E2/E3. While E2 gives you an indication of how much wear your workload puts on the NAND, E3 gives you the percentage of IO operations that are reads since you reset E4. E3 is particularly useful for determining at a quick glance how write-heavy your workload is. Remember, it's the process of programming/erasing a NAND cell that is most destructive; read-heavy workloads are generally fine on consumer-grade drives.
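As a rough sketch of how you might read the three workload attributes back, the snippet below parses text laid out like the attribute table that `smartctl -A` prints. The sample rows and their values are made up for illustration, and it assumes the raw E3 value is already a whole-number percentage. The helper names are hypothetical.

```python
# Illustrative sample only: the column layout follows `smartctl -A`,
# but the raw values here are invented for the example.
SAMPLE = """\
ID# ATTRIBUTE_NAME          FLAG     VALUE WORST THRESH TYPE      UPDATED  WHEN_FAILED RAW_VALUE
226 Workld_Media_Wear_Indic 0x0032   100   100   000    Old_age   Always       -       41
227 Workld_Host_Reads_Perc  0x0032   100   100   000    Old_age   Always       -       35
228 Workload_Minutes        0x0032   100   100   000    Old_age   Always       -       1440
"""


def parse_raw_values(text):
    """Map attribute ID -> raw value for every attribute row in the table."""
    raw = {}
    for line in text.splitlines():
        fields = line.split()
        # Attribute rows start with a numeric ID; RAW_VALUE is the 10th column.
        if len(fields) >= 10 and fields[0].isdigit():
            raw[int(fields[0])] = int(fields[9])
    return raw


def workload_stats(raw):
    """Return E2/E3/E4 readings, or None while the data is not yet valid."""
    minutes = raw[228]
    # 65535 is what E4 shows right after a reset; E2/E3 need 60 minutes.
    if minutes == 65535 or minutes < 60:
        return None
    return {
        "wear_percent": raw[226] / 1024,  # E2: percent of NAND wear
        "read_percent": raw[227],         # E3: assumed to be percent of IOs that are reads
        "minutes": minutes,               # E4: minutes since the reset
    }


print(workload_stats(parse_raw_values(SAMPLE)))
```

On a real system the text would come from running smartctl against the drive rather than from a hard-coded string.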

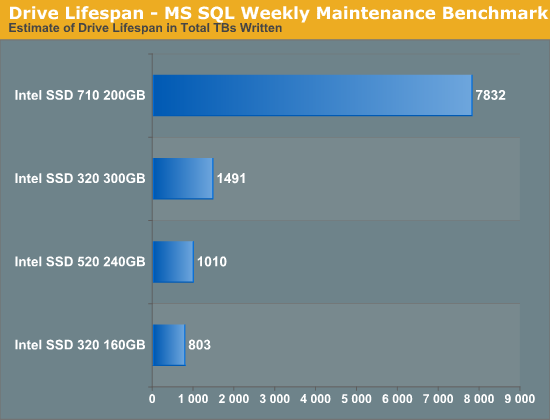

I reset the workload timer (E4) on all of the Intel SSDs that supported it and ran a loop of our MS SQL Weekly Maintenance benchmark that resulted in 320GB of writes to the drive. I then measured wear on the NAND (E2) and used that to calculate how many TB we could write to these drives, using this workload, before we'd theoretically wear out their NAND. The results are below:

There are a few interesting takeaways from this data. For starters, Intel's SSD 710 uses high-endurance MLC (aka eMLC, MLC-HET), which is good for a significant increase in p/e cycles. With tens of thousands of p/e cycles per NAND cell, the Intel SSD 710 offers nearly an order of magnitude better endurance than the Intel SSD 320. Part of this endurance advantage is delivered through an incredible amount of spare area. Remember that although the 710 featured here is a 200GB drive, it actually has 320GB of NAND on board. If you set aside a similar amount of spare area on the 320 you'd get a measurable increase in endurance. We actually see an example of that if you look at the gains the 300GB SSD 320 offers over the 160GB drive. Both drives are subjected to the same-sized workload (just under 60GB), but the 300GB drive has much more unused area to use for block recycling. The result is an 85% increase in estimated drive lifespan for an 87.5% increase in drive capacity.
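The spare area and capacity figures in that paragraph are simple ratios. Here is a small sketch of the arithmetic, taking the quoted capacities at face value; the helper names are hypothetical.

```python
def spare_fraction(raw_nand_gb: float, user_gb: float) -> float:
    """Share of the on-board NAND that is held back as spare area."""
    return (raw_nand_gb - user_gb) / raw_nand_gb


def percent_increase(old: float, new: float) -> float:
    """Percentage increase going from old to new."""
    return (new / old - 1) * 100


# 200GB SSD 710 with 320GB of NAND on board.
print(f"{spare_fraction(320, 200):.1%} of the 710's NAND is spare area")  # 37.5%

# 160GB SSD 320 versus 300GB SSD 320.
print(f"{percent_increase(160, 300):.1f}% more capacity")  # 87.5%
```

Expressed relative to user capacity instead of raw NAND, the same 120GB of spare area works out to 60% over-provisioning.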

What does this tell us about how long these drives would last? If all they were doing was running this workload once a week, even the 160GB SSD 320 would be just fine. In reality our SQL server does far more than this, but even then we'd likely be OK with a consumer drive. Note that the 800TB of writes for the 160GB 320 is well above the 15TB Intel rates the drive for. The difference here is that Intel is calculating lifespan based on 4KB random writes with a very high write amplification. If you work backwards from these numbers for the MLC drives you'll end up with around 4000 - 5000 p/e cycles. In reality, even 25nm Intel NAND lasts longer than what it's rated for, so what you're seeing is that this workload has a very low write amplification on these drives thanks to its access pattern and small size relative to the capacity of these drives.
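The back-of-the-envelope reasoning above can be sketched in a few lines. This is only an illustration under stated assumptions: wear is extrapolated linearly, writes are spread evenly across the NAND, decimal units are used (1TB = 1000GB), and the raw E2 value of 41 is a hypothetical reading picked so the projection lands near the roughly 800TB figure for the 160GB SSD 320. All function names are made up for this example.

```python
def projected_writes_tb(gb_written: float, e2_raw: int) -> float:
    """Extrapolate total host writes (TB) until the E2 wear figure reaches 100%."""
    if e2_raw <= 0:
        raise ValueError("E2 must be positive to extrapolate")
    wear_fraction = e2_raw / 1024 / 100  # E2 / 1024 is a percentage
    return gb_written / wear_fraction / 1000


def implied_pe_cycles(total_writes_tb: float, capacity_gb: float,
                      write_amplification: float = 1.0) -> float:
    """P/E cycles per cell implied by a total-writes figure."""
    return total_writes_tb * 1000 * write_amplification / capacity_gb


def implied_write_amplification(pe_cycles: float, capacity_gb: float,
                                rated_writes_tb: float) -> float:
    """Write amplification that would make a rated-writes figure use up pe_cycles."""
    return pe_cycles * capacity_gb / (rated_writes_tb * 1000)


# Hypothetical E2 reading after the 320GB benchmark loop.
print(f"{projected_writes_tb(320, 41):.1f} TB projected")  # 799.2 TB

# Working backwards: 800TB on a 160GB drive with write amplification near 1.
print(f"{implied_pe_cycles(800, 160):.0f} p/e cycles")  # 5000

# What a 15TB rating would imply if the NAND really lasts ~5000 cycles.
print(f"{implied_write_amplification(5000, 160, 15):.1f}x write amplification")  # 53.3x
```

The last number is not an Intel specification. It only shows how large the assumed write amplification has to be for a 15TB rating and a projection of about 800TB to describe the same NAND.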

Every workload is going to be different but what may have been a brutal consumer of IOs in the past may still be right at home on consumer SSDs in your server.

55 Comments

ssj4Gogeta - Thursday, February 9, 2012

I think what you're forgetting here is that the 90% or 100% figures are _including_ the extra work that an SSD has to do for writing on already used blocks. That doesn't mean the data is incompressible; it means it's quite compressible.

For example, if the SF drive compresses the data to 0.3x its original size, then including all the extra work that has to be done, the final value comes out to be 0.9x. The other drives would directly write the data and have an amplification of 3x.

jwilliams4200 - Thursday, February 9, 2012

No, not at all. The other SSDs have a WA of about 1.1 when writing the same data.

Anand Lal Shimpi - Thursday, February 9, 2012

Haha yes I do :) These SSDs were all deployed in actual systems, replacing other SSDs or hard drives. At the end of the study we looked at write amplification. The shortest use case was around 2 months I believe and the longest was 8 months of use.

This wasn't simulated, these were actual primary use systems that we monitored over months.

Take care,

Anand

Ryan Smith - Thursday, February 9, 2012

Indeed. I was the "winner" with the highest write amplification due to the fact that I had large compressed archives regularly residing on my Vertex 2, and even then as Anand notes the write amplification was below 1.0.

jwilliams4200 - Thursday, February 9, 2012

And still you dodge my question.

If the Sandforce controller can achieve decent compression, why did it not do better than the Intel 320 in the endurance test in this article?

I think the answer is that your "8 month study" is invalid.

Anand Lal Shimpi - Thursday, February 9, 2012

SandForce can achieve decent compression, but not across all workloads. Our study was limited to client workloads as these were all primary use desktops/notebooks. The benchmarks here were derived from enterprise workloads and some tasks on our own servers.

It's all workload dependent, but to say that SandForce is incapable of low write amplification in any environment is incorrect.

Take care,

Anand

jwilliams4200 - Friday, February 10, 2012

If we look at the three "workloads" discussed in this thread:

(1) anandtech "enterprise workload"

(2) xtremesystems.org client-workload obtained by using data actually found on user drives and writing it (mostly sequential) to a Sandforce 2281 SSD

(3) anandtech "8 month" client study

we find that two out of three show that Sandforce cannot achieve decent compression on realistic data.

I think you should repeat your "client workload" tests and be more careful with tracking exactly what is being written. I suspect there was a flaw in your study. Either benchmarks were run that you were not aware of, or else it could be something like frequent hibernation where a lot of empty RAM is being dumped to SSD. I can believe Sandforce can achieve a decent compression ratio on unused RAM! :)

RGrizzzz - Wednesday, February 8, 2012

What the heck is your site doing where you're writing that much data? Does that include the Anandtech forums, or just Anandtech.com?

extide - Wednesday, February 8, 2012

Probably logs requests and browser info and whatnot.

Stuka87 - Wednesday, February 8, 2012

That most likely includes the CMS and a large amount of the content, the Ad system, our users accounts for commenting here, all the Bench data, etc.

The forums would use their own vBulletin database. But most likely run on the same servers.