The Radeon HD 7970 Reprise: PCIe Bandwidth, Overclocking, & The State Of Anti-Aliasing

by Ryan Smith on January 27, 2012 4:30 PM EST. Posted in GPUs, AMD, Radeon, Radeon HD 7000

Improving the State of Anti-Aliasing: Leo Makes MSAA Happen, SSAA Takes It Up A Notch

As you may recall from our initial review of the 7970, I expressed my bewilderment that AMD had not implemented Adaptive Anti-Aliasing (AAA) and Super Sample Anti-Aliasing (SSAA) for DX10+ in the Radeon HD 7000 series. There has never been a long-term AA mode gap in recent history, and it was AMD who made DX9 SSAA popular again in the first place when they made it a front-and-center feature of the Radeon HD 5000 series. AMD’s response at the time was that they preferred to find a way to have games implement these AA modes natively, which is not an unreasonable position, but an unfortunate one given how hard it is just to get game developers to implement MSAA these days.

So it was with a great deal of surprise and glee on our part that when AMD released their first driver update last week, they added an early version of AAA and SSAA support for DX10+ games. Given their earlier response this was unexpected, and in retrospect either AMD was already working on this at the time (and not ready to announce it), or they’ve managed to do a lot of work in a very short period of time.

As it stands, AMD’s DX10+ AAA/SSAA implementation is still a work in progress, and it will only work on games that natively support MSAA. Given the way the DX10+ rendering pipeline works, this is a practical compromise as it’s generally much easier to implement SSAA after the legwork has already been done to get MSAA working.

We haven’t had a lot of time to play with the new drivers, but in our testing AAA/SSAA do indeed work. A quick check with Crysis: Warhead finds that AMD’s DX10+ SSAA implementation is correctly resolving transparency aliasing and shader aliasing as it should be.

Crysis: Warhead SSAA: Transparent Texture Anti-Aliasing

Crysis: Warhead SSAA: Shader Anti-Aliasing
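To illustrate why SSAA can resolve transparency and shader aliasing where MSAA cannot, here is a minimal sketch in Python. It is not AMD’s driver implementation; the sample positions, the `shade` function, and the stripe pattern are all assumptions chosen for illustration. The sketch models only the interior of a fully covered pixel: MSAA runs the shader once per pixel and copies the result to every sample, while SSAA runs the shader at every sample.

```python
# Four sample positions inside a pixel (illustrative pattern, not a real GPU's layout).
SAMPLE_OFFSETS = [(0.375, 0.125), (0.875, 0.375), (0.125, 0.625), (0.625, 0.875)]


def shade(x, y):
    """Hypothetical shader: hard-edged vertical stripes, two per pixel,
    standing in for an alpha-tested texture or a high-frequency shader."""
    return 1.0 if (x * 2) % 1.0 < 0.5 else 0.0


def msaa_pixel(px, py):
    """MSAA on a fully covered pixel: shade once at the pixel center,
    then replicate that color to all samples before averaging."""
    color = shade(px + 0.5, py + 0.5)
    samples = [color for _ in SAMPLE_OFFSETS]
    return sum(samples) / len(samples)


def ssaa_pixel(px, py):
    """SSAA: shade at every sample position, then average."""
    samples = [shade(px + dx, py + dy) for dx, dy in SAMPLE_OFFSETS]
    return sum(samples) / len(samples)


if __name__ == "__main__":
    print("MSAA:", msaa_pixel(0, 0))
    print("SSAA:", ssaa_pixel(0, 0))
```

In this toy setup the MSAA result depends entirely on where the single shading point happens to land in the stripe pattern, while the SSAA result averages over the detail inside the pixel. Geometry edges, which MSAA does handle through per-sample coverage, are deliberately left out of the sketch.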

Of course, if DX10+ SSAA only works with games that already implement MSAA, what does this mean for future games? As we alluded to earlier, built-in MSAA support is not quite prevalent across modern games, and the DX10+ pipeline makes forcing it from the driver side a tricky endeavor at best. One of the biggest technical culprits (as opposed to quickly ported console games) is the increasing use of deferred rendering, which makes MSAA more difficult for developers to implement.

In short, in a traditional (forward) renderer, the rendering process is rather straightforward and geometry data is preserved until the frame is done rendering. And while this normally is all well and good, the one big pitfall of a forward renderer is that complex lighting is very expensive to run because you don’t know precisely which lights will hit which geometry, resulting in the lighting equivalent of overdraw where objects are rendered multiple times to handle all of the lights.

In deferred rendering however, the rendering process is modified, most fundamentally by breaking it down into several additional parts and using an additional intermediate buffer (the G-Buffer) to store the results. Ultimately through deferred rendering it’s possible to decouple lighting from geometry such that the lighting isn’t handled until after the geometry is thrown away, which reduces the amount of time spent calculating lighting as only the visible scene is lit.
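The difference between the two approaches can be sketched with a toy CPU-side model. This is an illustration under stated assumptions, not how any real engine is written: the `Fragment` type, the single `albedo` attribute standing in for the G-Buffer contents, and the trivial `light_fragment` function are all hypothetical. The point is only to count how many lighting evaluations each approach performs when several surfaces overlap the same pixel.

```python
from dataclasses import dataclass


@dataclass
class Fragment:
    pixel: int     # index of the pixel this fragment covers
    depth: float   # smaller means closer to the camera
    albedo: float  # stand-in for the surface attributes a G-Buffer would hold


def light_fragment(albedo, lights):
    """Stand-in lighting: surface albedo scaled by the summed light intensity."""
    return albedo * sum(lights)


def forward_render(fragments, lights, num_pixels):
    """Light every fragment that passes the depth test as it is drawn."""
    depth = [float("inf")] * num_pixels
    color = [0.0] * num_pixels
    evals = 0
    for f in fragments:
        if f.depth < depth[f.pixel]:
            depth[f.pixel] = f.depth
            color[f.pixel] = light_fragment(f.albedo, lights)
            evals += len(lights)
    return color, evals


def deferred_render(fragments, lights, num_pixels):
    """Pass 1 stores the nearest surface per pixel; pass 2 lights each pixel once."""
    depth = [float("inf")] * num_pixels
    gbuffer = [None] * num_pixels
    for f in fragments:
        if f.depth < depth[f.pixel]:
            depth[f.pixel] = f.depth
            gbuffer[f.pixel] = f.albedo
    color = [0.0] * num_pixels
    evals = 0
    for p, albedo in enumerate(gbuffer):
        if albedo is not None:
            color[p] = light_fragment(albedo, lights)
            evals += len(lights)
    return color, evals
```

With fragments submitted back to front, the forward path in this model lights surfaces that are later hidden, while the deferred path lights only what ends up visible. Both produce the same final colors.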

The downside to this however is that in its most basic implementation deferred rendering makes MSAA impossible (since the geometry has been thrown out), and it’s difficult to efficiently light complex materials. The MSAA problem in particular can be solved by modifying the algorithm to save the geometry data for later use (a deferred geometry pass), but the consequence is that MSAA implemented in such a manner is more expensive than usual both due to the amount of memory the saved geometry consumes and the extra work required to perform the extra sampling.
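As a rough back-of-the-envelope illustration of the memory cost, the sketch below estimates the size of a G-Buffer that keeps per-sample data for MSAA. The layout is an assumption made for the example (four 32-bit render targets plus a 32-bit depth value per sample); real engines pack their G-Buffers differently, and this is not a description of any particular game.

```python
def gbuffer_bytes(width, height, bytes_per_sample, msaa_samples=1):
    """Total G-Buffer size when every MSAA sample stores a full set of attributes."""
    return width * height * bytes_per_sample * msaa_samples


# Assumed layout: four 32-bit render targets plus 32-bit depth per sample.
BYTES_PER_SAMPLE = 4 * 4 + 4

no_aa = gbuffer_bytes(1920, 1080, BYTES_PER_SAMPLE)
msaa_4x = gbuffer_bytes(1920, 1080, BYTES_PER_SAMPLE, msaa_samples=4)

print(f"No AA:   {no_aa / 2**20:.1f} MiB")
print(f"4x MSAA: {msaa_4x / 2**20:.1f} MiB")
```

Under these assumptions the storage scales linearly with the sample count, and the lighting pass has correspondingly more samples to read and shade.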

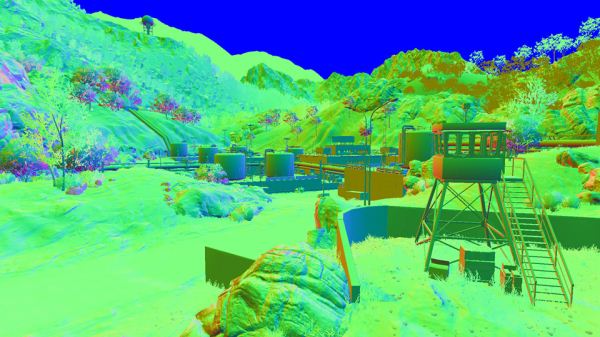

Battlefield 3 G-Buffer. Image courtesy of DICE

For this reason developers have quickly been adopting post-process AA methods, primarily NVIDIA’s Fast Approximate Anti-Aliasing (FXAA). Similar in execution to AMD’s driver-based Morphological Anti-Aliasing, FXAA works on the fully rendered image and attempts to look for aliasing and blur it out. The results generally aren’t as good as MSAA (and especially not SSAA), but it’s very quick to implement (it’s just a shader program) and has a very small performance hit. Compared to the difficulty of implementing MSAA on a deferred renderer, this is faster and cheaper, and it’s why MSAA support for DX10+ games is anything but universal.
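The general idea of a post-process AA filter can be sketched in a few lines. To be clear, this is not the FXAA algorithm, which is considerably more sophisticated; it is a deliberately simplified stand-in that detects high-contrast pixels in a finished grayscale image and blends them with their neighbors. The function name and the threshold value are assumptions made for illustration.

```python
def post_process_aa(image, threshold=0.25):
    """Blend pixels whose local contrast exceeds a threshold.

    `image` is a list of rows of grayscale values in [0, 1]. Border pixels
    are left untouched to keep the sketch short.
    """
    height, width = len(image), len(image[0])
    out = [row[:] for row in image]
    for y in range(1, height - 1):
        for x in range(1, width - 1):
            center = image[y][x]
            neighbors = [image[y - 1][x], image[y + 1][x],
                         image[y][x - 1], image[y][x + 1]]
            local = neighbors + [center]
            contrast = max(local) - min(local)
            if contrast > threshold:
                # Likely an aliased edge: replace with a small cross-shaped average.
                out[y][x] = (center + sum(neighbors)) / 5.0
    return out
```

Because the filter only ever sees the final image, it has no knowledge of geometry or sub-pixel detail, which is consistent with the quality gap relative to MSAA and SSAA described above.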

But what if there was a way to have a forward renderer with performance similar to that of a deferred renderer? That’s what AMD is proposing with one of their key tech demos for the 7000 series: Leo. Leo showcases AMD’s solution to the forward rendering lighting performance problem, which is to implement light culling in a compute shader. The compute shader identifies ahead of time which tiles any specific light will hit, and with that information only the relevant lights are computed on any given tile. The overhead for lighting is still greater than pure deferred rendering (there’s still some unnecessary lighting going on), but as proposed by AMD, it should make complex lighting cheap enough that it can be done in a forward renderer.
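The tile-based light culling idea can be sketched on the CPU as follows. This is a minimal illustration, not AMD’s Leo code: on a GPU the work would run in a compute shader, and the 16-pixel tile size, the representation of lights as screen-space circles `(x, y, radius)`, and the function names are all assumptions made for the example.

```python
TILE = 16  # assumed tile size in pixels


def cull_lights(width, height, lights, tile=TILE):
    """Build, for every screen tile, the list of light indices that may touch it.

    Each light is a screen-space circle (x, y, radius). A light is assigned to
    a tile when its circle overlaps the tile's rectangle.
    """
    tiles_x = (width + tile - 1) // tile
    tiles_y = (height + tile - 1) // tile
    tile_lights = [[[] for _ in range(tiles_x)] for _ in range(tiles_y)]
    for index, (lx, ly, radius) in enumerate(lights):
        for ty in range(tiles_y):
            for tx in range(tiles_x):
                x0, y0 = tx * tile, ty * tile
                x1, y1 = min(x0 + tile, width), min(y0 + tile, height)
                # Closest point of the tile rectangle to the light's center.
                nx = min(max(lx, x0), x1)
                ny = min(max(ly, y0), y1)
                if (lx - nx) ** 2 + (ly - ny) ** 2 <= radius ** 2:
                    tile_lights[ty][tx].append(index)
    return tile_lights


def lights_for_pixel(tile_lights, x, y, tile=TILE):
    """During forward shading, a pixel only loops over its own tile's lights."""
    return tile_lights[y // tile][x // tile]


if __name__ == "__main__":
    lights = [(8, 8, 4), (40, 16, 10)]
    per_tile = cull_lights(64, 32, lights)
    print(lights_for_pixel(per_tile, 5, 5))
    print(lights_for_pixel(per_tile, 40, 20))
```

Because culling happens per tile rather than per pixel, some pixels in a tile will still evaluate a light that does not actually reach them, which matches the article’s note that some unnecessary lighting remains.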

As AMD puts it, the advantages are twofold. The first advantage of course is that MSAA (and SSAA) compatibility is maintained, as this is still a forward renderer; the use of the compute shader doesn’t have any impact on the AA process. The second advantage relates to lighting itself: as we mentioned previously, deferred rendering doesn’t work well with complex materials. Forward rendering, on the other hand, handles complex materials well; it just wasn’t fast enough until now.

Leo in turn executes on both of these concepts. Anti-aliasing is of course well represented through the use of 4x MSAA, but so are complex materials. AMD’s theme for Leo is stop motion animation, so a number of different material types are directly lit, including fabric, plastic, cardboard, and skin. The total of these parts may not be the most jaw-dropping thing you’ve ever seen, but the fact that it’s being done in a forward renderer is amazingly impressive. And if this means we can have good lighting and excellent support for real anti-aliasing, we’re all for it.

Unfortunately it’s still not clear at this time when 7970 owners will be able to get their hands on the demo. The version released to the press is still a pre-final version (version number 0.9), so presumably AMD’s demo team is still hammering out the demo before releasing it to the public.

Update: AMD has posted the Leo demo, along with their Ptex demo, over at AMD Developer Central. It should work with any DX11 card, though a quick check has it failing on NVIDIA cards.

47 Comments


CeriseCogburn - Saturday, June 23, 2012

Yep Termie, now the hyper enthusiast experts with their 7970's are noobs unable to be skilled enough to overclock...Can you amd fans get together sometime and agree on your massive fudges once and for all - we just heard no one but the highest of all gamers and end user experts buys these cards - with the intention of overclocking the 7970 to the hilt, as the expert in them demands the most performance for the price...

We just heard MONTHS of that crap - now it's the opposite....

Suddenly, the $579.00 amd fanboy buyers can't overclock...

How about this one- add this one to the arsenal of hogwash...

" Don't void your warranty !" by overclocking even the tiniest bit..

( We know every amd fanboy will blow the crap out of their card screwing around and every tip given around the forums is how to fake out the vendor, lie, and get a free replacement after doing so )

darkswordsman17 - Tuesday, January 31, 2012

First, sorry for this response being several days late.

Fair enough. I didn't mean it as a real criticism, just more of a nitpick. I realize the state of voltage control on video cards isn't exactly stellar and I'm sure AMD/nVidia aren't keen on you doing it.

It's certainly not as robust as CPU voltage adjustment is today, which I didn't mean to confuse as I understand there's a pretty significant disparity.

I should have expanded on my comment a bit more.

I have a hunch AMD is being pretty conservative on voltage with these (in both directions: it's higher than it needs to be, but it's not as high as it could fairly safely be either). Firstly, probably to play it safe with the chips from the new process, but also I think they're giving themselves some breathing room for improvement. After 40nm, they probably didn't want to go for broke right out of the gate, and wanted to leave some extra that they could push to improve as needed (they have space to release a 7980; something in line with the 4890). Considering the results, it's not like they really need to, especially coupled with the rumored 28nm issues.

Oh, and likewise to Termie, I do still appreciate the work and realize you can't please everyone. I liked the update and actually I think you did enough to touch on the subject in the 7950 review (namely addressing the lack of quality software management for GPUs currently).

mczak - Friday, January 27, 2012

The Leo demo as mentioned in the article has been released (no idea about version): http://developer.amd.com/samples/demos/pages/AMDRa...

Requires 7970 to run (not sure why exactly if it's just DirectX11/DirectCompute?).

mczak - Friday, January 27, 2012

Actually Dave Baumann clarified it should run on other hw as well.

ltcommanderdata - Friday, January 27, 2012

It seemed like we've just finished seeing most major engines like Unreal Engine 3, FROSTBITE 2.0, and CryEngine 3 transition to a deferred rendering model. Is it very difficult for developers to modify their existing/previous forward renderers to incorporate the new lighting technique used in the Leo Demo? Otherwise, given the investment developers have put into deferred rendering, I'm guessing they're not looking to transition back to an improved forward renderer anytime soon.

On a related note, you mentioned the lack of MSAA is a common problem to DX10+. Given this improved lighting technique requires compute shaders, is it actually DX11 GPU only, i.e. does it require CS5.0 or can it be implemented in CS4.x to support DX10 GPUs? According to the latest Steam survey, by far the majority of GPUs are still DX10, so game developers won't be dropping support for them for a few years. Some games do support DX11 only features like tessellation, but I presume that having to implement 2 different rendering/lighting models is a lot more work, which could hinder adoption if the technique isn't compatible with DX10 GPUs.

Logsdonb - Friday, January 27, 2012

No one has tested the 7970 in a crossfire configuration under PCIe 3.0. I would expect increased bandwidth to benefit the most in that environment. I realize the 7800 series will be better candidates for crossfire given price, heat, and power consumption but a test with the 7900 series would show the potential.

piroroadkill - Friday, January 27, 2012

I'm sorry, I might be pretty drunk, but I'm falling at the first page.

"PCIe Bandwidth..."

There's a clear difference between 8x and 16x PCIe 3.0

Even if it is small, it is there, showing some bottlenecking. If it was inside the margin of error, you'd expect they'd switch places. They didn't. There is clear bottlenecking.

Concillian - Friday, January 27, 2012

I saw some stuff flying around about SMAA a month or two ago... seemed promising and a better alternative to FXAA, but I haven't seen much in the "official" media outlets about it.

It'd be nice to see some analysis on SMAA vs. FXAA vs. Morphological AA in an article covering the current state of AA.

Ryan Smith - Friday, January 27, 2012

As I understand it, SMAA is still a work in progress. It would be premature to comment on it at this time.

tipoo - Friday, January 27, 2012

If I remember correctly, TB provides the bandwidth of a PCIe 4x connection. So if a high end card like this isn't bottlenecked with that much constraint, it sure looks good for external graphics! You'd need a separate power plug of course, but it now looks feasible.