XFX’s Radeon HD 7970 Black Edition Double Dissipation: The First Semi-Custom 7970

by Ryan Smith on January 9, 2012 6:00 AM EST

The Test, Power, Temp, & Noise

| Component | Configuration |
| --- | --- |
| CPU: | Intel Core i7-3960X @ 4.3GHz |
| Motherboard: | EVGA X79 SLI |
| Chipset Drivers: | Intel 9.2.3.1022 |
| Power Supply: | Antec True Power Quattro 1200 |
| Hard Disk: | Samsung 470 (240GB) |
| Memory: | G.Skill Ripjaws DDR3-1867 4 x 4GB (8-10-9-26) |
| Video Cards: | XFX Radeon HD 7970 Black Edition Double Diss., AMD Radeon HD 7970, AMD Radeon HD 6990, AMD Radeon HD 6970, AMD Radeon HD 6950, AMD Radeon HD 5870, AMD Radeon HD 5850, AMD Radeon HD 4870, NVIDIA GeForce GTX 590, NVIDIA GeForce GTX 580, NVIDIA GeForce GTX 570, NVIDIA GeForce GTX 470, NVIDIA GeForce GTX 285 |
| Video Drivers: | NVIDIA ForceWare 290.36 Beta, AMD Catalyst Beta 8.921.2-111215a |
| OS: | Windows 7 Ultimate 64-bit |

We’ll start things in reverse today by first looking at the power, temperature, & noise characteristics of the 7970 BEDD. The custom cooler is the single biggest differentiating factor for the BEDD, followed by its factory overclock.

Radeon HD 7900 Series Voltages

| Ref 7970 Load | Ref 7970 Idle | XFX 7970 Black Edition DD |
| --- | --- | --- |
| 1.17v | 0.85v | 1.17v |

As we noted in our introduction, the BEDD ships at the same voltage as the reference 7970: 1.17v. Since XFX is using the AMD PCB too, the power characteristics are virtually identical, save for the overclock and the power draw of the two fans.

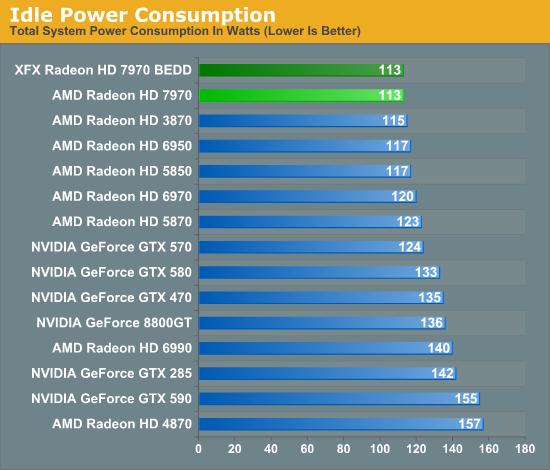

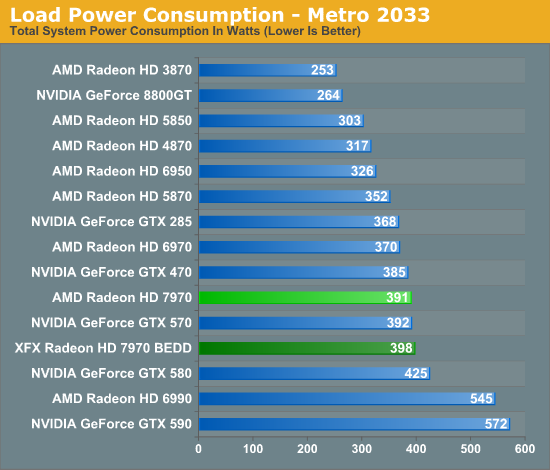

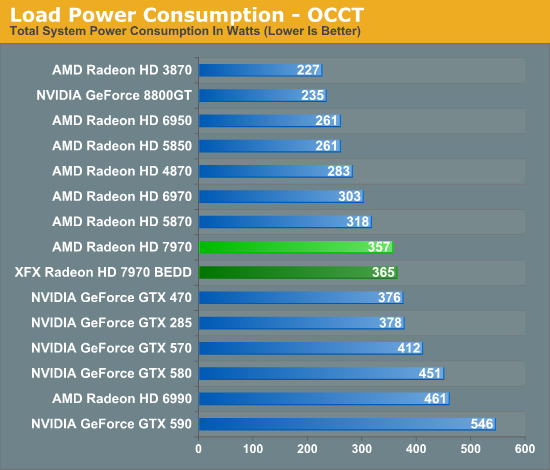

Even with two fans, the idle power consumption of the BEDD is identical to that of the reference 7970. Under load, the BEDD’s power consumption creeps up slightly compared to the reference 7970. With Metro 2033, system power consumption peaks at 398W, 7W over the reference card, while under OCCT it peaks at 365W, 8W over the reference card.
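To put those deltas in perspective, here is a minimal Python sketch that backs out the reference card's system power from the figures quoted above and expresses the BEDD's increase as a percentage. The dictionary and function names are ours, chosen for illustration; only the wattages come from the text.

```python
# System power consumption in watts for the BEDD, as quoted in the text.
bedd_watts = {"Metro 2033": 398, "OCCT": 365}
# How many watts the BEDD draws over the reference 7970 in each test.
delta_watts = {"Metro 2033": 7, "OCCT": 8}


def reference_watts(test):
    """Implied system power for the reference 7970 in the given test."""
    return bedd_watts[test] - delta_watts[test]


def percent_increase(test):
    """BEDD's extra draw as a percentage of the reference system power."""
    return 100.0 * delta_watts[test] / reference_watts(test)


for test in bedd_watts:
    print(f"{test}: reference {reference_watts(test)}W, "
          f"BEDD {bedd_watts[test]}W (+{percent_increase(test):.1f}%)")
```

In both tests the increase works out to roughly 2% of total system power.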

It’s worth noting that because XFX has not touched the PowerTune limits for the BEDD, it’s capped at the same 250W limit as the reference 7970 by default. So far we haven’t seen any proof that the BEDD is being throttled at this level in any of our games or compute benchmarks. However, we can’t completely rule this out, as we still don’t have any tools that can read the real clockspeed of the 7970 when PowerTune throttling is active. Whenever an overclock is involved there’s always a risk of hitting the PowerTune limit before a card can fully stretch its legs, hence the need to be concerned about PowerTune if it hasn’t already been adjusted. As for our power tests, the difference seems to largely boil down to the higher power consumption of XFX’s fans when they’re operating above idle.

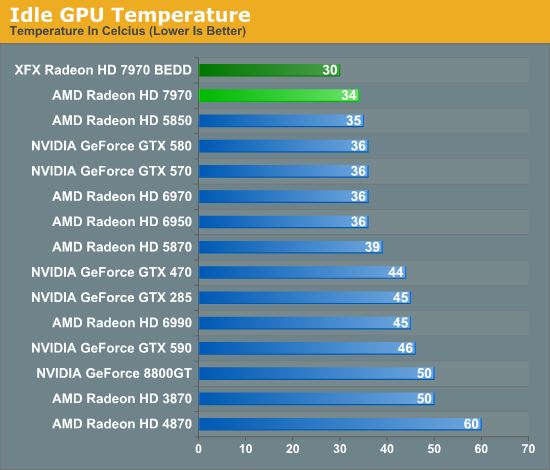

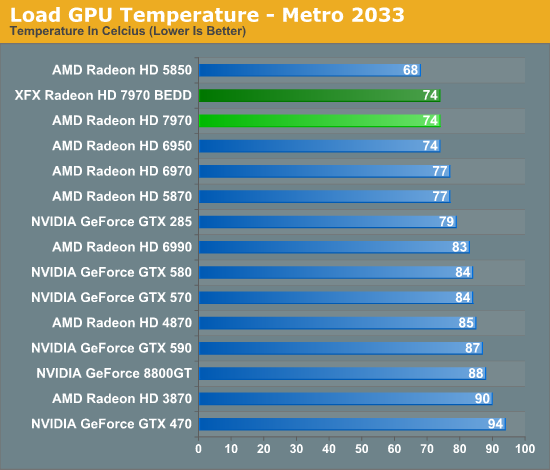

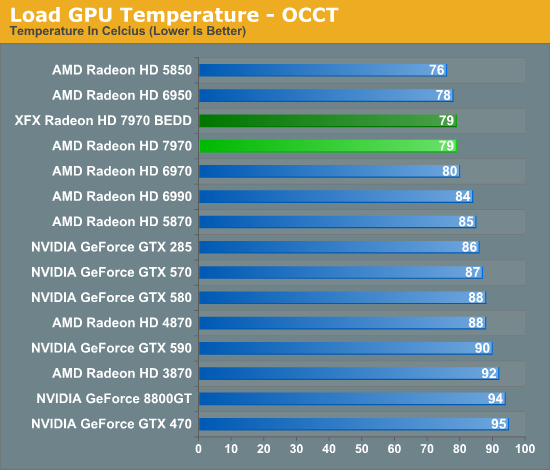

One of the key advantages of open air designs is that they do a better job of dissipating heat from the GPU, which is what we’re seeing here with the BEDD under idle. At 30C the BEDD is 4C cooler than the reference 7970, with all of that being a product of the Double Dissipation cooler.

However, it’s interesting to note that temperatures under load end up being identical to the reference 7970, even with the BEDD’s radically different cooling apparatus. This is ultimately a result of the fact that the BEDD is a semi-custom card; not only is XFX using AMD’s PCB, but they’re using AMD’s aggressive fan profile. At any given temperature the BEDD’s fans run at the same speed (as a percentage) as AMD’s fan, meaning that the BEDD’s fans won’t ramp up until the card hits the same temperatures that trigger a ramp-up on the reference design. As a result the BEDD ends up no cooler under load, though with AMD’s aggressive cooling policy the reference 7970 would be tough to beat in any case.
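The shared fan profile can be pictured as a single temperature-to-duty lookup that both cards consult. The Python sketch below is only an illustration of that idea: the curve points and the 70C load temperature are invented, and AMD’s actual profile is not documented here.

```python
# Hypothetical fan curve: (GPU temperature in C, fan duty in percent).
# These points are invented for illustration; they are NOT AMD's real profile.
FAN_CURVE = [(40, 20), (60, 30), (80, 50), (95, 100)]


def fan_duty(temp_c, curve=FAN_CURVE):
    """Linearly interpolate fan duty (%) for a temperature, clamped at both ends."""
    if temp_c <= curve[0][0]:
        return float(curve[0][1])
    if temp_c >= curve[-1][0]:
        return float(curve[-1][1])
    for (t0, d0), (t1, d1) in zip(curve, curve[1:]):
        if t0 <= temp_c <= t1:
            return d0 + (d1 - d0) * (temp_c - t0) / (t1 - t0)


# Both cards use the same profile, so the same temperature yields the same
# duty percentage regardless of which cooler is attached.
load_temp_c = 70  # hypothetical load temperature
for card in ("Reference 7970", "XFX 7970 BEDD"):
    print(f"{card}: {fan_duty(load_temp_c):.0f}% duty at {load_temp_c}C")
```

Because the lookup is keyed on temperature alone, a different cooler changes how much noise and airflow a given duty percentage produces, not the temperature at which the fans start working harder.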

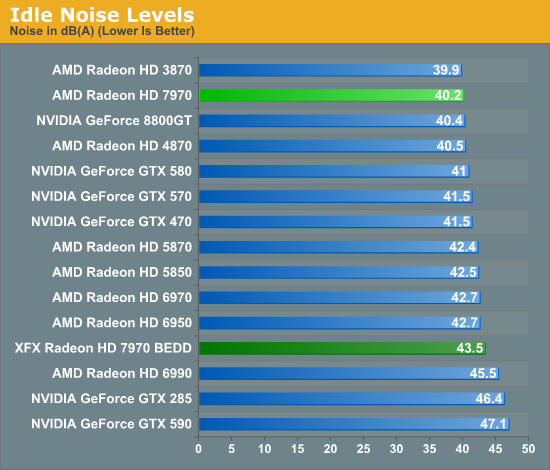

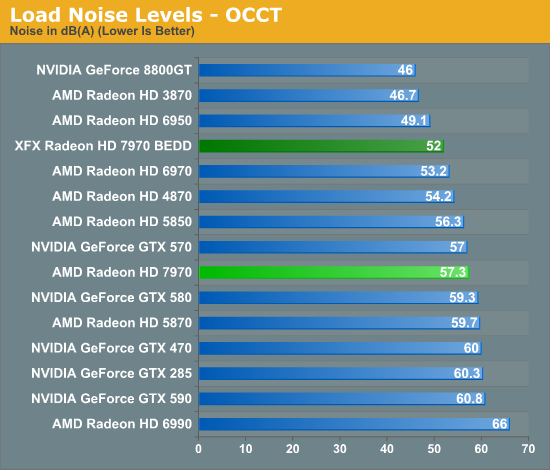

Finally taking a look at noise we can see the full impact of XFX’s replacement cooler. For XFX this is both good and bad. On the bad side, their Double Dissipation cooler can’t match the 7970 reference cooler when idling; 43.5dB isn’t particularly awful but it’s noticeable, particularly when compared to the reference 7970. Consequently the BEDD is definitely not a good candidate for a PC that needs to be near-silent at idle.

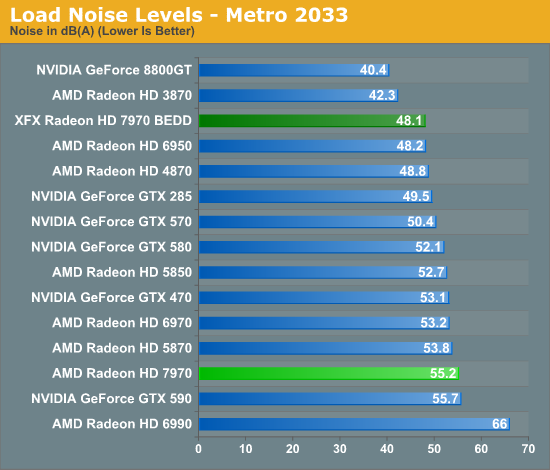

On the flip side under load we finally see XFX’s cooler choice pay off. AMD’s aggressive fan profile made the reference 7970 one of the loudest single-GPU cards in our lineup, but XFX’s Double Dissipation cooler fares significantly better here. At 48.1dB under Metro it’s not only quieter than the reference 7970 by a rather large 7dB, but it’s also quieter than every other modern high-end card in our lineup, effectively tying with the reference 6950. Even under our pathological OCCT test it only reaches 52dB, 5dB quieter than the reference 7970.
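Because decibels are logarithmic, those gaps are larger than the raw numbers suggest. The short Python sketch below backs out the implied reference readings and converts each gap to a sound power ratio using the standard 10·log10 relation. The function names are ours, and the ratio describes sound power, not perceived loudness.

```python
def reference_db(bedd_db, quieter_by_db):
    """Implied reference 7970 reading, given the BEDD reading and the gap."""
    return bedd_db + quieter_by_db


def power_ratio(delta_db):
    """Sound power ratio corresponding to a level difference in decibels."""
    return 10 ** (delta_db / 10)


# (BEDD reading in dB, how much quieter than the reference), from the text.
tests = {"Metro": (48.1, 7), "OCCT": (52, 5)}

for name, (bedd_db, gap_db) in tests.items():
    print(f"{name}: reference ~{reference_db(bedd_db, gap_db):.1f}dB, "
          f"~{power_ratio(gap_db):.1f}x the sound power of the BEDD")
```

By this measure the reference card emits roughly five times the sound power of the BEDD under Metro and roughly three times under OCCT.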

Ultimately, while the BEDD is a poor candidate for a quiet computer at idle, it’s an excellent candidate for one under load thanks to the open air nature of XFX’s Double Dissipation cooler, and it’s certainly the launch card to get if you want a load-quiet 7970. Just don’t throw it directly up against another card in CrossFire, as these open air cards typically fare poorly without an open slot to work with.

93 Comments


chizow - Tuesday, January 10, 2012 - link

15-25% is right according to this review and every other one on the internet, beta drivers or not.

If you want to nitpick about results, you'll see the differences are actually even lower than 15-25% in the one bench that matters the most, minimum FPS.

As for not being able to interpret or follow an argument, the 50% was in reference to the 7970 compared to last-gen AMD parts like the 6970/5870, not the 580.

Kjella - Monday, January 9, 2012 - link

The 4870 was the killer? The 5000 series kicked major ass with the 5870 flying off the charts and the 5850 - the one I got - was a total killer at $259 MSRP that I managed to get before the price hike. You'll still be paying $200+ to get a card to beat it and the 7970 did nothing to shake that up. I'm waiting for Ivy Bridge anyway, hopefully we'll have Kepler by then but if they don't improve the performance/$ more than AMD did I might just sit it out for another generation.

SlyNine - Tuesday, January 10, 2012 - link

Agreed but I'm not really willing to spend more then I did for this 5870.

However the more I look at the benchmarks the more I wonder what they will look like when you really start to push the 7970.

Thinking back to the 9700pro launch alot of people didn't consider that to be alot faster then the 4600 because they were benchmarking them at irrelevant setting for the 9700pro. But when you really pushed each card the 9700pro was more then 4x as fast.

Galid - Monday, January 9, 2012 - link

Nvidia fanboy.... saying something like ''I don't THINK that will hold true in this case when (video card that doesn't exist yet made by nvidia) is finally released'' totally useless

The only reason 4870 was so cheap is because the die was SO small compared to GT200 parts... and Nvidia's politic is to make the fastest video card at the expense of big die(lower yields) and high costs....

chizow - Tuesday, January 10, 2012 - link

" Nvidia's politic is to make the fastest video card at the expense of big die(lower yields) and high costs.... "

And let your own thoughts govern your conclusions....see how they match mine...

Its funny that you immediately jump to the fanboy conclusion when even the AMD fanboys are coming to the same conclusion. High-end Kepler once released will be faster than Tahiti, its not a matter of if, its a matter of when.

AMD made a ~50% jump in performance going to 28nm, to expect anything less from Nvidia with their 28nm part would be folly. A 50% increase from GTX 580 puts Kepler comfortably ahead of Tahiti, but given 7970 is only 15-25% faster, it doesn't even need to increase that much.

Also, the 4870 was so cheap because ATI badly needed to regain market and mindshare. They stumbled horribly with the R600/2900XT debacle and while the RV670/3870 was a massive improvement in thermals, the performance was still behind Nvidia's 3rd or 4th fastest part (8800GTS) and still significantly slower than Nvidia's amazing mid-range 8800GT.

Still, I think they underpriced it by a large amount given it was half the price of a GTX 280 and only ~15% slower. Just a lost opportunity there for ATI but they felt it was more important to get mind/marketshare back at that point and now they get to reap the windfall. The trickle down effect becomes most obvious once you start projecting performance of the mid-range parts against last-gen parts.

Morg. - Tuesday, January 10, 2012 - link

50% ? not really .

As I said before, this is mostly a matter of TDP.

The only reason the gtx580 was ahead (and that was only at full HD and lower resolutions) was its higher TDP / bigger die size.

nVidia may choose to release yet another high TDP part for 680, just like 580 and it may just give them the same edge, but they will NOT win this round, just like they did NOT win the previous one.

The main problem for nVidia on this round is being late to the 28nm party, other than that it's business as usual.

Morg. - Tuesday, January 10, 2012 - link

What I mean by that is simply that perf/watt/dollar is the ONLY measure of a good GPU or CPU, the actual market position of the part does not make it "good" or "bad", just "fitting".

The gtx580 was the perfect fit for "biggest and baddest single gpu card", it however had worse perf/watt than 6-series, and much worse perf/dollar.

AMD could easily have decided to double die size on 6- series and beaten the crap out of the gtx580 but they didn't, because they targeted a completely different market position for their products.

chizow - Tuesday, January 10, 2012 - link

Huh? No.

If you target the high-end performance segment, the only thing that matters is performance. Performance per watt isn't going to net you any more FPS in the games you're buying or upgrading a card for, and its certainly not going to close the gap in frames per second when you're already trailing the competition.

You're quite possibly the ONLY person who I've ever seen claim perf/watt is the leading factor when it comes to high-end GPUs. Maybe if you were referring to the Server CPU space, but even there raw performance with form factor is a major consideration over perf/watt. No one's running mission critical systems on an Atom farm because of power considerations, that's for sure.

And yes, of course Nvidia is going to release another high-end massive GPU, that's always been their strategy. If you haven't noticed, AMD has quietly gone along this path as well, losing their small die strategy along the way, making it harder and harder for them to maintain their TDP advantages or produce their 2xGPU parts for each generation. AMD used to crank out an X2 with no sacrifices, but lately they've had to employ the same castration/downclocking methods Nvidia has used to stay within PCI specs.

And to set the record straight, Nvidia has won the last two generations. AMD certainly had their wins at various price points, but ultimately the GTX 280/285 were better than the 4870/4890 and the 480/580 were better than the 5870/6970. Going down from there, Nvidia was competitive in both price and performance with all of AMD's parts, and in many cases, provided amazing value at price points AMD struggled to compete with (See GTX 460, GTX 560Ti).

Morg. - Tuesday, January 10, 2012 - link

Right .

perf/watt is everything.

That is why the 6990 completely raped the 590 . perf/watt .

The 6990 with minor tweaks actually matched a 6970 CF.

The 590 with minor tweaks actually exploded. and it was more expensive...

Your fanboism clouds your mind young padawan ... nVidia may have taken single GPU crown on the last two rounds, but they never had price/performance for anything.

Need I remind you you could get a CF of 6950's for the price of a gtx580 ? and that said CF would kill a 680 should it be 50% faster than said 580 ?

Gtx 460 and 560 ti were failure compared to AMD's offerings - in terms of performance.

They had the nVidia logo, the nVidia drivers (good thing actually) and some stuff.

But they did NOT have better performance for the same price. 560 Ti was almost in the same price bracket as 6950 and much slower, non-unlockable etc..

So yes, single GPU crown all you like ..

best GPU / arch. ?

well I'd say that's best efficiency with comparable performance = AMD

(Again .. if you're one of those who think a gtx580 is a good card for one full HD screen .. go ahead spend 550 bucks on a GPU and 100 on the screen -- otherwise the reality is AMD was within 5% with the 6970)

chizow - Tuesday, January 10, 2012 - link

Perf/watt and perf/price means nothing when the concern in this segment is absolute performance. Once you start compromising and qualifying your criteria, you start down a slippery slope that you simply can't recover from. If anything, lower performance means you necessarily win the perf/watt and perf/price categories but by doing so, you lose the premium value of compromising nothing for performance.

By your metric, an IGP or integrated GPU would be winning the GPU market because it costs nothing and uses virtually no power, but of course, it would be completely asinine to make that assumption when referencing the high-end discrete GPU market where raw performance relative to the market is the only determinant of price.

You can go down the line all you like in price/performance segments with CF/SLI, for every example you give there's an equally if not more compelling offering from Nvidia with the GTX 460, 560, 560Ti, 570 etc. that offers price and performance points that have AMD matched or beaten. Because the deck is stacked starting at the top, and when you have the highest performing part in the segment, that sets the tone for everything else in the market.

AMD is finally coming to grips with this which is why they are pricing this card as a halo product and not as a mid-range product.