Intel Core i7 3960X (Sandy Bridge E) Review: Keeping the High End Alive

by Anand Lal Shimpi on November 14, 2011 3:01 AM EST

Posted in: CPUs, Intel, Core i7, Sandy Bridge, Sandy Bridge E

No Integrated Graphics, No Quick Sync

All of this growth in die area comes at the expense of one of Sandy Bridge's greatest assets: its integrated graphics core. SNB-E features no on-die GPU, and as a result it does not feature Quick Sync either. Remember that Quick Sync leverages the GPU's shader array to accelerate some of the transcode pipe; without a GPU on SNB-E, there's no Quick Sync.

Given the target market for SNB-E's die donor (Xeon servers), further increasing the die area by including an on-die GPU doesn't seem to make sense. Unfortunately, desktop users suffer, as they lose a very efficient way to transcode videos. Intel argues that you do have more cores to chew through frames with, but the fact remains that Quick Sync frees up your cores to do other things, while SNB-E requires that they're all tied up in (quickly) transcoding video. If you don't run any Quick Sync enabled transcoding applications, you won't miss the feature on SNB-E. If you do, however, this will be a tradeoff you'll have to come to terms with.

Tons of PCIe and Memory Bandwidth

Occupying the die area where the GPU would normally be is SNB-E's new memory controller. While its predecessor featured a fairly standard dual-channel DDR3 memory controller, SNB-E features four 64-bit DDR3 memory channels. With a single DDR3 DIMM per channel, Intel officially supports speeds of up to DDR3-1600; with two DIMMs per channel, the max official speed drops to DDR3-1333.

With a quad-channel memory controller you'll have to install DIMMs four at a time to take full advantage of the bandwidth. In response, memory vendors are selling 4 and 8 DIMM kits specifically for SNB-E systems. Most high-end X79 motherboards feature 8 DIMM slots (2 per channel). Just as with previous architectures, installing fewer DIMMs is possible; it simply reduces the peak available memory bandwidth.
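To put the channel counts in perspective, here is a rough sketch of theoretical peak memory bandwidth. It assumes the standard DDR3 figure of 8 bytes per transfer on each 64-bit channel. The function name is hypothetical, and the dual-channel DDR3-1333 row for mainstream Sandy Bridge is an assumed comparison point rather than a number from this review. Real-world throughput will be lower than these peaks.

```python
def peak_bandwidth_gbs(channels, mega_transfers_per_s, bus_width_bits=64):
    """Theoretical peak DRAM bandwidth in GB/s (1 GB = 10^9 bytes)."""
    bytes_per_transfer = bus_width_bits // 8
    return channels * mega_transfers_per_s * bytes_per_transfer / 1000


configs = [
    ("SNB-E, 4 channels, DDR3-1600", 4, 1600),
    ("SNB-E, 4 channels, DDR3-1333", 4, 1333),
    ("SNB-E, only 2 channels populated, DDR3-1600", 2, 1600),
    ("SNB (assumed), 2 channels, DDR3-1333", 2, 1333),
]

for label, channels, speed in configs:
    print(f"{label}: {peak_bandwidth_gbs(channels, speed):.1f} GB/s")
```

Populating only two of the four channels halves the peak figure, which is the reduction the paragraph above refers to.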

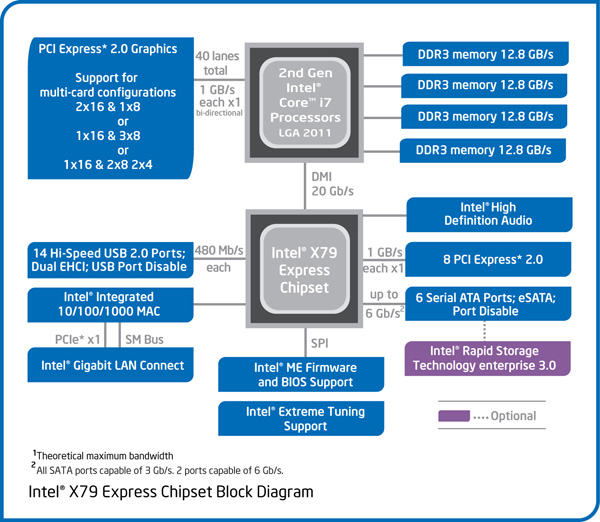

Intel increased bandwidth on the other side of the chip as well. A single SNB-E CPU features 40 PCIe lanes that are compliant with rev 3.0 of the PCI Express Base Specification (aka PCIe 3.0). With no PCIe 3.0 GPUs available (yet) to test and validate the interface, Intel lists PCIe 3.0 support in the chip's datasheet but is publicly guaranteeing PCIe 2.0 speeds. Intel does add that some PCIe devices may be able to operate at Gen 3 speeds, but we'll have to wait and see once those devices hit the market.

The PCIe lanes off the CPU are quite configurable as you can see from the diagram above. Users running dual-GPU setups can enjoy the fact that both GPUs will have a full x16 interface to SNB-E (vs x8 in SNB). If you're looking for this to deliver a tangible performance increase, you'll be disappointed:

Multi GPU Scaling - Radeon HD 5870 CF

| Max Quality, 4X AA/16X AF | Metro 2033 (19x12) | Crysis: Warhead (19x12) | Crysis: Warhead (25x16) |
| --- | --- | --- | --- |
| Intel Core i7 3960X (2 x16) | 1.87x | 1.80x | 1.90x |
| Intel Core i7 2600K (2 x8) | 1.94x | 1.80x | 1.88x |

Modern GPUs don't lose much performance in games, even at high quality settings, when going from a x16 to a x8 slot.
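The theoretical numbers behind the x16 versus x8 comparison can be sketched as follows. This is a minimal calculation, assuming the published per-lane signaling rates and line encodings for PCIe 2.0 (5 GT/s, 8b/10b) and PCIe 3.0 (8 GT/s, 128b/130b). It ignores packet and protocol overhead, so delivered throughput will be lower. The names used here are hypothetical.

```python
# (transfer rate in GT/s, encoding efficiency) per PCIe generation
PCIE_GENS = {
    2: (5.0, 8 / 10),     # 8b/10b line encoding
    3: (8.0, 128 / 130),  # 128b/130b line encoding
}


def link_bandwidth_gbs(gen, lanes):
    """Theoretical one-direction bandwidth of a PCIe link in GB/s."""
    rate_gt, efficiency = PCIE_GENS[gen]
    return rate_gt * efficiency * lanes / 8  # 8 bits per byte


for gen in (2, 3):
    for lanes in (8, 16):
        bw = link_bandwidth_gbs(gen, lanes)
        print(f"PCIe {gen}.0 x{lanes}: {bw:.2f} GB/s per direction")
```

Under these assumptions a Gen 3 x8 link offers roughly the same raw bandwidth as a Gen 2 x16 link.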

I tested PCIe performance with an OCZ Z-Drive R4 PCIe SSD to ensure nothing was lost in the move to the new architecture. Compared to X58, I saw no real deltas in transfers to/from the Z-Drive R4:

PCI Express Performance - OCZ Z-Drive R4, Large Block Sequential Speed - ATTO

| | Intel X58 | Intel X79 |
| --- | --- | --- |
| Read | 2.62 GB/s | 2.66 GB/s |
| Write | 2.49 GB/s | 2.50 GB/s |
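As a quick check on "no real deltas", the relative differences can be computed straight from the ATTO figures in the table. This is only an illustrative sketch; the helper name is hypothetical.

```python
# Large-block sequential results from the ATTO table, in GB/s
results = {
    "Read": {"X58": 2.62, "X79": 2.66},
    "Write": {"X58": 2.49, "X79": 2.50},
}


def percent_delta(baseline, new):
    """Relative change from baseline to new, in percent."""
    return (new - baseline) / baseline * 100


for op, speeds in results.items():
    delta = percent_delta(speeds["X58"], speeds["X79"])
    print(f"{op}: {delta:+.1f}% on X79 vs X58")
```

Both differences come out under 2%.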

The Letdown: No SAS, No Native USB 3.0

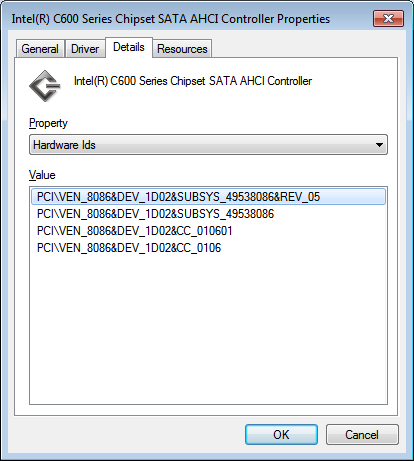

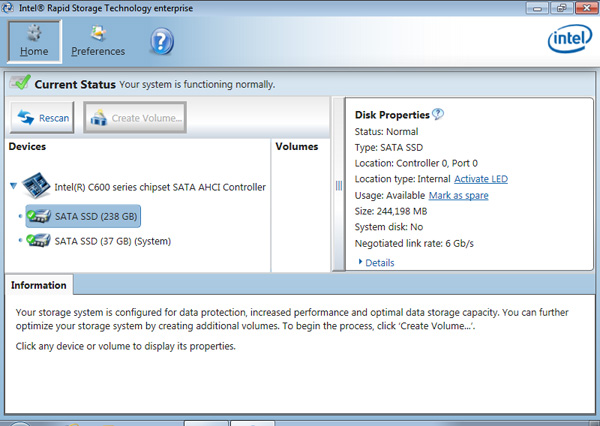

Intel's current RST (Rapid Storage Technology) drivers don't support X79; however, Intel's RSTe (for enterprise) 3.0 will support the platform once available. We got our hands on an engineering build of the software, which identifies the X79's SATA controller as an Intel C600.

Intel's enterprise chipsets use the Cxxx nomenclature, so this label makes sense. A quick look at Intel's RSTe readme tells us a little more about Intel's C600 controller:

SCU Controllers:

- Intel(R) C600 series chipset SAS RAID (SATA mode) Controller

- Intel C600 series chipset SAS RAID Controller

SATA RAID Controllers:

- Intel(R) C600 series chipset SATA RAID Controller

SATA AHCI Controllers:

- Intel(R) C600 series chipset SATA AHCI Controller

As was originally rumored, X79 was supposed to support both SATA and SAS. Issues with the implementation of the latter forced Intel to kill SAS support and go with the same 4+2 3Gbps/6Gbps SATA implementation 6-series chipset users get. I would've at least liked to have had more 6Gbps SATA ports. It's quite disappointing to see Intel's flagship chipset lacking feature parity with AMD's year-old 8-series chipsets.

I ran a sanity test on Intel's X79 against some of our H67 data for SATA performance with a Crucial m4 SSD. It looks like 6Gbps SATA performance is identical to the mainstream Sandy Bridge platform:

6Gbps SATA Performance - Crucial m4 256GB (FW0009)

| | 4KB Random Write (8GB LBA, QD32) | 4KB Random Read (100% LBA, QD3) | 128KB Sequential Write | 128KB Sequential Read |
| --- | --- | --- | --- | --- |
| Intel X79 | 231.4 MB/s | 57.6 MB/s | 273.3 MB/s | 381.7 MB/s |
| Intel Z68 | 234.0 MB/s | 59.0 MB/s | 269.7 MB/s | 372.1 MB/s |

Intel still hasn't delivered an integrated USB 3.0 controller in X79. Motherboard manufacturers will continue to use 3rd party solutions to enable USB 3.0 support.

163 Comments

Zak - Monday, November 14, 2011

I want native USB3 plus a significantly higher number of PCIe channels so I can run two cards at full 16x and a decent RAID controller at 4x without having to pay over $300 for the mobo. Oh, and for god's sake say goodbye to the PCI slots please while improving the motherboard layout so dual slot cards don't cover any available PCIe slots.

Bullshit like "Three PCIe x16 slots!!!!" (running at 8x, 8x, 2x) makes me sick. The latest Intel motherboards were rather underwhelming in terms of features.

chizow - Monday, November 14, 2011

It really seems as if Intel wants to kill off this high-end enthusiast desktop segment completely; what we have here is a by-product of their server market and perhaps the last of a dying breed. First sign was the change to multiple sockets and locking clock frequency on their non-enthusiast parts. Also, SB-E comes with a huge increase in platform cost compared to Nehalem that doesn't really justify the increase in performance over SB.

$500 for the entry-level SB-E CPU and $300+ for the motherboard is going to be a bitter pill to swallow for those used to the $200-$300 entry-level Nehalem CPUs and $200 boards. I know there's going to be a 4-core part that may be closer to that price point sometime next year, but again, one has to ask if it will be worthwhile over a 2600K at that point, especially since the K is unlocked and the SB-E part isn't.

Also factor in the reality that PCIe 3.0 is going to be a negligible benefit of the chipset. Maybe if ATI/Nvidia's next-gen GPUs make use of the extra bandwidth. You also don't get any additional benefits in the way of SATA or USB support compared to last-gen SB products... It's really quite disappointing for a chipset that was held off this long.

Overall the performance looks good, but at the price and size... is this the path CPUs are headed for? Huge and hot like GPUs? I mean we thought Bulldozer was massive, SB-E is just as big but at least it delivers when it comes to performance I guess. I can see why Intel wanted to bifurcate their server/desktop business, but I think the unfortunate casualty will be the high-end enthusiast market that doesn't want to pay e"X"treme prices for the privilege.

redisnidma - Monday, November 14, 2011

Looking at these results, you have to wonder what in the world AMD was thinking when they designed Bulldozer (AKA Crapdozer).

Feel sorry for them. :(

just4U - Monday, November 14, 2011

For the most part AMD's Bulldozer did give us 2500K speeds, and multithreaded performance is there. This CPU is the fastest we've seen but it certainly doesn't blow one away in comparison to the 2600K. The AMD CPU is criticized for one thing really: its single threaded performance, which is no better than its cheapest processors.

Guspaz - Monday, November 14, 2011

A minor performance boost in most real-world scenarios, and yet a massive increase in cost and power consumption...

This whole chip is basically a big kludge. Take an 8-core Xeon and disable a quarter of the chip, slap a "consumer" label on it, and call it a day? That's not even trying, that's just lazy.

This chip is 50% faster than SNB in heavily multithreaded applications because it has 50% more cores. A much more interesting chip would have been to take the existing SandyBridge chips and increase the core count, rather than taking a Xeon and disabling parts of the core.

EJ257 - Monday, November 14, 2011

Actually isn't that basically what they did with this? They took 8 SB cores and threw them on the die, took out the IGP and dropped in a massive L3 cache. I mean if you're going to be building a gaming rig based on SB-E, would you actually care about the IGP at that point when you've got SLI or X-Fire GPUs?

I understand how this move would make some people feel like they've been slapped in the face by Intel. Years as loyal customers and this time around they get a "crippled" part to call a flagship. Look at what the state of the high end CPU market is like. At this point Intel is dominating and there is really no incentive for them to do a completely different chip when a "crippled" Xeon can run circles around the best AMD has to offer. From their point of view this is the most economic way to do business. But yes, meh indeed when you already have an i7 2600K running smoothly.

adamantinepiggy - Monday, November 14, 2011

Or will there be a desktop version again without ECC support and a workstation Xeon version that does? (890x/990x vs x5680 Xeon) I'll take ECC support for RAM over faster RAM with eight populated slots please. The larger and larger memory amounts mean more likelihood of bit errors, but for two generations of CPUs from Intel, no ECC RAM support on the CPU memory controller.

hechacker1 - Monday, November 14, 2011

I agree. With massive amounts of memory that you could potentially put onto this platform, I'd really like to have a version with ECC for the workstation.

BSMonitor - Monday, November 14, 2011

I understand the need to keep news positive for AMD. Competition and all. However, repeatedly stating over time that they are competitive on price is kind of misleading in the grand scheme. Each new CPU arch. from Intel yields double digit performance gains (lately). AMD's are often delayed and in BD's case yield backwards results in many benchmarks.

The truth is, clock for clock, given as many transistors, given as much power and heat, AMD is grossly not competitive.

The ONLY reason one can say that their chips are competitive in relation to price is that they have NO other choice but to sell them at that price. AMD looks at where its new CPUs sit in terms of performance relative to Intel's lineup and prices accordingly. With as many R&D $$, transistors, etc. as go into each FX-8150, the flagship CPU should be at least competing with the 2600K, 990X, etc. of the world. That would either force Intel to lower the $1000 tag on SNB-E or allow AMD a $1000 alternative.

However, all we get from AMD is mediocre, late to market attempts to "catch-up". My point: AMD needs a new infusion of engineers and/or a new approach. A complete new idea/redesign/etc.

Let's face it, the x86 market is now Intel x86. Perhaps AMD should take what it knows in processor design and embrace a new idea. Maybe a mixed ARM/x86 or an enhanced ARM 64-bit for desktop PCs. Something to stand out and deliver on. Pure x86, AMD is falling further behind. BD did not even catch up to 4 core SNB. And Ivy Bridge is being held back, as there is no real competition for it. The landscape looks like AMD will be out of the desktop CPU space within a year or two. Or at least relegated to Cyrix status from the 2000's.

bji - Monday, November 14, 2011

Your point about AMD's prices doesn't make any sense. You're saying that AMD is not a good value because it is selling its chips at a price that makes them a good value rather than making faster chips and selling them for more money like Intel does?!?

Since when does "a good price:performance ratio" not equal "a good value" just because the CPU vendor doesn't have high (or any!) profit margins?