Intel Core i7 3960X (Sandy Bridge E) Review: Keeping the High End Alive

by Anand Lal Shimpi on November 14, 2011 3:01 AM EST. Posted in CPUs, Intel, Core i7, Sandy Bridge, Sandy Bridge E

No Integrated Graphics, No Quick Sync

All of this growth in die area comes at the expense of one of Sandy Bridge's greatest assets: its integrated graphics core. SNB-E features no on-die GPU, and as a result it does not feature Quick Sync either. Remember that Quick Sync leverages the GPU's shader array to accelerate part of the transcode pipeline; without a GPU on SNB-E, there's no Quick Sync.

Given the target market for SNB-E's die donor (Xeon servers), further increasing the die area by including an on-die GPU doesn't seem to make sense. Unfortunately desktop users suffer as you lose a very efficient way to transcode videos. Intel argues that you do have more cores to chew through frames with, but the fact remains that Quick Sync frees up your cores to do other things while SNB-E requires that they're all tied up in (quickly) transcoding video. If you don't run any Quick Sync enabled transcoding applications, you won't miss the feature on SNB-E. If you do however, this will be a tradeoff you'll have to come to terms with.

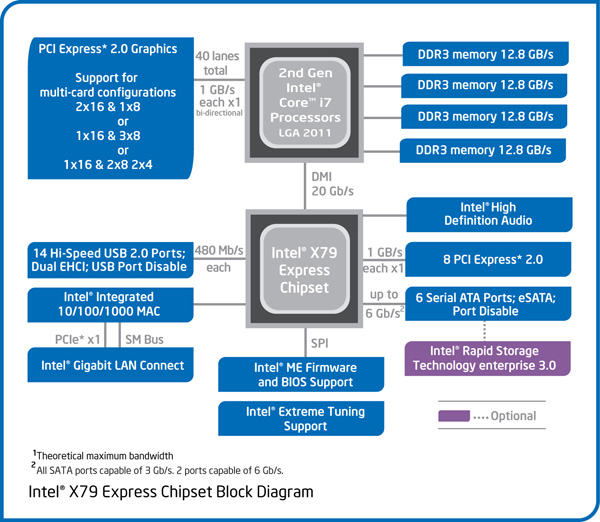

Tons of PCIe and Memory Bandwidth

Occupying the die area where the GPU would normally be is SNB-E's new memory controller. While its predecessor featured a fairly standard dual-channel DDR3 memory controller, SNB-E features four 64-bit DDR3 memory channels. With a single DDR3 DIMM per channel Intel officially supports speeds of up to DDR3-1600; with two DIMMs per channel the maximum official speed drops to DDR3-1333.

With a quad-channel memory controller you'll have to install DIMMs four at a time to take full advantage of the bandwidth. In response, memory vendors are selling 4 and 8 DIMM kits specifically for SNB-E systems. Most high-end X79 motherboards feature 8 DIMM slots (2 per channel). Just as with previous architectures, installing fewer DIMMs is possible; it simply reduces the peak available memory bandwidth.
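To put rough numbers on the channel counts and speeds above, here is a back-of-the-envelope sketch. It assumes the usual DDR3 naming, where DDR3-1600 means 1600 million transfers per second, and it computes theoretical peaks only; real-world throughput will be lower. The function name `peak_bandwidth_gbs` is made up for this illustration.

```python
def peak_bandwidth_gbs(channels: int, mega_transfers: int, bus_width_bits: int = 64) -> float:
    """Theoretical peak memory bandwidth in GB/s (1 GB = 10^9 bytes)."""
    bytes_per_transfer = bus_width_bits // 8  # a 64-bit channel moves 8 bytes per transfer
    megabytes_per_second = channels * bytes_per_transfer * mega_transfers
    return megabytes_per_second / 1000


if __name__ == "__main__":
    # Quad-channel DDR3-1600 (one DIMM per channel)
    print(f"{peak_bandwidth_gbs(4, 1600):.1f} GB/s")  # 51.2 GB/s
    # Quad-channel DDR3-1333 (two DIMMs per channel)
    print(f"{peak_bandwidth_gbs(4, 1333):.1f} GB/s")  # 42.7 GB/s
    # Only two of the four channels populated, DDR3-1600
    print(f"{peak_bandwidth_gbs(2, 1600):.1f} GB/s")  # 25.6 GB/s
```

Populating only two channels halves the theoretical peak, which is why the 4 and 8 DIMM kits matter if you care about bandwidth.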

Intel increased bandwidth on the other side of the chip as well. A single SNB-E CPU features 40 PCIe lanes that are compliant with rev 3.0 of the PCI Express Base Specification (aka PCIe 3.0). With no PCIe 3.0 GPUs available (yet) to test and validate the interface, Intel lists PCIe 3.0 support in the chip's datasheet but is publicly guaranteeing PCIe 2.0 speeds. Intel does add that some PCIe devices may be able to operate at Gen 3 speeds, but we'll have to wait and see once those devices hit the market.
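For a sense of scale, the sketch below estimates theoretical per-direction link bandwidth for different lane counts. The signaling rates and line encodings (5 GT/s with 8b/10b for Gen 2, 8 GT/s with 128b/130b for Gen 3) come from the PCI Express specifications rather than from Intel's SNB-E material, and the figures ignore protocol overhead, so treat them as upper bounds. The names `PCIE_GENERATIONS` and `link_bandwidth_gbs` are hypothetical.

```python
# generation -> (transfer rate in GT/s, payload bits, total bits per encoded symbol group)
PCIE_GENERATIONS = {
    2: (5.0, 8, 10),     # PCIe 2.0: 8b/10b encoding
    3: (8.0, 128, 130),  # PCIe 3.0: 128b/130b encoding
}


def link_bandwidth_gbs(generation: int, lanes: int) -> float:
    """Theoretical one-direction bandwidth of a PCIe link in GB/s."""
    rate_gt, payload_bits, total_bits = PCIE_GENERATIONS[generation]
    usable_gbits_per_lane = rate_gt * payload_bits / total_bits
    return lanes * usable_gbits_per_lane / 8  # bits -> bytes


if __name__ == "__main__":
    print(f"Gen 2 x8:  {link_bandwidth_gbs(2, 8):.2f} GB/s")   # 4.00 GB/s
    print(f"Gen 2 x16: {link_bandwidth_gbs(2, 16):.2f} GB/s")  # 8.00 GB/s
    print(f"Gen 3 x16: {link_bandwidth_gbs(3, 16):.2f} GB/s")  # 15.75 GB/s
    print(f"Gen 2 x40: {link_bandwidth_gbs(2, 40):.2f} GB/s")  # 20.00 GB/s
```

At the guaranteed Gen 2 speeds, all 40 lanes together work out to roughly 20 GB/s in each direction.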

The PCIe lanes off the CPU are quite configurable as you can see from the diagram above. Users running dual-GPU setups can enjoy the fact that both GPUs will have a full x16 interface to SNB-E (vs x8 in SNB). If you're looking for this to deliver a tangible performance increase, you'll be disappointed:

Multi GPU Scaling - Radeon HD 5870 CF

| Max Quality, 4X AA/16X AF | Metro 2033 (19x12) | Crysis: Warhead (19x12) | Crysis: Warhead (25x16) |
|---|---|---|---|
| Intel Core i7 3960X (2 x16) | 1.87x | 1.80x | 1.90x |
| Intel Core i7 2600K (2 x8) | 1.94x | 1.80x | 1.88x |

Modern GPUs don't lose much performance in games, even at high quality settings, when going from a x16 to a x8 slot.

I tested PCIe performance with an OCZ Z-Drive R4 PCIe SSD to ensure nothing was lost in the move to the new architecture. Compared to X58, I saw no real deltas in transfers to/from the Z-Drive R4:

PCI Express Performance - OCZ Z-Drive R4, Large Block Sequential Speed - ATTO

| | Intel X58 | Intel X79 |
|---|---|---|
| Read | 2.62 GB/s | 2.66 GB/s |
| Write | 2.49 GB/s | 2.50 GB/s |

The Letdown: No SAS, No Native USB 3.0

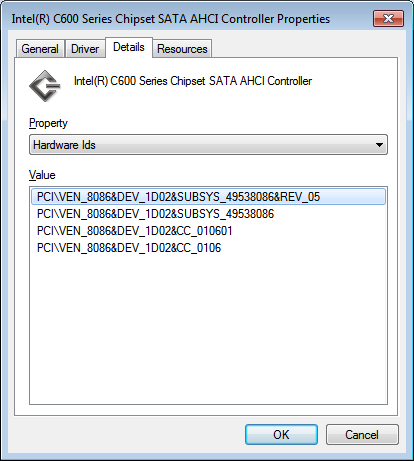

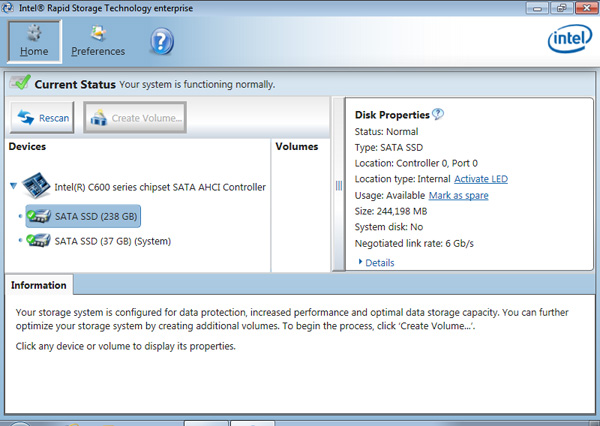

Intel's current RST (Rapid Storage Technology) drivers don't support X79; however, Intel's RSTe (for enterprise) 3.0 will support the platform once available. We got our hands on an engineering build of the software, which identifies the X79's SATA controller as an Intel C600.

Intel's enterprise chipsets use the Cxxx nomenclature, so this label makes sense. A quick look at Intel's RSTe readme tells us a little more about Intel's C600 controller:

SCU Controllers:

- Intel(R) C600 series chipset SAS RAID (SATA mode) Controller

- Intel C600 series chipset SAS RAID Controller

SATA RAID Controllers:

- Intel(R) C600 series chipset SATA RAID Controller

SATA AHCI Controllers:

- Intel(R) C600 series chipset SATA AHCI Controller

As was originally rumored, X79 was supposed to support both SATA and SAS. Issues with the implementation of the latter forced Intel to kill SAS support and go with the same 4+2 3Gbps/6Gbps SATA implementation 6-series chipset users get. I would've at least liked to have had more 6Gbps SATA ports. It's quite disappointing to see Intel's flagship chipset lacking feature parity with AMD's year-old 8-series chipsets.

I ran a sanity test on Intel's X79 against some of our H67 data for SATA performance with a Crucial m4 SSD. It looks like 6Gbps SATA performance is identical to the mainstream Sandy Bridge platform:

6Gbps SATA Performance - Crucial m4 256GB (FW0009)

| | 4KB Random Write (8GB LBA, QD32) | 4KB Random Read (100% LBA, QD3) | 128KB Sequential Write | 128KB Sequential Read |
|---|---|---|---|---|
| Intel X79 | 231.4 MB/s | 57.6 MB/s | 273.3 MB/s | 381.7 MB/s |
| Intel Z68 | 234.0 MB/s | 59.0 MB/s | 269.7 MB/s | 372.1 MB/s |

Intel still hasn't delivered an integrated USB 3.0 controller in X79. Motherboard manufacturers will continue to use 3rd party solutions to enable USB 3.0 support.

163 Comments

Valitri - Wednesday, November 16, 2011

Good review as always.

Turns out to be slightly less than I was expecting. The performance "jump" from an 1155 SB just isn't there for generic enthusiasts and gamers. Perhaps encoders, renderers, and mathematicians will enjoy the performance but it doesn't do much for me. Makes me very happy I stepped to a 2500k and I look forward to Ivy Bridge early next year.

Gonemad - Wednesday, November 16, 2011

If there are 16GB DIMMs, and this sucker has 8 DIMMs sockets... 128GB in a home system... hmmm. It makes SSDs all the less appealing. (Specially because you just blew lots of money in DIMM memory, but still...). Pop in a Ramdrive, wait 5 minutes to boot... don't wait anymore. I can see some specific usage that could benefit of this kind of storage subsystem speed. Even if it is a 'tiny' 64GB ramdrive.

It may not entirely replace a small SSD, but you can do some neat tricks with that kind of RAM at home. I know only one module is many times more expensive than a SSD, but just the fact that you can do it is remarkable.

Too bad this chip costs a lot, and IT. IS. HUGE. The thing has the size of a cup-holder, or at least the socket. With that amount of die you could build 2 * i7- 2600k and with the amount of money you blow on one, you can still pay for 3 * i7s.

Oh yes, check for yourselves. That's your premium profit margin right there.

This sucker has 435mm2 while the Sandy Bridge 4c has 216mm2. Twice more!

This behemoth will nick your pockets in $999, when a i7-2600k cuts you $317.

Nearly 3 times more. More than 3 times in fact. It is almost pi() times more. Wait, it is pi times more expensive, up to the third decimal. Hmm. I bet you are paying for the lost wafer too. Or it is just a wild coincidence. It doesn't perform twice as better, only 50% better, in some benchies. And it is so big that you can almost call it a TILE, not a CHIP. I am betting that on the same die you build 3 * 2600k, you can build only 2 of these and lose the difference. It should squash the competition. It is a bomb.

Some chip.

Diminishing returns indeed.

Wolfpup - Wednesday, November 16, 2011

"All of this growth in die area comes at the expense of one of Sandy Bridge's greatest assets: its integrated graphics core"

Whaaaaat? Greatest assets? It's a waste of space. It should be used for more cache or another core or whatever on the quad version. I can't believe this site...Anandtech of all places...has ANYTHING positive to say about integrated graphics!~

noeldillabough - Wednesday, November 16, 2011

For laptops the integrated graphics is AWESOME however on my gaming machine with top end graphics cards eating space for integrated graphics seems silly.

jmelgaard - Thursday, November 17, 2011

The fact that you still talk about X number of cores shows you haven't understood my posts.

Your thinking: "How many cores can I make my game utilize"

My model: "How many small enough jobs of processing can I split my game up into"

Number of cores has no relevance in modern architectures, while in a Game engine you probably want to take control over the execution of those jobs, priority jobs etc.

The funny thing is, your BF3 already runs on 500+ cores when it comes to the rendering, lighting, polygon transformations and so on... All by chopping the big job of rendering a screen into little bits of work... just like i suggest you can do with the rest of a game, just like we do with so many other applications today.

"I doubt it. There's a reason why game engines are modified as they get older."

Almost every single corp only sees ahead to the next budget year...

seapeople - Saturday, November 19, 2011

Of course it's inevitable you would resort to personal attacks and profanity in an argument you are losing.

It's a different mindset... do you think graphics work is programmed by thinking "Ok, today's GPU's have 500 cores, so let's optimize our game to use exactly 500 threads..."

abhicherath - Sunday, November 20, 2011

Why?

Why are those 2 fused off....seriously for a 1000 buck CPU, you don't expect intel to hold stuff back....gosh, this is competition crap. If AMD's bulldozers were powerful as hell and outperformed the i7's i sure as hell expect that those 2 cores would be active....what's your opinion?

jmelgaard - Sunday, November 20, 2011

"This doesn't involve a diatribe about number of cores in modern architectures."

What what?... Do you even know what you are writing anymore?

What I am talking about is software architecture, which is highly relevant to the discussion.

Flerp - Sunday, November 20, 2011

Even though there are very healthy gains in specific areas, I find the Sandy E to be a bit underwhelming, especially compared to how badly the X58 slaughtered the 775 platforms when it made its debut. I guess I'll be holding on to my X58 platform for another year or so and see what kind of improvements Ivy will bring.

jmelgaard - Monday, November 21, 2011

@rarson

The whole reason I began to talk about software architectures is because you are so hell-bent on sticking to your idea of "optimizing to a number of cores". I had to have you realize that you need to let go of that idea; your refusal to do so only makes me hope that you don't actually work with software development. No offence intended, because I would never be fit building a house either, it's not my field.

If you had ever gotten to understand that, the next discussion would be if it was beneficial to adopt this strategy within games, if it was viable and if it had a ROI that was worth pursuing if you could choose outside the bounds of this years budget, would it be an architecture that might cost us 2 to 3 times to pursue now, but saved us 20% development costs on our next games or engines for the next 10 years.

Of-course this could be a swing-and-miss if someone revolutionized how we look at our processors, much like they have done with GPU's, but as we have "barely" entered the multi-core-cpu-era, I don't expect this to happen within the next 10 years.

However that is all irrelevant because 10 years is not the time-frame, it is not even 5 or 3... the time-frame is a year at a time, and the cheapest solution in the time-frame of this years budget, that's the chosen one, that's the reason you are looking for, that is why they do it. And this is how almost, if not all, stock-based corporation operates. Why?... Because they have to satisfy stockholders... There is no other reason or rationalizations behind it.

DICE itself is not a stock-based company, but it is fully owned by Electronic Arts, which is. And so EA's financial numbers are directly impacted by DICE as it counts towards EA's assets (not necessarily Revenue though).

With that, I am done with you, your best argument seems to be "They did it, so that must be the right thing to do"... When was anyone's choice ever evidence of it being the best one?