Bulldozer for Servers: Testing AMD's "Interlagos" Opteron 6200 Series

by Johan De Gelas on November 15, 2011 5:09 PM EST

Maxwell Render Suite

The developers of Maxwell Render Suite, Next Limit, aim to deliver a renderer that is physically correct and capable of simulating light exactly as it behaves in the real world. As a result, their software has developed a reputation for being powerful but slow. And "powerful but slow" always attracts our interest, as such software can make for an interesting benchmark on the latest CPU platforms. Maxwell Render 2.6 was released less than two weeks ago, on November 2, and that is the version we used.

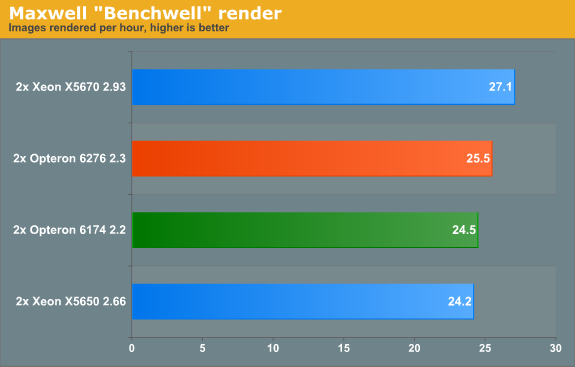

We used the "Benchwell" benchmark, a scene with HDRI (high dynamic range imaging) developed by the user community. Note that we used the "30 day trial" version of Maxwell. We converted the reported render time into images rendered per hour to make the numbers easier to interpret.
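As a rough sketch of that conversion: the function name and the 150-second render time below are ours, made up for illustration, and are not measured values from this review.

```python
def images_per_hour(render_seconds: float) -> float:
    """Convert the time to render one image into images rendered per hour."""
    if render_seconds <= 0:
        raise ValueError("render time must be positive")
    return 3600.0 / render_seconds


# Hypothetical example: a scene that takes 150 seconds to render.
example_rate = images_per_hour(150.0)
print(f"{example_rate:.1f} images per hour")
```

Expressed this way, a shorter render time gives a larger number, so higher is better in the charts.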

Since Magny-Cours made its entrance, AMD has done rather well in the rendering benchmarks, and Maxwell is no different. The Bulldozer-based Opteron 6276 gives decent but hardly stunning performance: about 4% faster than its predecessor. Interestingly, the Maxwell renderer is not limited by SSE (floating point) performance. When we disabled CMT, the Opteron 6276 delivered only 17 images per hour. In other words, the extra integer cluster delivers 44% higher performance. Disabling CMT also disables the second load/store unit, and there is a good chance that this is the reason the second integer cluster makes such a difference.
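The CMT arithmetic can be checked with a few lines. This is only a sketch: the helper name is ours, and we assume the 17 quoted above is on the same images-per-hour scale as the rest of the Maxwell results.

```python
def with_gain(baseline: float, gain_percent: float) -> float:
    """Scale a baseline score up by a percentage gain."""
    return baseline * (1.0 + gain_percent / 100.0)


cmt_off = 17.0   # score quoted in the text with CMT disabled
cmt_gain = 44.0  # percent gain attributed to the second integer cluster
cmt_on = with_gain(cmt_off, cmt_gain)
print(f"CMT enabled: about {cmt_on:.1f}")
```

So a 44% gain on a score of 17 puts the CMT-enabled Opteron 6276 at roughly 24.5 on the same scale.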

Rendering: Blender 2.6.0

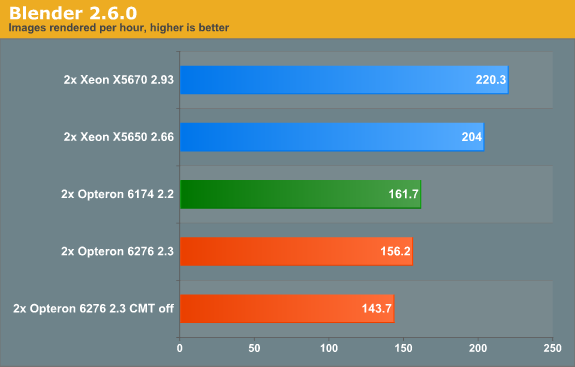

Blender is a very popular open source renderer with a large community. We tested with the 64-bit Windows version 2.6.0a. If you like, you can easily run this benchmark yourself. We used the metallic robot, a scene with rather complex lighting (reflections) and raytracing. To make the benchmark more repeatable, we changed the following parameters:

- The resolution was set to 2560x1600

- Antialiasing was set to 16

- We disabled compositing in post processing

- Tiles were set to 8x8 (X=8, Y=8)

- Threads were set to auto (one thread per CPU).
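For readers who prefer to script these settings rather than click through the UI, the list above could be captured roughly as follows. This is a sketch under assumptions: the property names are what we believe Blender 2.6x exposes on `bpy.context.scene.render`, and the `apply_settings` helper is hypothetical. Check the names against the Python API of your Blender build before relying on them.

```python
from types import SimpleNamespace

# Assumed Blender 2.6x RenderSettings property names (not verified here).
BENCH_SETTINGS = {
    "resolution_x": 2560,
    "resolution_y": 1600,
    "use_antialiasing": True,
    "antialiasing_samples": "16",  # assumed to be a string enum in 2.6x
    "use_compositing": False,      # compositing disabled in post processing
    "parts_x": 8,                  # tiles in X
    "parts_y": 8,                  # tiles in Y
    "threads_mode": "AUTO",        # one thread per CPU
}


def apply_settings(render, settings=BENCH_SETTINGS):
    """Copy each benchmark setting onto a render-settings-like object."""
    for name, value in settings.items():
        setattr(render, name, value)
    return render


# Inside Blender one would pass bpy.context.scene.render instead;
# a plain namespace stands in for it here so the sketch runs anywhere.
demo = apply_settings(SimpleNamespace())
print(demo.resolution_x, demo.resolution_y, demo.threads_mode)
```

Keeping the settings in one dictionary makes it easy to confirm that every run uses the same parameters.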

To make the results easier to read, we again converted the reported render time into images rendered per hour, so higher is better.

Last time we checked (Blender 2.5a2) in Windows, the Xeon X5670 was capable of 136 images per hour, while the Opteron 6174 did 113, so the Xeon was about 20% faster. Now the gap has widened: the Xeon is 36% faster. Interestingly, we discovered that the Opteron is quite a bit faster when benchmarked in Linux. We will follow up with some Linux numbers in the next article. In this benchmark the Opteron 6276 is 4% slower than its older brother, again likely due in part to the newness of its architecture.
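The "about 20%" figure for the older Blender 2.5a2 run follows directly from the two scores quoted above. A minimal check, with a helper name of our own choosing:

```python
def percent_faster(fast: float, slow: float) -> float:
    """Return how much faster `fast` is than `slow`, in percent."""
    return (fast / slow - 1.0) * 100.0


xeon_x5670 = 136.0    # images per hour, Blender 2.5a2 on Windows
opteron_6174 = 113.0  # images per hour, Blender 2.5a2 on Windows
old_gap = percent_faster(xeon_x5670, opteron_6174)
print(f"Xeon lead in the older test: {old_gap:.1f}%")
```

The result is a little over 20%, which is the baseline against which the current 36% gap should be read.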

106 Comments


zappb - Tuesday, November 29, 2011

Thanks, Johan, for the ungodly amount of time you and your team spent on this review. Thanks also to all the contributors in the comments, which were very useful for giving more context to someone like myself who is not very up to speed with server tech.

The 45 watt and 65 watt Opterons are not mentioned on the front page of the article but are mentioned in the comments. Are these based on Interlagos?

To me it looks like a big win for AMD, and these benchmarks are not even optimised for the architecture (Linux kernel 3 was not used). Can't wait to see updated benchmarks, something like FreeBSD, or when we get an updated scheduler for the Windows Server OSes... that should make a big difference.

Really low idle power consumption is nice, and I'm planning to pick one of these up (for home use) to play around with FreeBSD, VMs, etc., just for training purposes.

The other point is about Intel's Sandy Bridge Xeons: these are just going to be 8-core 3960Xs, right? That may not change the current server landscape very much, depending on their prices.

JWesterby - Friday, February 10, 2012

Respect is due, Johan! You did a very useful review under significant limitations. The very best part is to point an unbiased light at a damned interesting CPU. There is an important "next step," which I will address shortly.

As always, just the mention of AMD brings out hysterical attacks. One would think we were talking about stem cell research!! There is no real discussion -- it's pitchforks, lit torches, and a stake ready for poor Johan and anyone else ready and willing to consider the mere possibility that AMD have produced worthy technology!!

Computer technology - doing it, anyway - has changed. It's become ALL about the bloody money, and the "culture" of the people doing technology has also changed -- it has become much more cut-throat, there is far less collegiality, and people willing to take risks on projects have become really uncommon. Qualified people doing serious technology just because they can are uncommon.

There is no end to posers (including some on this board), Machiavellian Fortune 500 IT managers, and "Project Managers" who are clueless (there ARE some great IT managers and wonderful PMs, but their numbers are shrinking). My hat is off to those in Open Source - they are the Last Bastion of decency for the sake of decency, and technology merely for the joy of doing it!!

"Back in the day" people seemed really into the technology, solving difficult problems, and making good things happen. There was truly a culture. For example, not taking a moment to help someone on the team made you a jerk. Development was a craft or an art, and we were all in it together. We are losing that, and it's become more dog-eat-dog with a kind of mean spirit. What a shame. Many of the comments here are perfect examples -- people who would rather burn down the temple than give a new and challenging technology a good think.

Personally I can't wait to get my hands on a couple of AMD's new CPUs, build a decent server, and carefully work out the issues with patience. These new Opterons are like a whole new tech that may be the beginning of all new territory.

My passion and some professional work is coding at the back end in C/C++, and I'm just beginning to understand CUDA and using GPUs to beef up parallel code. My work is all around (big) data warehousing, cutting edge columnar databases, almost everything running virtual, all the way through to analytics on BI platforms. I do all of that on MS Server 2008, Solaris, and FreeBSD. All that is a perfect environment to test AMD's "new territory."

Probably worth a blog at some point because these processors are new territory and using them well will take some work just keeping track of all the small things that shake out. That's the "next step" that this and other reviews require to really understand AMD's Bulldozers. Doing that well, if AMD is right with these chips, means being able to build some great back-end servers at a much more approachable price; more importantly without paying an "Intel" tax, and in the end having two strong vendors and thereby more freedom to make the best choice for the requirement.

PhotoPrint - Sunday, December 25, 2011

You should make a fair comparison at the same price range! It's like comparing a GTX 580 vs. an AMD Radeon 6950!

g101 - Wednesday, January 11, 2012

Wow, Anand let the truth about Bulldozer leak out.

ppennisi - Wednesday, March 7, 2012

To obtain maximum performance from my Dell R715 server equipped with dual Interlagos processors, I had to DISABLE C1E in the BIOS.

Under VMware the machine's performance changed completely, almost doubling.

Maybe you should try it.

anti_shill - Monday, April 2, 2012

Here's a more accurate reflection of Bulldozer/Interlagos performance, untainted by Intel ad bucks...

http://www.phoronix.com/scan.php?page=article&...

But if you really want to see what the true story is, have a look at AMD's stock price lately, and their server wins. They absolutely smoke Intel on virtualization, and anything that requires a lot of threads. It's not even close. That would be the reason this review pits Interlagos against an Intel processor that costs twice as much.