Apple iPhone 4S: Thoroughly Reviewed

by Anand Lal Shimpi & Brian Klug on October 31, 2011 7:45 PM EST

Battery Life

I'll begin this section with an admission: we need to update our battery life suite. When the very first iPhone launched, I introduced a web page loading test that simply cycled through a bunch of web pages, pausing on each one to simulate reading time (I measured how long it took me to read a typical content page and used that as the reading time). Our web browsing battery life test is largely dominated by the power consumption of the display, but it also wakes the CPU from its low power states and exercises the WiFi/cellular stacks.

The test has held up reasonably well over the years; however, it's getting a bit long in the tooth, especially given that mobile browsers have become more aggressive in caching content. The move to iOS 5 in particular hurt our web browsing test: it caches so much of the content of each page that our cellular results now closely mirror our WiFi results on the iPhone 4/4S. There's still a bit of a penalty to be paid over 3G, but not nearly as large as it would be in the real world. The test data is still valid; it's simply no longer representative of real world web browsing battery life, but rather a more academic look at very light (but continuous) smartphone usage.

Thankfully we do have other tools at our disposal until we update the web browsing suite. Brian Klug devised a hotspot test that really stresses the cellular baseband of these phones by constantly streaming content over the Internet, via the phone being tested, to a tethered notebook. Between our hotspot, web browsing and call tests we should be able to get a good idea of the overall performance of the iPhone 4S on battery.
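The page-cycling loop and the caching problem described above can be modeled in a few lines of Python. This is not our actual test harness. `browsing_pass`, the stand-in `fetch` function and the 20-second reading pause are hypothetical, and the dictionary cache is only a crude stand-in for what iOS 5's browser actually does. The sketch counts the bytes that would have to cross the radio, because that is where WiFi and 3G differ.

```python
import time
from typing import Callable, Dict, List, Optional


def browsing_pass(
    urls: List[str],
    fetch: Callable[[str], bytes],
    read_time_s: float,
    cache: Optional[Dict[str, bytes]] = None,
    pause: Callable[[float], None] = time.sleep,
) -> int:
    """Visit every page once, pausing after each to simulate reading time.

    Returns how many bytes had to come over the network (WiFi or cellular).
    Passing a dict as `cache` models an aggressively caching browser.
    """
    network_bytes = 0
    for url in urls:
        cached = cache is not None and url in cache
        if not cached:
            body = fetch(url)  # cache miss: the radio has to wake up
            network_bytes += len(body)
            if cache is not None:
                cache[url] = body
        # The page is on screen either way; the display stays lit while "reading".
        pause(read_time_s)
    return network_bytes


if __name__ == "__main__":
    pages = ["page-1", "page-2", "page-3"]   # hypothetical page list
    fake_fetch = lambda url: b"x" * 100_000  # stand-in for a real download
    skip_sleep = lambda seconds: None        # don't actually wait in a demo

    browser_cache: Dict[str, bytes] = {}
    for loop in range(3):
        sent = browsing_pass(pages, fake_fetch, 20.0, browser_cache, skip_sleep)
        print(f"loop {loop}: {sent} bytes over the network")
```

With the cache enabled, only the first loop touches the network and every later loop reports 0 bytes. The reading pauses, with the display on, still happen every time. That is roughly why cellular and WiFi results converge once the browser caches aggressively.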

Before we get to the results, let's talk a little bit about what we should see architecturally. As Brian already mentioned at the start of the review, battery capacity is up slightly in the iPhone 4S. The increase is marginal at best, on the order of 1%, so it shouldn't have a tangible impact on battery life.

The display is a major consumer of power, but with its specs unchanged since the iPhone 4, the 4S' panel shouldn't consume any more power than its predecessor. This leaves the A5 SoC and the Qualcomm MDM6610 baseband as the primary influencers on power consumption.

Process technology hasn't changed going from the A4 to the A5: as far as we know, both chips were built using Samsung's 45nm process. At the core level, a single ARM Cortex A9 core is about 10 - 50% faster than a Cortex A8 at the same frequency. Thankfully Apple kept frequency constant with the move to the A5 in the 4S, making this comparison a bit easier to make.

NVIDIA originally told me that the Cortex A9 was more power efficient than the A8 it replaced. The A9 has a shorter, more efficient pipeline and, in the case of the A5, isn't pushing ridiculous frequencies. Based on Apple's frequency targets alone I'd say that it's probably a safe bet that we're looking at a 45nm LP implementation.

Claiming the A9 is more power efficient than the A8 isn't enough, however. If we look at Larrabee and Intel's first five years of Atom, it's clear that when faced with the ultimate goal of minimizing power consumption, an in-order core is the way to go. In the ARM space, the recently announced Cortex A7 offers an additional datapoint: when ARM needed a low power core, it picked an in-order design with an 8-stage pipeline. The additional hardware required by an out-of-order (OoO) architecture consumes significant power, and the gains in performance aren't always enough to offset the corresponding increase in power.

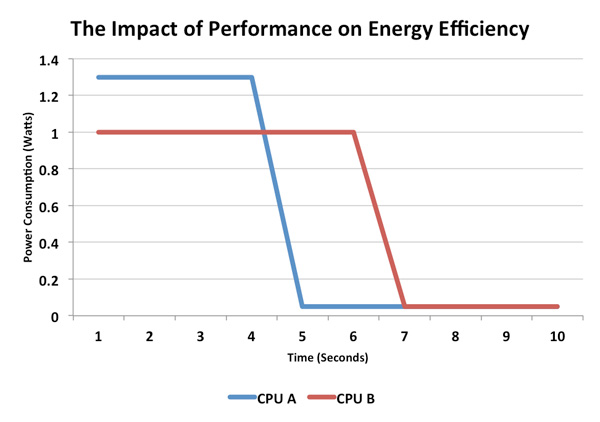

Why would being faster make a microprocessor use less power? The concept is called race to sleep. At idle the CPU in an SoC is mostly clock gated if not power gated entirely. In this deep sleep state, power draw is on the order of a few milliwatts. Under full load however, power consumption can be well above a watt. If a faster processor consumes more power under load but can get to sleep quicker, the power savings may give it an advantage over a slower processor. Consider the following examples:

Here we have two hypothetical CPUs, one with a max power draw of 1W and another with a max power draw of 1.3W. The 1.3W chip is faster under load, but it draws 30% more power. Running this completely made-up workload, the 1.3W chip completes the task in 4 seconds vs. 6 for its lower power predecessor, so the overall energy it consumes is lower. Another way to quantify this: over 10 seconds, CPU A (the 1.3W chip) consumes 5.5 joules vs. 6.2J for CPU B (the 1W chip), assuming both chips have the same 0.05W idle power consumption.

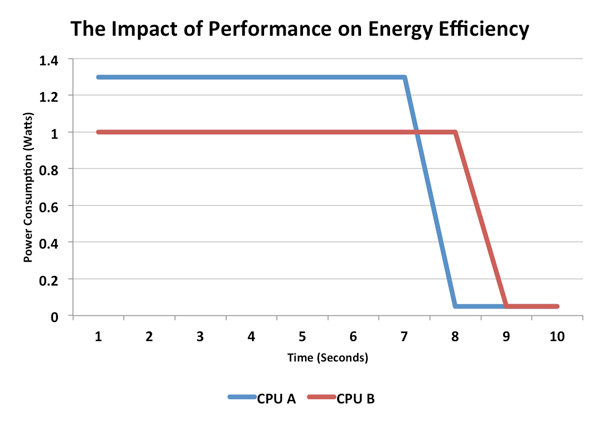

Now let's take the same two hypothetical CPUs and present them with a workload that doesn't scale nearly as well on the faster part:

Despite being faster, the 1.3W CPU isn't fast enough to overcome the 30% increase in power. Here CPU A consumes 9.25J vs. 8.1J for CPU B. Perhaps the faster CPU has more cores and the workload isn't well threaded, or maybe the workload is more optimized for the slower architecture. Regardless of the reason, this is just as valid a scenario.
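The arithmetic behind both race-to-sleep examples fits in a few lines of Python. This is a sketch of the simplified model used above: full active power while busy, a flat 0.05W while asleep, and a 10-second window. The text does not state the busy times for the second workload. The 7 s and 8 s used here were back-solved from the 9.25J and 8.1J totals under the same assumptions.

```python
def energy_joules(active_w: float, busy_s: float, idle_w: float, window_s: float) -> float:
    """Energy over a fixed window: busy at active_w, then asleep at idle_w."""
    if not 0 <= busy_s <= window_s:
        raise ValueError("busy time must fit inside the window")
    return active_w * busy_s + idle_w * (window_s - busy_s)


IDLE_W = 0.05    # both chips idle at 0.05W, as in the text
WINDOW_S = 10.0  # both examples are measured over 10 seconds

# (label, active power in W, busy seconds)
scenarios = {
    "workload 1": [("CPU A", 1.3, 4.0), ("CPU B", 1.0, 6.0)],
    # Busy times back-solved from the 9.25J / 8.1J figures (not stated in the text).
    "workload 2": [("CPU A", 1.3, 7.0), ("CPU B", 1.0, 8.0)],
}

for name, cpus in scenarios.items():
    for label, watts, busy in cpus:
        joules = energy_joules(watts, busy, IDLE_W, WINDOW_S)
        print(f"{name}, {label} ({watts}W, busy {busy:g}s): {joules:.2f} J")
```

Run as-is, it prints 5.50 J vs 6.20 J for the first workload and 9.25 J vs 8.10 J for the second.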

Albeit overly simplified, these two cases are examples of what could happen between the iPhone 4 and iPhone 4S. ARM hasn't published a lot of data comparing the Cortex A8 to A9, but ARM has publicly stated that a single A9 core can consume 10 - 20% more power than a single A8 core. If we assume those numbers are under max load, then the A9 simply needs to be more than 10 - 20% faster than the A8 in order to come out ahead. As we've already seen from some of our benchmarks, that's not too difficult, particularly in web browsing. But in other tests, the advantage is more marginal.
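Under the same simplified model, the break-even point can be written down directly. The higher-power core wins whenever its speedup (slow completion time divided by fast completion time) exceeds the ratio of the two load powers, each minus idle power. The Python sketch below treats idle power as negligible for the A8/A9 case, which is an assumption. For the second check it reuses the hypothetical 1.3W/1W chips, again with the back-solved 7 s vs 8 s timing for the second workload.

```python
def break_even_speedup(p_fast_w: float, p_slow_w: float, idle_w: float = 0.0) -> float:
    """Smallest speedup (t_slow / t_fast) at which the hungrier core uses less energy.

    From p_fast*t_fast + idle*(W - t_fast) < p_slow*t_slow + idle*(W - t_slow),
    the idle terms cancel down to (p_fast - idle)*t_fast < (p_slow - idle)*t_slow.
    """
    if p_slow_w <= idle_w:
        raise ValueError("load power must exceed idle power")
    return (p_fast_w - idle_w) / (p_slow_w - idle_w)


# A9 drawing 10% or 20% more than an A8 (A8 normalized to 1.0), idle ignored.
for extra in (0.10, 0.20):
    need = break_even_speedup(1.0 + extra, 1.0)
    print(f"+{extra:.0%} power -> must finish more than {need:.2f}x faster")

# Hypothetical 1.3W vs 1W chips idling at 0.05W.
need = break_even_speedup(1.3, 1.0, 0.05)
for name, t_fast, t_slow in [("workload 1", 4.0, 6.0), ("workload 2", 7.0, 8.0)]:
    speedup = t_slow / t_fast
    winner = "faster chip" if speedup > need else "slower chip"
    print(f"{name}: {speedup:.2f}x vs {need:.2f}x needed -> {winner} uses less energy")
```

With idle ignored, a 10-20% power increase needs more than a 1.10x-1.20x speedup. With 0.05W idle, the hypothetical pair needs about 1.32x. Workload 1 (1.50x) clears that bar and workload 2 (1.14x) does not.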

The comparison becomes more complex when you take into account that there are two Cortex A9s in Apple's A5 SoC vs. a single Cortex A8 in Apple's A4. This is potentially an advantage. A well threaded app could run both cores at a lower voltage/frequency combination, while the single core would have to run at its maximum voltage/frequency levels. The lower combination reduces power superlinearly, since dynamic power scales with frequency and with the square of voltage.
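Whether spreading work across two slower cores saves power comes down to how dynamic (switching) power scales: roughly P = C·V²·f, and lower clocks usually permit lower voltage. The Python sketch below is illustrative only. The capacitance and both voltage/frequency points are hypothetical, leakage is ignored, and it assumes a perfectly threaded app, so that two cores at 400MHz do the same work as one core at 800MHz.

```python
def dynamic_power_w(cap_farads: float, volts: float, freq_hz: float, cores: int = 1) -> float:
    """Switching power P = cores * C * V^2 * f. Leakage is ignored."""
    return cores * cap_farads * volts ** 2 * freq_hz


C_EFF = 1e-9  # hypothetical switched capacitance per core, in farads

# Hypothetical DVFS points, NOT Apple's real voltage/frequency table.
single = dynamic_power_w(C_EFF, 1.10, 800e6, cores=1)  # one core flat out
dual = dynamic_power_w(C_EFF, 0.90, 400e6, cores=2)    # two cores, half clock each

print(f"one core @ 800MHz, 1.10V: {single:.3f} W")
print(f"two cores @ 400MHz, 0.90V: {dual:.3f} W")
print(f"dual / single: {dual / single:.2f}")
```

With these made-up numbers, the dual-core configuration draws about two thirds of the single-core power (0.648W vs 0.968W). Halving frequency alone would only break even, so the saving comes entirely from the lower voltage.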

It's also possible that two cores would consume more power, but for that to happen you'd have to be running a heavily threaded app at full frequency for a considerable amount of time. To date I haven't seen many smartphone apps that would create such a scenario. It's akin to looping Cinebench on a quad-core vs. a dual-core part and noting a reduction in battery life for the quad-core CPU. Although the quad-core part completes each run more quickly, looping the test indefinitely means it never gets to sleep, so its speed is never an advantage for battery life.

I crudely measured power consumption on the iPhone 4 and 4S (both on AT&T) doing a variety of tasks. The granularity of my measurements is what makes them crude: I was limited to a resolution of 0.1W. This data would've been far more useful at 0.01W resolution, but we can still use it to get a general idea of power consumption on these two phones. I briefly contemplated inserting a multimeter in-line with the battery, but I chickened out, not wanting to risk damage to my phone or review device. I highlighted the obvious power advantages. Keep in mind, however, that some of these advantages may be smaller (or larger) than they appear due to the 0.1W resolution of my measurements:

| Power Consumption Comparison | Apple iPhone 4 (AT&T) | Apple iPhone 4S (AT&T) |
| --- | --- | --- |
| Idle | 0.7W | 0.7W |
| Launch Safari | 0.9W | 0.9W |
| Load AnandTech.com | 1.0W | 1.1W |
| Maps (Determine Current Location via GPS/WiFi) | 1.3W | 1.4W |

Power at idle and during application launches was pretty much unchanged between the two devices, which is to be expected. The 4S did draw measurably more power loading web pages. As we've already seen, however, the average performance gain in our web page loading tests was over 30%, easily making up for the increase in power draw here. Maps also pulled more power on the 4S.
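To see how much the 0.1W resolution can hide, the Python sketch below turns each pair of readings into a range for the true difference. It assumes the meter rounds to the nearest 0.1W. If the meter truncated instead, the range for a difference would come out the same.

```python
RESOLUTION_W = 0.1  # the meter's resolution, per the text


def difference_bounds(reading_a: float, reading_b: float, step: float = RESOLUTION_W):
    """Possible range of the true difference (b - a) between two rounded readings.

    Assumes each reading was rounded to the nearest `step`, so each true value
    lies within step/2 of what was displayed and the difference can be off by
    up to one full step either way.
    """
    shown = round(reading_b - reading_a, 6)  # tidy float noise like 0.0999999
    return shown - step, shown + step


measurements = {  # (iPhone 4, iPhone 4S) in watts, from the table above
    "Idle": (0.7, 0.7),
    "Launch Safari": (0.9, 0.9),
    "Load AnandTech.com": (1.0, 1.1),
    "Maps": (1.3, 1.4),
}

for task, (iphone4, iphone4s) in measurements.items():
    low, high = difference_bounds(iphone4, iphone4s)
    print(f"{task}: 4S - 4 is somewhere between {low:+.2f} W and {high:+.2f} W")
```

A 0.1W gap, such as those for Load AnandTech.com and Maps, is consistent with anything from essentially no difference up to 0.2W. The identical idle readings could still hide up to 0.1W either way.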

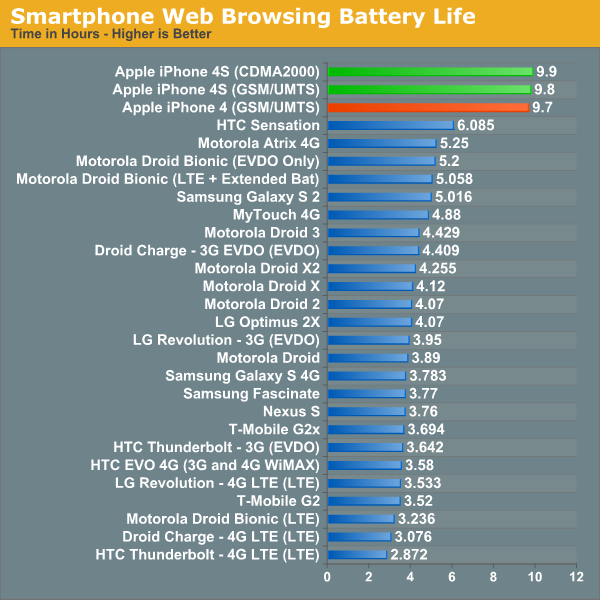

What does all of this mean? The iPhone 4S has the potential to have slightly better, equal or much worse battery life than the iPhone 4. It really depends on your workload. If you're mostly browsing the web, the 4S should be about equal to if not slightly better than the 4. Our numbers seem to back that up:

Even though the 3G results are skewed by an unrealistic amount of caching, the CPU still has to work to render and display each page. Since the workload remains the same between the iPhone 4 and 4S, the latter simply enjoys a performance improvement (pages load quicker) while extending battery life a bit thanks to being asleep for longer.

There is one caveat to web browsing battery life: the 4S will only last longer if you do the same amount of work on it. Typically, if web pages load quicker, you end up browsing more on the faster device than you would on the slower one. If you do browse more on the 4S as a result of its speed improvements, battery life won't be as good as it was on the 4. There's nothing you can do about this, as faster CPUs and faster Internet connections have always encouraged more browsing, but it's something to keep in mind if you make the upgrade.

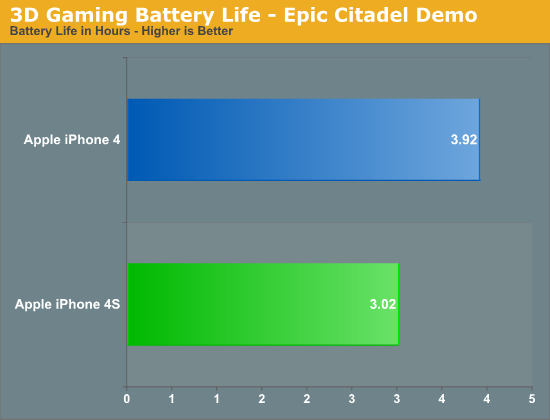

3D Gaming Battery Life

| Power Consumption Comparison | Apple iPhone 4 (AT&T) | Apple iPhone 4S (AT&T) |
| --- | --- | --- |
| Launch Infinity Blade | 2.2W | 2.6W |
| Infinity Blade (Opening Scene, Steady State) | 2.0W | 2.2W |

Infinity Blade is a GPU intensive 3D game, which keeps the GPU busy on both SoCs. Given the beefier GPU in the 4S, much higher power consumption here isn't unexpected. Battery capacities haven't really changed, and the 4S does draw significantly more power under heavy GPU load (even when limited by Vsync), so you can expect lower battery life when running GPU intensive 3D games. To put some real world numbers to the data, I ran a loop of Epic's Citadel demo on both the 4 and 4S until both phones died:

The iPhone 4 lasted around 30% longer in our GPU test than the iPhone 4S. This is actually a trend we have seen before: with the move to the 3GS we noted a similar impact on battery life compared to the previous iPhone 3G. If you're going to do any heavy 3D gaming, expect the iPhone 4S to burn through your battery quicker, although you will have a better experience on the 4S thanks to a smoother frame rate. Note that for sufficiently light 3D workloads (e.g. where the iPhone 4 is already bumping into Vsync), it's unlikely that you'll see much of a difference in battery life between the two phones. Citadel is simply too strenuous a test for the 4. What really penalizes the 4S is its ability to run at nearly 2x the frame rate of the 4.
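One simple way to think about Vsync: the delivered frame rate is capped at the display refresh, and a GPU that could go faster spends the rest of each frame interval idle. The Python sketch below uses that simplified model with hypothetical frame rates. It ignores everything else that draws power during a game.

```python
REFRESH_FPS = 60.0  # Vsync caps delivered frames at the display refresh rate


def vsync_model(capable_fps: float, refresh_fps: float = REFRESH_FPS):
    """Return (delivered fps, fraction of time the GPU is busy).

    Simplified model: the GPU renders as fast as it can until it hits the
    refresh cap, then idles for the rest of each frame interval.
    """
    if capable_fps <= 0:
        raise ValueError("capable_fps must be positive")
    delivered = min(capable_fps, refresh_fps)
    busy = delivered / capable_fps
    return delivered, busy


# Hypothetical frame rates for an older GPU and one roughly twice as fast.
for game, old_gpu, new_gpu in [("light game", 90.0, 180.0), ("heavy demo", 20.0, 38.0)]:
    for label, fps in (("older GPU", old_gpu), ("newer GPU", new_gpu)):
        delivered, busy = vsync_model(fps)
        print(f"{game}, {label}: {delivered:.0f} fps shown, GPU busy {busy:.0%} of the time")
```

In the light case, both GPUs deliver 60 fps and the faster one is busy only a third of the time. In the heavy case, both are pegged at 100%, and the faster GPU spends that time rendering nearly twice as many frames. That is the situation described above for Citadel.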

| Power Consumption Comparison | Apple iPhone 4 (AT&T) | Apple iPhone 4S (AT&T) |
| --- | --- | --- |
| Launch iBooks | 1.3W | 1.2W |
| iBooks Page Turning Animation (Rapid Movement) | 1.6W | 1.5W |

If you're concerned that GPU acceleration throughout the OS will penalize the 4S, I wouldn't be too worried. The data above shows power consumption while running iBooks. For the second test I took a book page and quickly moved it left/right to trigger the ever impressive page turning animation. Doing so drove power consumption up, but the 4S consistently pulled less power than the iPhone 4. If you're going to be at the forefront of 3D gaming on iOS, the 4S won't last as long as its predecessor. For casual use, you should be just fine.

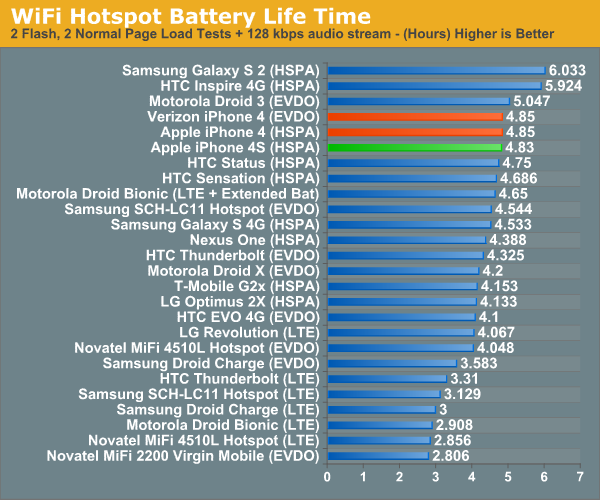

3G/WiFi Battery Life

I ran several speedtests in the same location on both 3G and WiFi to see if I could get a clear idea of whether or not the baseband and WiFi stack in the 4S were more power efficient than those in the 4. The results are unanimous, with the 4S proving more power efficient at uploading/downloading at the limits of 3G and WiFi:

| Power Consumption Comparison | Apple iPhone 4 (AT&T) | Apple iPhone 4S (AT&T) |
| --- | --- | --- |
| Speed Test (3G, Downstream) | 2.8W | 2.4W |
| Speed Test (3G, Upstream) | 3.0W | 2.8W |
| Speed Test (WiFi, Downstream) | 1.5W | 1.4W |
| Speed Test (WiFi, Upstream) | 1.6W | 1.4W |

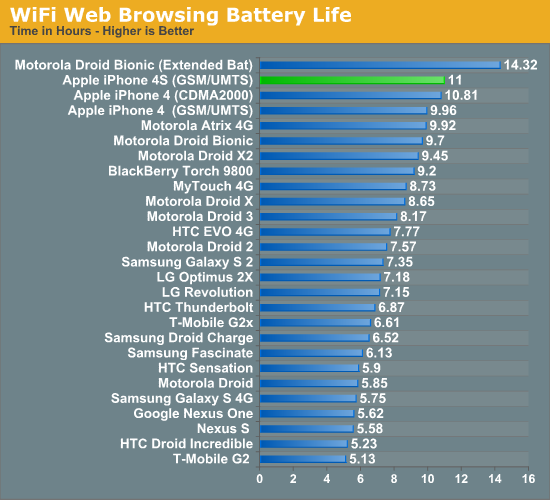

Our tethered test gives us a good idea of how quickly the 4S will die under moderate cellular data load. Apple's power advantages under iOS are due to wonderful management of idle time, similar to what we've seen with OS X vs. Windows 7. Under load, however, Apple is bound by the same physical realities as its competitors, and the question of battery life becomes one of battery capacity divided by peak power draw. Here the iPhone 4S does very well, but it's outpaced by the upper echelon of Android phones:

It is surprising that despite the peak power advantages above, we didn't see any improvement in our WiFi hotspot test. The only explanation I have is that the power advantage may not be as pronounced if we're not pushing the limits of the wireless interfaces.
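Under a constant load, "battery capacity divided by power draw" is literally the calculation. The Python sketch below first converts a mAh rating into watt-hours. The 1500mAh/3.7V cell and the 1.85W load are hypothetical round numbers, not the iPhone 4S' actual battery or any measurement from this review. Conversion losses and battery aging are ignored.

```python
def capacity_wh(rated_mah: float, nominal_v: float) -> float:
    """Convert a mAh rating at a nominal cell voltage into watt-hours."""
    return rated_mah * nominal_v / 1000.0


def runtime_hours(cap_wh: float, load_w: float) -> float:
    """Hours to empty at a constant load; ignores conversion losses and aging."""
    if load_w <= 0:
        raise ValueError("load must be positive")
    return cap_wh / load_w


battery = capacity_wh(1500, 3.7)  # hypothetical 1500mAh, 3.7V cell
print(f"{battery:.2f} Wh")
print(f"{runtime_hours(battery, 1.85):.1f} h at a steady 1.85 W")  # hypothetical load
# A ~1% larger battery buys ~1% more runtime at the same load.
print(f"{runtime_hours(battery * 1.01, 1.85) / runtime_hours(battery, 1.85):.3f}x")
```

It prints 5.55 Wh and 3.0 hours. The last line shows why the roughly 1% capacity increase in the 4S only buys about 1% more runtime at the same load.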

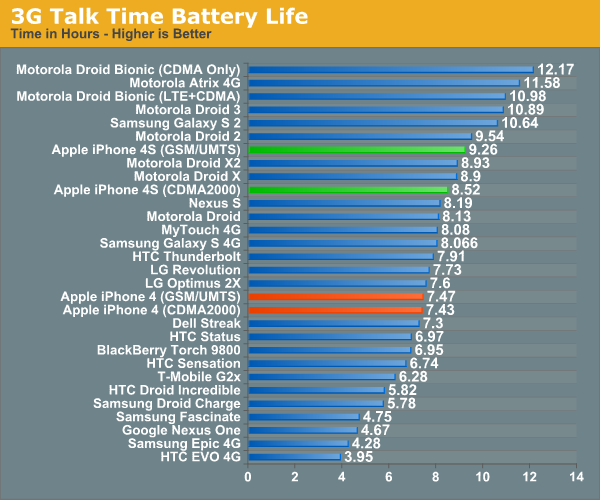

Call time, on the other hand, improves tangibly compared to the iPhone 4. As the screen is off and the CPU mostly idle during this test, it really just echoes the numbers we saw above. Qualcomm's MDM6610 seems to outclass the outgoing Infineon X-Gold baseband when it comes to power efficiency:

Based on the data we have here, I'd say Apple's claim of 8 hours of battery life is fairly realistic under some sort of continuous use/load. If you're constantly pulling data don't expect to see more than 5 hours, but if you're mostly reading/watching/consuming content you will get closer to 10 hours on the iPhone 4S. Call time falls at the longer end of the spectrum, but be warned: run a demanding 3D title and you'll see barely over 3 hours of use out of the iPhone 4S. It looks like any serious 3D gaming is going to have to be tethered or at least near a power outlet. The move to 28/32nm should buy us some more power headroom, but then again there are even faster GPUs just around the corner.
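The spread between these scenarios comes from how much time the phone spends at each power level: runtime is capacity divided by the time-weighted average power. The Python sketch below makes that explicit. The 5.4Wh capacity and both power levels are hypothetical and are not meant to reproduce the hour figures above.

```python
from typing import List, Tuple


def mixed_runtime_hours(cap_wh: float, mix: List[Tuple[float, float]]) -> float:
    """Runtime for a usage mix of (fraction_of_time, watts) pairs summing to 1."""
    if abs(sum(frac for frac, _ in mix) - 1.0) > 1e-9:
        raise ValueError("time fractions must sum to 1")
    average_w = sum(frac * watts for frac, watts in mix)  # time-weighted mean power
    return cap_wh / average_w


CAP_WH = 5.4  # hypothetical battery capacity

# Hypothetical power levels, not measurements from this review.
reading_mostly = [(0.75, 0.6), (0.25, 1.8)]  # 75% light use, 25% heavy data
data_only = [(1.0, 1.8)]                     # pulling data the whole time

print(f"mostly reading: {mixed_runtime_hours(CAP_WH, reading_mostly):.1f} h")
print(f"constant data:  {mixed_runtime_hours(CAP_WH, data_only):.1f} h")
```

With these numbers, spending a quarter of the time at the heavy load yields 6.0 hours. Staying at that load the whole time halves the runtime to 3.0 hours.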

Based on our data, concerns about the iPhone 4S' battery life seem unrelated to hardware. The raw power consumption numbers show a platform that's competitive with its predecessor in most areas, only really hurting when it comes to heavy 3D workloads. If you're seeing worse battery life on the 4S, the cause would appear to be software related. If you're seeing higher than normal power consumption, the best course of action would be to wipe the phone, set it up from scratch (no restore), remove and re-add all accounts, and reset network settings.

Moving forward, I wouldn't be too surprised to see battery life remain around this level for the near future without significant advancements in battery or process technology. As we look toward the next generation of microprocessor architectures, they are simply more robust out-of-order designs. As we've learned from the move to multi-core on the PC side, however, continued gains in single threaded performance become increasingly difficult to come by, particularly without expending a lot of energy. There is hope for an increase in efficiency via heterogeneous multiprocessing, but just how much that will buy us remains to be seen. Process technology and architecture are going to become even more important over the coming years in the mobile space.

Comments

doobydoo - Friday, December 2, 2011

It's still absolute nonsense to claim that the iPhone 4S can only use '2x' the power when it has available power of 7x.

Not only does the iPhone 4S support wireless streaming to TVs, making performance very important, there are also games ALREADY out which require this kind of GPU in order to run fast on the superior resolution of the iPhone 4S.

Not only that, but you failed to take into account the typical life-cycle of iPhones - this phone has to be capable of performing well for around a year.

The bottom line is that Apple really got one over all Android manufacturers with the GPU in the iPhone 4S - it's the best there is, in any phone, full stop. Trying to turn that into a criticism is outrageous.

PeteH - Tuesday, November 1, 2011

Actually it is about the architecture. How GPU performance scales with size is in large part dictated by the GPU architecture, and Imagination's architecture scales better than the other solutions.

loganin - Tuesday, November 1, 2011

And I showed above that Apple's chip isn't larger than Samsung's.

PeteH - Tuesday, November 1, 2011

But chip size isn't relevant, only GPU size is.

All I'm pointing out is that not all GPU architectures scale equivalently with size.

loganin - Tuesday, November 1, 2011

But you're comparing two different architectures here, not two chips carrying the same architecture, so the scalability doesn't really matter. Also, is Samsung's GPU significantly smaller than the A5's?

Now that we've gone back and forth about nothing, you can see the problem with Lucian's argument. It was simply an attempt to make Apple look bad, and the technical correctness didn't really matter.

PeteH - Tuesday, November 1, 2011

What I'm saying is that Lucian's assertion, that the A5's GPU is faster because it's bigger, ignores the fact that not all GPU architectures scale the same way with size. A GPU of the same size but with a different architecture would have worse performance because of this.

Put simply, architecture matters. You can't just throw silicon at a performance problem to fix it.

metafor - Tuesday, November 1, 2011

Well, you can. But it might be more efficient not to. At least with GPUs, putting two in there will pretty much double your performance on GPU-limited tasks.

This is true of desktops (SLI) as well as mobile.

Certain architectures are more area-efficient. But the point is, if all you care about is performance and can eat the die-area, you can just shove another GPU in there.

The same can't be said of CPU tasks, for example.

PeteH - Tuesday, November 1, 2011

I should have been clearer. You can always throw area at the problem, but the architecture dictates how much area is needed to add the desired performance, even on GPUs.

Compare the GeForce and the SGX architectures. The GeForce provides an equal number of vertex and pixel shader cores, and thus can only achieve theoretical maximum performance if it gets an even mix of vertex and pixel shader operations. The SGX, on the other hand, provides general purpose cores that can do either vertex or pixel shader operations.

This means that as the SGX adds cores its performance scales linearly under all scenarios, while the GeForce (which adds a vertex and a pixel shader core as a pair) gains only half the benefit under some conditions. Put simply, if a GeForce core is limited by the number of pixel shader cores available, the addition of a vertex shader core adds no benefit.

Throwing enough core pairs onto silicon will give you the performance you need, but not as efficiently as general purpose cores would. Of course a general purpose core architecture will be bigger, but that's a separate discussion.

metafor - Tuesday, November 1, 2011

I think you need to check your math. If you double the number of cores in a GeForce, you'll still gain 2x the relative performance.

Double is a multiplier, not an adder.

If a task was vertex-shader bound before, doubling the number of vertex-shaders (which comes with doubling the number of cores) will improve performance by 100%.

Of course, in the case of 543MP2, we're not just talking about doubling computational cores.

It's literally 2 GPUs (I don't think much is shared, maybe the various caches).

Think SLI but on silicon.

If you put 2 GeForce GPUs on a single die, the effect will be the same: double the performance for double the area.

Architecture dictates the perf/GPU. That doesn't mean you can't simply double it at any time to get double the performance.

PeteH - Tuesday, November 1, 2011

But I'm not talking about relative performance, I'm talking about performance per unit area added. When bound by one operation, adding a core that supports a different operation is wasted space.

So yes, doubling space always doubles relative performance, but adding 20 square millimeters means different things to the performance of different architectures.