Apple iPhone 4S: Thoroughly Reviewed

by Anand Lal Shimpi & Brian Klug on October 31, 2011 7:45 PM EST

Posted in: Smartphones, Apple, Mobile, iPhone, iPhone 4S

Camera Improvements

Arguably the second largest hardware change in the 4S (with the A5 SoC being the first and largest) is the inclusion of a much improved 8 MP camera. In case you’ve forgotten, the iPhone 4 included a 5 MP camera. Back when the 4 was introduced, Apple talked for the first time about backside illumination and pixel sizes. In a later update, the camera got even better with the ability to buffer three full size images and merge to HDR in real time. This time, Apple brought up F/# and backside illumination again, and added one more thing.

Though Apple never talked about any of the optical design for the iPhone 4 camera, to the best of my knowledge it was likely close to the four plastic element reference designs reported on a few lens lists. For the 4S, Apple has mixed things up by putting its own optical design front and center, and made special note of a five plastic element design. I’ve put together a table comparing the 4 and 4S based on what information is available.

Many have speculated that Apple is dual sourcing the CMOS sensor, which seems likely. Given the sensors out there, the two most likely choices are Omnivision’s OV8830 and Sony’s IMX105. Both have almost identical specifications, including 1.4µm pixels, a 1/3.2" format, and an improved backside illumination process over the previous generation wafer-scale process. Omnivision’s BSI-2 process cites some specifications that seem to line up with what Apple talked about in their presentation, including better quantum efficiency (ability to convert photons into electrons), low-light sensitivity, and larger well capacity (which translates to increased dynamic range). You’ll note that the 4S uses the same 1/3.2" sensor format as the previous generation but includes more pixels, which results in the pixel size going down from 1.75µm to 1.4µm.

iPhone 4 vs. 4S Cameras

| Property | iPhone 4 | iPhone 4S |
|---|---|---|
| CMOS Sensor | OV5650 | OV8830/IMX105 |
| Sensor Format | 1/3.2" (4.54 x 3.42 mm) | 1/3.2" (4.54 x 3.42 mm) |
| Optical Elements | 4 Plastic | 5 Plastic |
| Pixel Size | 1.75 µm | 1.4 µm |
| Focal Length | 3.85 mm | 4.28 mm |
| Aperture | F/2.8 | F/2.4 |
| Image Capture Size | 2592 x 1936 (5 MP) | 3264 x 2448 (8 MP) |
| Average File Size | ~2.03 MB | ~2.77 MB |
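As a quick sanity check on the table, the pixel sizes and the benefit of the faster aperture can be recovered from the other rows. The sketch below is just arithmetic on the table values, and it assumes the pixels tile the full 4.54 mm sensor width with no inactive border; the function names are mine, not anything from Apple or the sensor vendors.

```python
# Values taken from the comparison table; SENSOR_WIDTH_MM is the 1/3.2" format width.
SENSOR_WIDTH_MM = 4.54

cameras = {
    "iPhone 4":  {"width_px": 2592, "focal_mm": 3.85, "f_number": 2.8},
    "iPhone 4S": {"width_px": 3264, "focal_mm": 4.28, "f_number": 2.4},
}

def pixel_pitch_um(sensor_width_mm, width_px):
    """Pixel pitch in microns, assuming pixels tile the full sensor width."""
    return sensor_width_mm / width_px * 1000.0

def aperture_diameter_mm(focal_mm, f_number):
    """Entrance pupil diameter from the definition F/# = f / D."""
    return focal_mm / f_number

for name, cam in cameras.items():
    pitch = pixel_pitch_um(SENSOR_WIDTH_MM, cam["width_px"])
    pupil = aperture_diameter_mm(cam["focal_mm"], cam["f_number"])
    print(f"{name}: pitch {pitch:.2f} um, pupil diameter {pupil:.2f} mm")

# Light per unit sensor area scales as 1 / (F/#)^2.
light_gain = (2.8 / 2.4) ** 2
# Light-collecting area of one pixel scales with the square of the pitch.
area_ratio = (1.4 / 1.75) ** 2
print(f"irradiance gain {light_gain:.2f}x, pixel area {area_ratio:.2f}x")
```

The computed pitches land on the quoted 1.75 µm and 1.4 µm figures. Going from F/2.8 to F/2.4 puts roughly 1.36x more light on each unit of sensor area, while each pixel has only 0.64x the area, so on geometry alone the faster lens recovers most, but not all, of what the smaller pixels give up.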

Everybody likes talking about sensors (and I see lots of attention given to them), but any good photographer knows that it’s a combination of optical system and sensor that matters to performance. Optical design is important, and having studied as an optical engineer I find it interesting that Apple would draw attention to having a custom design of their very own with an additional plastic element. For a while I’ve held off on really talking about smartphone camera optics, but while we’re here, let’s touch briefly on them.

Thus far this generation and the one before it have primarily used 4 plastic elements, and virtually everyone but Nokia uses nothing but plastic (Nokia famously uses Zeiss-branded designs, often with glass elements). Optical design is generally driven by material availability, and there are only a few optical grade (read: transmissive in the visible) thermoplastics out there - Styrene, Polystyrene, ZEONEX, PMMA (Acrylic) and so forth - the list is actually relatively short. Thankfully polystyrene and PMMA can be used to make something of an achromatic pair, with polystyrene as a flint, and PMMA as something of a crown. Plastic provides unique constraints as well though - coatings don’t stick well, not very many have great optical properties, they have a high coefficient of thermal expansion, high index variation with temperature (which oddly decreases with increasing temperature), and less heat resistance or durability among others. With all those downsides you might wonder why smartphone vendors use plastic, and that reason is simple - they’re cheap, but more importantly, they can be molded into complicated shapes. Those complicated shapes are aspheres, which are difficult to fabricate out of glass, and afford much finer control over aberrations using fewer elements, which is an absolute necessity when working with very little package depth.
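To make the crown and flint comment concrete, here is a minimal sketch of the textbook thin-lens achromat condition applied to that plastic pair. The Abbe numbers are approximate catalog values that I am assuming (they vary by grade and supplier), and the 4.28 mm focal length is borrowed from the table purely for scale; a real phone lens is a stack of aspheres, not a cemented doublet.

```python
# Approximate catalog Abbe numbers (assumed; they vary by grade and supplier).
V_PMMA = 57.4         # crown-like: low dispersion
V_POLYSTYRENE = 30.9  # flint-like: high dispersion

def achromat_powers(total_focal_mm, v_crown, v_flint):
    """Split a thin doublet's power so primary axial color cancels.

    Thin lenses in contact: p_crown + p_flint = p_total and
    p_crown / v_crown + p_flint / v_flint = 0.
    Returns (crown_power, flint_power) in 1/mm.
    """
    total_power = 1.0 / total_focal_mm
    crown = total_power * v_crown / (v_crown - v_flint)
    flint = -total_power * v_flint / (v_crown - v_flint)
    return crown, flint

crown, flint = achromat_powers(4.28, V_PMMA, V_POLYSTYRENE)
print(f"PMMA element:        f = {1.0 / crown:+.2f} mm")
print(f"Polystyrene element: f = {1.0 / flint:+.2f} mm")
```

The positive PMMA element ends up with about twice the power of the finished lens, and the negative polystyrene element cancels the color it introduces. Because the two Abbe numbers are not far apart, both elements have to be strong, which is part of why the short material list is so constraining.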

Apple's 4S versus 4 infographic

So what does adding another element get you? Well, when you’re faced with limited material choices, adding more surfaces gives you another opportunity to balance aberrations, which start blowing up rapidly as you decrease F/# (that is, as you open up the aperture, as Apple did in going from F/2.8 to F/2.4). That said, there are tradeoffs to adding surfaces as well - more back reflections, increased cost, and a thicker system. In the keynote, Apple notes that sharpness is improved by 30% in their new 5 element design, and MTF is what they’re undoubtedly alluding to.
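For context on what MTF numbers are even possible here, the sketch below computes the diffraction-limited MTF of an ideal circular aperture and evaluates it at each sensor's Nyquist frequency. It assumes 550 nm green light and a perfect, aberration-free lens, so it is an upper bound rather than a measurement of either phone, and it says nothing about how Apple arrived at its 30% figure.

```python
import math

WAVELENGTH_MM = 550e-6  # assumed: green light, 550 nm

def diffraction_cutoff(f_number, wavelength_mm=WAVELENGTH_MM):
    """Spatial frequency (cycles/mm) where an ideal lens's MTF reaches zero."""
    return 1.0 / (wavelength_mm * f_number)

def nyquist(pixel_pitch_um):
    """Highest frequency (cycles/mm) the pixel grid can sample."""
    return 1.0 / (2.0 * pixel_pitch_um * 1e-3)

def diffraction_mtf(freq, f_number, wavelength_mm=WAVELENGTH_MM):
    """MTF of an aberration-free circular aperture in incoherent light."""
    x = freq / diffraction_cutoff(f_number, wavelength_mm)
    if x >= 1.0:
        return 0.0
    return (2.0 / math.pi) * (math.acos(x) - x * math.sqrt(1.0 - x * x))

for name, f_number, pitch_um in [("iPhone 4", 2.8, 1.75), ("iPhone 4S", 2.4, 1.4)]:
    fn = nyquist(pitch_um)
    print(f"{name}: cutoff {diffraction_cutoff(f_number):.0f} cyc/mm, "
          f"Nyquist {fn:.0f} cyc/mm, ideal MTF at Nyquist {diffraction_mtf(fn, f_number):.2f}")
```

Even a perfect lens delivers well under half its contrast at Nyquist for pixels this small, which is why the faster F/2.4 aperture only pays off if the extra element keeps aberrations in check.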

Genius Electronic Optical - 5P lens. Compare to above.

Genius Electronic Optical has a page on their website with a lens system that seems likely to be what’s in the 4S: the specifications include 8 MP resolution, the same sensor format, the same F/# (2.4), and 5 plastic elements (5P), and it looks basically like what’s in the 4S. Other than that, however, there’s not much more that I can say about this Apple specific design without destructively taking things apart. One thing is for certain however, and it’s that Apple is getting serious about camera performance, something that other handset vendors like HTC (with its F/2.2 systems) are also doing.

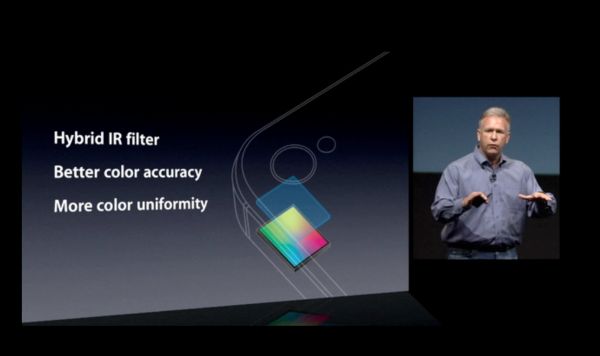

Apple made mention that it also included an IR filter in the 4S optical design. If you recall our Kinect story, I used the 4 camera to photograph the IR laser structured light projector that Kinect uses to build a 3D picture. The 4 no doubt has an IR filter (though not a great one), but it’s probably just a thin film rather than a discrete filter right before the sensor. The 4S includes what Apple has deemed a ‘hybrid IR filter’ right on top of the sensor, which is possibly just a combination of a UV/IR cut filter (UV is a problem too) and an anti-aliasing filter.

If you try and take the same Kinect (IR source) picture with the 4S, thankfully all those non-visible, IR wavelength photons get rejected by the filter. This doesn’t sound like much until you realize that silicon is transparent in the IR, so IR light will bounce around off the metal structures inside a CMOS or CCD and create lovely diffraction effects on fancy sensors. I digress though, since that’s probably not what Apple was trying to combat here. On a larger scale, IR will generally just cause incorrect color representation, and thus people stick an IR filter either in the lens somewhere or before the sensor, which is what has been done in the 4S. The thin film IR filters that smartphones have used in the past are also largely to blame for some of the color nonuniformity and color spot (magenta/green circle) issues that people have started taking note of. With these thin film IR filters, rays incident on the filter at an angle (as we move across the field) see a shifted filter frequency response, and the result is that infamous circular color nonuniformity. I wager the other effect is some weird combination of vignetting and the microlens array on the CMOS, but when I saw Apple make note of their improved IR filter, my thoughts immediately raced to this ‘hybrid IR filter’ as being their logical cure for the infamous green circle the iPhone 4 exhibits.
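The angle dependence of a thin film filter can be estimated with the standard interference-filter approximation, in which the cut-off edge slides toward shorter wavelengths as the angle of incidence grows. The numbers below are illustrative assumptions on my part (a 650 nm edge at normal incidence and an effective coating index of 1.7), not measured properties of either iPhone's filter.

```python
import math

def shifted_edge_nm(edge_nm_at_normal, angle_deg, n_eff):
    """Approximate filter edge wavelength for light arriving at angle_deg.

    Uses lambda(theta) = lambda_0 * sqrt(1 - (sin(theta) / n_eff)^2),
    the usual first-order model for thin film interference filters.
    """
    s = math.sin(math.radians(angle_deg)) / n_eff
    return edge_nm_at_normal * math.sqrt(1.0 - s * s)

EDGE_NM = 650.0  # assumed IR cut-off edge at normal incidence
N_EFF = 1.7      # assumed effective index of the coating stack

for angle in (0, 10, 20, 30):
    print(f"{angle:2d} deg: edge at {shifted_edge_nm(EDGE_NM, angle, N_EFF):.1f} nm")
```

Under these assumptions the edge moves by nearly 30 nm between the center of the field and a ray arriving at 30 degrees, so the corners of the image lose more deep red than the center does. That is the kind of radially varying color response described above.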

Another minor difference on the 4S is that the LED flash is improved. The previous LED flash had a distinctively yellow-green hue, while the LED flash on the 4S seems slightly brighter and also has a color temperature that’s subjectively much closer to daylight, though I didn’t measure it directly. I habitually avoided using LED illumination on the 4 and will probably continue to do so on the 4S (and use HDR instead), but it does bear noting that the LED characteristics are improved. Unfortunately the diffuser and illumination pattern still aren’t very uniform or wide. It also seems that all the talk of Apple moving the LED flash to the other side of the device to combat red eye turned out to be wrong.

199 Comments


doobydoo - Friday, December 2, 2011 - link

It's still absolute nonsense to claim that the iPhone 4S can only use '2x' the power when it has available power of 7x.

Not only does the iPhone 4S support wireless streaming to TVs, making performance very important, there are also games ALREADY out which require this kind of GPU in order to run fast on the superior resolution of the iPhone 4S.

Not only that, but you failed to take into account the typical life-cycle of iPhones - this phone has to be capable of performing well for around a year.

The bottom line is that Apple really got one over all Android manufacturers with the GPU in the iPhone 4S - it's the best there is, in any phone, full stop. Trying to turn that into a criticism is outrageous.

PeteH - Tuesday, November 1, 2011 - link

Actually it is about the architecture. How GPU performance scales with size is in large part dictated by the GPU architecture, and Imagination's architecture scales better than the other solutions.

loganin - Tuesday, November 1, 2011 - link

And I showed it above Apple's chip isn't larger than Samsung's.

PeteH - Tuesday, November 1, 2011 - link

But chip size isn't relevant, only GPU size is.

All I'm pointing out is that not all GPU architectures scale equivalently with size.

loganin - Tuesday, November 1, 2011 - link

But you're comparing two different architectures here, not two carrying the same architecture so the scalability doesn't really matter. Also is Samsung's GPU significantly smaller than A5's?

Now we've discussed back and forth about nothing, you can see the problem with Lucian's argument. It was simply an attempt to make Apple look bad and the technical correctness didn't really matter.

PeteH - Tuesday, November 1, 2011 - link

What I'm saying is that Lucian's assertion, that the A5's GPU is faster because it's bigger, ignores the fact that not all GPU architectures scale the same way with size. A GPU of the same size but with a different architecture would have worse performance because of this.

Put simply, architecture matters. You can't just throw silicon at a performance problem to fix it.

metafor - Tuesday, November 1, 2011 - link

Well, you can. But it might be more efficient not to. At least with GPU's, putting two in there will pretty much double your performance on GPU-limited tasks.

This is true of desktops (SLI) as well as mobile.

Certain architectures are more area-efficient. But the point is, if all you care about is performance and can eat the die-area, you can just shove another GPU in there.

The same can't be said of CPU tasks, for example.

PeteH - Tuesday, November 1, 2011 - link

I should have been clearer. You can always throw area at the problem, but the architecture dictates how much area is needed to add the desired performance, even on GPUs.

Compare the GeForce and the SGX architectures. The GeForce provides an equal number of vertex and pixel shader cores, and thus can only achieve theoretical maximum performance if it gets an even mix of vertex and pixel shader operations. The SGX on the other hand provides general purpose cores that can do either vertex or pixel shader operations.

This means that as the SGX adds cores its performance scales linearly under all scenarios, while the GeForce (which adds a vertex and a pixel shader core as a pair) gains only half the benefit under some conditions. Put simply, if a GeForce core is limited by the number of pixel shader cores available, the addition of a vertex shader core adds no benefit.

Throwing enough core pairs onto silicon will give you the performance you need, but not as efficiently as general purpose cores would. Of course a general purpose core architecture will be bigger, but that's a separate discussion.

metafor - Tuesday, November 1, 2011 - link

I think you need to check your math. If you double the number of cores in a Geforce, you'll still gain 2x the relative performance.

Double is a multiplier, not an adder.

If a task was vertex-shader bound before, doubling the number of vertex-shaders (which comes with doubling the number of cores) will improve performance by 100%.

Of course, in the case of 543MP2, we're not just talking about doubling computational cores.

It's literally 2 GPU's (I don't think much is shared, maybe the various caches).

Think SLI but on silicon.

If you put 2 Geforce GPU's on a single die, the effect will be the same: double the performance for double the area.

Architecture dictates the perf/GPU. That doesn't mean you can't simply double it at any time to get double the performance.

PeteH - Tuesday, November 1, 2011 - link

But I'm not talking about relative performance, I'm talking about performance per unit area added. When bound by one operation adding a core that supports a different operation is wasted space.

So yes, doubling space always doubles relative performance, but adding 20 square millimeters means different things to the performance of different architectures.