Rage Against the (Benchmark) Machine

by Jarred Walton on October 14, 2011 11:55 PM EST

Technical Discussion

The bigger news with Rage is that this is id’s launch title to demonstrate what their id Tech 5 engine can do. It’s also the first major engine in a long while to use OpenGL as the core rendering API, which makes it doubly interesting for us to investigate as a benchmark. And here’s where things get really weird, as id and John Carmack have basically turned the whole gaming performance question on its head. Instead of fixed quality and variable performance, Rage shoots for fixed performance and variable quality. This is perhaps the biggest issue people are going to have with the game, especially if they’re hoping to be blown away by id’s latest graphical tour de force.
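To make the "fixed performance, variable quality" idea concrete, here is a minimal Python sketch of a frame-budget controller. It is an illustration only, not id's implementation: the function name `adjust_quality`, the integer quality levels, and the 80% headroom threshold are all assumptions made for this example. The only figure taken from the text is the 60FPS target.

```python
# Hypothetical sketch: hold a frame-time budget by trading away quality.
TARGET_FPS = 60
TARGET_FRAME_MS = 1000.0 / TARGET_FPS  # about 16.67 ms per frame


def adjust_quality(level, frame_ms, min_level=0, max_level=4):
    """Return the quality level to use for the next frame.

    level    -- current quality level (higher means sharper, costlier)
    frame_ms -- how long the previous frame took to render
    """
    if frame_ms > TARGET_FRAME_MS:
        # Over budget: drop quality so the frame rate holds.
        level -= 1
    elif frame_ms < 0.8 * TARGET_FRAME_MS:
        # Comfortable headroom (assumed threshold): raise quality.
        level += 1
    # Clamp to the allowed range of levels.
    return max(min_level, min(max_level, level))


if __name__ == "__main__":
    level = 4
    for frame_ms in (15.0, 19.0, 22.0, 12.0):
        level = adjust_quality(level, frame_ms)
        print(f"frame took {frame_ms:.1f} ms, next quality level {level}")
```

A conventional engine does the reverse: quality stays wherever the user set it, and the frame rate is whatever falls out.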

Running on my gaming system (if you missed it earlier, it’s an i7-965X @ 3.6GHz, 12GB RAM, GTX 580 graphics), I get a near-constant 60FPS, even at 2560x1600 with 8xAA. But there’s the rub: I don’t ever get more than 60FPS, and certain areas look pretty blurry no matter what I do. The original version of the game offered almost no options other than resolution and antialiasing, while the latest patch has opened things up a bit by adding texture cache and anisotropic filtering settings; these can be set to either Small/Low (default pre-patch) or Large/High. If you were hoping for a major change in image quality, however, there’s still plenty going on post-patch that limits the overall quality. For one, even with 21GB of disk space, id’s megatexturing may provide near-unique textures for the game world, but many of those textures are still low resolution. Antialiasing is also a bit odd, as it appears to have very little effect on performance (up to a certain point); the most demanding games choke at 2560x1600 4xAA, even with a GTX 580, but Rage chugs along happily with 8xAA. (16xAA, on the other hand, cuts frame rates almost in half.)

The net result is that both before and after the latest patch, people have been searching for ways to make Rage look better/sharper, with marginal success. I grabbed one of the custom configurations listed on the Steam forums to see if that helped at all. There appears to be a slight tweak in anisotropic filtering, but that’s about it. [Edit: removed link as the custom config appears mostly worthless—see updates.] I put together a gallery of several game locations using my native 2560x1600 resolution with 8xAA, at the default Small/Low settings (for texturing/filtering), at Large/High, and using the custom configuration (Large/High with additional tweaks). These are high quality JPEG files that are each ~1.5MB, but I have the original 5MB PNG files available if anyone wants them.

You can see that post-patch, the difference between the custom configuration and the in-game Large/High settings is negligible at best, while the pre-patch (default) Small/Low settings have some obvious blurriness in some locations. Dead City in particular looked horribly blurred before the patch; I started playing Rage last week, and I didn’t notice much in the way of texture blurriness until I hit Dead City, at which point I started looking for tweaks to improve quality. It looks better now, but there are still a lot of textures that feel like they need to be higher resolution/quality.

Something else worth discussing while we’re on the subject is Rage’s texture compression format. S3TC (also called DXTC) is the standard compressed texture format, first introduced in the late 90s. S3TC/DXTC achieves a constant 4:1 or 6:1 compression ratio of textures. John Carmack has stated that all of the uncompressed textures in Rage occupy around 1TB of space, so obviously that’s not something they could ship/stream to customers; even with a 6:1 compression ratio they’d still be looking at roughly 170GB of textures. In order to get the final texture content down to a manageable 16GB or so, Rage stores its textures in the HD Photo/JPEG XR format. The JPEG XR content then gets transcoded on-the-fly into DXTC, which is used for texturing the game world.
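The arithmetic behind those figures is easy to check. The short Python sketch below uses only the numbers quoted above (around 1TB uncompressed, 4:1 and 6:1 for S3TC/DXTC, about 16GB shipped); treating 1TB as 1000GB is an assumption, and the function names are made up for this example.

```python
# Figures quoted in the article; 1TB is assumed to mean 1000GB here.
UNCOMPRESSED_GB = 1000.0  # "around 1TB" of uncompressed textures
SHIPPED_GB = 16.0         # "a manageable 16GB or so"


def compressed_size_gb(uncompressed_gb, ratio):
    """Size in GB after fixed-ratio compression (ratio=6 means 6:1)."""
    return uncompressed_gb / ratio


def effective_ratio(uncompressed_gb, shipped_gb):
    """Overall compression ratio implied by the shipped size."""
    return uncompressed_gb / shipped_gb


if __name__ == "__main__":
    for ratio in (4, 6):
        size = compressed_size_gb(UNCOMPRESSED_GB, ratio)
        print(f"S3TC/DXTC at {ratio}:1 -> about {size:.0f}GB")
    overall = effective_ratio(UNCOMPRESSED_GB, SHIPPED_GB)
    print(f"Shipping {SHIPPED_GB:.0f}GB implies roughly {overall:.1f}:1 overall")
```

The 6:1 case works out to about 167GB, in line with the ballpark quoted above, while fitting everything into 16GB implies an overall ratio of around 60:1, far beyond what fixed-ratio S3TC/DXTC provides.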

The transcoding process is one area where NVIDIA gets to play their CUDA card once more. When Anand benchmarked the new AMD FX-8150, he ran the CPU transcoding routine in Rage as one of numerous tests. I tried the same command post-patch, and with or without CUDA transcoding my system reported a time of 0.00 seconds (even with one thread), so that appears to be broken now as well. Anyway, I’d assume that a GTX 580 will transcode textures faster than any current CPU, but just how much faster I can’t say. AMD graphics on the other hand will currently have to rely on the CPU for transcoding.

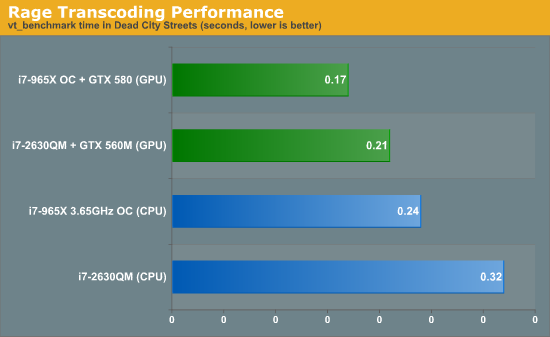

Update: Sorry, I didn't realize that you had to have a game running rather than just using vt_benchmark at the main menu. Bear in mind that I'm using a different location than Anand used in his FX-8150 review; my save is in Dead City, which tends to be one of the more taxing areas. I'm using two different machines as a point of reference, one a quad-core (plus Hyper-Threading) 3.65GHz i7-965 and the other a quad-core i7-2630QM. I've also got results with and without CUDA, since both systems are equipped with NVIDIA GPUs. Here's the result, which admittedly isn't much:

This is using "vt_benchmark 8" and reporting the best score, but regardless of the number of threads it's pretty clear that CUDA is able to help speed up the image transcoding process. How much this actually affects gameplay isn't so clear, as new textures are likely transcoded in small bursts once the initial level load is complete. It's also worth pointing out that GPU transcoding looks like it would be of more benefit with slower CPUs, as my desktop realized a 41% improvement while the lower-clocked notebook (even with a slower GPU) realized a 52% improvement. I also played on the GTX 580 and GTX 560M with and without CUDA transcoding and didn’t notice a difference in performance, but I don’t have any empirical data. That brings us to the final topic.

80 Comments


cactusdog - Saturday, October 15, 2011 - link

What is it with games these days? It seems like nearly every game, even highly anticipated games, are broken, have features missing or have serious flaws when they're first released. It's like AMD and Nvidia don't give them any attention until they are released and people are complaining. It's like they don't care about PC gaming anymore.

Stas - Saturday, October 15, 2011 - link

Try Hard Reset. PC exclusive of highest quality. Don't apply, if you don't enjoy a mix of Serious Sam, Painkiller, and Doom 3 :)

cmdrdredd - Saturday, October 15, 2011 - link

It's not AMD or Nvidia at all...blame the devs and bean counters at the big publishers for pushing for console releases.

AssBall - Saturday, October 15, 2011 - link

^^ This

ckryan - Saturday, October 15, 2011 - link

Back when I was just knee-high to a bullfrog, I seem to remember having some technical difficulties with Ultima VII. In that case, it was certainly worth the aggravation. It's not a new phenomenon, but in fairness, games and the platforms they run on are much more complicated now. In between the time you spend with Microsoft for your Xbox version and Sony for your PS3 version, you have to find time to work with AMD/nVidia for your PC version. Technical issues are bad, but giving me a barely-warmed over console port is the worse sin. Sometimes the itchy rash of consolitis is minor, like some small UI issues (Press the Start Button!). Other times, it's deeply annoying, making me wonder if the developers have actually ever played a PC game before. Some games, like TES:Oblivion, have issues that can be corrected later by a dedicated mod community -- another important element for the identity of PC gaming.

So while technical issues at launch for a game that's been in development for 3 to 5 years are inexcusable, there are some issues that are worse. Inane plots, bad mechanics, and me-too gameplay styles are making me question how committed some companies are to making PC gaming worthwhile (for consumers and therefore the industry as well). The PC is far and away the finest gaming platform, but some developers really need to try harder. Maybe PC gamers just expect more, but I say that we're a forgiving bunch -- but you have to bring your (triple) A-game.

JonnyDough - Monday, October 17, 2011 - link

^^ THIS!

Snowstorms - Saturday, October 15, 2011 - link

consoles have drastically influenced PC optimization

it has gotten so bad that I have started to check if the game is a PC exclusive, if it's not I'm already on my toes

brucek2 - Saturday, October 15, 2011 - link

id claims the problems were mostly driver incompatibilities, which is a story we've all heard before.

I wish publishers would include the drivers they qualified their games on as an optional part of their release. In an ideal situation the current and/or beta drivers already on your system would be fine and you wouldn't need them. But in the all too common screwed up situation, you could at least install those particular drivers for this particular game and know it was supposed to work.

taltamir - Saturday, October 15, 2011 - link

Why is it nvidia/AMD's job to fix games?

they do it, but they shouldn't need to. The drivers are out, they exist, they are speced.

And every single indie developer manages to make games that work without a driver update. Meanwhile major A titles often are released buggy and then nVidia and AMD scramble to hack it so that the drivers identify the EXE and fix it. For example, both companies gut the AA process included in most games and force it to use a proper & modern AA solution for better quality and performance. (MSAA has been obsolete for years.)

JarredWalton - Saturday, October 15, 2011 - link

Most major AA titles have the developers in contact with NVIDIA and AMD, and they tell the GPU guys what stuff isn't working well and needs fixing (although anecdotally, I hear NVIDIA is much better at responding to requests). This is particularly true when a game does something "new", i.e. an up-to-date OpenGL release when we really haven't seen much from that arena in a while. I agree with the above post that says the devs should state what driver version they used for testing, but I wouldn't be surprised if that's covered by an NDA with the GPU companies.