Facebook's "Open Compute" Server tested

by Johan De Gelas on November 3, 2011 12:00 AM EST

The Facebook Server

In the basement of the Palo Alto, California headquarters, three Facebook engineers built Facebook's custom-designed servers, power supplies, server racks, and battery backup systems. The Facebook server had to be much cheaper than the average server, as well as more power efficient.

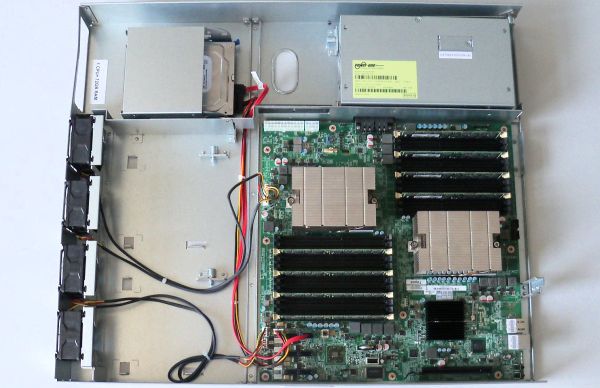

The first change they made was the chassis height, going for a 1.5U high design as a compromise between density and making the server easier to cool. A 1.5U chassis allows them to use taller heatsinks and larger (60mm), lower-RPM fans instead of the screaming 40mm energy hogs used in a 1U chassis. The result is that the fans consume only 2% to 4% of the total power, which is pretty amazing, as we have seen 1U fans that can consume up to one third of the total system power. It seems that air cooling in the Open Compute 1.5U server is as efficient as in the best 3U servers.
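To put those percentages in perspective, here is a minimal sketch that converts the fan shares quoted above into watts. The 300 W total system draw is a hypothetical figure chosen only for illustration, not a measurement from this test, and `fan_power_watts` is a made-up helper name.

```python
def fan_power_watts(system_watts, fan_share):
    """Fan power in watts, given total system power and the fans' share of it."""
    return system_watts * fan_share

# Hypothetical total system draw, chosen only for illustration.
SYSTEM_WATTS = 300.0

# Shares quoted in the text: 2% to 4% for the 1.5U design,
# up to one third for the worst 1U servers.
open_compute_low = fan_power_watts(SYSTEM_WATTS, 0.02)
open_compute_high = fan_power_watts(SYSTEM_WATTS, 0.04)
worst_1u = fan_power_watts(SYSTEM_WATTS, 1.0 / 3.0)

print(f"1.5U Open Compute fans: {open_compute_low:.0f} W to {open_compute_high:.0f} W")
print(f"Worst-case 1U fans:     {worst_1u:.0f} W")
```

Under that assumption, the difference between the two designs is on the order of 90 W per server spent on moving air alone.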

At the same time, Facebook Engineering kept the chassis very simple, without any plastic. It makes the airflow through the server smoother and reduces weight. The bottom plate of one server serves as the top plate for the server beneath it.

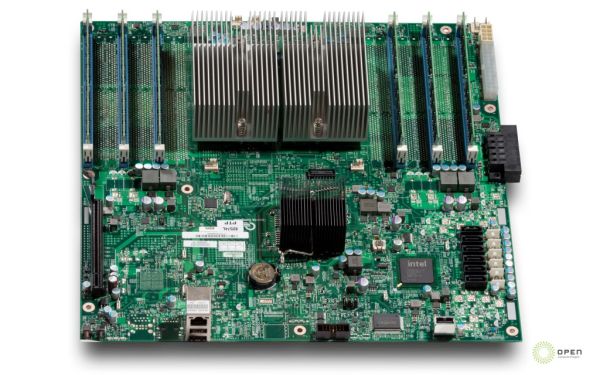

Facebook has designed an AMD and an Intel motherboard, both manufactured by Quanta. Much attention was paid to the efficiency of the voltage regulators (94% efficiency). The other trick was again to remove anything that was not absolutely necessary. These motherboards have no BMC, only two USB ports and two NIC ports, one expansion slot, and are headless (no video chip).

The only thing that an administrator can do remotely is "reboot over LAN". The idea is that if that does not help, the problem is in 99% of cases severe enough that you have to send an administrator to the server anyway.

The AMD servers are mostly used as Memcached servers, as the four memory channels of each AMD Magny-Cours Opteron 6100 can drive 12 DIMMs per CPU, or 24 DIMMs in total. With 16GB DIMMs, that works out to 384GB of caching memory.
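The memory arithmetic can be checked with a short sketch. The DIMM counts, channel count, and 384GB total come from the text; the two-socket count is inferred from "12 DIMMs per CPU, or 24 DIMMs in total", and the variable names are illustrative only.

```python
# Figures from the article.
DIMMS_PER_CPU = 12
CHANNELS_PER_CPU = 4
TOTAL_MEMORY_GB = 384

# Inferred: 24 DIMMs in total at 12 per CPU implies two sockets.
CPU_SOCKETS = 2

total_dimms = DIMMS_PER_CPU * CPU_SOCKETS
dimms_per_channel = DIMMS_PER_CPU // CHANNELS_PER_CPU
gb_per_dimm = TOTAL_MEMORY_GB / total_dimms

print(f"Total DIMM slots:  {total_dimms}")
print(f"DIMMs per channel: {dimms_per_channel}")
print(f"Capacity per DIMM: {gb_per_dimm:.0f} GB")
```

In other words, reaching 384GB means populating every channel with three 16GB DIMMs.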

In contrast, the Facebook Open Compute Xeon servers have only six DIMM slots, as they are used for processing-intensive tasks such as the PHP servers that "assemble" the data.

67 Comments


JohanAnandtech - Thursday, November 3, 2011 - link

It is an ATI ES 1000, that is a server/thin client chip. That chip is only 2D. I can not find the power specs, but considering that the chip does not even need a heatsink, I think this chip consumes maybe 1W in idle.

mczak - Thursday, November 3, 2011 - link

ES 1000 is almost the same as radeon 7000/ve (no that's not HD 7000...) (some time in the past you could even force 3d in linux with the open-source driver though it usually did not work). The chip also has dedicated ram chip (though only 16bit wide memory interface) and I'm not sure how good the powersaving methods of it are (probably not downclocking but supporting clock gating) - not sure if it fits into 1W at idle (but certainly shouldn't be much more).

JohanAnandtech - Thursday, November 3, 2011 - link

I can not find any good tech resources on the chip, but I can imagine that AMD/ATI have shrunk the chip since its appearance in 2005. If not, and the chip does consume quite a bit, it is a bit disappointing that server vendors still use it as the videochip is used very rarely. You don't need a videochip for RDP for example.

mczak - Thursday, November 3, 2011 - link

I think the possibility of this chip being shrunk since 2005 is 0%. The other question is if it was shrunk from rv100 or if it's actually the same - even if it was shrunk it probably was a well mature process like 130nm in 2005 otherwise it's 180nm. At 130nm (estimated below 20 million transistors) the die size would be very small already and probably wouldn't get any smaller due to i/o anyway. Most of the power draw might be due to i/o too so shrink wouldn't help there either. It is possible though it's really below 1W (when idle).

Taft12 - Thursday, November 3, 2011 - link

A shrink WOULD allow production of many more units on each wafer. Since almost every server shipped needs an ES1000 chip, demand is consistently on the order of millions per year.

mczak - Thursday, November 3, 2011 - link

There's a limit how much i/o you can have for a given die size (actually the limiting factor is not area but circumference so making it rectangular sort of helps). i/o pads apparently don't shrink well hence if your chip has to have some size because you've got too many i/o pads a shrink will do nothing but make it more expensive (since smaller process nodes are generally more expensive per area).

Being i/o bound is quite possible for some chips though I don't know if this one really is - it's got at least display outputs, 16bit memory interface, 32bit pci interface and the required power/ground pads at least.

In any case even at 180nm the chip should be below 40mm² already hence the die size cost is probably quite low compared to packaging, cost of memory etc.
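The pad-limited argument above can be sketched numerically. Everything in this example is a hypothetical assumption made for illustration: the pad count (240), the pad pitch (0.1 mm), the 30 mm² core area, a single ring of pads around the die edge, and ideal area scaling with the square of the feature size. None of these are known figures for the ES 1000.

```python
def pad_limited_area_mm2(num_pads, pad_pitch_mm, aspect_ratio=1.0):
    """Smallest die area whose perimeter fits all pads in a single ring.

    aspect_ratio is long side / short side (1.0 means a square die).
    """
    perimeter = num_pads * pad_pitch_mm
    short_side = perimeter / (2.0 * (1.0 + aspect_ratio))
    return aspect_ratio * short_side ** 2

def shrunk_core_area_mm2(core_area_mm2, old_nm, new_nm):
    """Core logic area after a shrink, assuming ideal quadratic scaling."""
    return core_area_mm2 * (new_nm / old_nm) ** 2

def die_area_mm2(core_area_mm2, num_pads, pad_pitch_mm, aspect_ratio=1.0):
    """The die must be large enough for both the core logic and the pad ring."""
    return max(core_area_mm2,
               pad_limited_area_mm2(num_pads, pad_pitch_mm, aspect_ratio))

# Hypothetical values, for illustration only.
NUM_PADS = 240
PAD_PITCH_MM = 0.1
CORE_AREA_180NM = 30.0

core_130nm = shrunk_core_area_mm2(CORE_AREA_180NM, 180, 130)
die_180nm = die_area_mm2(CORE_AREA_180NM, NUM_PADS, PAD_PITCH_MM)
die_130nm = die_area_mm2(core_130nm, NUM_PADS, PAD_PITCH_MM)
square_min = pad_limited_area_mm2(NUM_PADS, PAD_PITCH_MM, aspect_ratio=1.0)
rect_min = pad_limited_area_mm2(NUM_PADS, PAD_PITCH_MM, aspect_ratio=2.0)

print(f"Core area at 130nm:         {core_130nm:.1f} mm^2")
print(f"Die area at 180nm / 130nm:  {die_180nm:.1f} / {die_130nm:.1f} mm^2")
print(f"Pad-limited minimum, 1:1:   {square_min:.1f} mm^2")
print(f"Pad-limited minimum, 2:1:   {rect_min:.1f} mm^2")
```

With these assumed numbers the core logic roughly halves in area after the shrink, but the die stays the same size because the pad ring sets the floor. A 2:1 rectangle fits the same perimeter in less area than a square, which is the "making it rectangular sort of helps" point.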

Penti - Saturday, November 5, 2011 - link

It's the integrated BMC/ILO solution which also includes a GPU that would use more power than the ES1000 anyhow. That is also what is lacking in the simple Google / Facebook compute-node setup. They don't need that kind of management and can handle that a node goes offline.

haplo602 - Thursday, November 3, 2011 - link

It seems to me that the HP server is doing as well as the Facebook ones. Considering it has more features (remote management, integrated graphics) and a "common" PSU.

JohanAnandtech - Thursday, November 3, 2011 - link

The HP does well. However, if you don't need integrated graphics and you hardly use the BMC at all, you still end up with a server that wastes power on features you hardly use.

twhittet - Thursday, November 3, 2011 - link

I would assume cost is also a major factor. Why pay for so many features you don't need? Manufacturing costs should be lower if they actually build these in bulk.