Intel's Ivy Bridge Architecture Exposed

by Anand Lal Shimpi on September 17, 2011 2:00 AM EST - Posted in

- CPUs

- Intel

- Ivy Bridge

- IDF 2011

- Trade Shows

The New GPU

Westmere marked a change in the way Intel approached integrated graphics. The GPU was moved onto the CPU package and used an n-1 manufacturing process (45nm when the CPU was 32nm). Performance improved but it still wasn't exactly what we'd call acceptable.

Sandy Bridge brought a completely redesigned GPU core onto the processor die itself. As a co-resident of the CPU, the GPU was treated as somewhat of an equal - both processors were built on the same 32nm process.

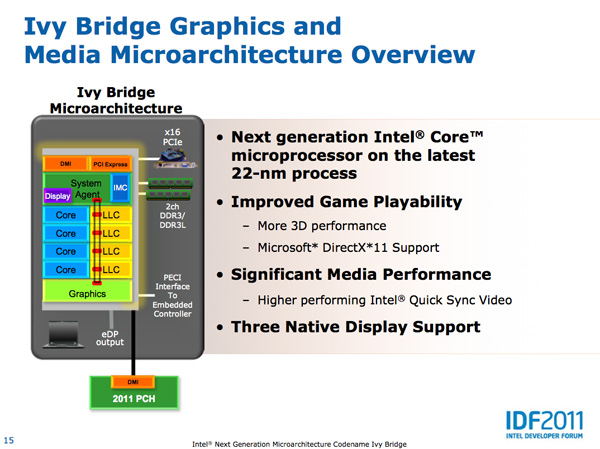

With Ivy Bridge the GPU remains on die, but it grows more than the CPU does this generation. Intel isn't disclosing the die split, but there are more execution units this round (16, up from 12 in SNB), so it appears that the GPU occupies a greater percentage of the die than it did last generation. It's not near a 50/50 split yet, but it's a continued indication that Intel is taking GPU performance seriously.

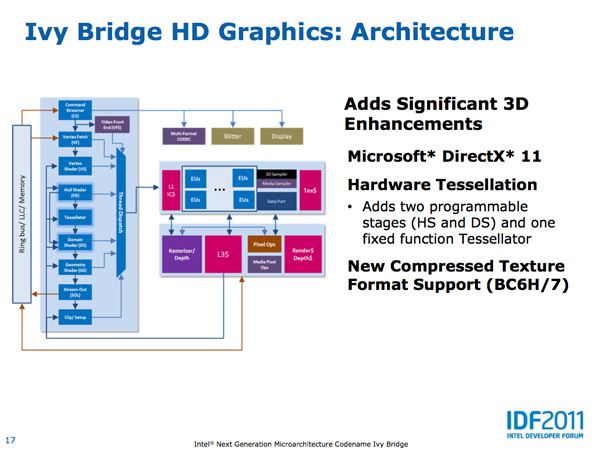

The Ivy Bridge GPU adds support for OpenCL 1.1, DirectX 11 and OpenGL 3.1. This will finally bring Intel's GPU feature set on par with AMD's. Ivy Bridge also supports three display outputs (up from two in Sandy Bridge). Lastly, Ivy Bridge improves anisotropic filtering quality. As Intel Fellow Tom Piazza put it, "we now draw circles instead of flower petals," referring to image output from the famous AF tester.

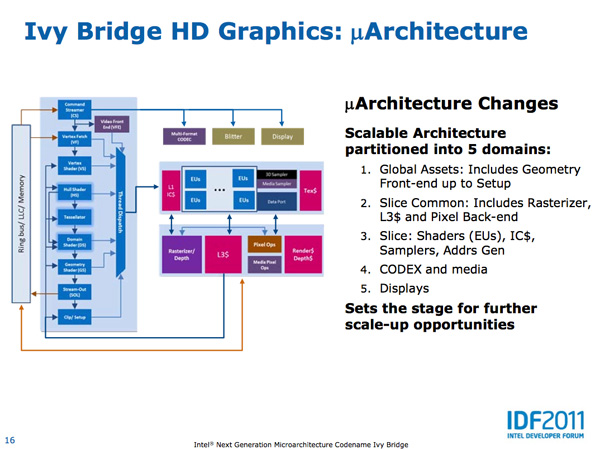

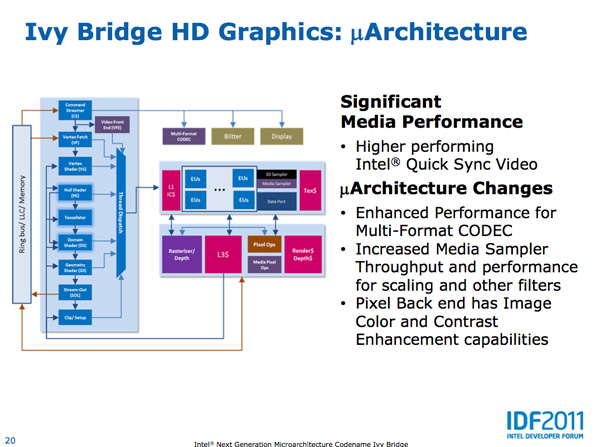

Intel made the Ivy Bridge GPU more modular than before. In SNB there were two GPU configurations: GT1 and GT2. Sandy Bridge's GT1 had 6 EUs (shaders/cores/execution units) while GT2 had 12 EUs; both configurations had one texture sampler. Ivy Bridge was designed to scale up and down more easily. GT2 has 16 EUs and 2 texture samplers, while GT1 has an unknown number of EUs (I'd assume 8) and 1 texture sampler.

I mentioned that Ivy Bridge was designed to scale up. Unfortunately, that upward scaling won't be happening in IVB: GT2 will be the fastest configuration available. The implication is that Intel had plans for IVB with a beefier GPU but it didn't make the cut. Perhaps we will see that change in Haswell.

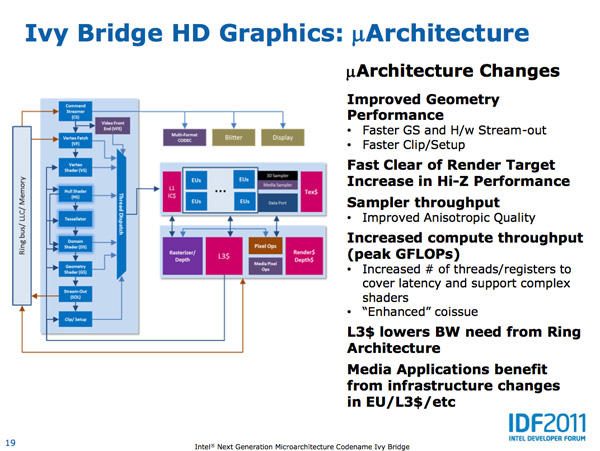

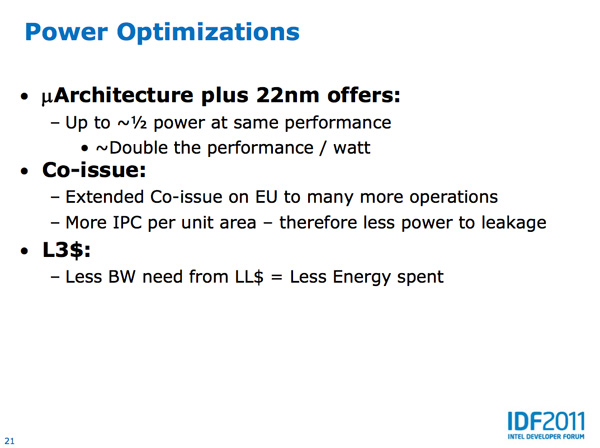

As we've already mentioned, Intel is increasing the number of EUs in Ivy Bridge. These EUs are also much better performers than their predecessors. Sandy Bridge's EUs could co-issue MADs and transcendental operations; Ivy Bridge's can do twice as many MADs per clock. As a result, a single Ivy Bridge EU gets close to twice the IPC of a Sandy Bridge EU. In other words, you're looking at nearly 2x the GFLOPS per EU in shader bound operations compared to Sandy Bridge. Combine that with more EUs in Ivy Bridge and this is where the bulk of the up-to-60% increase in GPU performance comes from.
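To put rough numbers on that, here is a minimal back-of-the-envelope sketch of the theoretical shader throughput ceiling. The EU counts come from the text above; the function name and the exact 2.0 per-EU factor (Intel only says "close to twice") are assumptions for illustration, not Intel figures.

```python
# Theoretical peak shader throughput relative to Sandy Bridge GT2.
# EU counts are from the article; per_eu_factor=2.0 is an assumed round
# number standing in for "close to twice" the per-EU throughput.

def relative_peak_throughput(eus, sandy_bridge_eus=12,
                             per_eu_factor=2.0, clock_factor=1.0):
    """Return shader throughput as a multiple of Sandy Bridge GT2."""
    return (eus / sandy_bridge_eus) * per_eu_factor * clock_factor

ivb_gt2 = relative_peak_throughput(eus=16)
print(f"IVB GT2 peak vs SNB GT2 at equal clocks: {ivb_gt2:.2f}x")  # 2.67x
```

That ceiling is well above the up-to-60% figure Intel quotes, presumably because real workloads are rarely purely shader bound.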

Intel also added a graphics-specific L3 cache within Ivy Bridge. Although the GPU can still share the CPU's L3 cache, a smaller cache located within the graphics core allows frequently accessed data to be served without firing up the ring bus.

There are other performance enhancements within the shader core. Scatter and gather operations now execute 32x faster than on Sandy Bridge, which has implications for both GPU compute and general 3D gaming performance.
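For readers unfamiliar with the terms, the sketch below shows what gather and scatter mean as memory access patterns. It is plain Python for illustration only and says nothing about how the hardware implements them; the function names are hypothetical.

```python
# Gather: each lane reads from its own index.
# Scatter: each lane writes to its own index.
# Purely illustrative; real GPUs do this across SIMD lanes in hardware.

def gather(memory, indices):
    """Read memory[i] for each index i (one load per lane)."""
    return [memory[i] for i in indices]

def scatter(memory, indices, values):
    """Write each value to its index; returns a copy, input is untouched."""
    out = list(memory)
    for i, v in zip(indices, values):
        out[i] = v
    return out

memory = [10, 20, 30, 40, 50, 60]
lanes = gather(memory, [5, 0, 3])              # [60, 10, 40]
updated = scatter(memory, [1, 4], [-1, -2])    # [10, -1, 30, 40, -2, 60]
```

Because the indices need not be contiguous, these accesses are harder to make fast than simple block loads and stores.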

Despite the focus on performance, Intel actually reduced the GPU clock in Ivy Bridge. It now runs at up to 95% of the SNB GPU clock, at a lower voltage, while offering much higher performance. Thanks primarily to Intel's 22nm process (the aforementioned architectural improvements help as well), GPU performance per watt nearly doubles over Sandy Bridge. In our Llano review we found that AMD delivered much longer battery life in games (nearly 2x SNB) - Ivy Bridge should be able to help address this.
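As a rough sanity check, the two headline figures can be combined to estimate what they imply for power draw. This is only a sketch: it assumes the "up to 60%" performance gain and the "nearly doubles" performance per watt figure describe the same workload and can be treated as exact numbers, which Intel has not stated.

```python
# Implied GPU power draw relative to Sandy Bridge.
# perf_ratio and perf_per_watt_ratio are the article's headline figures,
# rounded; applying both to one workload is an assumption.

def implied_power_ratio(perf_ratio, perf_per_watt_ratio):
    """power = performance / (performance per watt), all relative to SNB."""
    return perf_ratio / perf_per_watt_ratio

power = implied_power_ratio(perf_ratio=1.6, perf_per_watt_ratio=2.0)
print(f"Implied IVB GPU power vs SNB: {power:.2f}x")  # 0.80x
```

Under those assumptions the GPU would draw roughly 80% of Sandy Bridge's GPU power while delivering the higher performance.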

Quick Sync Performance Improved

With Sandy Bridge, Intel introduced an extremely high performing hardware video transcode engine called Quick Sync. The solution ended up delivering the best combination of image quality and performance of any hardware accelerated transcoding option available from AMD, Intel or NVIDIA. Quick Sync leverages a combination of fixed function hardware, IVB's video decode engine and the EU array.

The increase in EUs and improvements to their throughput both contribute to increases in Quick Sync transcoding performance. Presumably Intel has done some work on the decode side as well; fast decode is actually one of the reasons Sandy Bridge was so fast at transcoding video. The combination of all of this results in up to 2x the video transcoding performance of Sandy Bridge. There's also the option of taking a smaller performance increase in exchange for better image quality.

I've complained in the past about the lack of free transcoding applications (e.g. Handbrake, x264) that support Quick Sync. I suspect things will be better upon Ivy Bridge's arrival.

97 Comments


JonnyDough - Monday, September 19, 2011 - link

4-5 year old GPU? Heh, bud...most hardware takes years to develop. And the HD3000 series may be a bit dated but it makes even the XBox 360 look weak in comparison. Hardly dismal.

moozoo - Saturday, September 17, 2011 - link

Does its GPU support double precision under OpenCL? i.e. cl_khr_fp64

Does Trinity?

Ryan Smith - Saturday, September 17, 2011 - link

We don't have solid details on either one, but don't count on it. The reasons we don't see full FP64 support on non-halo GPUs are still in play for CPUs.

Galcobar - Saturday, September 17, 2011 - link

Perhaps I'm missing something in the acronyms, but the table and text seems to disagree on the availability of SSD caching.

The text states "All of the 7-series consumer chipsets will support Intel's Rapid Storage Technology (RST, aka SSD caching)."

The table, however, puts No under the Z75 column for Intel SRT (SSD caching).

As I understand things, you need RST (software) to support SRT (bound to the motherboard), but without SRT you don't get SSD caching.

Anand Lal Shimpi - Saturday, September 17, 2011 - link

Fixed :) SRT is only on the Z77/H77, not the Z75.

Take care,

Anand

mlkmade - Saturday, September 17, 2011 - link

I know its really early to be talking about this cause ivy won't be out for awhile..but what about what amounts to be "ivyb-e" ? I'm sure details are very scarce...but will it follow the desktop path (both s1155) and be socket compatible? in this case s2011? if ivyb-e is socket compatible with sb-e...that'd be great..but by then all the chipset problems would be fleshed out huh..buy a new mono anyway

Anand Lal Shimpi - Saturday, September 17, 2011 - link

I would hope so, but as of now there is no IVB-E on the roadmaps so anything I'd say here would be uninformed and speculative at this point :-/

Take care,

Anand

ltcommanderdata - Saturday, September 17, 2011 - link

Does Ivy Bridge finally allow the IGP and QuickSync engine to be available even with a discrete GPU plugged in for both mobile and desktop without resorting to specific chipsets (ie. limited to the high-end chipset) or third-party software (relying on motherboard makers and OEMs to deal with Lucid)? With the IGP being OpenCL and DirectCompute capable, even if you have the latest Quad SLI/Crossfire setup it would be useful to have the IGP help out in GPGPU tasks.

And it's interesting that with AMD introducing a beefier form of SMT with two full integer cores, Intel decided not to similarly increase hardware resource duplication to expand Hyperthreading. Instead Intel is focusing on improving single threaded performance by making sure a single thread can use all the resources if Hyperthreading is not needed. Seeing most software isn't making use of 8 simultaneous threads, focusing on making 4 threads (1 per core) work as fast as possible does make sense.

Meegulthwarp - Saturday, September 17, 2011 - link

"As we've already seen, introducing a 35W quad-core part could enable Apple to ship a quad-core IVB in a 13-inch MacBook Pro." Here is to hoping that someone other than apple will also ship a decent 13-inch with a quad.

Other than that great insight, I really hope the GPU on IVB will be half way useable. I think we've hit a point where CPU performance is more than adequate for 95% of consumers. Now just need to up the GPU performance and get power down so we can use our laptops on battery all day. I'm more than happy with my 2 year old C2D CPU performance but want battery life, hugely tempted with AMD's A6-3400M. But with Bulldozer looming I think I may hold back for 6 months.

Anand Lal Shimpi - Saturday, September 17, 2011 - link

I hope so too, I simply used Apple as an example because it has migrated to quad-core in every member of its MBP family with the exception of the 13-inch. I've updated the statement to be a bit more broad :)

Take care,

Anand