AMD's Graphics Core Next Preview: AMD's New GPU, Architected For Compute

by Ryan Smith on December 21, 2011 9:38 PM ESTNot Just A New Architecture, But New Features Too

So far we’ve talked about Graphics Core Next as a new architecture, how that new architecture works, and what that new architecture does that Cayman and other VLIW architectures could not. But along with the new architecture GCN will bring with it a number of new compute features to further flesh out AMD’s GPU computing capabilities and to cement the GPU’s position as the CPU’s partner rather than a subservient peripheral.

In terms of base features the biggest change will be that GCN will implement the underlying features necessary to support C++ and other advanced languages. As a result GCN will be adding support for pointers, virtual functions, exception support, and even recursion. These underlying features mean that developers will not need to “step down” from higher languages to C to write code for the GPU, allowing them to more easily program for the GPU and CPU within the same application. For end-users the benefit won’t be immediate, but eventually it will allow for more complex and useful programs to be GPU accelerated.

Because the underlying feature set is evolving, the memory subsystem is also evolving to be able to service those features. The chief change here is that the hardware is being adapted to support an ISA that uses unified memory. This goes hand-in-hand with the earlier language features to allow programmers to write code to target both the CPU and the GPU, as programs (or rather compilers) can reference memory anywhere, without the need to explicitly copy memory from one device to the other before working on it. Now there’s still a significant performance impact when accessing off-GPU memory – particularly in the case of dGPUs where on-board memory is many times faster than system memory – so developers and compilers will still be copying data around to keep it close to the processor that’s going to use it, but this essentially becomes abstracted from developers.

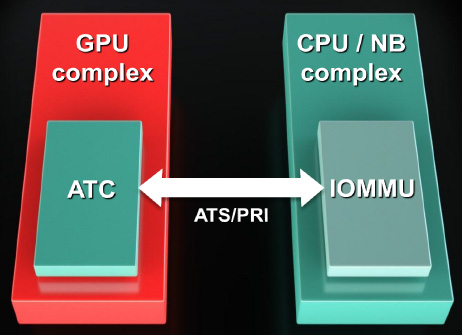

Now what’s interesting is that the unified address space that will be used is the x86-64 address space. All instructions sent to a GCN GPU will be relative to the x86-64 address space, at which point the GPU will be responsible for doing address translation to local memory addresses. In fact GCN will even be incorporating an I/O Memory Mapping Unit (IOMMU) to provide this functionality; previously we’ve only seen IOMMUs used for sharing peripherals in a virtual machine environment. GCN will even be able to page fault half-way gracefully by properly stalling until the memory fetch completes. How this will work with the OS remains to be seen though, as the OS needs to be able to address the IOMMU. GCN may not be fully exploitable under Windows 7.

Finally on the memory side, AMD is adding proper ECC support to supplement their existing EDC (Error Detection & Correction) functionality, which is used to ensure the integrity of memory transmissions across the GDDR5 memory bus. Both the SRAM and VRAM memory can be ECC protected. For the SRAM this is a free operation, while for the VRAM there will be a performance overhead. We’re assuming that AMD will be using a virtual ECC scheme like NVIDIA, where ECC data is distributed across VRAM rather than using extra memory chips/controllers.

Elsewhere we’ve already mentioned FP64 support. All GCN GPUs will support FP64 in some form, making FP64 support a standard feature across the entire lineup. The actual FP64 performance is configurable – the architecture supports ½ rate FP64, but ¼ rate and 1/16 rate are also options. We expect AMD to take a page from NVIDIA here and configure lower-end consumer parts to use the slower rates since FP64 is not currently important for consumer uses.

Finally, for programmers some additional hardware changes have been made to improve debug support by allowing debugging tools to tap the GPU at additional points. The new ISA for GCN will already make debugging easier, but this will further that goal. As with other developer features this won’t directly impact end-users, but it will ultimately lead to better software sooner as the features and tools available for debugging GPU programs have been well behind the well-established tools used for debugging CPU programs.

83 Comments

View All Comments

mariush - Thursday, December 22, 2011 - link

Last paragraph, last page....It’s clear that 2011 is shaping up to be a big year for GPUs, and we’re not even half-way through. So stay tuned, there’s much more to come.

Say what?

ajp_anton - Thursday, December 22, 2011 - link

It's an old article (I'm guessing June 17th based on first comment), but bumped to the top because of the "launch". Don't know why the article's date is new...AbheekG - Friday, August 9, 2019 - link

2019 and still an article worth studying and referring to. Amazing job with this, it's a prime example of quality tech journalism!