AMD's Graphics Core Next Preview: AMD's New GPU, Architected For Compute

by Ryan Smith on December 21, 2011 9:38 PM EST

Many SIMDs Make One Compute Unit

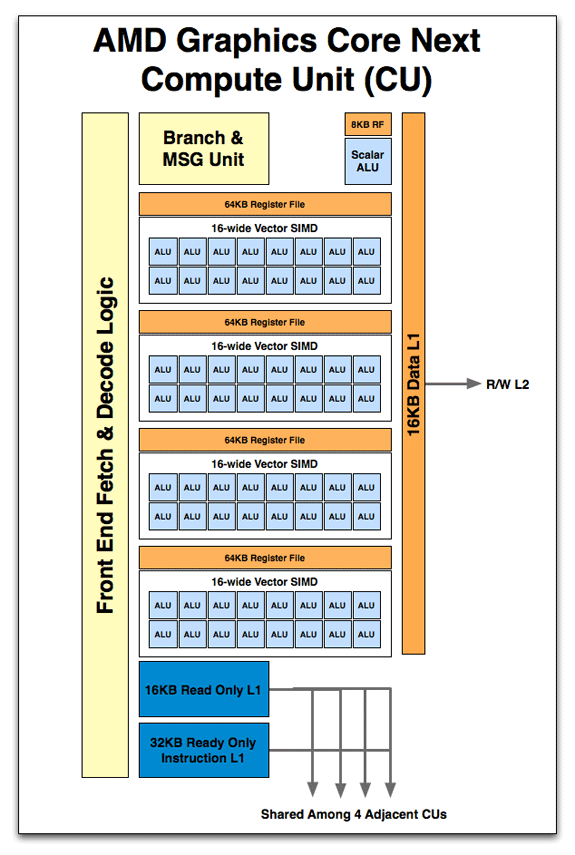

When we move up a level we have the Compute Unit, what AMD considers the fundamental unit of computation. Whereas a single SIMD can execute vector operations and that’s it, combined with a number of other functional units it makes a complete unit capable of the entire range of compute tasks. In practice this replaces a Cayman SIMD, which was a collection of Cayman SPs. However a GCN Compute Unit is capable of far, far more than a Cayman SIMD.

So what’s in a Compute Unit? Just as a Cayman SIMD was a collection of SPs, a Compute Unit starts with a collection of SIMDs. 4 SIMDs are in a CU, meaning that like a Cayman SIMD, a GCN CU can work on 4 instructions at once. Also in a Compute Unit is the control hardware & branch unit responsible for fetching, decoding, and scheduling wavefronts and their instructions. This is further augmented with a 64KB Local Data Store and 16KB of L1 data + texture cache. With GCN data and texture L1 are now one and the same, and texture pressure on the L1 cache has been reduced by the fact that AMD is now keeping compressed rather than uncompressed texels in the L1 cache. Rounding out the memory subsystem is access to the L2 cache and beyond. Finally there is a new unit: the scalar unit. We’ll get back to that in a bit.

But before we go any further, let’s stop here for a moment. Now that we know what a CU looks like and what the weaknesses are of VLIW, we can finally get to the meat of the issue: why AMD is dropping VLIW for non-VLIW SIMD. As we mentioned previously, the weakness of VLIW is that it’s statically scheduled ahead of time by the compiler. As a result if any dependencies crop up while code is being executed, there is no deviation from the schedule and VLIW slots go unused. So the first change is immediate: in a non-VLIW SIMD design, scheduling is moved from the compiler to the hardware. It is the CU that is now scheduling execution within its domain.
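To make the static-scheduling weakness concrete, here is a minimal Python sketch of a compiler packing operations into VLIW bundles. It is a toy model, not AMD's actual compiler: the names `pack_vliw` and `slot_utilization`, the greedy packing rule, and the 4-slot bundle width (chosen to mirror Cayman's VLIW4 design) are all assumptions made for illustration.

```python
from typing import Dict, List, Set


def pack_vliw(deps: Dict[str, Set[str]], order: List[str], width: int = 4) -> List[List[str]]:
    """Greedy static packing (hypothetical model).

    Each op is placed in the earliest bundle that comes strictly after every
    op it depends on and that still has a free slot.
    """
    bundles: List[List[str]] = []
    placed: Dict[str, int] = {}  # op name -> index of the bundle holding it
    for op in order:
        # An op cannot share a bundle with, or precede, its dependencies.
        idx = max((placed[d] + 1 for d in deps.get(op, set())), default=0)
        # Skip bundles that are already full.
        while idx < len(bundles) and len(bundles[idx]) >= width:
            idx += 1
        # Grow the schedule if needed.
        while idx >= len(bundles):
            bundles.append([])
        bundles[idx].append(op)
        placed[op] = idx
    return bundles


def slot_utilization(bundles: List[List[str]], width: int = 4) -> float:
    """Fraction of VLIW slots that actually hold an operation."""
    if not bundles:
        return 0.0
    return sum(len(b) for b in bundles) / (len(bundles) * width)


if __name__ == "__main__":
    ops = ["a", "b", "c", "d"]
    independent = pack_vliw({}, ops)
    chained = pack_vliw({"b": {"a"}, "c": {"b"}, "d": {"c"}}, ops)
    print(independent, slot_utilization(independent))
    print(chained, slot_utilization(chained))
```

In this model four independent operations fill a single bundle, while a four-operation dependency chain needs four bundles with one slot used in each. That is the unused-slot problem described above.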

Now there’s a distinct tradeoff with dynamic hardware scheduling: it can cover up dependencies and other types of stalls, but that hardware scheduler takes up die space. The R300 and earlier GPUs were VLIW because the compiler could do a fine job for graphics, and the die space was better utilized by filling it with additional functional units. Moving scheduling into hardware makes it more dynamic, but it consumes die space previously used for functional units. It’s a tradeoff.

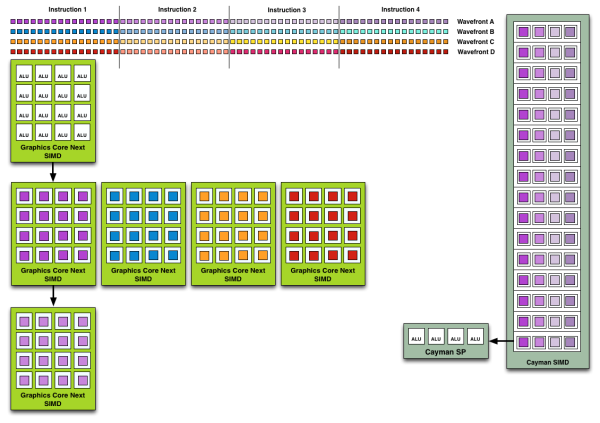

So what can you do with dynamic scheduling and independent SIMDs that you could not do with Cayman’s collection of SPs (SIMDs)? You can work around dependencies and schedule around things. The worst case scenario for VLIW is that something scheduled is completely dependent or otherwise blocking the instruction before and after it – it must be run on its own. Now GCN is not an out-of-order architecture; within a wavefront the instructions must still be executed in order, so you can’t jump through a pixel shader program for example and execute different parts of it at once. However the CU and SIMDs can select a different wavefront to work on; this can be another wavefront spawned by the same task (e.g. a different group of pixels/values) or it can be a wavefront from a different task entirely.

Wavefront Execution Example: SIMD vs. VLIW. Not To Scale - Wavefront Size 16

Cayman had a very limited ability to work on multiple tasks at once. While it could consume multiple wavefronts from the same task with relative ease, its ability to execute concurrent tasks was reliant on the API support, which was limited to an extension to OpenCL. With these hardware changes, GCN can now concurrently work on tasks with relative ease. Each GCN SIMD has 10 wavefronts to choose from, meaning each CU in turn has up to a total of 40 wavefronts in flight. This in a nutshell is why AMD is moving from VLIW to non-VLIW SIMD for Graphics Core Next: instead of VLIW slots going unused due to dependencies, independent SIMDs can be given entirely different wavefronts to work on.
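The latency-hiding idea can be sketched with a small Python simulation of a single SIMD choosing among its resident wavefronts. This is an illustrative model only: the `Wavefront` class, the `run_simd` function, the "first ready wavefront wins" policy, and the abstract issue slots and latencies are assumptions, not a description of AMD's real scheduler. Only the figures of 10 wavefronts per SIMD and 4 SIMDs per CU come from the text.

```python
from dataclasses import dataclass
from typing import List, Tuple

MAX_WAVEFRONTS_PER_SIMD = 10  # from the article
SIMDS_PER_CU = 4              # from the article


@dataclass
class Wavefront:
    # latencies[i]: issue slots that must pass after instruction i issues
    # before this wavefront's next instruction is ready (hypothetical units).
    latencies: List[int]
    pc: int = 0        # index of the next instruction; issued strictly in order
    ready_at: int = 0  # first slot at which the next instruction may issue


def run_simd(wavefronts: List[Wavefront]) -> Tuple[int, int]:
    """Return (total issue slots, idle slots) for one SIMD."""
    if len(wavefronts) > MAX_WAVEFRONTS_PER_SIMD:
        raise ValueError("a SIMD holds at most 10 wavefronts")
    slot = 0
    idle = 0
    while any(w.pc < len(w.latencies) for w in wavefronts):
        for w in wavefronts:
            # Pick the first wavefront whose next in-order instruction is ready.
            if w.pc < len(w.latencies) and w.ready_at <= slot:
                w.ready_at = slot + w.latencies[w.pc]
                w.pc += 1
                break
        else:
            idle += 1  # every remaining wavefront is stalled
        slot += 1
    return slot, idle


if __name__ == "__main__":
    print(run_simd([Wavefront([4, 4, 4])]))
    print(run_simd([Wavefront([4, 4, 4]) for _ in range(4)]))
    print(SIMDS_PER_CU * MAX_WAVEFRONTS_PER_SIMD, "wavefronts in flight per CU")
```

With a single wavefront the SIMD sits idle whenever that wavefront stalls. With several wavefronts available, the same stalls are filled by issuing from another wavefront, even though each wavefront still executes in order.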

As a consequence, compiling also becomes much easier. With the compiler freed from scheduling tasks, compilation behaves in a rather standard manner, since most other architectures are similarly scheduled in hardware. Writing a compiler still isn’t easy, but when it comes to optimizing the execution of a program the compiler can focus on other matters, making it much easier for other languages to target GCN. In fact, without the need to generate long VLIW instructions or to include scheduling information, the underlying ISA for GCN is also much simpler. This makes debugging much easier, since the generated code no longer carries scheduling decisions, as seen in our earlier assembly code example.

Now while leaving behind the drawbacks of VLIW is the biggest architectural improvement for compute performance coming from Cayman, the move to non-VLIW SIMDs is not the only improvement. We still have not discussed the final component of the CU: the Scalar ALU. New to GCN, the scalar unit serves to further keep inefficient operations out of the SIMDs, leaving the vector ALUs on the SIMDs to execute instructions en masse. The scalar unit is composed of a single scalar ALU, along with an 8KB register file.

So what does a scalar unit do? First and foremost it executes “one-off” mathematical operations. Whole groups of pixels/values go through the vector units together, but independent operations go to the scalar unit so as not to waste valuable SIMD time. This includes everything from simple integer operations to control flow operations like conditional branches (if/else) and jumps, and in certain cases read-only memory operations from a dedicated scalar L1 cache. Overall the scalar unit can execute one instruction per cycle, which means it can complete 4 instructions over the period of time it takes for one wavefront to be completed on a SIMD.
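The "4 scalar instructions per wavefront" figure can be reproduced with a few lines of arithmetic. The sketch below assumes a 64-work-item wavefront executed on a 16-lane SIMD; neither number is stated in this section, so treat both as assumptions chosen to be consistent with the 4-cycle figure. Only the scalar rate of one instruction per cycle comes from the text, and the function names are hypothetical.

```python
WAVEFRONT_SIZE = 64  # assumption: work-items per wavefront (not stated in this section)
SIMD_WIDTH = 16      # assumption: vector lanes per SIMD (not stated in this section)
SCALAR_IPC = 1       # from the text: one scalar instruction per cycle


def cycles_per_vector_instruction(wavefront_size: int = WAVEFRONT_SIZE,
                                  simd_width: int = SIMD_WIDTH) -> int:
    """Cycles for one SIMD to push a whole wavefront through one instruction."""
    # Ceiling division: a partially filled final pass still costs a full cycle.
    return -(-wavefront_size // simd_width)


def scalar_instructions_per_wavefront(scalar_ipc: int = SCALAR_IPC) -> int:
    """Scalar instructions that fit in the time of one vector instruction."""
    return scalar_ipc * cycles_per_vector_instruction()


if __name__ == "__main__":
    print(cycles_per_vector_instruction())
    print(scalar_instructions_per_wavefront())
```

Under these assumptions a vector instruction occupies a SIMD for 4 cycles, so the scalar unit can issue 4 instructions in the same window.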

Conceptually this blurs a bit more of the remaining line between a scalar GPU and a vector GPU, but by having both types of units it means that each unit type can work on the operations best suited for it. Besides avoiding feeding SIMDs non-vectorized datasets, this will also improve the latency for control flow operations, where Cayman had a rather nasty 44 cycle latency.

83 Comments


ClagMaster - Tuesday, June 21, 2011 - link

What is being described is tantamount to the Vector Processing that was featured on CRAY supercomputers available in the 70's through 90's. In the machines I once programmed (using CFT77 compiler), a vector was 64 64-bit words that was processed through a pipe.

789427 - Thursday, June 23, 2011 - link

Is it just me, or will we be seeing AMD refresh cycles quadruple for their processors because of on-die graphics?

I sense a prefix/suffix CPU/GPU diversification happening soon - and a bit of confusion with maybe some sideport memory enabled chips coming our way.

2/4/8 cores with

6550, 6750, 6850 level graphics and

512Mb/1Gb sideport

all for $100-$200 and crossfire capable?

Drool now?

cb

Kakkoii - Sunday, August 21, 2011 - link

This pleases me, because this will likely mean that AMD no longer has such a performance per dollar and watt difference from Nvidia. Thus further degrading most arguments AMD fanboys have against Nvidia. I see this being a benefit for Nvidia in the long term. After AMD claiming what Nvidia was doing wasn't right, they basically give up and are doing it themselves now too.

Cyber.Angel - Saturday, October 15, 2011 - link

exactly what I was thinking

AMD/ATI is catching up - in the HPC sector

otherwise they are still a better buy in the consumer market

and in 2012 also in HPC

Nvidia uses too much power

too bad if even Trinity is not using this new GPU design...

Wreckage - Wednesday, December 21, 2011 - link

I'm guessing we won't see product until sometime next year.

tzhu07 - Wednesday, December 21, 2011 - link

Looking forward to buying a 7970 (or possibly a 7950) to go along with my Sandy Bridge build. I'm currently running on Intel HD3000 and it's killing me. But just a few more days now. Hopefully I can hit the refresh button on my browser fast enough to catch one before they sell out.

OwnedKThxBye - Thursday, December 22, 2011 - link

Typo on the last page. At no point has AMD specified when a GPU will appear using GCN will appear, so it’s very much a guessing game.

R3MF - Thursday, December 22, 2011 - link

"We expect AMD to take a page from NVIDIA here and configure lower-end consumer parts to use the slower rates since FP64 is not currently important for consumer uses."

Will AMD be likewise crippling the FP64 support native to the chip, in products that have the resident features, if they are sold in a consumer SKU rather than a more expensive professional SKU?

I refer to nvidia's practice of crippling access to FP64 functionality in Geforce 580 cards that is otherwise available in Tesla 580 products.

zarck - Thursday, December 22, 2011 - link

For the GPGPU GRID, is a test with the Radeon 7970 and Folding@Home possible?

https://fah-web.stanford.edu/projects/FAHClient/wi...

morricone - Thursday, December 22, 2011 - link

I'm a developer myself and you have to look really hard to find an article as good as this. Keep this stuff up!