AMD's Graphics Core Next Preview: AMD's New GPU, Architected For Compute

by Ryan Smith on December 21, 2011 9:38 PM EST

Prelude: The History of VLIW & Graphics

Before we get into the nuts & bolts of Graphics Core Next, perhaps it’s best to start at the bottom, and then work our way up.

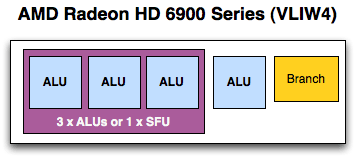

The fundamental unit of AMD’s previous designs has been the Streaming Processor, previously known as the SPU. In every modern AMD design other than Cayman (6900), this is a Very Long Instruction Word 5 (VLIW5) design; Cayman reduced this to VLIW4. As implied by the architectural name, each SP would in turn have 5 or 4 fundamental math units – what AMD now calls Radeon cores – which executed the individual instructions in parallel over as many clocks as necessary. Radeon cores were coupled with registers, a branch unit, and a special function (transcendental) unit as necessary to complete the SP.

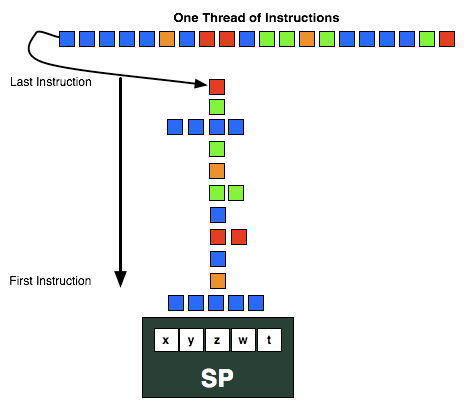

VLIW designs excel at executing many operations from the same task in parallel by breaking the task up into smaller groupings called wavefronts. In AMD’s case a wavefront is a group of 64 pixels/values and the list of instructions to be executed against them. Ideally, a group of 4 or 5 instructions within a wavefront will come down the pipe and be completely independent of one another, allowing every Radeon core to be fed. When dependent instructions come down the pipe, however, fewer instructions can be scheduled at once, and in the worst case only a single instruction can be scheduled. VLIW designs will never achieve perfect efficiency in this regard, but the farther real-world utilization is from ideal efficiency, the weaker the benefits of VLIW.
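
To make the effect of dependencies concrete, here is a minimal Python sketch of a greedy packer that fills fixed-width instruction bundles. It is an illustration under simplifying assumptions, not AMD's shader compiler: the names `pack_bundles` and `utilization` are hypothetical, every operation is assumed to take one bundle slot and one step, and the special function unit is ignored.

```python
from typing import Dict, List, Set


def pack_bundles(deps: Dict[str, Set[str]], width: int) -> List[List[str]]:
    """Greedily pack instructions into bundles of at most `width` slots.

    `deps` maps each instruction to the instructions whose results it needs.
    An instruction can only be placed once all of its inputs were produced
    by an earlier bundle. (Hypothetical helper, for illustration only.)
    """
    done: Set[str] = set()
    remaining = list(deps)  # preserves program order
    bundles: List[List[str]] = []
    while remaining:
        # Instructions whose inputs are all available from earlier bundles.
        ready = [op for op in remaining if deps[op] <= done]
        if not ready:
            raise ValueError("dependency cycle or unknown dependency")
        bundle = ready[:width]
        bundles.append(bundle)
        done.update(bundle)
        remaining = [op for op in remaining if op not in bundle]
    return bundles


def utilization(bundles: List[List[str]], width: int) -> float:
    """Fraction of available slots that actually hold an instruction."""
    used = sum(len(bundle) for bundle in bundles)
    return used / (len(bundles) * width)


# Five independent operations: all of them fit into a single 5-wide bundle.
independent = {f"op{i}": set() for i in range(5)}

# Five operations that each need the previous result: one per bundle.
chain = {"op0": set(), "op1": {"op0"}, "op2": {"op1"},
         "op3": {"op2"}, "op4": {"op3"}}

for name, program in [("independent", independent), ("chain", chain)]:
    bundles = pack_bundles(program, width=5)
    print(f"{name}: {len(bundles)} bundle(s), "
          f"{utilization(bundles, 5):.0%} of slots used")
```

In this toy model the independent case fills every slot of one bundle, while the fully dependent chain needs five bundles with one occupied slot each, which is the worst case described above.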

The use of VLIW can be traced back to the first AMD DX9 GPU, R300 (Radeon 9700 series). If you recall our Cayman launch article, we mentioned that AMD initially used a VLIW design in those early parts because it allowed them to process a 4-component dot product (e.g. w, x, y, z) and a scalar component (e.g. lighting) at the same time, which was by far the most common graphics operation. Even when moving to unified shaders in DX10 with R600 (Radeon HD 2900), AMD still kept the VLIW5 design because the gaming market was still DX9 and using those kinds of operations. But as new games and GPGPU programs have come out, efficiency has dropped over time, and based on AMD’s own internal research at the time of the Cayman launch, the average shader program was utilizing only 3.4 out of 5 Radeon cores. Shrinking from VLIW5 to VLIW4 mitigates this somewhat, but utilization will always be a concern.
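
As a quick back-of-the-envelope check on those figures, the short Python sketch below turns them into slot utilization. The helper name `slot_utilization` is our own, and the worst-case rows simply assume one instruction issued per SP, as in the fully dependent case; AMD has not published these percentages in this form.

```python
def slot_utilization(ops_issued: float, width: int) -> float:
    """Share of an SP's Radeon cores doing useful work (hypothetical helper)."""
    return ops_issued / width


# AMD's quoted average: 3.4 of 5 Radeon cores busy on VLIW5.
average_vliw5 = slot_utilization(3.4, 5)

# Worst case: only a single instruction can be scheduled.
worst_vliw5 = slot_utilization(1, 5)
worst_vliw4 = slot_utilization(1, 4)

print(f"VLIW5 average:    {average_vliw5:.0%}")
print(f"VLIW5 worst case: {worst_vliw5:.0%}")
print(f"VLIW4 worst case: {worst_vliw4:.0%}")
```

In other words, roughly a third of the VLIW5 math hardware was idle on average, and a narrower VLIW4 unit wastes less in the worst case simply because there are fewer slots to leave empty.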

Finally, it’s worth noting what’s in charge of doing all of the scheduling. In the CPU world we throw things at the CPU and let it schedule actions as necessary – it can even go out-of-order (OoO) within a thread if it will be worth it. With VLIW, scheduling is the domain of the compiler. The compiler gets the advantage of knowing about the full program ahead of time and can intelligently schedule some things well in advance, but at the same time it’s blind to other conditions where the outcome is unknown until the program is run and data is provided. Because of this the schedule is said to be static – it’s set at the time of compilation and cannot be changed in-flight.

So why in an article about AMD Graphics Core Next are we going over the quick history of AMD’s previous designs? Without understanding the previous designs, we can’t understand what is new about what AMD is doing, or more importantly why they’re doing it.

83 Comments


ClagMaster - Tuesday, June 21, 2011 - link

What is being described is tantamount to Vector Processing that was featured on CRAY supercomputers available in the 70's through 90's. In the machines I once programmed (using CFT77 compiler), a vector was 64 64-bit words that was processed through a pipe.

789427 - Thursday, June 23, 2011 - link

Is it just me, or will we be seeing AMD refresh cycles quadruple for their processors because of on-die graphics?

I sense a prefix/suffix CPU/GPU diversification happening soon - and a bit of confusion with maybe some sideport memory enabled chips coming our way.

2/4/8 cores with

6550, 6750, 6850 level graphics and

512Mb/1Gb sideport

all for $100-$200 and crossfire capable?

Drool now?

cb

Kakkoii - Sunday, August 21, 2011 - link

This pleases me, because this will likely mean that AMD no longer has such a performance per dollar and watt difference from Nvidia. Thus further degrading most arguments AMD fanboys have against Nvidia. I see this being a benefit for Nvidia in the long term. After AMD claiming what Nvidia was doing wasn't right, they basically give up and are doing it themselves now too.

Cyber.Angel - Saturday, October 15, 2011 - link

exactly what I was thinking

AMD/ATI is catching up - in the HPC sector

otherwise they are still a better buy in the consumer market

and in 2012 also in HPC

Nvidia uses too much power

too bad if even Trinity is not using this new GPU design...

Wreckage - Wednesday, December 21, 2011 - link

I'm guessing we won't see product until sometime next year.

tzhu07 - Wednesday, December 21, 2011 - link

Looking forward to buying a 7970 (or possibly a 7950) to go along with my Sandy Bridge build. I'm currently running on Intel HD3000 and it's killing me. But just a few more days now. Hopefully I can hit the refresh button on my browser fast enough to catch one before they sell out.

OwnedKThxBye - Thursday, December 22, 2011 - link

Typo on the last page. At no point has AMD specified when a GPU will appear using GCN will appear, so it’s very much a guessing game.

R3MF - Thursday, December 22, 2011 - link

"We expect AMD to take a page from NVIDIA here and configure lower-end consumer parts to use the slower rates since FP64 is not currently important for consumer uses."

Will AMD be likewise crippling the FP64 support native to the chip, in products that have the resident features, if they are sold in a consumer SKU rather than a more expensive professional SKU?

I refer to nvidia's practice of crippling access to FP64 functionality in Geforce 580 cards that is otherwise available in Tesla 580 products.

zarck - Thursday, December 22, 2011 - link

For the GPGPU GRID, a test with Radeon 7970 and Folding@Home it's possible?

https://fah-web.stanford.edu/projects/FAHClient/wi...

morricone - Thursday, December 22, 2011 - link

I'm a developer myself and you have to look really hard to find an article as good as this. Keep this stuff up!