AMD's Graphics Core Next Preview: AMD's New GPU, Architected For Compute

by Ryan Smith on December 21, 2011 9:38 PM ESTAMD Graphics Core Next: Out With VLIW, In With SIMD

The fundamental issue moving forward is that VLIW designs are great for graphics; they are not so great for computing. However AMD has for all intents and purposes bet the company on GPU computing – their Fusion initiative isn’t just about putting a decent GPU right on die with a CPU, but then utilizing the radically different design attributes of a GPU to do the computational work that the CPU struggles at. So a GPU design that is great at graphics and poor at computing work simply isn’t sustainable for AMD’s future.

With AMD Graphics Core Next, VLIW is going away in favor of a non-VLIW SIMD design. In principal the two are similar – run lots of things in parallel – but there’s a world of difference in execution. Whereas VLIW is all about extracting instruction level parallelism (ILP), a non-VLIW SIMD is primarily about thread level parallelism (TLP).

Without getting unnecessarily deep into the differences between VLIW and non-VLIW (we’ll save that for another time), the difference in the architectures is about what VLIW does poorly for GPU computing purposes, and why a non-VLIW SIMD fixes it. The principal issue is that VLIW is hard to schedule ahead of time and there’s no dynamic scheduling during execution, and as a result the bulk of its weaknesses follow from that. As VLIW5 was a good fit for graphics, it was rather easy to efficiently compile and schedule shaders under those circumstances. With compute this isn’t always the case; there’s simply a wider range of things going on and it’s difficult to figure out what instructions will play nicely with each other. Only a handful of tasks such as brute force hashing thrive under this architecture.

Furthermore as VLIW lives and dies by the compiler, which means not only must the compiler be good, but that every compiler is good. This is an issue when it comes to expanding language support, as even with abstraction through intermediate languages you can still run into issues, including issues with a compiler producing intermediate code that the shader compiler can’t handle well.

Finally, the complexity of a VLIW instruction set also rears its head when it comes to optimizing and hand-tuning a program. Again this isn’t normally a problem for graphics, but it is for compute. The complex nature of VLIW makes it harder to disassemble and to debug, and in turn difficult to predict performance and to find and fix performance critical sections of the code. Ideally a coder should never have to work in assembly, but for HPC and other uses there is a good deal of performance to be gained by doing so and optimizing down to the single instruction.

AMD provided a short example of this in their presentation, showcasing the example output of their VLIW compiler and their new compiler for Graphics Core Next. Being a coder helps, but it’s not hard to see how contrived things are under VLIW.

VLIW

// Registers r0 contains "a", r1 contains "b"

// Value is returned in r2

00 ALU_PUSH_BEFORE

1 x: PREDGT ____, R0.x, R1.x

UPDATE_EXEC_MASK UPDATE PRED

01 JUMP ADDR(3)

02 ALU

2 x: SUB ____, R0.x, R1.x

3 x: MUL_e R2.x, PV2.x, R0.x

03 ELSE POP_CNT(1) ADDR(5)

04 ALU_POP_AFTER

4 x: SUB ____, R1.x, R0.x

5 x: MUL_e R2.x, PV4.x, R1.x

05 POP(1) ADDR(6)

Non-VLIW SIMD

// Registers r0 contains "a", r1 contains "b"

// Value is returned in r2

v_cmp_gt_f32 r0,r1 //a > b, establish VCC

s_mov_b64 s0,exec //Save current exec mask

s_and_b64 exec,vcc,exec //Do "if"

s_cbranch_vccz label0 //Branch if all lanes fail

v_sub_f32 r2,r0,r1 //result = a - b

v_mul_f32 r2,r2,r0 //result=result * a

s_andn2_b64 exec,s0,exec //Do "else" (s0 & !exec)

s_cbranch_execz label1 //Branch if all lanes fail

v_sub_f32 r2,r1,r0 //result = b - a

v_mul_f32 r2,r2,r1 //result = result * b

s_mov_b64 exec,s0 //Restore exec mask

VLIW: it’s good for graphics, it’s often not as good for compute.

So what does AMD replace VLIW with? They replace it with a traditional SIMD vector processor. While elements of Cayman do not directly map to elements of Graphics Core Next (GCN), since we’ve already been talking about the SP we’ll talk about its closest replacement: the SIMD.

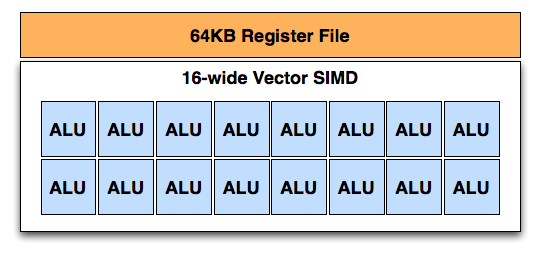

Not to be confused with the SIMD on Cayman (which is a collection of SPs), the SIMD on GCN is a true 16-wide vector SIMD. A single instruction and up to 16 data elements are fed to a vector SIMD to be processed over a single clock cycle. As with Cayman, AMD’s wavefronts are 64 instructions meaning it takes 4 cycles to actually complete a single instruction for an entire wavefront. This vector unit is combined with a 64KB register file and that composes a single SIMD in GCN.

As is the case with Cayman's SPs, the SIMD is capable of a number of different integer and floating point operations. AMD has not gone into fine detail yet of what those are, but we’re expecting something similar to Cayman with the possible exception of how transcendentals are handled. One thing that we do know is that FP64 performance has been radically improved: the GCN architecture is capable of FP64 performance up to ½ its FP32 performance. For home users this isn’t going to make a significant impact right away, but it’s going to help AMD get into professional markets where such precision is necessary.

83 Comments

View All Comments

DoctorPizza - Monday, June 20, 2011 - link

I can't understand that at all.The next architecture will have 16-wide SIMD. How does that fit computational problems better than a 16-wide MIMD VLIW architecture? VLIW can act as if it were SIMD if necessary (simply make each instruction within the word the same, varying only the operands), so how on earth can SIMD be better? SIMD is strictly less general and less flexible than VLIW. This makes it applicable to a narrower set of problems--if you have problems that aren't 16-wide, then you're wasting those additional ALUs, and there's nothing you can do with them, ever. MIMD can't always use them, but there the restriction is unbreakable dependencies, not an inability to encode instructions.

And while VLIW heritage is indeed statically scheduled, nothing about VLIW mandates static scheduling. The next generation Itanium will use dynamic scheduling, for example.

This whole article reads like AMD has offered a rationale for its architectural change, and the author has accepted that rationale without ever stopping to consider if it makes sense.

DoctorPizza - Monday, June 20, 2011 - link

(FYI: the *real* reason to go for SIMD instead of VLIW is simply that VLIW takes up more die area. AMD has decided that the problems people are working on have enough data- and thread-level parallelism that it's not worth having extra decode logic to enable extraction of more instruction-level parallelism.The result is a design that's actually *worse* for general-purpose computation--for non-vector computations, it'll only ever use one of those sixteen ALUs, whereas the previous design could in principle use them all--but better for embarrassingly parallel workloads.

Why the article couldn't say this is anybody's guess.)

Quantumboredom - Tuesday, June 21, 2011 - link

I don't understand your argument. They have moved from 16-wide SIMD where each instruction is a 4-operation VLIW (where there are quite a few restrictions on what that VLIW instruction can actually be) to _four_ 16-wide SIMDs where each instruction is scalar. The new architecture is in every way more general and more suited to a wide range of computational problems while retaining the same power. It does presumably cost more (in terms of area/transistors), but hopefully it will be worth it.DoctorPizza - Tuesday, June 21, 2011 - link

Where does it say that Cayman SPs are ganged into groups of 16? It says they're grouped somehow, but never makes the claim that their groups are as wide as the new SIMD short vectors.Quantumboredom - Tuesday, June 21, 2011 - link

It is well-known that Cypress and Cayman both have arrays of 16 processing elements operating in SIMD mode, and they have to execute work-items from the same work-group over four cycles, leading to a wavefront size of 64. See for example the AMD APP OpenCL Programming Guide 1.3c section 1.2 where this is described. Specifically it says "All stream cores within a compute unit execute the same instruction sequence in lock-step".DoctorPizza - Tuesday, June 21, 2011 - link

"well-known"? I assure you, the vast majority of people have not read AMD's OpenCL Programming Guide.Nonetheless, the article still makes little sense.

A vector of 16 instruction-parallel processors is more versatile than a vector of 16 strictly SISD ones. In the worst case, with unbreakable data dependencies, the former degrades to the latter. In the best case, the former can do 4 (VLIW4) or 5 (VLIW5) times the work of the latter. The average case cited in the article was about 3.5 times.

If you only had one thread of work, the old architecture would tend to be better. For every 64 ALUs (one old VLIW vector or four new SIMD vectors), a single-threaded task would average usage of 56 out of 64 ALUs (3.5 per VLIW) on the old arch, but only 16 out of 64 on the new.

However, AMD is plainly counting on there being many, many potential threads. If you have abundant threads then you can guarantee that you can fill up the remaining 48 ALUs with different threads, whereas the 8 unused ALUs in the VLIW arch are off-limits.

This is a less general architecture, but as long as all your problems are massively parallel, creating all those extra threads shouldn't be a problem. AMD is sacrificing generality in favour of the embarrassingly parallel.

Quantumboredom - Tuesday, June 21, 2011 - link

I actually asked a similar question at the AMD Fusion Developer Summit.The minimum number of wavefronts (i.e., batches of 64 work-items) needed to keep a Cypress/Cayman CU fed is two, while GCN requires four wavefronts (so twice as many). However it is the case that quite often (for all of my programs actually) you really do need four wavefronts per CU on Cypress/Cayman to effectively hide the global memory latency. The guy I was talking to at AMD seemed to thnik that in practice the number of work-items needed would stay about the same between Cayman and GCN for most applications.

I've asked this question on the AMD developer forums as well, but I don't know how many answers will be given about GCN there.

DoctorPizza - Tuesday, June 21, 2011 - link

I certainly wouldn't be surprised to hear that typical GPGPU workloads could inundate the GPU with threads and so provide more than enough wavefronts. The GPGPU workloads are pretty much all of the embarrassingly parallel kind, so creating more threads should tend to be pretty trivial.So your experience certainly makes sense with what I'd expect.

It's not that I think this is necessarily a bad change for the applications that people use GPGPU processing for.

It's more that I'm disputing the implication that this somehow makes the GPU more general and easier to take advantage of; to my mind it's doing the exact opposite of that.

Or to put it another way: virtually every single program has a reasonable amount of instruction level parallelism. Data-/thread-level parallelism is much rarer. We're losing the former to improve the latter.

For problems amenable to massive thread-/data-level parallelism the result should be substantially more ALUs available to process on. But for problems with only limited data-/thread-level parallelism, it's a step backwards.

name99 - Thursday, December 22, 2011 - link

"The next architecture will have 16-wide SIMD. How does that fit computational problems better than a 16-wide MIMD VLIW architecture? VLIW can act as if it were SIMD if necessary (simply make each instruction within the word the same, varying only the operands), so how on earth can SIMD be better? SIMD is strictly less general and less flexible than VLIW."A VLIW system has to have instruction decoders and routers for every instruction, and thus for every data item that is processed.

A SIMD system only has to have one instruction decoder and router for every 16 data items that are processed. If your computations consist primarily of doing the same thing to multiple data items this is a win. (More processing for less power and less silicon.) If your computations do NOT consist primarily of doing the same thing to multiple data items, it's a loss.

Or, to put it differently, is it worth investing silicon in moving instructions around with great facility, or is it better to invest silicon in moving data around with great facility? Seymour Crane thought (for the problems he cared about) the answer was data. I'd like to think AMD know enough about what they are doing that they have the numbers in hand, and have calculated that, once again for them the answer is data.

MySchizoBuddy - Tuesday, June 21, 2011 - link

where is the information about the toolkit to take advantage of this hardware?