AMD's Graphics Core Next Preview: AMD's New GPU, Architected For Compute

by Ryan Smith on December 21, 2011 9:38 PM EST

Final Words

As GPUs have increased in complexity, the refresh cycle has continued to lengthen. 6 month cycles have largely given way to 1 year cycles, and even then it can be 2+ years between architecture refreshes. This is not only a product of the rate of hardware development, but a product of the need to give developers time to breathe and to absorb information about new architectures.

The primary purpose of the AMD Fusion Developer Summit and the announcement of the AMD Graphics Core Next is to give developers even more time to breathe by extending the refresh window backwards as well as forwards. It can take months to years to deliver a program, so the sooner an architecture is introduced the sooner a few brave developers can begin working on programs utilizing it; the alternative is that it may take years after the launch of a new architecture before programs come along that can fully exploit the new architecture. One only needs to take a look at the gaming market to see how that plays out.

Because of this need to inform developers of the hardware well in advance, while we’ve had a chance to see the fundamentals of GCN, products using it are still some time off. At no point has AMD specified when a GPU using GCN will appear, so it’s very much a guessing game. What we know for a fact is that Trinity – the 2012 Bulldozer APU – will not use GCN; it will be based on Cayman’s VLIW4 architecture. Because Trinity will be VLIW4, it’s likely-to-certain that AMD will have midrange and low-end video cards using VLIW4 because of the importance they place on being able to Crossfire with the APU. Does this mean AMD will do another split launch, with high-end parts using one architecture while everything else is a generation behind? It’s possible, but we wouldn’t make any bets at this point in time. Certainly it looks like it will be 2013 before GCN has a chance to become a top-to-bottom architecture, so the question is what the top discrete GPU will be for AMD by the start of 2012.

Moving on, it’s interesting that GCN effectively affirms most of NVIDIA’s architectural changes with Fermi. GCN is all about creating a GPU good for graphics and good for computing purposes; unified addressing, C++ capabilities, ECC, etc. were all features NVIDIA introduced with Fermi more than a year ago to bring about their own compute architecture. I don’t believe there’s ever been a question whether NVIDIA was “right”, but the question has been whether it’s time to devote so much engineering effort and die space to technologies that benefit compute as opposed to putting in more graphics units. With NVIDIA and now AMD doing compute-optimized GPUs, clearly the time is quickly approaching if it’s not already here.

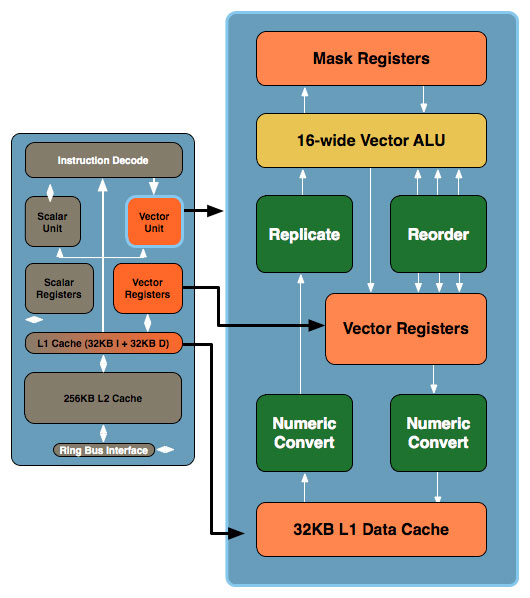

Larrabee As It Was: Scalar + 16-Wide Vector

I can’t help but also make a comparison to Intel’s aborted Larrabee Prime architecture here. There are some very interesting similarities between Larrabee and GCN, primarily in the dual vector/scalar design and in the use of a 16-wide vector ALU. Processing 16 elements at once is an incredibly common occurrence in GPUs – it even shows up in Fermi, which processes half a warp (16 threads) a clock. There are still a million differences between all of these architectures, but there’s definitely a degree of convergence occurring. Previously NVIDIA and AMD converged around VLIW in the days of the graphical GPU, and now we’re converging at a new point for the compute GPU.

Finally, while we’ve talked about the GCN architecture in great detail we haven’t talked about how to program it. Of course there’s OpenCL, but with GCN there’s going to be so much more. Next week we will be taking a look at AMD’s Fusion System Architecture, a high-level abstraction layer that will make GPU programming even more CPU-like, an advancement necessary to bring forth the kind of heterogeneous computing AMD is shooting for. We will also be taking a look at Microsoft’s C++ Accelerated Massive Parallelism (AMP), a C++ extension to bridge the gap between current and future architectures by allowing developers to program for GPUs in C++ even if the GPU doesn’t fully support the C++ feature set.

It’s clear that 2011 is shaping up to be a big year for GPUs, and we’re not even half-way through. So stay tuned, there’s much more to come.

83 Comments


haplo602 - Saturday, June 18, 2011 - link

I hope that AMD delivers. This is exactly what I expected them to do once Llano was announced. GPU as a coprocessor. Actually I hoped that AMD would implement an HTX-capable GPU, so I can just plug it into a C32 socket (for example) along with an Opteron.

The future past Trinity looks interesting.

jamescox - Monday, June 20, 2011 - link

It would be interesting if they produced a form factor with CPU+GPU on a separate card with memory. Ever since AMD moved the memory controller on die, I wondered if we would see CPU + memory on a separate card. It seems to make a lot of sense. A 4 socket motherboard is huge, especially where each socket has 4 to 6 memory slots associated with it. If the CPU and memory were on a separate card, then you could pack them a lot denser, like you can run 4 GPUs off an ATX board now. It might be cheaper than a massive 4 socket board also. I don't know how many HT links you can run through a slot, but you could always use extra cables/connectors like they use for multiple graphics cards.

With the GPU using the same memory space as the CPU, why leave the CPU attached to the slow system memory? Just put one of these hybrid chips attached to some high-speed, graphics-card-like memory on a separate board. Move the slow system memory out to the chipset again. The current memory hierarchy is not exactly optimal in my opinion. I am using a slightly older macbook pro, which only supports 3 GB of memory. With all of the stuff I run, it is paging a lot to a super slow laptop hard drive. I have been tempted to get an SSD to speed it up rather than a new laptop.

Anyway, with the way the memory hierarchy works now, system memory is kind of like a cache for the swap space on disk. System memory has gotten a lot faster, but disks have not, so people are using SSDs to fill the gap. If you directly connect the "graphics memory" to a CPU/GPU combo, then you don't need as much total memory in the system because you would not need multiple copies of the data. You would just pass pointers to data back and forth between the CPU and GPU components.

Also, it would be nice to switch to something non-volatile for the memory connected to the chipset; just use disk as mass storage only. "System" memory wouldn't need to be that fast, since you would probably have a GB or two of high-speed memory on each processor board. The "system" memory would be used more like the SSD boot/swap drive in a current system. I don't think flash is quite there yet, and the other types of non-volatile memory (magnetic RAM, phase-shift RAM, etc.) that promise much better performance and durability seem to still be all talk with no real products.

With keeping the current form factor, it would be nice if they could put a large amount of memory in with the CPU/GPU package to act as high-speed memory for the GPU and L4 cache for the CPU. This form factor doesn't support scaling up to multiple chips easily (too large of main-board), but it would be very power efficient for laptops and other small form factor systems. It would require very little off-module communication which saves a lot of power. Maybe they could use a low-power, wide-interface dram chip originally meant for mobile devices.

Hopefully Trinity is more than just a meaningless code name...

Quantumboredom - Sunday, June 19, 2011 - link

On page 4 ("Many SIMDs Make One Compute Unit") there are two figures showing wavefront scheduling on VLIW4 versus GCN. As I read it the figures seem to indicate that in VLIW4, one 4-wide VLIW handles operations from four wavefronts in parallel, but that's not how I've understood AMD's VLIW4. Only a single work-item is executing on a VLIW4-core at any point in time; the occupancy problems of VLIW4 come from ILP within a work-item, not across wavefronts.

At any one point in time, a Cayman/VLIW4 compute unit is only executing instructions from a single wavefront (though they need at least two wavefronts to switch between on VLIW4). Again at any one point in time only 16 work-items are actually being executed, and it's within those 16 work-items that ILP must be extracted to fill the VLIW4 units. Since each work-item is executing on a VLIW4-processor, a total of 16*4=64 operations can be done in parallel, but that requires ILP within the work-items.

On GCN this is quite different, where the four 16-wide vector units are actually executing 64 work-items at a time (four times as many as in Cayman). However the point is that each of these work-items are basically executing on a scalar processor, there's no need for ILP anymore. So again we are executing 64 operations in parallel, but now without any need for ILP.

At least this is how I understood the presentation (I was at AFDS). Basically I agree with how the GCN scheduling is illustrated in this article, but the Cayman part looks wrong to me. A Cayman CU can only execute one wavefront at a time, and it only needs two wavefronts to switch between to be able to fully utilize the hardware, not four like the figures here seem to suggest.
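The arithmetic in this comment can be sketched as a toy model. This is only an illustration under stated assumptions: the lane counts (16 work-items per clock, 4 VLIW slots, four 16-wide vector units) come from the comment above, while the function names and the `ilp` parameter are made up for the example, and it assumes GCN always has enough wavefronts in flight to keep all four vector units busy.

```python
# Toy model only. Lane counts are taken from the comment above; the function
# names and the "ilp" parameter are illustrative assumptions, not AMD terms.

VLIW_SLOTS = 4             # operations one VLIW4 bundle can hold
WORK_ITEMS_PER_CLOCK = 16  # work-items a Cayman compute unit executes at once
GCN_VECTOR_UNITS = 4       # 16-wide vector units per GCN compute unit
GCN_VECTOR_WIDTH = 16      # lanes per vector unit, one work-item per lane


def cayman_ops_per_clock(ilp: int) -> int:
    """Operations per clock when each work-item exposes `ilp` independent ops.

    Slots beyond the available ILP stay empty, so throughput is capped by
    whichever is smaller: the ILP found or the four VLIW slots.
    """
    filled_slots = max(1, min(ilp, VLIW_SLOTS))
    return WORK_ITEMS_PER_CLOCK * filled_slots


def gcn_ops_per_clock() -> int:
    """Operations per clock on GCN (assumes all four vector units have work).

    Each lane runs one work-item as a scalar instruction stream, so no ILP
    within a work-item is required to fill the hardware.
    """
    return GCN_VECTOR_UNITS * GCN_VECTOR_WIDTH


if __name__ == "__main__":
    for ilp in range(1, VLIW_SLOTS + 1):
        print(f"ILP={ilp}: Cayman {cayman_ops_per_clock(ilp)} ops/clock, "
              f"GCN {gcn_ops_per_clock()} ops/clock")
```

In this model both designs peak at 64 operations per clock, but Cayman only gets there when the compiler finds four independent operations in every work-item, which is the point the comment is making.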

Now I'm just a programmer, not an architecture guy, so if anyone could clear this up for me it would be greatly appreciated :)

Ryan Smith - Monday, June 20, 2011 - link

Hi Quantum;

After further consideration you're basically right. I should have made a distinction in the figures between instructions and wholly distinct wavefronts. While there are some ILP considerations to be had, basically the elements Cayman accepts should all be instructions from the same wavefront rather than different wavefronts. Cayman can't really work on multiple wavefronts at once.

I don't have the original files on me, but we'll get this fixed in the morning to show that Cayman is consuming multiple instructions from the same wavefront.

-Thanks

Ryan Smith

jamescox - Monday, June 20, 2011 - link

Would a CPU/GPU integrated chip only be a replacement for integrated graphics, or does it have the possibility to move a little farther up? With multi-threading, 4 to 8 thread CPUs will be common in the mainstream, but that will not be a very big die on smaller processes. Most PC software doesn't make use of more than 4 compute intensive threads, so how much room does that leave for GPU hardware? If they solve the memory speed problem by integrating some high-speed memory into the socket (multi-chip module), or something, then it seems like they could possibly get more mainstream performance out of an integrated chip.

If the integrated GPU isn't being used for graphics, then I really don't see that much software that would use it for compute in the PC space. One of the main things mentioned was usually video encode/decode, but it seems that the best solution is to include specific media encode/decode hardware like sandy bridge does. It seems to be just as fast and much more power efficient. If AMD doesn't include a media processing engine, then that could still be a reason to go with Intel. What other PC software could use the compute power?

There is plenty of software that could use it in professional/HPC markets, so it makes sense to make a GPU that can be used for both if it doesn't sacrifice the graphics performance. The newest generations of GPUs have some things in common with Larrabee and Sony's Cell processors, except both of those tried to move too much of the graphics processing abilities into software. AMD didn't make that mistake, but talk of compute abilities for GPUs in the PC/consumer space seems a bit premature without any real applications to take advantage of it.

GaMEChld - Monday, June 20, 2011 - link

Llano already has low-level discrete GPU performance, and that's just the tip of the iceberg. You are correct that on smaller processes they will be able to allocate more space to the GPU while maintaining CPU performance. I believe the successor to Trinity (which is the Bulldozer-based successor to Llano) is supposed to be on 28nm. If everything goes exactly right, you could potentially have some kind of monster that has i5-2500K CPU performance with Radeon 6800 GPU performance in some mainstream laptop chip a year or two down the road. (Those numbers are all pure speculation.)

I encourage everyone to take a moment and remember the first computer you ever used, just to pay homage to what we are capable of as a species in just a few short years.

I remember an IBM computer flipped on by a big red toggle that took 2 minutes to boot to a dos prompt...

Targon - Monday, June 20, 2011 - link

I remember the Timex Sinclair, with 2KB of memory standard hooked up to a black and white TV and cassette tapes to save/load programs. Z80 running at 1MHz...the old 5.25 inch floppies were MUCH better, at least you could get a list of what was on the storage medium without having to load it.

jabber - Monday, June 20, 2011 - link

If only our attitudes to each other and other issues had advanced as much as well.

GaMEChld - Monday, June 20, 2011 - link

"Because in the end, aren't all religions the same? They tell us what to eat, when to pray, that this lump of clay called Man can somehow shape himself to resemble the divine. But we can never attain that perfect grace if we have hatred in our hearts. So let us celebrate our commonalities. Some of us don't eat pork. Some of us don't eat shellfish. But we all eat chicken. So spread the word: peace and chicken!"

~HOMER SIMPSON

:-D

Cyber.Angel - Saturday, October 15, 2011 - link

off-topic?

7th day Adventists don't eat meat, yes, not even chicken

AND

in Christian religion it's God who sacrifices, not human

PLUS

there is a requirement of TOTAL change according to Jesus

That is, the "ME" is buried, forgotten and God lives inside of you

meaning a total change in life

God bless America - but...where is the change?