AMD's Graphics Core Next Preview: AMD's New GPU, Architected For Compute

by Ryan Smith on December 21, 2011 9:38 PM ESTAnd Many Compute Units Make A GPU

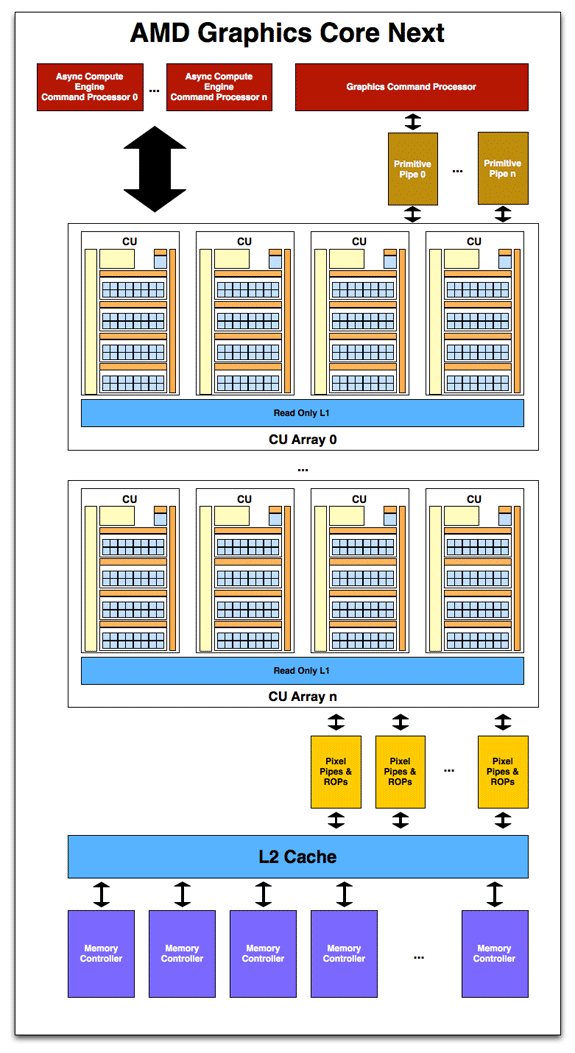

While the compute unit is the fundamental unit of computation, it is not a GPU on its own. As with SIMDs in Cayman it’s a configurable building block for making a larger GPU, with a GPU implementing a suitable number of CUs in multiples of 4. Like past GPUs this will be the primary way to scale the GPU to the desired die size, but of course this isn’t the only element of the design that scales.

With a suitable number of CUs in hand, it’s time to attach the rest of units that make up a GPU. As this is a high-level overview on the part of AMD they haven’t gone into great deal on what each unit does and how it does it, but as the first GCN product gets closer to launching the picture will take on a more complete form.

Starting with memory and cache, GCN will once more pair its L2 cache with its memory controllers. The architecture supports 64KB or 128KB of L2 cache per memory controller, and given that AMD’s memory controllers are typically 64bits each, this means a Cayman-like design would likely have 512KB of L2 cache. The L2 cache is write-back, and will be fully coherent so that all CUs will see the same data, saving expensive trips to VRAM for synchronization. CPU/GPU synchronization will also be handled at the L2 cache level, where it will be important to maintain coherency between the two in order to efficiently split up a task between the CPU and GPU. For APUs there is a dedicated high-speed bus between the two, while discrete GPUs will rely on PCIe’s coherency protocols to keep the CPU and dGPU in sync.

Meanwhile on the compute side, AMD’s new Asynchronous Compute Engines serve as the command processors for compute operations on GCN. The principal purpose of ACEs will be to accept work and to dispatch it off to the CUs for processing. As GCN is designed to concurrently work on several tasks, there can be multiple ACEs on a GPU, with the ACEs deciding on resource allocation, context switching, and task priority. AMD has not established an immediate relationship between ACEs and the number of tasks that can be worked on concurrently, so we’re not sure whether there’s a fixed 1:X relationship or whether it’s simply more efficient for the purposes of working on many tasks in parallel to have more ACEs.

One effect of having the ACEs is that GCN has a limited ability to execute tasks out of order. As we mentioned previously GCN is an in-order architecture, and the instruction stream on a wavefront cannot be reodered. However the ACEs can prioritize and reprioritize tasks, allowing tasks to be completed in a different order than they’re received. This allows GCN to free up the resources those tasks were using as early as possible rather than having the task consuming resources for an extended period of time in a nearly-finished state. This is not significantly different from how modern in-order CPUs (Atom, ARM A8, etc) handle multi-tasking.

On the other side of the coin we have the graphics hardware. As with Cayman a graphics command processor sits at the top of the stack and is responsible for farming out work to the various components of the graphics subsystem. Below that Cayman’s dual graphics engines have been replaced with multiple primitive pipelines, which will serve the same general purpose of geometry and fixed-function processing. Primative pipelines will be responsible for tessellation, geometry, and high-order surface processing among other things. Whereas Cayman was limited to 2 such units, GCN will be fully scalable, so AMD will be able to handle incredibly large amounts of geometry if necessary.

After a trip through the CUs, graphics work then hits the pixel pipelines, which are home to the ROPs. As it’s customary to have a number of ROPs, there will be a scalable number of pixel pipelines in GCN; we expect this will be closely coupled with the number of memory controllers to maintain the tight ROP/L2/Memory integration that’s so critical for high ROP performance.

Unfortunately, those of you expecting any additional graphics information will have to sit tight for the time being. As was the case with NVIDIA’s early reveal of Fermi in 2009, AFDS is a development show, and GCN’s early reveal is about the compute capabilities rather than the graphics capabilities. AMD needs to prime developers for GCN now, so that when GCN appears in an APU developers are ready for it. We’ll find out more about the capabilities of the ROPs, the primitive pipelines, the texture mapping units, the display controllers and other dedicated hardware blocks farther down the line.

In the meantime AMD did throw out one graphics tidbit: partially resident textures (PRT). PRTs allow for only part of a texture to actually be loaded in memory, allowing developers to use large textures without taking the performance hit of loading the entire texture into memory if parts of it are going unused. John Carmack already does something very similar in software with his MegaTexture technology, which is used in the id Tech 4 and id Tech 5 engines. This is essentially a hardware implementation of that technology.

83 Comments

View All Comments

haplo602 - Saturday, June 18, 2011 - link

I hope that AMD delivers. This is exactly what I expected them to do once Llano was anounced. GPU as a coprocessor. Actualy I hoped that AMD would implement a HTX capable GPU, so I can just plug it into a C32 socket (for example) along with an Opteron.The future past Trinity looks interesting.

jamescox - Monday, June 20, 2011 - link

It would be interesting if they produced a form factor with CPU+GPU on a separate card with memory. Ever since AMD moved the memory controller on die, I wondered if we would see CPU + memory on a separate card. It seems to make a lot of sense. A 4 socket motherboard is huge, especially where each socket has 4 to 6 memory slots associated with it. If the CPU and memory were on a separate card, then you could pack them a lot denser, like you can run 4 GPUs off an ATX board now. It might be cheaper than a massive 4 socket board also. I don't know how many HT links you can run through a slot, but you could always use extra cables/connectors like they use for multiple graphics cards.With the GPU using the same memory space as the CPU, then why leave the CPU attached to the slow system memory? Just put one of these hybrid chips attached to some high-speed graphics card like memory on a separate board. Move the slow system memory out to the chipset again. The current memory hierarchy is not exactly optimal in my opinion. I am using a slightly older macbook pro, which only supports 3 GB of memory. With all of the stuff I run, it is paging a lot to a super slow laptop hard drive. I have been tempted to get an SSD to speed it up rather than a new laptop.

Anyway, with the way the memory hierarchy works now, system memory is kind of like a cache for the swap space on disk. System memory has gotten a lot faster, but disk have not, so people are using SSDs to fill the gap. If you directly connect the "graphics memory" to a CPU/GPU combo, then you don't need as much total memory in the system because you would not need multiple copies of the data. You would just pass pointers to data back and forth between the CPU and GPU components.

Also, it would be nice to switch to something non-volatile for the memory connected to the chipset; just use disk as mass storage only. "System" memory wouldn't need to be that fast, since you would probably have a GB or two of high-speed memory on each processor board. The "system" memory would be used more like the SSD boot/swap drive in a current system. I don't think flash is quite there yet, and the other types of non-volatile memory (magnetic RAM , phase-shift RAM, etc) that promise much better performance and durability seem to still be all talk with no real products.

With keeping the current form factor, it would be nice if they could put a large amount of memory in with the CPU/GPU package to act as high-speed memory for the GPU and L4 cache for the CPU. This form factor doesn't support scaling up to multiple chips easily (too large of main-board), but it would be very power efficient for laptops and other small form factor systems. It would require very little off-module communication which saves a lot of power. Maybe they could use a low-power, wide-interface dram chip originally meant for mobile devices.

Hopefully Trinity is more than just a meaningless code name...

Quantumboredom - Sunday, June 19, 2011 - link

On page 4 ("Many SIMDs Make One Compute Unit") there are two figures showing wavefront scheduling on VLIW4 versus GCN. As I read it the figures seem to indicate that in VLIW4, one 4-wide VLIW handles operations from four wavefronts in parallel, but that's not how I've understood AMD's VLIW4. Only a single work-item is executing on a VLIW4-core at any point in time, the occupancy problems of VLIW4 come from ILP within a work-item, not across wavefronts.At any one point in time, a Cayman/VLIW4 compute unit is only executing instructions from a single wavefront (though they need at least two wavefronts to switch between on VLIW4). Again at any one point in time only 16 work-items are actually being executed, and it's within those 16 work-items that ILP must be extraced to fill the VLIW4 units. Since each work-item is executing on a VLIW4-processor, a total of 16*4=64 operations can be done in parallel, but that requires ILP within the work-items.

On GCN this is quite different, where the four 16-wide vector units are actually executing 64 work-items at a time (four times as many as in Cayman). However the point is that each of these work-items are basically executing on a scalar processor, there's no need for ILP anymore. So again we are executing 64 operations in parallel, but now without any need for ILP.

At least this is how I understood the presentation (I was at AFDS). Basically I agree with how the GCN scheduling is illustrated in this article, but the Cayman part looks wrong to me. A Cayman CU can only execute one wavefront at a time, and it only needs two wavefronts to switch between to be able to fully utilize the hardware, not four like the figures here seem to suggest.

Now I'm just a programmer, not an architecture guy, so if anyone could clear this up for me it would be greatly appreciated :)

Ryan Smith - Monday, June 20, 2011 - link

Hi Quantum;After further consideration you're basically right. I should have made a distinction in the figures between instructions and wholly distinct wavefronts. While there are some ILP considerations to be had, basically the elements Cayman accepts should all be instructions from the same wavefront rather than different wavefronts. Cayman can't really work on multiple wavefronts at once.

I don't have the original files on me, but we'll get this fixed in the morning to show that Cayman is consuming multiple instructions from the same wavefront.

-Thanks

Ryan Smith

jamescox - Monday, June 20, 2011 - link

Would a CPU/GPU integrated chip only be a replacement for integrated graphics, or does it have the possibility to move a little farther up? With multi-threading, 4 to 8 thread CPUs will be common in the mainstream, but that will not be a very big die on smaller processes. Most PC software doesn't make use of more than 4 compute intensive threads, so how much room does that leave for GPU hardware? If they solve the memory speed problem by integrating some high-speed memory into the socket (multi-chip module), or something, then it seems like they could possibly get more mainstream performance out of an integrated chip.

If the integrated GPU isn't being used for graphics, then I really don't see that much software that would use it for compute in the PC space. One of the main things mentioned was usually video encode/decode, but it seems that the best solution is to include specific media encode/decode hardware like sandy bridge does. It seems to be just as fast and much more power efficient. If AMD doesn't include a media processing engine, then that could still be a reason to go with Intel. What other PC software could use the compute power?

There is plenty of software that could use it in professional/HPC markets, so it makes sense to make a GPU that can be used for both if it doesn't sacrifice the graphics performance. The newest generations of GPUs have some things in common with Larrabee and Sony's Cell processors, except both of those tried to move too much of the graphics processing abilities into software. AMD didn't make that mistake, but talk of compute abilities for GPUs in the PC/consumer space seems a bit premature without any real applications to take advantage of it.

GaMEChld - Monday, June 20, 2011 - link

Llano already has low level discrete GPU performance, and that's just the tip of the iceberg. You are correct that on smaller processes they will be able to allocate more space to the GPU while maintaining CPU performance. I believe the successor to Trinity (which is the Bulldozer based successor to Llano) is supposed to be on 28nm. If everything goes exactly right, you could potentially have some kind of monster that has i5-2500K CPU performance with Radeon 6800 GPU performance in some maintstream laptop chip a year or two down the road. (Those numbers are all pure speculation)I encourage everyone to take a moment and remember the first computer you ever used, just to pay homage to what we are capable of as a species in just a few short years.

I remember an IBM computer flipped on by a big red toggle that took 2 minutes to boot to a dos prompt...

Targon - Monday, June 20, 2011 - link

I remember the Timex Sinclaire, with 2KB of memory standard hooked up to a black and white TV and cassette tapes to save/load programs. Z80 running at 1MHz...the old 5.25 inch floppies were MUCH better, at least you could get a list of what was on the storage medium without having to load it.jabber - Monday, June 20, 2011 - link

If only our attitudes to each other and other issues had advanced as much as well.GaMEChld - Monday, June 20, 2011 - link

"Because in the end, aren't all religions the same? They tell us what to eat, when to pray, that this lump of clay called Man can somehow shape himself to resemble the divine. But we can never attain that perfect grace if we have hatred in our hearts. So let us celebrate our commonalites. Some of us don't eat pork. Some of us don't eat shellfish. But we all eat chicken. So spread the word: peace and chicken!"~HOMER SIMPSON

:-D

Cyber.Angel - Saturday, October 15, 2011 - link

off-topic?7th day Adventist don't eat meat, yes, not even chicken

AND

in Christian religion it's God who sacrifices, not human

PLUS

there is a requirement of TOTAL change according to Jesus

That is, the "ME" is buried, forgotten and God lives inside of you

meaning a total change in life

God bless America - but...where is the change?