AMD's Graphics Core Next Preview: AMD's New GPU, Architected For Compute

by Ryan Smith on December 21, 2011 9:38 PM ESTNot Just A New Architecture, But New Features Too

So far we’ve talked about Graphics Core Next as a new architecture, how that new architecture works, and what that new architecture does that Cayman and other VLIW architectures could not. But along with the new architecture GCN will bring with it a number of new compute features to further flesh out AMD’s GPU computing capabilities and to cement the GPU’s position as the CPU’s partner rather than a subservient peripheral.

In terms of base features the biggest change will be that GCN will implement the underlying features necessary to support C++ and other advanced languages. As a result GCN will be adding support for pointers, virtual functions, exception support, and even recursion. These underlying features mean that developers will not need to “step down” from higher languages to C to write code for the GPU, allowing them to more easily program for the GPU and CPU within the same application. For end-users the benefit won’t be immediate, but eventually it will allow for more complex and useful programs to be GPU accelerated.

Because the underlying feature set is evolving, the memory subsystem is also evolving to be able to service those features. The chief change here is that the hardware is being adapted to support an ISA that uses unified memory. This goes hand-in-hand with the earlier language features to allow programmers to write code to target both the CPU and the GPU, as programs (or rather compilers) can reference memory anywhere, without the need to explicitly copy memory from one device to the other before working on it. Now there’s still a significant performance impact when accessing off-GPU memory – particularly in the case of dGPUs where on-board memory is many times faster than system memory – so developers and compilers will still be copying data around to keep it close to the processor that’s going to use it, but this essentially becomes abstracted from developers.

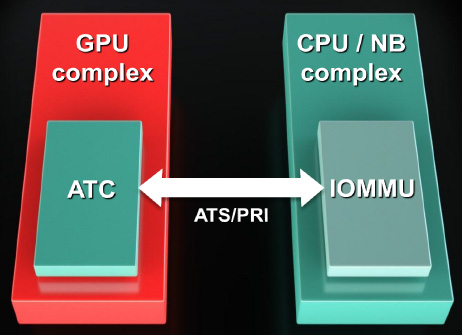

Now what’s interesting is that the unified address space that will be used is the x86-64 address space. All instructions sent to a GCN GPU will be relative to the x86-64 address space, at which point the GPU will be responsible for doing address translation to local memory addresses. In fact GCN will even be incorporating an I/O Memory Mapping Unit (IOMMU) to provide this functionality; previously we’ve only seen IOMMUs used for sharing peripherals in a virtual machine environment. GCN will even be able to page fault half-way gracefully by properly stalling until the memory fetch completes. How this will work with the OS remains to be seen though, as the OS needs to be able to address the IOMMU. GCN may not be fully exploitable under Windows 7.

Finally on the memory side, AMD is adding proper ECC support to supplement their existing EDC (Error Detection & Correction) functionality, which is used to ensure the integrity of memory transmissions across the GDDR5 memory bus. Both the SRAM and VRAM memory can be ECC protected. For the SRAM this is a free operation, while for the VRAM there will be a performance overhead. We’re assuming that AMD will be using a virtual ECC scheme like NVIDIA, where ECC data is distributed across VRAM rather than using extra memory chips/controllers.

Elsewhere we’ve already mentioned FP64 support. All GCN GPUs will support FP64 in some form, making FP64 support a standard feature across the entire lineup. The actual FP64 performance is configurable – the architecture supports ½ rate FP64, but ¼ rate and 1/16 rate are also options. We expect AMD to take a page from NVIDIA here and configure lower-end consumer parts to use the slower rates since FP64 is not currently important for consumer uses.

Finally, for programmers some additional hardware changes have been made to improve debug support by allowing debugging tools to tap the GPU at additional points. The new ISA for GCN will already make debugging easier, but this will further that goal. As with other developer features this won’t directly impact end-users, but it will ultimately lead to better software sooner as the features and tools available for debugging GPU programs have been well behind the well-established tools used for debugging CPU programs.

83 Comments

View All Comments

Targon - Sunday, June 19, 2011 - link

AMD wants to put an end to the GPU in the chipset, but no one expects dedicated CPU and GPU to go away. Now, the code that would take advantage of the APU would probably work with a full AMD CPU/AMD GPU combination, so the software side of things would not need a lot of change to support both configurations.khimera2000 - Sunday, June 19, 2011 - link

Agree, dedicated cards will not go away, however intergrated cards like the past will.I think we see Eye to Eye on this. AMD wants to take full advantage of all its hardware, It looks like the way there trying to do it is by combining the CPU and Intergrated GPU into one package, after which they want to set it up so infromation that goes into that package dosent have to leave to be processed, like sending it out to ram from the CPU only to be read by the GPU.

Still want to see how this will work across PCI-E. I can already see future reviews and comparisons on how effetive GPU acceleration is on there intergrated aproach VS discreet cards. AND Buying those discreet cards :D

By the time these parts comes out my desktop will be right in the middle of its upgrade cycle :D

Targon - Monday, June 20, 2011 - link

AMD needs to push for the HTX slot again for discrete video, where there is a direct HyperTransport link between the CPU and whatever is plugged into that slot. PCI-Express is decent, but HTX would and should blow the doors off PCI-Express.rnssr71 - Friday, June 17, 2011 - link

i wish this coming next year especially in Trinity but at lest they are heading in the right direction:) also, to those wondering about improvements in gaming ability, look what amd did with cayman vs cypress- the improved efficiency and noticeably improved performance on the same manufacturing. http://www.anandtech.com/bench/Product/294?vs=331GCN this is going to improve efficiency even farther and they are cutting the transistor size roughly in half.

nlr_2000 - Saturday, June 18, 2011 - link

"Unfortunately, those of you expecting any additional graphics information will have to sight tight for the time being." sight = sitEnerJi - Saturday, June 18, 2011 - link

I wonder if this architecture would be a particularly good fit for a next-generation Xbox (due around 2013)? Any thoughts on this?GaMEChld - Saturday, June 18, 2011 - link

2013? I heard 2015, unless they recently changed dates to counter Nintendo. Anyways, I'm not so sure what benefits a console will realize from this, since full blown PC's barely get to utilize much of the technology we currently have access to. Multi-threading, 64-bit support, advanced cpu instructions are all available yet barely utilized features.Also, consoles are designed to be cost effective and relatively cheap, so usually modified older generation architecture is used. For example, the new Wii uses Radeon 4700 class graphics, which sounds old but is roughly twice as powerful as the X360 (Radeon X1900) or PS3 (GF7000) graphics.

DanNeely - Saturday, June 18, 2011 - link

That's true of the Wii because Nintendo doesn't subsidize the console, but MS and Sony have gone after higher end GPUs for their last launches. The XBox 360 launched using a GPU similar to that of the ATI 1900, a bare month and a half after the card hit the market.The PS3 used a GF7800 derivative and launched roughly 1 year after the GF7800 did. The GF7900 was nVidias top of the line card at the time, but it was only a marginal improvement over the 7800.swaaye - Saturday, June 18, 2011 - link

PS3 actually launched about when G80 came out, which obviously made RSX look awfully retro when you saw 7900GTX SLI being beaten in reviews by a single board. ;) But G80 surely was never an option for a console due to size and power.Xenos has less than half of the pixel fillrate of X1900. X1900 also has 48 pixel shader units + 8 vertex shaders so it might have an advantage over Xenos 48 unified units, especially when clock speed and the access to a large RAM pool over a 256-bit bus are taken into account.

GaMEChld - Sunday, June 19, 2011 - link

But we must also bear in mind that X360 and PS3 may have chosen high on the scale because of the concurrent shift to 720p/1080p resolution instead of the old 480p standard. At this point in time, the 1080p resolution is standardized, so greatly escalating GPU horsepower will show diminishing gains, since people aren't really going to be gaming on higher resolutions than the new standard tv resolution.What I mean is, if a Radeon 5000 Series could maximize all graphics quality at 1080p, why would a console manufacturer bother with more power?

For example, you wouldn't buy a GTX590 or Radeon 6990 just to game on a 1080p monitor, would you?

The only exception I can think of for this TV resolution argument is 3DTV gaming, in which case I am not well versed in the added GPU overhead required to render a 3D game.