AMD's Graphics Core Next Preview: AMD's New GPU, Architected For Compute

by Ryan Smith on December 21, 2011 9:38 PM ESTAnd Many Compute Units Make A GPU

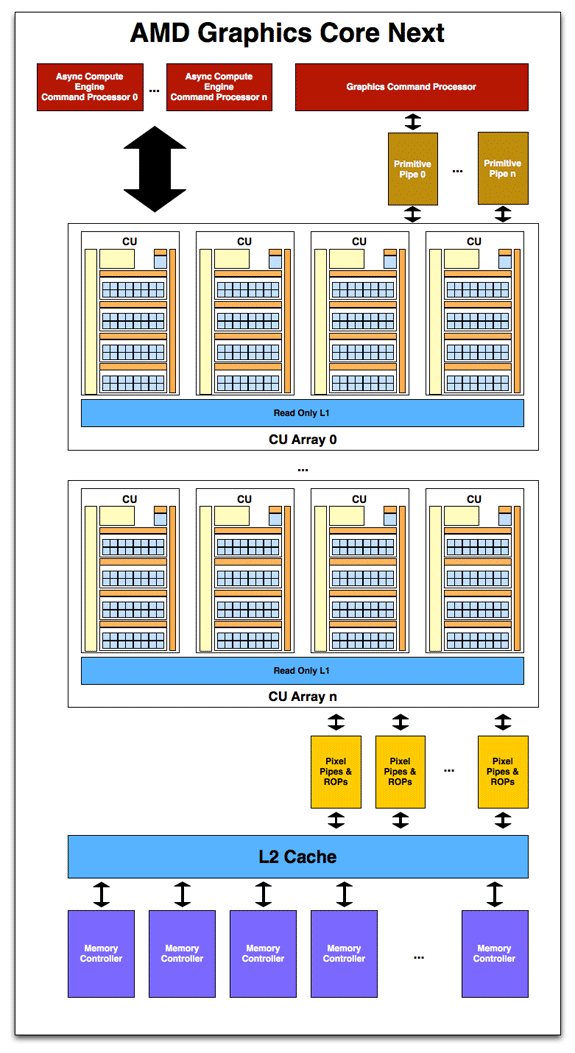

While the compute unit is the fundamental unit of computation, it is not a GPU on its own. As with SIMDs in Cayman it’s a configurable building block for making a larger GPU, with a GPU implementing a suitable number of CUs in multiples of 4. Like past GPUs this will be the primary way to scale the GPU to the desired die size, but of course this isn’t the only element of the design that scales.

With a suitable number of CUs in hand, it’s time to attach the rest of units that make up a GPU. As this is a high-level overview on the part of AMD they haven’t gone into great deal on what each unit does and how it does it, but as the first GCN product gets closer to launching the picture will take on a more complete form.

Starting with memory and cache, GCN will once more pair its L2 cache with its memory controllers. The architecture supports 64KB or 128KB of L2 cache per memory controller, and given that AMD’s memory controllers are typically 64bits each, this means a Cayman-like design would likely have 512KB of L2 cache. The L2 cache is write-back, and will be fully coherent so that all CUs will see the same data, saving expensive trips to VRAM for synchronization. CPU/GPU synchronization will also be handled at the L2 cache level, where it will be important to maintain coherency between the two in order to efficiently split up a task between the CPU and GPU. For APUs there is a dedicated high-speed bus between the two, while discrete GPUs will rely on PCIe’s coherency protocols to keep the CPU and dGPU in sync.

Meanwhile on the compute side, AMD’s new Asynchronous Compute Engines serve as the command processors for compute operations on GCN. The principal purpose of ACEs will be to accept work and to dispatch it off to the CUs for processing. As GCN is designed to concurrently work on several tasks, there can be multiple ACEs on a GPU, with the ACEs deciding on resource allocation, context switching, and task priority. AMD has not established an immediate relationship between ACEs and the number of tasks that can be worked on concurrently, so we’re not sure whether there’s a fixed 1:X relationship or whether it’s simply more efficient for the purposes of working on many tasks in parallel to have more ACEs.

One effect of having the ACEs is that GCN has a limited ability to execute tasks out of order. As we mentioned previously GCN is an in-order architecture, and the instruction stream on a wavefront cannot be reodered. However the ACEs can prioritize and reprioritize tasks, allowing tasks to be completed in a different order than they’re received. This allows GCN to free up the resources those tasks were using as early as possible rather than having the task consuming resources for an extended period of time in a nearly-finished state. This is not significantly different from how modern in-order CPUs (Atom, ARM A8, etc) handle multi-tasking.

On the other side of the coin we have the graphics hardware. As with Cayman a graphics command processor sits at the top of the stack and is responsible for farming out work to the various components of the graphics subsystem. Below that Cayman’s dual graphics engines have been replaced with multiple primitive pipelines, which will serve the same general purpose of geometry and fixed-function processing. Primative pipelines will be responsible for tessellation, geometry, and high-order surface processing among other things. Whereas Cayman was limited to 2 such units, GCN will be fully scalable, so AMD will be able to handle incredibly large amounts of geometry if necessary.

After a trip through the CUs, graphics work then hits the pixel pipelines, which are home to the ROPs. As it’s customary to have a number of ROPs, there will be a scalable number of pixel pipelines in GCN; we expect this will be closely coupled with the number of memory controllers to maintain the tight ROP/L2/Memory integration that’s so critical for high ROP performance.

Unfortunately, those of you expecting any additional graphics information will have to sit tight for the time being. As was the case with NVIDIA’s early reveal of Fermi in 2009, AFDS is a development show, and GCN’s early reveal is about the compute capabilities rather than the graphics capabilities. AMD needs to prime developers for GCN now, so that when GCN appears in an APU developers are ready for it. We’ll find out more about the capabilities of the ROPs, the primitive pipelines, the texture mapping units, the display controllers and other dedicated hardware blocks farther down the line.

In the meantime AMD did throw out one graphics tidbit: partially resident textures (PRT). PRTs allow for only part of a texture to actually be loaded in memory, allowing developers to use large textures without taking the performance hit of loading the entire texture into memory if parts of it are going unused. John Carmack already does something very similar in software with his MegaTexture technology, which is used in the id Tech 4 and id Tech 5 engines. This is essentially a hardware implementation of that technology.

83 Comments

View All Comments

hammer256 - Friday, June 17, 2011 - link

It's good to see AMD more committed to the GPGPU. I use GPGPU for neural network simulations, and currently the default choice has been Nvidia with CUDA. It would be nice to see some competition in this space.From the article it sounds like AMD knows to put a lot of emphasis on the software side of things for the developers. Hopefully they'll have a capable programming system that's as good as CUDA, maybe even better.

Finally, Given AMD's strategies in the past with medium sized GPU chips and multi-GPU for high-end, hopefully they'll put sufficient emphasis into support for easier multi-GPU programming.

Exciting times indeed.

krumme - Friday, June 17, 2011 - link

What a pleasure to read articles like this. I would gladly pay for it, more directly, so to speak.Some animations or video, especially for us less tech savvy, would be highly appriciated too.

Competition for x86 is comming ! :)

mczak - Friday, June 17, 2011 - link

I wouldn't really call it radical, Cayman already had the same theoretic 1/2 performance for FP64 adds compared to FP32. Muls/FMAs though are now 1/2 too it seems (though it might not extend to all products) whereas it was 1/4 on Cayman. Still, a factor two is not what I'd call a "radical" improvement.ahmedz_1991 - Friday, June 17, 2011 - link

I really appreciated the letters A M D. Since Athlon, one could feel that AMD is lagging behind Intel more and more, but now with them beingh the first successful CPU\GPU combination (Llano out there now ) now AMD can make their own way and API's even into OS's just like what Intel and NVidia always do. This way I'm more than sure that we'll see titles (apps and games ) with the unified AMD brand instead of those ( meant to be played ) or ( smart solution ) with some stupid stars for Core i3,5 or 7frozentundra123456 - Wednesday, December 21, 2011 - link

Well, technically Sandy Bridge is also a CPU/GPU combination, and I think I would call it successful. Granted, the graphics are not up to AMD levels, but their CPU performance is much better. And considering the debacle of Bulldozer and the architecture that was not optimized for current software, AMD will have to do a much better job of integrating their hardware with software than they have done so far.haukionkannel - Friday, June 17, 2011 - link

So maybe not big upgrades in graphic power, but improvement in computing power. Its really good for CPGPU usage. It allso makes it easier to run physic calculations in AMD GPUs.Hmm... It allso means that more silicon space is neede for same graphic power...

Interesting to see how it all sums up.

Targon - Saturday, June 18, 2011 - link

Right now, there has been a shortage of software that really pushes the graphics limits, mostly because you have the substandard Intel graphics out there that still has a significant market share. How many games out there really make you feel that a Radeon 6970 just isn't enough? The polygon count for objects(characters) in games have not been going up as much as more world detail has been going in.Now, when developers want to try aiming for 5 million polygon figures in games, THAT is where there will be a bigger demand for more graphics power, and with that level of detail, the CPU power needed to properly animate the objects needs to be higher. This is where all of this work with GPU compute comes in, to handle all the complexities of properly animating these super-high detailed objects.

I will note that The Witcher 2 is one of the first games I have seen in a long time where CPU power needs to be higher than a Phenom 2 945, and I am waiting for the AMD Bulldozer core CPUs(not APUs) to come out to see how big of an improvement it will make.

IlllI - Friday, June 17, 2011 - link

can someone explain all this to me? lol this is all beyond my understandingtipoo - Saturday, June 18, 2011 - link

They are making GPU compute much more capable and possible, in a nutshell. This will greatly increase the processing speed of many tasks on computers.khimera2000 - Sunday, June 19, 2011 - link

AMD has CPU and GPU, but there seperate. They want this to change.There combining the CPU and GPU so that they are more able to talk to each other, and do the tasks there best at. this is done by remaking the way they build video cards.

C++... great for CPU not so great for gpu... they want to change this.

Out of order operations suck on the GPU. they want to change this, so it can hammer through more work faster.

There also throwing in a bunch of tools to help tell developers where there messing up in this regard.

fusion APUs will have a nice trick... they will be able to talk to each other without needing to send information back to memory. Imagion passing letters but having to use fedex, this would be like a move to passing letters in class (no fedex) its quicker :) and your mail isint delayed.

APU will talk over PCI-E... Im wondering how that will work to 0.o