Intel Z68 Chipset & Smart Response Technology (SSD Caching) Review

by Anand Lal Shimpi on May 11, 2011 2:34 AM EST

AnandTech Storage Bench 2011

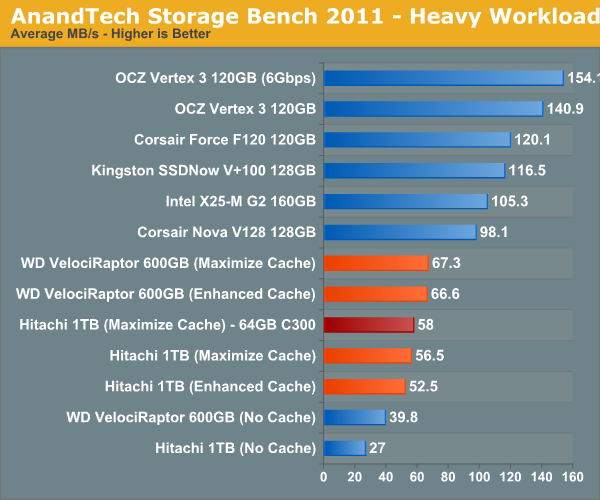

With the hand-timed real-world tests out of the way, I wanted to do a better job of summarizing the performance benefit of Intel's SRT using our Storage Bench 2011 suite. Remember that the first time any piece of data is accessed it won't be in the cache, and even afterwards not every operation will be served from the cache. Data can also be evicted from the cache as other demands compete for space. As a result, overall performance looks more like a doubling of standalone HDD performance rather than the multi-x increase we see from moving entirely to an SSD.
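To make the first-touch and eviction effects concrete, here is a toy block-cache model. It assumes a simple least-recently-used (LRU) policy purely for illustration; Intel has not published SRT's actual insertion and eviction rules, and the class name `LRUBlockCache` is hypothetical.

```python
from collections import OrderedDict


class LRUBlockCache:
    """Toy block cache with LRU eviction (an assumption, not SRT's real policy)."""

    def __init__(self, capacity_blocks: int) -> None:
        self.capacity = capacity_blocks
        self.blocks = OrderedDict()  # LBA -> None, ordered oldest to newest use
        self.hits = 0
        self.misses = 0

    def access(self, lba: int) -> bool:
        """Return True on a cache hit, False on a miss (served by the HDD)."""
        if lba in self.blocks:
            self.blocks.move_to_end(lba)  # mark as most recently used
            self.hits += 1
            return True
        # First touch (or re-touch after eviction): always a miss.
        self.misses += 1
        self.blocks[lba] = None
        if len(self.blocks) > self.capacity:
            self.blocks.popitem(last=False)  # evict the least recently used block
        return False


# A 2-block cache and a short access pattern: only one access hits,
# because blocks 2 and 1 get evicted before they are reused.
cache = LRUBlockCache(capacity_blocks=2)
trace = [1, 2, 1, 3, 2, 1]
results = [cache.access(lba) for lba in trace]
```

Even with reuse in the trace, five of six accesses miss: every block's first access misses, and competing data pushes blocks out before they come around again. That is the effect that keeps a cached HDD well short of a standalone SSD.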

Heavy 2011—Background

Last year we introduced our AnandTech Storage Bench, a suite of benchmarks that took traces of real OS/application usage and played them back in a repeatable manner. I assembled the traces myself out of frustration with the majority of what we have today in terms of SSD benchmarks.

Although the AnandTech Storage Bench tests did a good job of characterizing SSD performance, they weren't stressful enough. All of the tests performed less than 10GB of reads/writes and typically involved only 4GB of writes. That's not even enough to exceed the spare area on most SSDs. Most canned SSD benchmarks don't even come close to writing a single gigabyte of data, but that doesn't mean that simply writing 4GB is acceptable.

Originally I kept the benchmarks short enough that they wouldn't be a burden to run (~30 minutes) but long enough that they were representative of what a power user might do with their system.

Not too long ago I tweeted that I had created what I referred to as the Mother of All SSD Benchmarks (MOASB). Rather than only writing 4GB of data to the drive, this benchmark writes 106.32GB. It's the load you'd put on a drive after nearly two weeks of constant usage. And it takes a *long* time to run.

First, some details:

1) The MOASB, officially called AnandTech Storage Bench 2011—Heavy Workload, mainly focuses on the times when your I/O activity is the highest. There is a lot of downloading and application installing that happens during the course of this test. My thinking was that it's during application installs, file copies, downloading and multitasking with all of this that you can really notice performance differences between drives.

2) I tried to cover as many bases as possible with the software I incorporated into this test. There's a lot of photo editing in Photoshop, HTML editing in Dreamweaver, web browsing, game playing/level loading (StarCraft II & WoW are both a part of the test) as well as general use stuff (application installing, virus scanning). I included a large amount of email downloading, document creation and editing as well. To top it all off I even use Visual Studio 2008 to build Chromium during the test.

The test has 2,168,893 read operations and 1,783,447 write operations. The IO breakdown is as follows:

AnandTech Storage Bench 2011 (Heavy Workload) IO Breakdown

| IO Size | % of Total |
|---------|------------|
| 4KB | 28% |
| 16KB | 10% |
| 32KB | 10% |
| 64KB | 4% |
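A quick arithmetic check on the operation counts and the IO size table: reads make up roughly 55% of operations, and the four sizes listed account for 52% of all IOs (the remaining share is simply not itemized in the table). The variable names here are illustrative only.

```python
# Operation counts quoted for the Heavy 2011 workload.
READS = 2_168_893
WRITES = 1_783_447

total_ops = READS + WRITES
read_share = READS / total_ops
write_share = WRITES / total_ops

# IO sizes (KB) and their share of all operations, from the table.
listed_pct = {4: 28, 16: 10, 32: 10, 64: 4}
unlisted_pct = 100 - sum(listed_pct.values())  # IO sizes not broken out

print(f"total ops: {total_ops:,}")
print(f"reads: {read_share:.1%}, writes: {write_share:.1%}")
print(f"share of IOs at sizes not listed: {unlisted_pct}%")
```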

Only 42% of all operations are sequential; the rest range from pseudo-random to fully random (with most falling in the pseudo-random category). Average queue depth is 4.625 IOs, with 59% of operations taking place at a queue depth of 1.
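For readers wondering how figures like "42% sequential" or "average queue depth" fall out of a trace, here is a minimal sketch. The record layout (`IORecord` with a starting LBA, a length in blocks and the queue depth at issue time) is a hypothetical format, not the actual Storage Bench trace format. The sequential test is also deliberately strict: a request counts only if it starts exactly where the previous one ended, so the pseudo-random category is not modeled.

```python
from dataclasses import dataclass


@dataclass
class IORecord:
    """Hypothetical trace record; the real trace format is not described here."""
    lba: int          # starting logical block address
    blocks: int       # transfer length in blocks
    queue_depth: int  # outstanding IOs when this request was issued (incl. itself)


def characterize(trace: list[IORecord]) -> dict[str, float]:
    """Summarize sequentiality and queue depth for a non-empty trace."""
    sequential = 0
    for prev, cur in zip(trace, trace[1:]):
        # Strictly sequential: begins exactly where the previous request ended.
        if cur.lba == prev.lba + prev.blocks:
            sequential += 1
    n = len(trace)
    return {
        "sequential_fraction": sequential / n,
        "avg_queue_depth": sum(r.queue_depth for r in trace) / n,
        "qd1_fraction": sum(1 for r in trace if r.queue_depth == 1) / n,
    }


trace = [IORecord(0, 8, 1), IORecord(8, 8, 2), IORecord(100, 8, 1), IORecord(108, 16, 4)]
stats = characterize(trace)
```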

Many of you have asked for a better way to really characterize performance. Simply looking at IOPS doesn't really say much. As a result I'm going to be presenting Storage Bench 2011 data in a slightly different way. We'll have performance represented as Average MB/s, with higher numbers being better. At the same time I'll be reporting how long the SSD was busy while running this test. These disk busy graphs will show you exactly how much time was shaved off by using a faster drive vs. a slower one during the course of this test. Finally, I will also break out performance into reads, writes and combined. The reason I do this is to help balance out the fact that this test is unusually write intensive, which can often hide the benefits of a drive with good read performance.
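One plausible way to derive the read, write and combined average data rates is total bytes moved divided by the time the disk was actually busy. This is an assumption for illustration, not a description of how the Storage Bench tooling computes its numbers. Combined busy time is passed separately because reads and writes can be outstanding at the same time, so it can be smaller than the two busy times added together. The `Totals` type and decimal megabytes are also assumptions.

```python
from dataclasses import dataclass

MB = 1_000_000  # decimal megabytes (assumed unit)


@dataclass
class Totals:
    bytes_read: int
    bytes_written: int
    read_busy_s: float   # seconds with at least one read outstanding
    write_busy_s: float  # seconds with at least one write outstanding
    total_busy_s: float  # seconds with any IO outstanding (overlaps counted once)


def average_rates(t: Totals) -> dict[str, float]:
    """Average MB/s for reads, writes and combined, over busy time only."""
    return {
        "read_MBps": t.bytes_read / MB / t.read_busy_s,
        "write_MBps": t.bytes_written / MB / t.write_busy_s,
        "combined_MBps": (t.bytes_read + t.bytes_written) / MB / t.total_busy_s,
    }


# Hypothetical numbers, not measured results.
run = Totals(bytes_read=600 * MB, bytes_written=400 * MB,
             read_busy_s=10.0, write_busy_s=8.0, total_busy_s=16.0)
rates = average_rates(run)
```

Comparing two drives' `total_busy_s` for the same trace gives the "time shaved off" that the disk busy charts report.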

There's also a new light workload for 2011. This is a far more reasonable benchmark of typical everyday use. Lots of web browsing, photo editing (but with a greater focus on photo consumption), video playback as well as some application installs and gaming. This test isn't nearly as write intensive as the MOASB but it's still multiple times more write intensive than what we were running last year.

As always I don't believe that these two benchmarks alone are enough to characterize the performance of a drive, but hopefully along with the rest of our tests they will help provide a better idea.

The testbed for Storage Bench 2011 has changed as well. We're now using a Sandy Bridge platform with full 6Gbps support for these tests. All of the older tests are still run on our X58 platform.

AnandTech Storage Bench 2011—Heavy Workload

We'll start out by looking at average data rate throughout our new heavy workload test:

For this comparison I used two hard drives: 1) a Hitachi 7200RPM 1TB drive from 2008 and 2) a 600GB Western Digital VelociRaptor. The Hitachi 1TB is a good, large but aging drive, while the 600GB VR is a great example of a very high end spinning disk. With a modest 20GB cache enabled, the 3+ year-old Hitachi drive is easily 41% faster than the VelociRaptor. We're still not into dedicated SSD territory, but the improvement is significant.
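For clarity, "41% faster" is read here as a ratio of average data rates, where $\overline{R}$ denotes the average MB/s over the heavy workload (notation introduced for illustration):

$$
\text{speedup} = \left(\frac{\overline{R}_{\text{Hitachi+SRT}}}{\overline{R}_{\text{VelociRaptor}}} - 1\right) \times 100\% \approx 41\%
\quad\Longleftrightarrow\quad
\overline{R}_{\text{Hitachi+SRT}} \approx 1.41\,\overline{R}_{\text{VelociRaptor}}
$$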

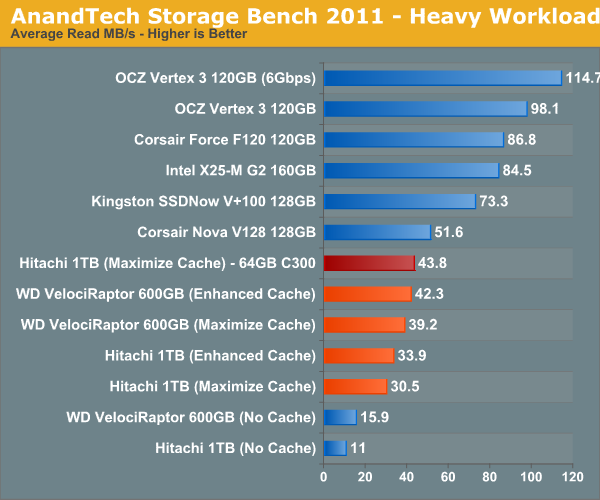

I also tried swapping the cache drive out with a Crucial RealSSD C300 (64GB). Performance went up a bit but not much. You'll notice that average read speed got the biggest boost from the C300 as a cache drive since it does have better sequential read performance. Overall I am impressed with Intel's SSD 311; I just wish the drive were a little bigger.

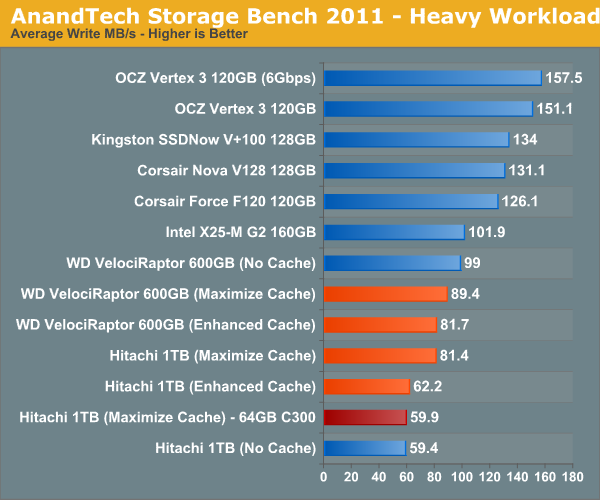

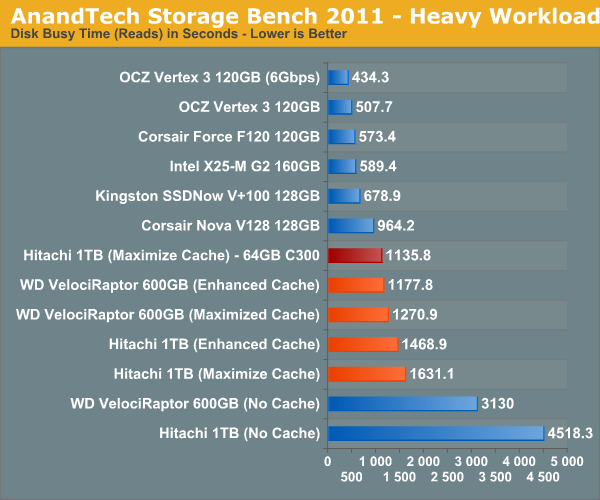

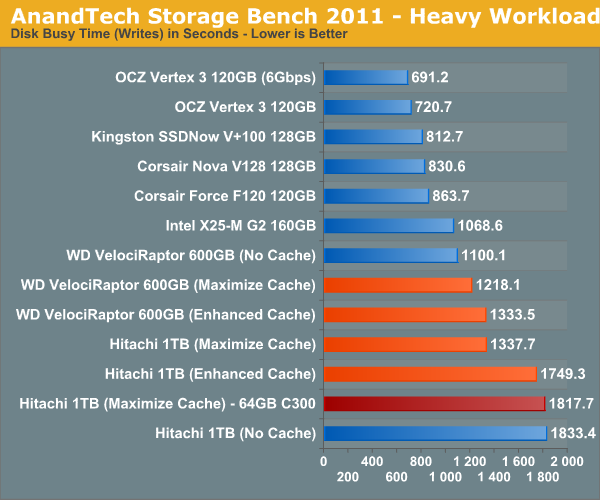

The breakdown of reads vs. writes tells us more of what's going on:

This isn't too unusual: pure write performance is actually better with the cache disabled than with it enabled. The SSD 311 has a good write speed for its capacity/channel configuration, but so does the VelociRaptor. Overall performance is still better with the cache enabled, but it's worth keeping in mind if you are pairing a particularly sluggish SSD with a hard drive that has very good sequential write performance.
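A simplified model of why a cache can slow down pure writes: if each write must land on both devices (write-through), the slower device sets the pace; if writes are absorbed by the SSD first (write-back), the SSD's write speed sets the pace. This is an idealized upper bound, not Intel's documented behavior. The function name and the speeds in the example are hypothetical.

```python
def cached_write_throughput(ssd_write_mbps: float, hdd_write_mbps: float, mode: str) -> float:
    """Idealized sustained write throughput with an SSD cache in front of an HDD.

    Assumed model:
      - "write_through": writes go to both devices, so the slower one limits throughput.
      - "write_back": writes land on the SSD first, so the SSD limits throughput
        (later flushes to the HDD and cache-full stalls are ignored).
    """
    if mode == "write_through":
        return min(ssd_write_mbps, hdd_write_mbps)
    if mode == "write_back":
        return ssd_write_mbps
    raise ValueError(f"unknown mode: {mode!r}")


# Hypothetical speeds: a modest cache SSD in front of a fast HDD.
ssd, hdd = 100.0, 140.0
wt = cached_write_throughput(ssd, hdd, "write_through")
wb = cached_write_throughput(ssd, hdd, "write_back")
```

Under either policy, pure write throughput in this model drops below the 140 MB/s the hard drive manages on its own whenever the cache SSD writes more slowly than the HDD.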

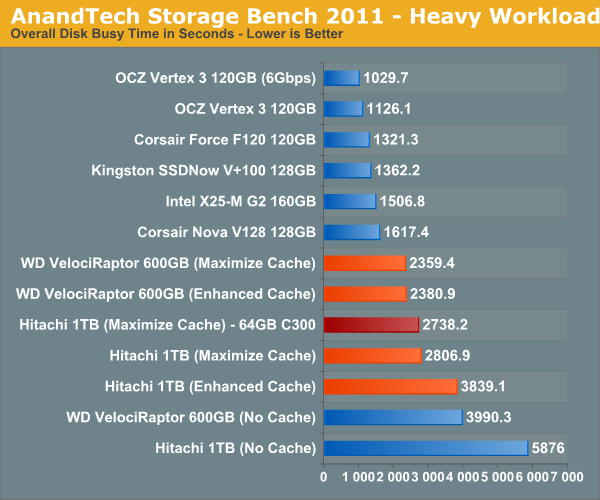

The next three charts just represent the same data, but in a different manner. Instead of looking at average data rate, we're looking at how long the disk was busy during this entire test. Note that disk busy time excludes all idle periods; it's just how long the drive was busy doing something:
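Busy time that "excludes any and all idles" can be computed by merging the time intervals during which IOs were outstanding, so overlapping requests are counted once and gaps between them are dropped. This is a minimal sketch assuming each IO is logged as a hypothetical (issue time, completion time) pair in seconds; it is not the benchmark's actual tooling.

```python
def busy_time(intervals: list[tuple[float, float]]) -> float:
    """Total time with at least one IO outstanding; overlaps counted once, idle gaps excluded."""
    total = 0.0
    cur_start = cur_end = None
    for start, end in sorted(intervals):
        if cur_end is None or start > cur_end:
            # Idle gap (or first interval): close out the previous busy run.
            if cur_end is not None:
                total += cur_end - cur_start
            cur_start, cur_end = start, end
        else:
            # Overlaps the current busy run: extend it.
            cur_end = max(cur_end, end)
    if cur_end is not None:
        total += cur_end - cur_start
    return total


# Two overlapping IOs (0-2 s combined), an idle gap, then one more IO (5-6 s).
ios = [(0.0, 1.0), (0.5, 2.0), (5.0, 6.0)]
busy = busy_time(ios)
```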

106 Comments


jordanclock - Wednesday, May 11, 2011

You can use a drive for both, but you must set up your data partition AFTER you set up the cache partition.

jorkolino - Wednesday, June 6, 2012

What do you mean by that? You partition the SSD drive, install the OS on the first partition, set up the other partition as a cache, and then format your remaining HDD?

jorkolino - Wednesday, June 6, 2012

I wonder, can you tell SRT to cache blocks only from the HDD onto the cache partition? By default SRT may decide to cache system files that already reside on a fast SSD partition...

evilspoons - Wednesday, May 11, 2011

I know it's early on for Z68, but I'm curious how other SSDs will perform in SRT mode. I ask because the 40 GB X25-V is on sale here for half its usual price...

evilspoons - Wednesday, May 11, 2011

To answer my own question, Tom's Hardware reviewed SRT with several SSDs and, to put it bluntly, the X25-V sucks. Its very low write speed of 35 MB/s actually drags the hard drive down in a few tests.

Shadowmaster625 - Wednesday, May 11, 2011

Yeah, that is a nice way of putting it. Talk about sugar coating. Here is a question for ya: was Intel being "conservative" when they tried to shove Rambus down everyone's throats? If it weren't for AMD and DDR, god knows how much memory would cost now. I still have one of those Rambus P4 systems running in the lab right now (Intel 850 chipset with dual-channel RDRAM). I did some memory benchmarking on it and was shocked to find that it was actually slower than any of the P4 DDR 266 machines we have running. (Yes, we are slow to upgrade, lol.) It runs at about DDR200-equivalent speeds. And we really paid out the wazoo for that system.

Shinobisan - Wednesday, May 11, 2011

Discrete graphics cards are limited: even though they often have three, four or more connectors these days, they can often only drive two monitors at a time (unless you use a DisplayPort connector... and monitors with DP don't really exist yet).

I have two monitors driven by my HD6950 via the digital video out connectors. So the HDMI connector on that card is "dead" until I turn one of the monitors off.

What I would like to be able to do... is have my dGPU drive my two monitors, and the iGPU drive my 1080p TV via HDMI.

Can I do that? This discussion on Virtu muddies the water some. Unclear.

Conficio - Wednesday, May 11, 2011

Well, so SRT is a good idea, but again it is artificially limited in its use. Sounds to me like the P67/H67 stunt all over again.

Why is it limited?

* For starters, it is driver-supported, and I believe that means Windows only (I could find no mention of which OSes are supported). To be fully useful it belongs in the chipset/BIOS realm.

* Next there is the artificial 64GB limit. As is obvious even from the tests, that is not really the practical limit of its usefulness. It is simply a marketing limit so as not to compete with Intel's own full SSD business. You've got to ask yourself: why not use your aging 100GB or 256GB SSD (a couple of years down the road) as an SRT drive?

* "With the Z68 SATA controllers set to RAID (SRT won't work in AHCI or IDE modes) just install Windows 7 on your hard drive like you normally would." So only RAID setups are supported? Well, you are testing with a single hard drive, so this might be a confusing statement. But if it is RAID-only, then that is certainly not what Joe Shmoe has in his desktop (let alone in his laptop).

A5 - Wednesday, May 11, 2011

If the AT Heavy Workload Storage Bench is a typical usage case for you, then you shouldn't be using SRT anyway; you'd have a RAID array of SSDs to maximize your performance.

jordanclock - Wednesday, May 11, 2011

For caching purposes, I'm sure 64GB is a very reasonable limit. The more data you cache, the more data you have to keep track of when it comes to kicking out old data.

And it isn't a RAID setup, per se. You set the motherboard to RAID, but the entire system is handled in software. So Joe Shmoe wouldn't even have to know what RAID is, though I don't see Joe Shmoe even knowing what an SSD is...