The OCZ Vertex 3 Review (120GB)

by Anand Lal Shimpi on April 6, 2011 6:32 PM EST

AnandTech Storage Bench 2011

I didn't expect to have to debut this so soon, but I've been working on updated benchmarks for 2011. Last year we introduced our AnandTech Storage Bench, a suite of benchmarks that took traces of real OS/application usage and played them back in a repeatable manner. I assembled the traces myself out of frustration with the majority of what we have today in terms of SSD benchmarks.
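The idea of trace playback can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the tool used for these tests: the `TraceOp` record format and the `replay` function are hypothetical, the target is an ordinary file rather than a raw drive, and real playback would also reproduce the recorded timing and queue depth, which this sketch ignores. What it does show is the repeatability: the same recorded operations are issued in the same order with the same data on every run.

```python
import os
import tempfile
from dataclasses import dataclass


@dataclass(frozen=True)
class TraceOp:
    """One recorded IO (hypothetical record format)."""
    kind: str    # "R" for a read, "W" for a write
    offset: int  # byte offset into the target
    length: int  # number of bytes transferred


def replay(path: str, ops: list[TraceOp]) -> tuple[int, int]:
    """Issue every recorded op against `path` in trace order.

    Writes use a fixed fill byte so every run writes identical data.
    Returns (bytes_read, bytes_written).
    """
    bytes_read = bytes_written = 0
    fd = os.open(path, os.O_RDWR | os.O_CREAT, 0o600)
    try:
        for op in ops:
            if op.kind == "W":
                bytes_written += os.pwrite(fd, b"\xa5" * op.length, op.offset)
            elif op.kind == "R":
                bytes_read += len(os.pread(fd, op.length, op.offset))
            else:
                raise ValueError(f"unknown op kind: {op.kind!r}")
        os.fsync(fd)  # make sure the writes reach the device before returning
    finally:
        os.close(fd)
    return bytes_read, bytes_written


if __name__ == "__main__":
    trace = [
        TraceOp("W", 0, 4096),
        TraceOp("W", 4096, 16384),
        TraceOp("R", 0, 4096),
        TraceOp("R", 4096, 8192),
    ]
    with tempfile.TemporaryDirectory() as tmp:
        print(replay(os.path.join(tmp, "target.bin"), trace))
```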

Although the AnandTech Storage Bench tests did a good job of characterizing SSD performance, they weren't stressful enough. All of the tests performed less than 10GB of reads/writes, and typically only 4GB of that was writes. That's not even enough to exceed the spare area on most SSDs. Most canned SSD benchmarks don't even come close to writing a single gigabyte of data, but that doesn't mean that writing just 4GB is acceptable.
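To put the spare-area point in numbers: spare area is roughly the gap between the raw NAND on the drive and the capacity exposed to the user, with NAND counted in binary gibibytes and user capacity in decimal gigabytes. The configuration below (128GiB of NAND behind 120GB of user capacity) is an illustrative assumption, not a spec quoted for any drive here, and the helper is hypothetical; it also ignores any other reserved blocks.

```python
GIB = 2**30  # NAND capacity is built in binary units
GB = 10**9   # advertised user capacity is in decimal units


def spare_area_bytes(raw_nand_gib: int, user_capacity_gb: int) -> int:
    """Bytes of NAND not exposed to the user (simplified: ignores other reserved blocks)."""
    return raw_nand_gib * GIB - user_capacity_gb * GB


# Illustrative configuration (assumption): 128GiB of NAND behind 120GB of user capacity.
spare = spare_area_bytes(128, 120)
print(f"spare area: {spare / GB:.1f} GB")           # spare area: 17.4 GB
print("4GB of writes exceeds it:", 4 * GB > spare)  # 4GB of writes exceeds it: False
```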

Originally I kept the benchmarks short enough that they wouldn't be a burden to run (~30 minutes) but long enough that they were representative of what a power user might do with their system.

Not too long ago I tweeted that I had created what I referred to as the Mother of All SSD Benchmarks (MOASB). Rather than only writing 4GB of data to the drive, this benchmark writes 106.32GB. It's the load you'd put on a drive after nearly two weeks of constant usage. And it takes a *long* time to run.

I'll be sharing the full details of the benchmark in some upcoming SSD articles, but here are some details:

1) The MOASB, officially called AnandTech Storage Bench 2011 - Heavy Workload, mainly focuses on the times when your I/O activity is the highest. There is a lot of downloading and application installing that happens during the course of this test. My thinking was that it's during application installs, file copies, downloading and multitasking with all of this that you can really notice performance differences between drives.

2) I tried to cover as many bases as possible with the software I incorporated into this test. There's a lot of photo editing in Photoshop, HTML editing in Dreamweaver, web browsing, game playing/level loading (Starcraft II & WoW are both a part of the test) as well as general use stuff (application installing, virus scanning). I included a large amount of email downloading, document creation and editing as well. To top it all off I even use Visual Studio 2008 to build Chromium during the test.

Update: As promised, some more details about our Heavy Workload for 2011.

The test has 2,168,893 read operations and 1,783,447 write operations. The IO breakdown is as follows:

AnandTech Storage Bench 2011 - Heavy Workload IO Breakdown

| IO Size | % of Total |
|---------|------------|
| 4KB | 28% |
| 16KB | 10% |
| 32KB | 10% |
| 64KB | 4% |

Only 42% of all operations are sequential; the rest range from pseudo-random to fully random (with most falling in the pseudo-random category). Average queue depth is 4.625 IOs, with 59% of operations taking place at a queue depth of 1.
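The figures above can be cross-checked with a little arithmetic. The only inputs are the operation counts and percentages given in the text and table; note that the four listed transfer sizes add up to 52% of operations, so the remaining 48% fall into sizes the table doesn't break out. The counts of sequential and QD1 operations are rounded estimates derived from the percentages, not separately reported numbers.

```python
READS = 2_168_893
WRITES = 1_783_447

# IO size breakdown as listed in the table (fractions of all operations)
SIZE_SHARE = {"4KB": 0.28, "16KB": 0.10, "32KB": 0.10, "64KB": 0.04}
SEQUENTIAL_SHARE = 0.42  # fraction of operations that are sequential
QD1_SHARE = 0.59         # fraction of operations issued at queue depth 1

total_ops = READS + WRITES
read_share = READS / total_ops
listed_share = sum(SIZE_SHARE.values())
unlisted_share = 1 - listed_share

print(f"total operations: {total_ops:,}")                          # total operations: 3,952,340
print(f"reads: {read_share:.1%}")                                  # reads: 54.9%
print(f"sizes not broken out in the table: {unlisted_share:.0%}")  # sizes not broken out in the table: 48%
print(f"~{round(total_ops * SEQUENTIAL_SHARE):,} sequential ops, "
      f"~{round(total_ops * QD1_SHARE):,} at QD1")
```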

Many of you have asked for a better way to really characterize performance. Simply looking at IOPS doesn't really say much. As a result I'm going to be presenting Storage Bench 2011 data in a slightly different way. We'll have performance represented as Average MB/s, with higher numbers being better. At the same time I'll be reporting how long the SSD was busy while running this test. These disk busy graphs will show you exactly how much time was shaved off by using a faster drive vs. a slower one during the course of this test. Finally, I will also break out performance into reads, writes and combined. The reason I do this is to help balance out the fact that this test is unusually write intensive, which can often hide the benefits of a drive with good read performance.
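The link between average data rate and disk busy time, and the reason a write-heavy trace can mask good read performance, comes down to simple arithmetic. This sketch assumes average MB/s means total bytes moved divided by the time the drive was busy, and it uses decimal megabytes; the helper names, workload sizes and drive speeds are hypothetical, chosen only to make the effect visible.

```python
MB = 10**6  # assumption: decimal megabytes


def busy_seconds(num_bytes: float, mb_per_s: float) -> float:
    """Time the drive spends busy moving num_bytes at a given average rate."""
    return num_bytes / MB / mb_per_s


def combined_mb_per_s(read_bytes, read_rate, write_bytes, write_rate):
    """Overall average rate: total bytes divided by total busy time.

    Because the busy times add, whichever phase takes longer (here, writes)
    dominates the combined figure.
    """
    total_time = busy_seconds(read_bytes, read_rate) + busy_seconds(write_bytes, write_rate)
    return (read_bytes + write_bytes) / MB / total_time


# Hypothetical write-heavy workload: 100MB read at 200MB/s, 300MB written at 100MB/s.
base = combined_mb_per_s(100 * MB, 200, 300 * MB, 100)
faster_reads = combined_mb_per_s(100 * MB, 400, 300 * MB, 100)
print(f"{base:.1f} -> {faster_reads:.1f} MB/s with 2x the read speed")  # 114.3 -> 123.1 MB/s with 2x the read speed

# Disk-busy view of the same change: seconds shaved off by the faster reads.
saved = busy_seconds(100 * MB, 200) - busy_seconds(100 * MB, 400)
print(f"busy time saved: {saved:.2f} s")  # busy time saved: 0.25 s
```

In this made-up case, doubling read speed lifts the combined figure by less than 8% because writes account for most of the busy time, which is why reads and writes are also reported separately.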

There's also a new light workload for 2011. This is a far more reasonable, typical everyday use case benchmark: lots of web browsing, photo editing (but with a greater focus on photo consumption), video playback as well as some application installs and gaming. This test isn't nearly as write intensive as the MOASB, but it's still multiple times more write intensive than what we were running last year.

As always I don't believe that these two benchmarks alone are enough to characterize the performance of a drive, but hopefully along with the rest of our tests they will help provide a better idea.

The testbed for Storage Bench 2011 has changed as well. We're now using a Sandy Bridge platform with full 6Gbps support for these tests. All of the older tests are still run on our X58 platform.

AnandTech Storage Bench 2011 - Heavy Workload

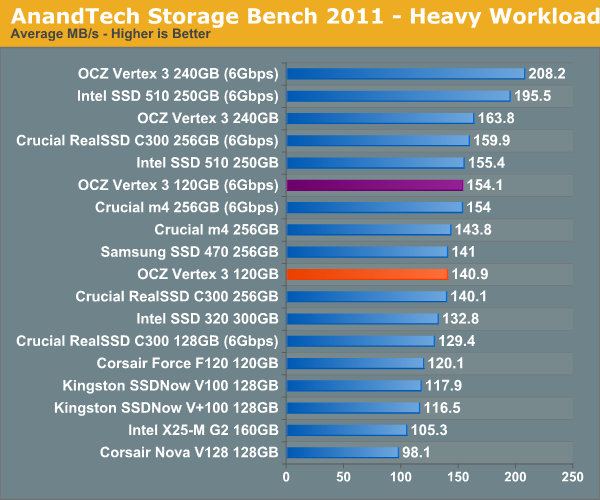

We'll start out by looking at average data rate throughout our new heavy workload test:

In our heavy test for 2011 the 120GB Vertex 3 is noticeably slower than the 240GB sample we tested a couple of months ago. Having fewer NAND die to spread work across is the primary explanation. We're still waiting on samples of the 120GB Intel SSD 320 and the Crucial m4, but it's looking like this round will be more competitive than we originally thought.
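Why fewer die hurt can be shown with a deliberately crude model: the controller writes to many NAND die in parallel, so until some other limit kicks in, throughput scales with the number of die. The per-die rate, the ceiling and the 16/32 die counts below are hypothetical numbers, and `write_mb_per_s` is an illustrative helper; the sketch shows only the shape of the relationship, not the Vertex 3's actual configuration.

```python
def write_mb_per_s(die_count: int, per_die_mb_per_s: float, ceiling_mb_per_s: float) -> float:
    """Toy model: die program in parallel, capped by some other limit (controller, link)."""
    return min(die_count * per_die_mb_per_s, ceiling_mb_per_s)


# Hypothetical figures for illustration only, not measurements of any drive.
PER_DIE = 10.0   # MB/s a single die can sustain on its own
CEILING = 500.0  # MB/s limit imposed elsewhere in the drive

for die in (16, 32):
    print(die, "die ->", write_mb_per_s(die, PER_DIE, CEILING), "MB/s")
```

Below the ceiling, halving the die count halves throughput in this model; above it, extra die stop helping.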

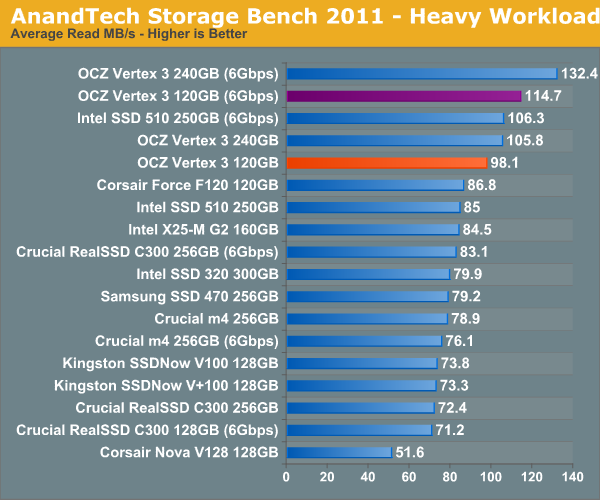

The breakdown of reads vs. writes tells us more of what's going on:

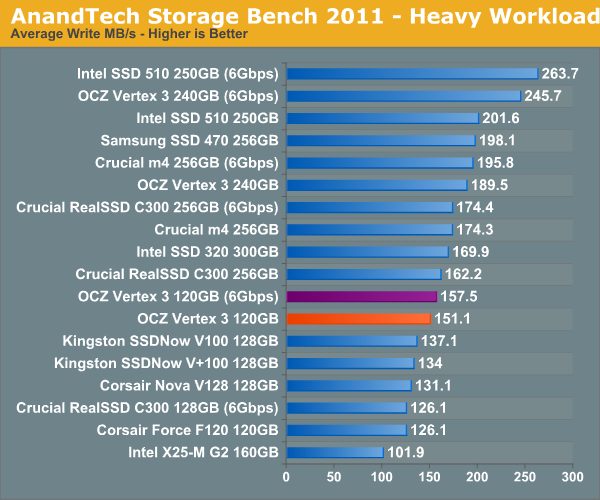

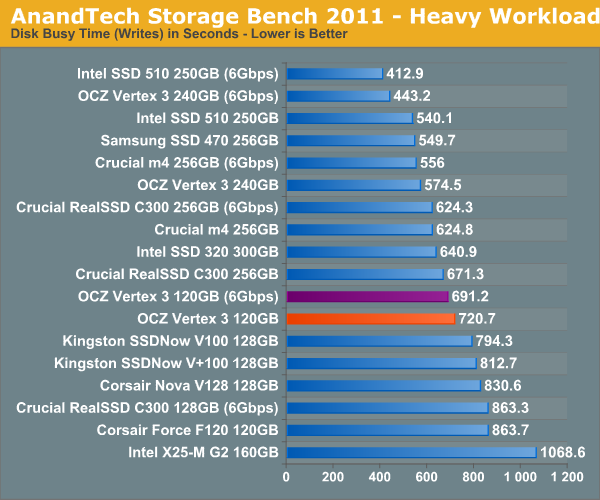

Surprisingly enough, it's not read speed that holds the 120GB Vertex 3 back; it's ultimately the lower write speed with incompressible data:

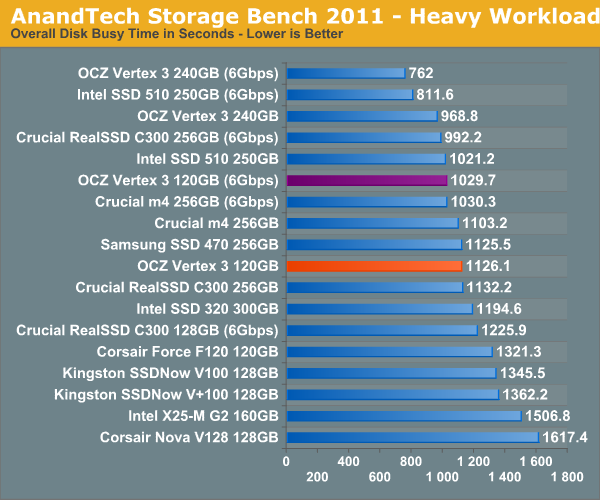

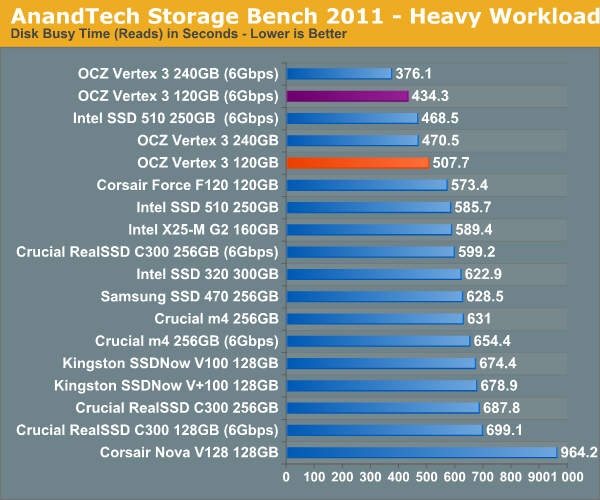
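The compressible/incompressible distinction matters because the Vertex 3's SandForce controller compresses data before writing it to NAND, so compressible data means less NAND traffic while incompressible data gets no such help. The sketch below uses Python's `zlib` purely as a stand-in; SandForce's own scheme is proprietary and is not what this code implements, and `compressed_fraction` is a hypothetical helper.

```python
import os
import zlib


def compressed_fraction(data: bytes) -> float:
    """Compressed size / original size, using zlib as a stand-in compressor."""
    return len(zlib.compress(data)) / len(data)


# Highly repetitive data vs. random bytes (which do not compress).
compressible = b"A" * 1_000_000
incompressible = os.urandom(1_000_000)

for name, data in (("compressible", compressible), ("incompressible", incompressible)):
    frac = compressed_fraction(data)
    # If a controller compresses before writing, NAND traffic scales with frac.
    print(f"{name}: ~{frac:.1%} of the host data would reach the NAND")
```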

The next three charts represent the same data, but in a different manner. Instead of looking at average data rate, we're looking at how long the disk was busy during this entire test. Note that disk busy time excludes any and all idles; this is just how long the SSD was busy doing something.
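Busy time that excludes idles can be derived from a trace if each IO's issue and completion timestamps are known: merge overlapping intervals and sum their lengths, so that IOs overlapping at queue depth above 1 are counted once and idle gaps drop out. This is one plausible approach, assumed for illustration; the article doesn't describe how its busy times are computed, and `busy_time` is a hypothetical helper.

```python
def busy_time(intervals: list[tuple[float, float]]) -> float:
    """Length of the union of (start, end) IO intervals.

    Overlapping IOs (queue depth > 1) are counted once, and gaps where
    nothing is outstanding (idle time) are excluded.
    """
    total = 0.0
    cur_start = cur_end = None
    for start, end in sorted(intervals):
        if cur_end is None or start > cur_end:
            # Idle gap before this IO: close out the previous busy stretch.
            if cur_end is not None:
                total += cur_end - cur_start
            cur_start, cur_end = start, end
        else:
            # Overlaps the current busy stretch: extend it if needed.
            cur_end = max(cur_end, end)
    if cur_end is not None:
        total += cur_end - cur_start
    return total


# Two overlapping IOs (0-2 and 1-3), an idle gap, then one more IO (5-6).
print(busy_time([(0, 2), (1, 3), (5, 6)]))  # 4.0
```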

153 Comments


pfarrell77 - Sunday, April 10, 2011

Great job Anand!

ARoyalF - Wednesday, April 6, 2011

For keeping them honest!

magreen - Wednesday, April 6, 2011

Intro page: "It's also worth nothing that 3000 cycles is at the lower end for what's industry standard..."

I can't figure out your intent here. Is it worth noting or is it worth nothing?

Anand Lal Shimpi - Wednesday, April 6, 2011

Noting, not nothing. Sorry :)

Take care,

Anand

magreen - Wednesday, April 6, 2011

Hey, it was nothing. :)

vol7ron - Wednesday, April 6, 2011

Lmao. Magreen, I like how you addressed that.

Shark321 - Thursday, April 7, 2011

On many workstations in my company we have a daily SSD usage of at least 20 GB, and this is not something really exceptional. One hibernation in the evening writes 8 GB (the amount of RAM) to the SSDs. And no, Windows does not write only the used RAM, but the whole 8 GB. One of the features of Windows 8 will be that Windows no longer writes the whole RAM content when hibernating. Windows 7 disables hibernation by default on systems with >4GB of RAM for that very reason! Several of the workstations use RAM disks, which write 2 or 3 GB images on shutdown/hibernate. Since we use VMware heavily, 1-2 GB is written constantly throughout the day as snapshots. Add some backup snapshots of Visual Studio products to that and you have another 2 GB.

Writing 20 GB a day is nothing unusual, and this happens on at least 30 workstations. Some may even go to 30-40 GB.

Only 3000 write cycles per cell is the reason why we had several complete failures of SSDs. Three of them from OCZ, one Corsair, one Intel.

Pessimism - Thursday, April 7, 2011

Yours is a usage scenario that would benefit more from running a pair of drives, one SSD and one large conventional hard drive. The conventional drive could be used for all your giant writes (slowness won't matter because you are hitting shut down and walking away), and the SSD for Windows and the applications themselves.

Shark321 - Friday, April 8, 2011

HDD slowness does matter! A lot! Loading a VMware snapshot on a Raptor HDD takes at least 15 seconds, compared to about 6-8 with an SSD. Shrinking the image once a month takes about 30 minutes on an SSD and 3 hours on an HDD!

Since time is money, HDDs are not an option, except as a backup medium.

Per Hansson - Friday, April 8, 2011

How can you be so sure it is due to the 20GB of writes per day?

If you run out of NAND cycles, the drives should not fail (which is what I take your description to mean).

When an SSD runs out of write cycles, you will have (for consumer drives), if memory serves, about one year before data retention is no longer guaranteed.

What that means is that the data will be readable, but not writeable.

This of course does not in any way mean that drives could not fail in other ways, like controller failure or the like.

Intel has a failure rate of ca. 0.6%, Corsair ca. 2% and OCZ ca. 3%.

http://www.anandtech.com/show/4202/the-intel-ssd-5...