NVIDIA’s GeForce GTX 590: Duking It Out For The Single Card King

by Ryan Smith on March 24, 2011 9:00 AM EST

OCP Refined, A Word On Marketing, & The Test

As you may recall from our GTX 580 launch article, NVIDIA added a rudimentary OverCurrent Protection (OCP) feature to the GTX 500 series. At the time of the GTX 580 launch, OCP would clamp down on Furmark and OCCT to keep those programs from performing at full speed, as the load they generated was so high that it risked damaging the card. As a matter of principle we have been disabling OCP in all of our tests up until now, as OCP was only targeting Furmark and OCCT, meaning it didn’t provide any real protection for the card in any other situation. Our advice to NVIDIA at the time was to expand it to cover the hardware at a generic level, similar to how AMD’s PowerTune operates.

We’re glad to report that NVIDIA has taken up at least some of our advice, and that OCP is taking its first step forward since then. Starting with the ForceWare 267 series drivers, NVIDIA is now using OCP at all times, meaning OCP now protects against any possible program that would generate an excessive load (as defined by NVIDIA), and not just Furmark and OCCT. At this time there’s definitely still a driver component involved as NVIDIA still throttles Furmark and OCCT right off the bat, but everything else seems to be covered by their generic detection methods.

At this point our biggest complaint is that OCP’s operation is still not transparent to the end user. If you trigger it, you have no way of knowing unless you already know how the game or application should be performing. NVIDIA tells us that at some point this will be exposed through NVIDIA’s driver API, but today is not that day. Along those lines, at least in the case of Furmark and OCCT, OCP still throttles to an excessive degree. Whereas AMD gets this right and caps anything and everything at the PowerTune limit, we still see OCP clamp these programs so heavily that our GTX 590 draws 100W more under games than it does under Furmark. Clamping down on a program to bring power consumption down to safe levels is a good idea, but clamping down beyond that just hurts the user, and we hope to see NVIDIA change this.
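Since OCP does not report when it is active, the only practical check is the one described above: compare what you measure against what you already know the application should deliver. The following is a minimal sketch of that comparison, not anything NVIDIA provides. The function name, the 10% tolerance, and the sample frame rates are all hypothetical, and a shortfall can have causes other than OCP.

```python
def suspect_throttling(baseline_fps: float, measured_fps: float,
                       tolerance: float = 0.10) -> bool:
    """Flag a run whose frame rate falls well short of a known-good baseline.

    baseline_fps: result from a trusted run at the same settings (assumed known).
    tolerance: fraction of shortfall treated as normal run-to-run variance
               (hypothetical value; tune it for your own benchmark).
    """
    if baseline_fps <= 0:
        raise ValueError("baseline_fps must be positive")
    shortfall = (baseline_fps - measured_fps) / baseline_fps
    return shortfall > tolerance


# Hypothetical numbers, for illustration only.
print(suspect_throttling(baseline_fps=60.0, measured_fps=45.0))
print(suspect_throttling(baseline_fps=60.0, measured_fps=58.0))
```

A flagged run only tells you that something held performance back; it cannot distinguish OCP from thermal throttling or a CPU bottleneck.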

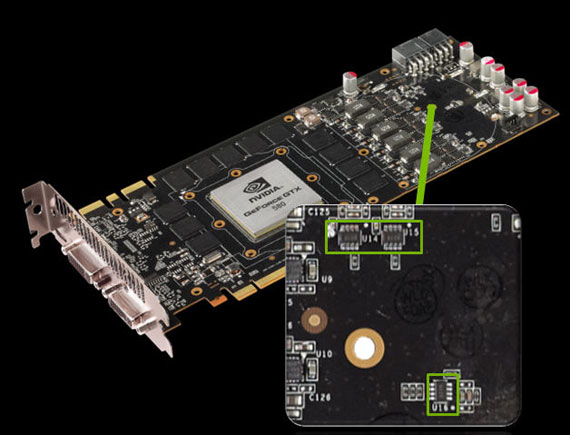

Finally, the expansion of OCP’s capabilities is going to have an impact on overclocking. As with reporting when OCP is active, NVIDIA isn’t being fully transparent here so there’s a bit of feeling around at the moment. The OCP limit for any card is roughly 25% higher than the official TDP, so in the case of the GTX 590 this would translate into a 450W limit. This limit cannot currently be changed by the end user, so overclocking—particularly overvolting—risks triggering OCP. Depending on how well its generic detection mode works, it may limit extreme overclocking on all NVIDIA cards with the OCP hardware at the moment. Even in our own overclock testing we have some results that may be compromised by OCP, so it’s definitely something that needs to be considered.
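The 25% rule of thumb is easy to sanity check. The sketch below assumes the GTX 590's official TDP of 365W (NVIDIA's published figure, which is not stated in this section) and treats the 1.25 multiplier as the approximate ratio described above rather than an exact specification. The function names are hypothetical.

```python
OCP_HEADROOM = 1.25  # "roughly 25% higher than the official TDP" (approximate)


def ocp_limit_watts(tdp_watts: float, headroom: float = OCP_HEADROOM) -> float:
    """Estimate the OCP trip point from a card's official TDP."""
    return tdp_watts * headroom


def risks_ocp(estimated_draw_watts: float, tdp_watts: float) -> bool:
    """True if an estimated power draw reaches the estimated OCP limit."""
    return estimated_draw_watts >= ocp_limit_watts(tdp_watts)


GTX_590_TDP_W = 365  # assumption: NVIDIA's published TDP for the GTX 590

limit = ocp_limit_watts(GTX_590_TDP_W)
print(f"Estimated OCP limit: {limit:.0f}W")
```

With these inputs the estimate lands in the mid-450W range, which is consistent with the roughly 450W figure above.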

Moving on, I’d like to hit upon marketing quickly. Normally the intended market and uses of most video cards are rather straightforward. For the 6990 AMD pushed raw performance and Eyefinity (particularly 5x1P), while for the GTX 590 NVIDIA is pushing raw performance, 3D Vision Surround, and PhysX (even if dedicating a GF110 to PhysX is overkill). However, every now and then something comes across that catches my eye. In this case NVIDIA is also using the GTX 590 to reiterate their support for SuperSample Anti-Aliasing in DX9, DX10, and DX11. SSAA is easily the best hidden feature of the GTX 400/500 series, and it’s one that doesn’t get much marketing attention from NVIDIA. So it’s good to see it getting some attention from NVIDIA; certainly there’s no card better suited for it than the GTX 590.

Last, but not least, we have the test. For the launch of the GTX 590 NVIDIA is providing us with ForceWare 267.71 beta, which adds support for the GTX 590; there are no other significant changes. For cooling purposes we have removed the case fan behind PEG1 on our test rig—while an 11” card is short enough to fit it, it’s counterproductive for a dual-exhaust design. Finally, in order to better compare the GTX 590 to the 6990’s OC/Uber mode, we’ve given our GTX 590 a slight overclock. Our GTX 590 OC is clocked at 750/900, a 143MHz (23%) core and 47MHz (5%) memory overclock. Meanwhile the core voltage was raised from 0.912v to 0.987v. With the poor transparency of OCP’s operation however, we are not 100% confident that we haven’t triggered OCP, so please keep that in mind when looking at the overclocked results.
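For anyone who wants to check the overclock arithmetic, here is a short sketch. The stock clocks are not quoted directly above, so they are derived by subtracting the stated deltas from the overclocked values; the helper name is hypothetical.

```python
def overclock_delta(stock: float, overclocked: float) -> tuple[float, float]:
    """Return (absolute increase, percentage increase) over the stock value."""
    delta = overclocked - stock
    return delta, 100.0 * delta / stock


OC_CORE_MHZ, OC_MEM_MHZ = 750, 900
STOCK_CORE_MHZ = OC_CORE_MHZ - 143  # derived from the stated 143MHz core delta
STOCK_MEM_MHZ = OC_MEM_MHZ - 47     # derived from the stated 47MHz memory delta

for name, stock, oc in (("core", STOCK_CORE_MHZ, OC_CORE_MHZ),
                        ("memory", STOCK_MEM_MHZ, OC_MEM_MHZ)):
    delta, pct = overclock_delta(stock, oc)
    print(f"{name}: {stock} -> {oc}MHz (+{delta}MHz, +{pct:.1f}%)")

# The same helper applies to the core voltage bump quoted above.
v_delta, v_pct = overclock_delta(0.912, 0.987)
print(f"voltage: +{v_delta:.3f}v (+{v_pct:.1f}%)")
```

The computed percentages carry one more decimal place than the whole-number figures quoted in the text, so expect them to differ slightly in the fractional part.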

Update: April 2nd, 2011: Starting with the 267.91 drivers and release 270 drivers, NVIDIA has disabled overvolting on the GTX 590 entirely. This is likely a consequence of several highly-publicized incidents where GTX 590 cards died as a result of overvolting. Although it's unusual to see a card designed to not be overclockable, clearly this is where NVIDIA intends to be.

As an editorial matter we never remove anything from a published article so our GTX 590 OC results will remain. However with these newer drivers it is simply not possible to attain them.

| CPU: | Intel Core i7-920 @ 3.33GHz |
| Motherboard: | Asus Rampage II Extreme |
| Chipset Drivers: | Intel 9.1.1.1015 (Intel) |
| Hard Disk: | OCZ Summit (120GB) |
| Memory: | Patriot Viper DDR3-1333 3x2GB (7-7-7-20) |
| Video Cards: | AMD Radeon HD 6990, AMD Radeon HD 6970, AMD Radeon HD 6950 2GB, AMD Radeon HD 6870, AMD Radeon HD 6850, AMD Radeon HD 5970, AMD Radeon HD 5870, AMD Radeon HD 5850, AMD Radeon HD 5770, AMD Radeon HD 4870X2, AMD Radeon HD 4870, EVGA GeForce GTX 590 Classified, NVIDIA GeForce GTX 580, NVIDIA GeForce GTX 570, NVIDIA GeForce GTX 560 Ti, NVIDIA GeForce GTX 480, NVIDIA GeForce GTX 470, NVIDIA GeForce GTX 460 1GB, NVIDIA GeForce GTX 460 768MB, NVIDIA GeForce GTS 450, NVIDIA GeForce GTX 295, NVIDIA GeForce GTX 285, NVIDIA GeForce GTX 260 Core 216 |
| Video Drivers: | NVIDIA ForceWare 262.99, NVIDIA ForceWare 266.56 Beta, NVIDIA ForceWare 266.58, NVIDIA ForceWare 267.71, AMD Catalyst 10.10e, AMD Catalyst 11.1a Hotfix, AMD Catalyst 11.4 Preview |
| OS: | Windows 7 Ultimate 64-bit |

123 Comments

BreadFan - Thursday, March 24, 2011

Would this card be better for the P67 platform vs GTX 580's in sli considering you won't get full 16x going the sli route?

Nfarce - Thursday, March 24, 2011

The 16x vs. 8x issue has been beaten to death for years. Long story short, it's not a measurable difference at or below 1920x1080 resolutions and only barely a difference above that.

BreadFan - Thursday, March 24, 2011

Thanks man. Already have one evga 580. Only reason I was considering was for the step up program evga offers (590 for around $200). I have till first part of June to think about it but am leaning towards adding another 580 once the price comes down in a year or two.

wellortech - Thursday, March 24, 2011

You won't get 16x going the CF route either.....although I agree that it doesn't really matter.

softdrinkviking - Sunday, March 27, 2011

i hope your screen name is from the budgie song!

softdrinkviking - Sunday, March 27, 2011

that comment was to breadfan.

7Enigma - Thursday, March 24, 2011

Comon guys, I would have thought you could have at least had the 6990 and the 590 data points for Crysis 2. Perhaps a short video as well with the new game? :)

Ryan Smith - Thursday, March 24, 2011

It's unlikely we'll be using Crysis 2 in its current state, but that could always change.

However if we were to use it, it won't be until the next benchmark refresh.

YouGotServed - Friday, March 25, 2011

Crysis: Warhead will always be the benchmark. Crysis 2 isn't nearly as demanding. It's been dumbed down for consoles, in case you haven't heard. There are no advanced settings available to you through the normal game menu. You have to tweak the CFG file to do so.

I thought like you, originally. I was thinking: Crysis 2 is gonna set a new bar for performance. But in reality, it's not even close to the original in terms of detail level.

mmsmsy - Thursday, March 24, 2011

I know I can be annoying, but I checked it myself and the built in benchmark in Civ V really favours nVidia cards. In the real world scenario the situation is almost upside down. I got a reply from one of the reviewers last time that it provides quite accurate scores, but I thought that just for the heck of it you'd try and see for yourself that it doesn't at all. I know it's just one game and that benchmarking is a slow work, but in order to keep up the good work you're doing you should at least use the advice and confront it with the reality to stay objective and provide the most accurate scores that really mean sth. I don't mean to undermine you, because I find your articles to be mostly accurate and you're doing a great job. Just use the advice to make this site even better. A lot of writing for a comment, but this time maybe you will see what I'm trying to do.