NVIDIA’s GeForce GTX 590: Duking It Out For The Single Card King

by Ryan Smith on March 24, 2011 9:00 AM EST

Crysis: Warhead

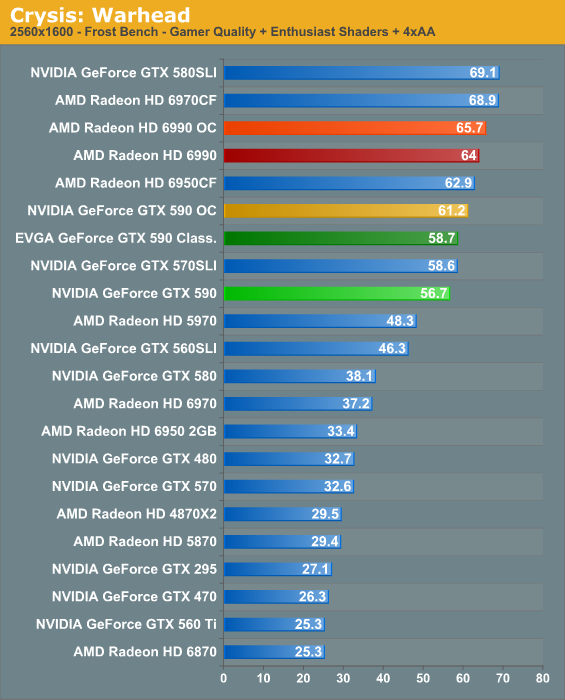

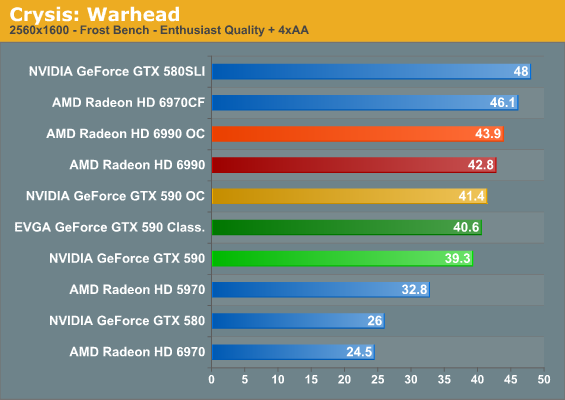

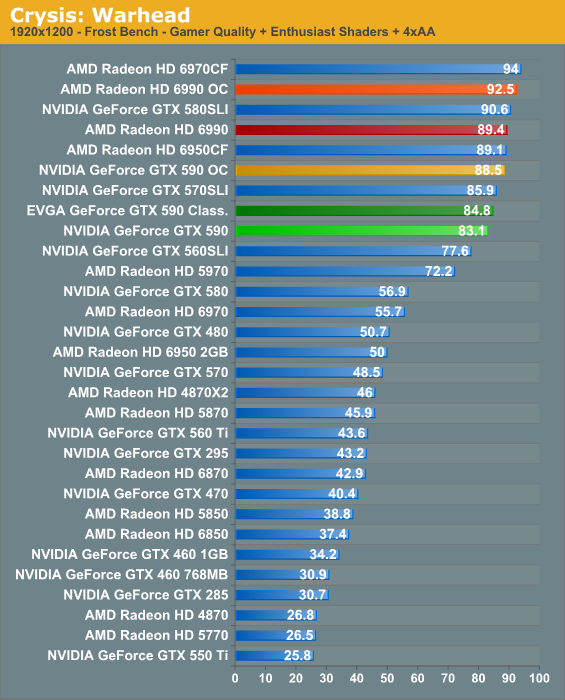

Kicking things off as always is Crysis: Warhead, still one of the toughest games in our benchmark suite. Even three years since the release of the original Crysis, “but can it run Crysis?” is still an important question, and for three years the answer was “no.” Dual-GPU halo cards can now play it at Enthusiast settings at high resolutions, but for everything else max settings are still beyond the grasp of a single card.

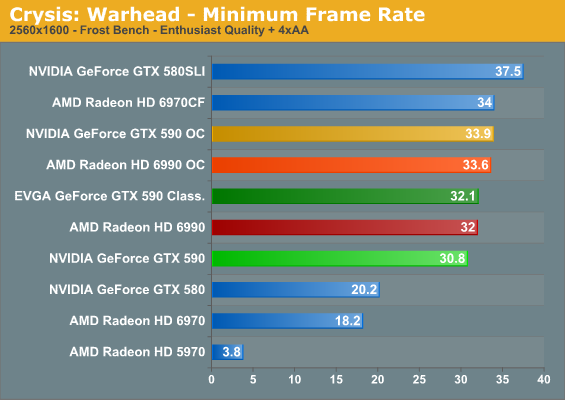

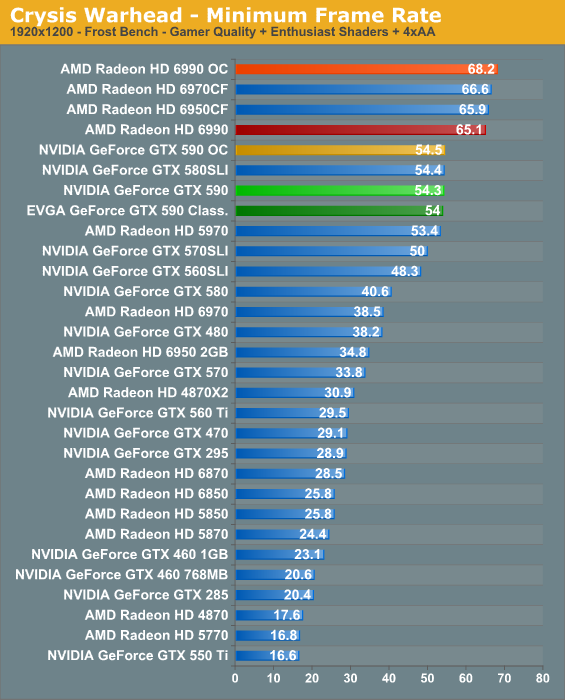

Crysis is often a bellwether for overall performance; if that’s the case here, then NVIDIA and the GTX 590 are not off to a good start at the all-important resolution of 2560x1600.

AMD gets some really good CrossFire scaling under Crysis, and as a result the 6990 has no problem taking the lead here. At a roughly 10% disadvantage it won’t make or break the game for NVIDIA, but given the similar prices they don’t want to lose too many games.

Meanwhile amongst NVIDIA’s own stable of cards, the stock GTX 590 ends up slightly underperforming the GTX 570 SLI. As we discussed in our look at theoretical numbers, whether the GTX 590 comes out ahead or behind depends on what the game in question taxes the most. Crysis is normally shader and memory bandwidth heavy, which is why the GTX 590, with its memory bandwidth advantage, never falls too far behind. EVGA’s mild overclock is enough to close the gap, however, delivering performance identical to the GTX 570 SLI. A further overclock can improve performance some more, but surprisingly not by all that much.

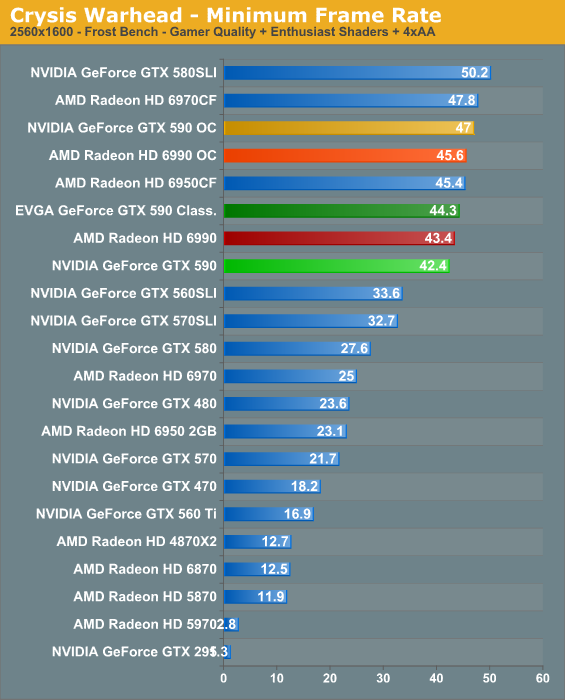

The minimum framerate ends up looking better for NVIDIA. The GTX 590 is still behind the 6990, but now it’s only by about 5%, while the EVGA GTX 590 squeezes past the 6990 by all of 0.1 frames per second.

123 Comments


Ruger22C - Thursday, March 24, 2011 - link

Don't spew nonsense to the people reading this! Write a disclaimer if you're going to do that.

The Finale of Seem - Saturday, March 26, 2011 - link

Um...no. For one, HUD elements tend to shrink in physical size as resolution rises, meaning that games with a lot of HUD (WoW comes to mind) benefit by letting you see more of what's going on, which means that 720p is pretty friggin' awful. For two, 1920x1080 has become the standard for most monitors over 21" or so, and a lot of gamers get 1920x1080 displays, especially if they're also watching 1080p video or doing significant multitasking. Non-native resolutions look like ass, and as such, 1600x1050 is right out as you won't want to play at anything but 1920x1080.

Now, you can say that there isn't much point going above that, and right now, that may be so as cost is pretty prohibitive, but that may not always be the case.

rav55 - Thursday, March 31, 2011 - link

What good is it if you can't buy it? Nvidia cherry picked the GPUs to work on this card and they could only release a little over 1000 units. It is now sold out in the US and available in limited amounts in Europe.

Basically the GTX 590 is vapourware!!! What a joke!

wellortech - Thursday, March 24, 2011 - link

Reviews seem to still agree that 6950CF or 570 SLI are just as powerful, and much less expensive. Guess I'll be keeping my pair of 6950s while continuing to enjoy 30" 2550x1600 heaven.

DanNeely - Thursday, March 24, 2011 - link

Yeah, these only really make sense if you're going for a 4GPU setup in an ATX box, or have a larger mATX case and want 2 GPUs and some other card.

jfelano - Thursday, March 24, 2011 - link

You go boy. I'll continue to have a life.

The_Comfy_Chair - Thursday, March 24, 2011 - link

Get over yourself. YOU are trolling on a forum about a video card on a tech-geek site on the internet. You have no more of a life than wellortech or anyone else here - self included.

ShumOSU - Thursday, March 24, 2011 - link

You're 16,000 pixels short. :-)

egandt - Thursday, March 24, 2011 - link

Would have been better to see what these cards did with 3x 1920x1200 displays, as obviously they are overkill for any single display.

Dudler - Thursday, March 24, 2011 - link

Couldn't agree more, but since we know from the 1.5 GB 580 that the nVidia cards do poorly in higher resolutions, AnandTech is probably never going to test any such setup. Expect 12x10 instead, as nVidia tends to do better in low resolutions than AMD. 19x12 is already irrelevant with these cards.