AMD's Radeon HD 6990: The New Single Card King

by Ryan Smith on March 8, 2011 12:01 AM EST

Power, Temperature, and Noise: How Loud Can One Card Get?

Last but not least as always is our look at the power consumption, temperatures, and acoustics of the Radeon HD 6990. This is an area where AMD has traditionally had an advantage, as their small die strategy leads to less power hungry and cooler products compared to their direct NVIDIA counterparts. Dual-GPU cards like the 6990 tend to amplify the benefits of lower power consumption, but heat and noise are always a wildcard.

AMD continues to use a single reference voltage for their cards, so the voltages we see here represent what we’ll see for all reference 6900 series cards. In this case voltage also plays a big part, as PowerTune’s TDP profile is calibrated around a specific voltage.

Radeon HD 6900 Series Voltage

| 6900 Series Idle | 6970 Load | 6990 Load |
|---|---|---|
| 0.9V | 1.175V | 1.12V |

The 6990 idles at the same 0.9V as the rest of the 6900 series. Under load at default clocks it runs at 1.12V thanks to AMD’s chip binning, which is a big part of why the card uses as little power as it does for its performance. Overclock it to 880MHz, however, and the core voltage rises to 1.175V, the same as the 6970. Power consumption and heat generation shoot up accordingly, exacerbated by the fact that PowerTune is not in use here.
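The voltage bump matters more than the clock bump because, to a first approximation, CMOS dynamic power scales with V²·f. The Python sketch below applies that textbook model to the numbers above. It assumes the 6990’s stock core clock of 830MHz, which this section does not state, and it ignores leakage (which also rises with voltage and temperature), memory, and VRM losses, so treat the result as a rough estimate that likely understates the real increase rather than a board-power prediction.

```python
# First-order CMOS dynamic power model: P is proportional to C * V^2 * f.
# Assumption: the 6990's stock core clock is 830MHz (not stated in this section);
# the 880MHz overclock and both load voltages come from the text above.
STOCK_MHZ, OC_MHZ = 830, 880
STOCK_V, OC_V = 1.12, 1.175

def dynamic_power_ratio(f_new, f_old, v_new, v_old):
    """Relative change in dynamic power when clock and voltage both change."""
    return (f_new / f_old) * (v_new / v_old) ** 2

ratio = dynamic_power_ratio(OC_MHZ, STOCK_MHZ, OC_V, STOCK_V)
print(f"{ratio:.3f}x")  # 1.167x
```

That works out to roughly 17% more dynamic core power for a 6% clock increase, with the larger share coming from the squared voltage term.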

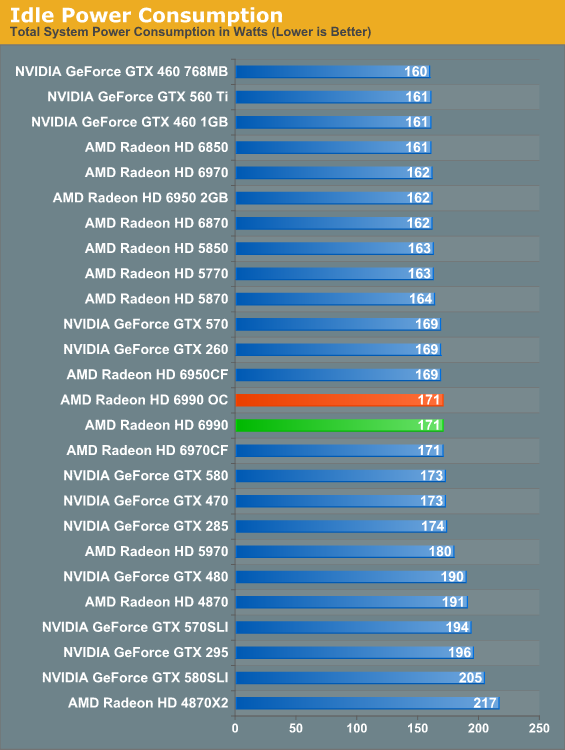

The 6990’s idle power is consistent with the rest of the 6900 series. At 171W it’s at parity with the 6970CF, while the 6990’s lower idle TDP shows up as a 9W advantage over the 5970.

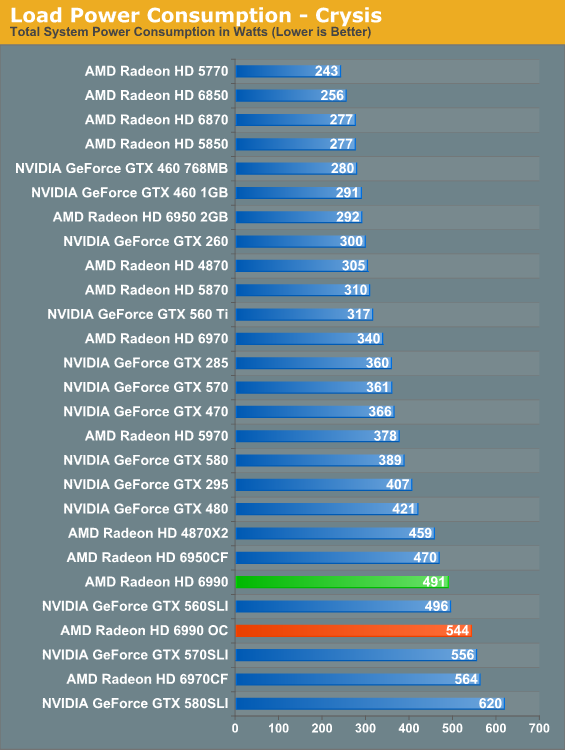

With the 6990, load power under Crysis gives us our first indication that TDP alone can’t be used to predict total power consumption. With a 375W TDP the 6990 should consume less power than a pair of 200W 6950s in CrossFire, but in practice the 6950CF setup consumes 21W less. Part of this comes down to the greater CPU load the 6990 can create by allowing for higher framerates, but that doesn’t fully explain the disparity. Against the 5970 the 6990 also draws much more than TDP alone would indicate: the 113W gap exceeds the 75W TDP difference. The 6990 is clearly a more power hungry card than the 5970.
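The TDP-versus-measurement comparison above is simple subtraction, and a short Python sketch makes the size of the discrepancy explicit. It uses only the figures quoted in that paragraph; the helper name `surplus_over_tdp` is ours. Since these are whole-system wall measurements, the surplus also includes the extra CPU load mentioned above, not just the card.

```python
# Crysis wall-power figures from the paragraph above (whole system, not card only).
TDP_6990_W = 375
TDP_6950_W = 200  # per card; the 6950CF setup uses two

def surplus_over_tdp(measured_gap_w, tdp_gap_w):
    """Watts by which a measured power gap exceeds what the TDP gap predicts."""
    return measured_gap_w - tdp_gap_w

# 6990 minus 6950CF: TDP predicts -25W, but the 6990 system draws 21W more.
tdp_gap_6950cf = TDP_6990_W - 2 * TDP_6950_W
surplus_6950cf = surplus_over_tdp(21, tdp_gap_6950cf)

# 6990 minus 5970: a 113W measured gap against a 75W TDP difference.
surplus_5970 = surplus_over_tdp(113, 75)

print(tdp_gap_6950cf, surplus_6950cf, surplus_5970)  # -25 46 38
```

Against the 6950CF the swing is 46W, larger than the 38W excess over the 5970, even though the 6950CF comparison is the one where TDP predicts the 6990 should come out ahead.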

Meanwhile overclocking sends power consumption further up, this time to 544W. That’s still lower than the 6970CF, though the 6970CF is also the faster setup. Do keep in mind though that at this point we’re dissipating 400W+ off of a single card, which will have repercussions.

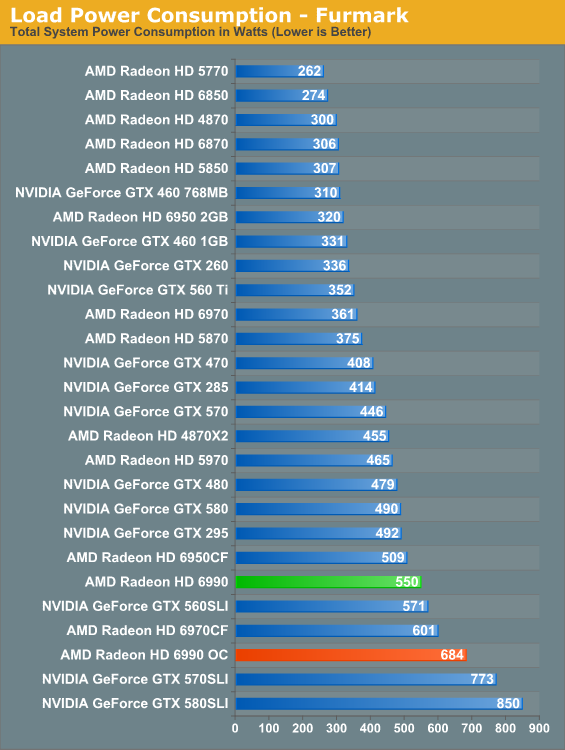

Under FurMark, PowerTune limits become the defining factor for the 6900 series. Even with PT triggering on all three 6900 cards, the 375W 6990 draws more than the 2x200W 6950CF, this time by 41W, with the 6970CF in turn drawing 51W more than the 6990. All things considered the 6990’s power consumption is in line with its performance relative to the other 6900 series cards.

As for our 6990OC, overclocked and without PowerTune we see what the 6990 is really capable of in terms of power consumption and heat. 684W is well above the 6970CF (which has PT intact) and approaches GTX 570/580 SLI. We don’t have the ability to measure the power consumption of the video card alone, but based on our data we’re confident the 6990 is pulling at least 500W, and this is one card with one fan dissipating all of that heat. Front and rear case ventilation starts looking really good at this point.

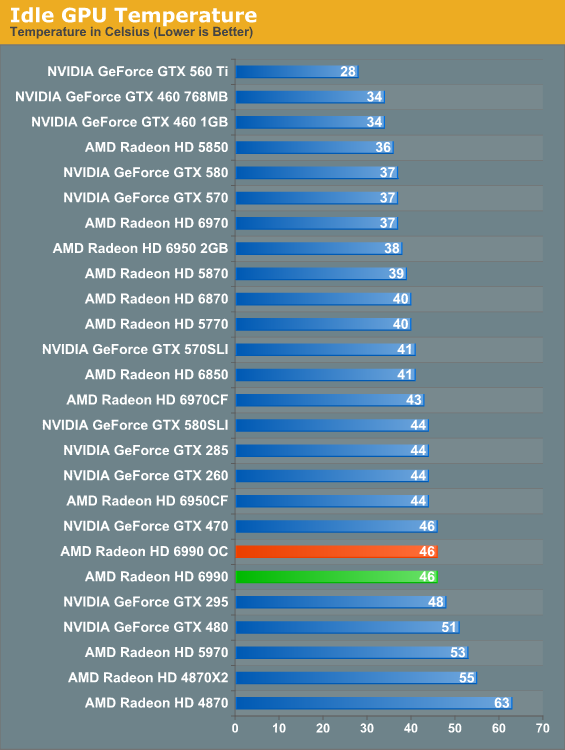

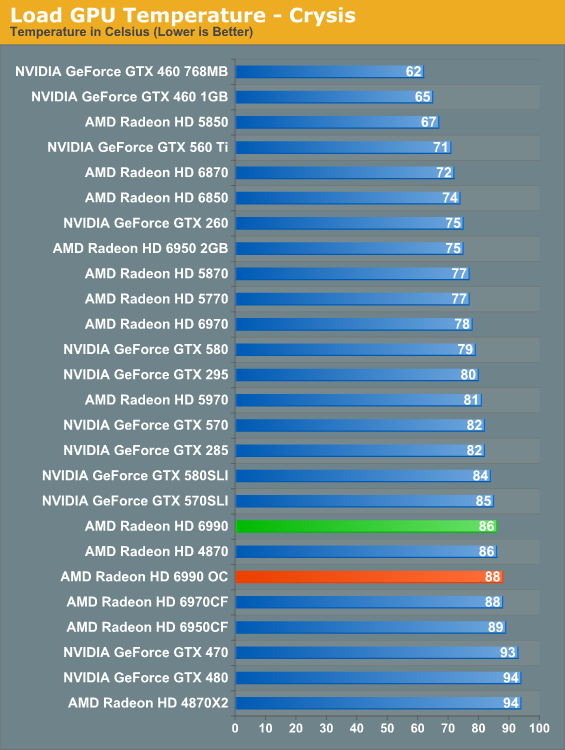

Along with the 6900 series’ improved idle TDP, AMD’s dual-exhaust cooler makes its mark on idle temperatures versus the 5970. At 46C the 6990 is warmer than our average card but not excessively so, and it’s 7C cooler than the 5970, which has to cool its second GPU with already heated air. A pair of separate 6900 cards in CF is still going to beat the dual-exhaust cooler, though.

When the 5970 came out it was warmer than the 5870CF; the 6990 reverses this trend. At stock clocks the 6990 is a small but measurable 2C cooler than the 6970CF, which as a reminder we run in a “bad” CF configuration by having the cards directly next to each other. There is a noise tradeoff to discuss, but as far as temperatures are concerned these are perfectly reasonable. Even the 6990OC is only 2C warmer.

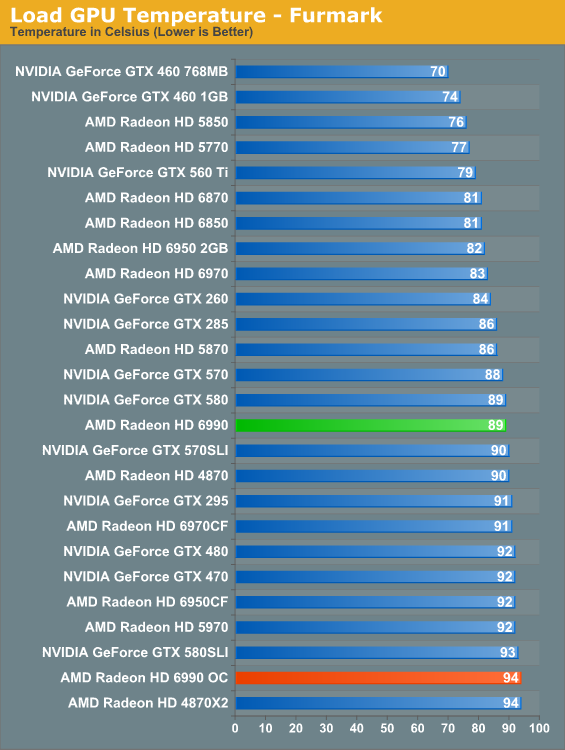

At stock clocks FurMark does not significantly change the picture. If anything it slightly improves things, as PowerTune helps keep the 6990 in the middle of the pack. Overclock, however, and the story changes. Without PowerTune to keep power consumption in check, that roughly 680W of system power draw catches up to us in the form of 94C core temperatures. It’s only a 5C difference, but it’s as hot as we’re willing to let the 6990 get. Further overclocking on our test bed is out of the question.

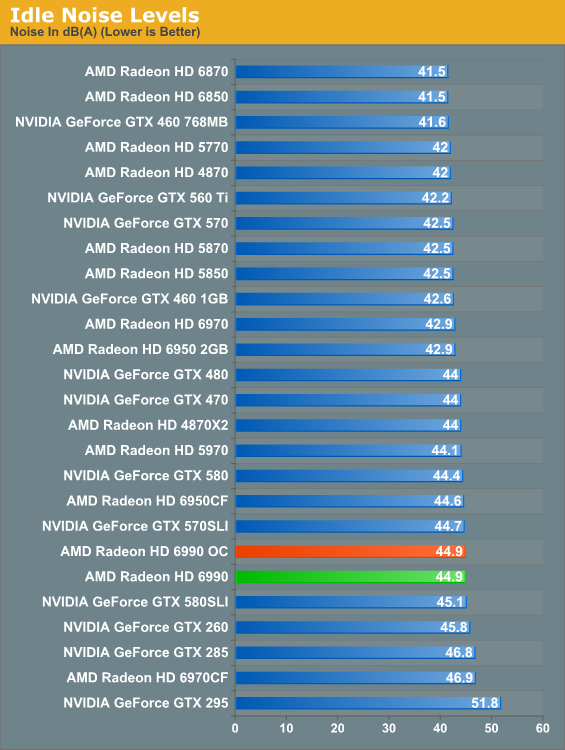

Finally there’s the matter of noise to contend with. At idle nothing is particularly surprising; the 6990 is an iota louder than the average card, presumably due to the dual-exhaust cooler.

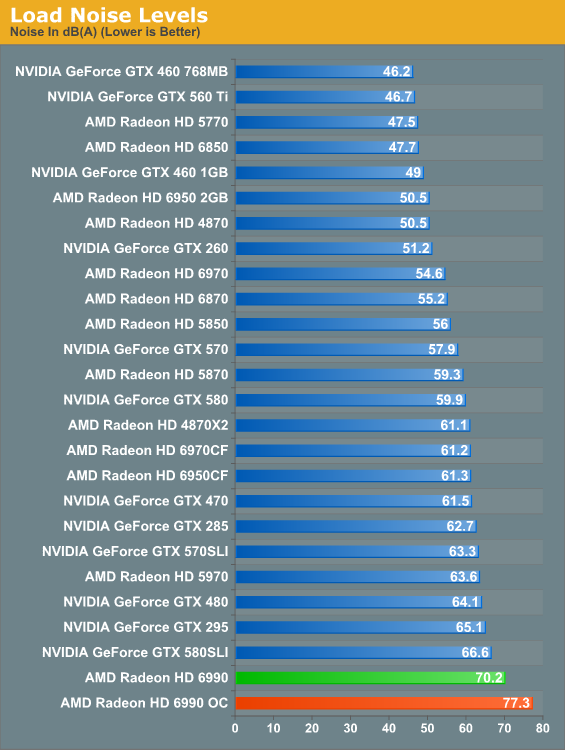

And here’s where it all catches up to us. The Radeon HD 5970 was a loud card, the GTX 580 SLI was even louder, but nothing tops the 6990. The laws of physics are a cruel master, and at some point all the smart engineering in the world won’t completely compensate for the fact that you need a lot of airflow to dissipate 375W of heat. There’s no way around the fact that the 6990 is an extremely loud card; and while games aren’t as bad as FurMark here, it’s still noticeably louder than everything else on a relative basis. Ideally the 6990 requires good airflow and good noise isolation, but the former makes the latter difficult to achieve. Water cooled 6990s will be worth their weight in gold.
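The "lot of airflow" follows from a steady-state energy balance: the heat a cooler carries away equals air density × specific heat × volumetric flow × the air’s temperature rise. This Python sketch solves that relation for airflow. The air properties are standard room-temperature approximations, and the 20K exhaust temperature rise is purely an illustrative assumption, not a measured value for the 6990’s cooler.

```python
# Steady-state energy balance for forced-air cooling: P = rho * cp * Q * dT.
AIR_DENSITY = 1.2        # kg/m^3, air near room temperature (approximate)
AIR_CP = 1005.0          # J/(kg*K), specific heat of air at constant pressure
M3S_TO_CFM = 2118.88     # 1 m^3/s expressed in cubic feet per minute

def required_airflow_cfm(heat_w, delta_t_k):
    """Airflow needed to remove heat_w watts with a delta_t_k air temperature rise."""
    q_m3s = heat_w / (AIR_DENSITY * AIR_CP * delta_t_k)
    return q_m3s * M3S_TO_CFM

# Assumption: a 20K rise between intake and exhaust air.
print(round(required_airflow_cfm(375, 20), 1))  # 32.9
# Halving the allowed rise doubles the airflow required.
print(round(required_airflow_cfm(375, 10), 1))  # 65.9
```

Because the relation is linear in heat, an overclocked card dumping 500W under FurMark needs a third more airflow than 375W at the same temperature rise, and since fan noise climbs steeply with fan speed, that extra airflow is paid for in decibels.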

130 Comments


james.jwb - Tuesday, March 8, 2011

I doubt it. That would be 80 dBA at ear level compared to whatever Ryan used. At ear level it's going to be a lot lower than 80. Still, that doesn't take away the fact that this card is insane...

Belard - Tuesday, March 8, 2011

The card needs 3 slots to keep cool and such. They should have made a 2.5-slot card, but with a bit of a twist. Channel the AIR from the front GPU chamber into a U-duct, then split it into a Y that goes around the fan (which can still be made bigger). The ducts then exhaust out the back in a "3rd slot". Or a duct runs along the top of the card (out of spec a bit) to allow the fan more air space. It would add about $5 for more plastic.

Rather than blowing HOT air INTO the case (which would then recycle BACK into the card)!

OR - blowing HOT air out the front and onto your foot or arm.

Noise is a deal killer for many people nowadays.

strikeback03 - Tuesday, March 8, 2011

That ductwork would substantially reduce the airflow; a sharp turn like that would be a large bottleneck.

burner1980 - Tuesday, March 8, 2011

I'm always wondering why reviews always neglect this topic: can this card run 3 monitors at 1920x1080 at 120Hz? 120Hz monitors/projectors offer not only 3D but foremost smoother motion and less screen tearing. Since this technique is available and getting more and more popular, I would really like to see it tested. Can anybody enlighten me? (I know that dual-link is necessary for every display and that AMD had problems with 120Hz + Eyefinity.) Did they improve?

silverblue - Tuesday, March 8, 2011

...with two slightly downclocked 6950s. Alternatively, a 6890, albeit with the old VLIW5 shader setup. As we've seen, the 5970 can win a test or two thanks to this even with a substantial clock speed disparity. The 6990 is stunning but I couldn't ever imagine the effort required to set up a machine capable of adequately running one... and don't get me started on two.

Figaro56 - Tuesday, March 8, 2011

I'd love to see Jessica Alba's beaver, but that ain't going to happen either.

qwertymac93 - Tuesday, March 8, 2011

AUSUM SWITCH http://img202.imageshack.us/img202/8717/ausum.png

KaelynTheDove - Tuesday, March 8, 2011

Could someone please confirm if this card supports 30-bit colour? Previously, only AMD's professional cards supported 30-bit colour, with the exception of the 5970. I will buy either the 6990 or Nvidia's Quadro based on this single feature.

(Because somebody will inevitably say that I don't need or want 30-bit colour, I have a completely hardware-calibrated workflow with a 30" display with 110% AdobeRGB, 30-bit IPS panel and the required DisplayPort cables. Using 24-bit colour with my 5870 CF I suffer from _very_ nasty posterisation when working with high-gamut photographs. Yes, my cameras have a colour space far above sRGB. Yes, my printers have it too.)

Gainward - Tuesday, March 8, 2011

Just a heads up for anyone buying the card and wanting to remove the stock cooler: there is a small screw on the back that is covered by two stickers (it's under the two stickers that look like a barcode). If you remove those stickers, you will notice a void logo underneath... I just wanted to point it out to you all. It didn't bother us too much here seeing as ours is a sample, but I know to some dropping £550ish UK is quite a bit of cash, and if all you are doing is having an inquisitive look it seems a shame to void your warranty :-S

mmsmsy - Tuesday, March 8, 2011

I'd like to know how you benchmark those cards in Civ V. I suppose it's the in-game benchmark, isn't it? I read some tests on another site that used their own run, recorded with FRAPS after some time spent in the game, and I'm wondering whether the in-game test is really that different a scenario. According to that source, in a real-world situation nVidia cards show no performance improvement whatsoever over AMD's offerings. If you could investigate that matter it would be great.