AMD's Radeon HD 6990: The New Single Card King

by Ryan Smith on March 8, 2011 12:01 AM EST. Posted in: AMD, Radeon HD 6990, GPUs

Power, Temperature, and Noise: How Loud Can One Card Get?

Last but not least as always is our look at the power consumption, temperatures, and acoustics of the Radeon HD 6990 series. This is an area where AMD has traditionally had an advantage, as their small die strategy leads to less power hungry and cooler products compared to their direct NVIDIA counterparts. Dual-GPU cards like the 6990 tend to increase the benefits of lower power consumption, but heat and noise are always a wildcard.

AMD continues to use a single reference voltage for their cards, so the voltages we see here represent what we’ll see for all reference 6900 series cards. In this case voltage also plays a big part, as PowerTune’s TDP profile is calibrated around a specific voltage.

Radeon HD 6900 Series Voltage

| 6900 Series Idle | 6970 Load | 6990 Load |
| --- | --- | --- |
| 0.9v | 1.175v | 1.12v |

The 6990 idles at the same 0.9v as the rest of the 6900 series. At load under default clocks it runs at 1.12v thanks to AMD’s chip binning, which is a big part of why the card uses as little power as it does for its performance. Overclock it to 880MHz, however, and the core voltage goes to 1.175v, the same as the 6970. Power consumption and heat generation shoot up accordingly, exacerbated by the fact that PowerTune is not in use here.

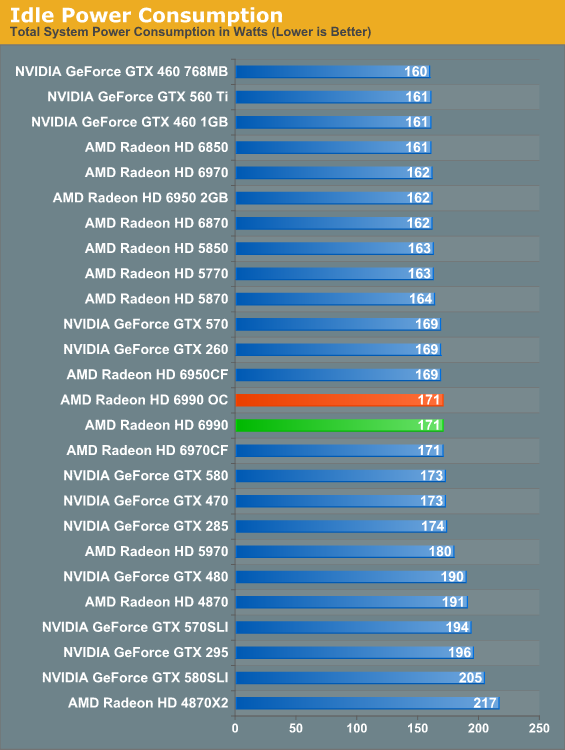

The 6990’s idle power is consistent with the rest of the 6900 series. At 171W it’s at parity with the 6970CF, while the 6990’s lower idle TDP shows up as a 9W advantage over the 5970.

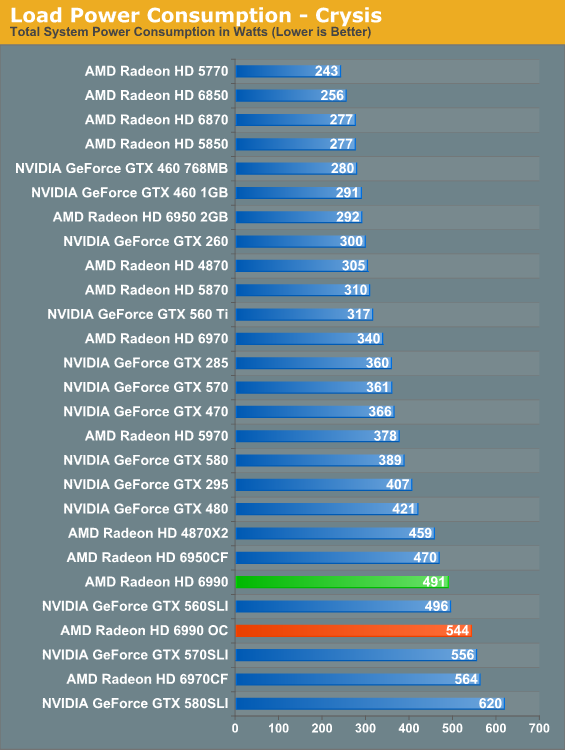

With the 6990, load power under Crysis gives us our first indication that TDP alone can’t be used to predict total power consumption. With a 375W TDP the 6990 should consume less power than 2x200W 6950CF, but in practice the 6950CF setup consumes 21W less. Part of this comes down to the greater CPU load the 6990 can create by allowing for higher framerates, but this doesn’t completely explain the disparity. Compared to the 5970 the 6990 is also much higher than the TDP alone would indicate; the gap of 113W exceeds the 75W TDP difference. Clearly the 6990 truly is a more power hungry card than the 5970.
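To make the mismatch concrete, the short Python sketch below compares the gap that TDP alone would predict against the gap measured at the wall. It uses only the figures quoted above; the variable and function names are illustrative, and because the measurements are total system power, the result is a rough indication rather than a card-level figure.

```python
# TDP figures quoted in the text (watts).
TDP_6990 = 375
TDP_6950CF = 2 * 200          # two 6950 cards in CrossFire
TDP_GAP_VS_5970 = 75          # 6990 TDP minus 5970 TDP, as stated in the text

# Measured gaps under Crysis: 6990 system power minus the other setup (watts).
MEASURED_GAP_VS_6950CF = 21   # the 6950CF setup draws 21W less than the 6990
MEASURED_GAP_VS_5970 = 113


def tdp_prediction_error(tdp_gap, measured_gap):
    """Return how many watts the measured gap exceeds the TDP-predicted gap."""
    return measured_gap - tdp_gap


# TDP alone predicts the 6990 should draw 25W LESS than the 6950CF.
tdp_gap_vs_6950cf = TDP_6990 - TDP_6950CF

err_6950cf = tdp_prediction_error(tdp_gap_vs_6950cf, MEASURED_GAP_VS_6950CF)
err_5970 = tdp_prediction_error(TDP_GAP_VS_5970, MEASURED_GAP_VS_5970)

print(f"vs 6950CF: TDP predicts {tdp_gap_vs_6950cf:+d}W, "
      f"measured {MEASURED_GAP_VS_6950CF:+d}W, off by {err_6950cf}W")
print(f"vs 5970:   TDP predicts {TDP_GAP_VS_5970:+d}W, "
      f"measured {MEASURED_GAP_VS_5970:+d}W, off by {err_5970}W")
```

In both comparisons the 6990 lands roughly 40W above where its TDP says it should, and part of that is the extra CPU load mentioned above.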

Meanwhile overclocking does send the power consumption further up, this time to 544W. This is better than the 6970CF at the cost of some performance. Do keep in mind though that at this point we’re dissipating 400W+ off of a single card, which will have repercussions.

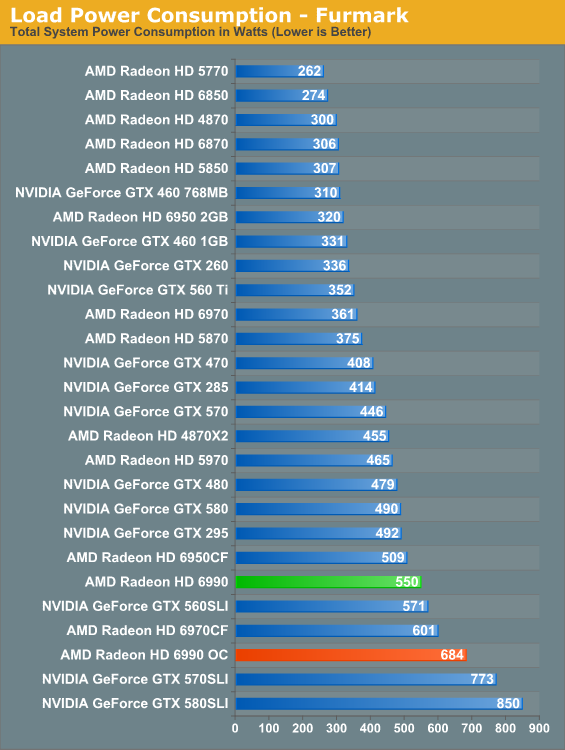

Under FurMark PowerTune limits become the defining factor for the 6900 series. Even with PT triggering on all three 6900 cards, the numbers have the 375W 6990 drawing more than the 2x200W 6950CF, this time by 41W, with the 6970CF in turn drawing 51W more. All things considered the 6990’s power consumption is in line with its performance relative to the other 6900 series cards.

As for our 6990OC, overclocked and without PowerTune we see what the 6990 is really capable of in terms of power consumption and heat. 684W is well above the 6970CF (which has PT intact), and is approaching the 570/580 in SLI. We don’t have the ability to measure the power consumption of solely the video card, but based on our data we’re confident the 6990 is pulling at least 500W – and this is one card with one fan dissipating all of that heat. Front and rear case ventilation starts looking really good at this point.

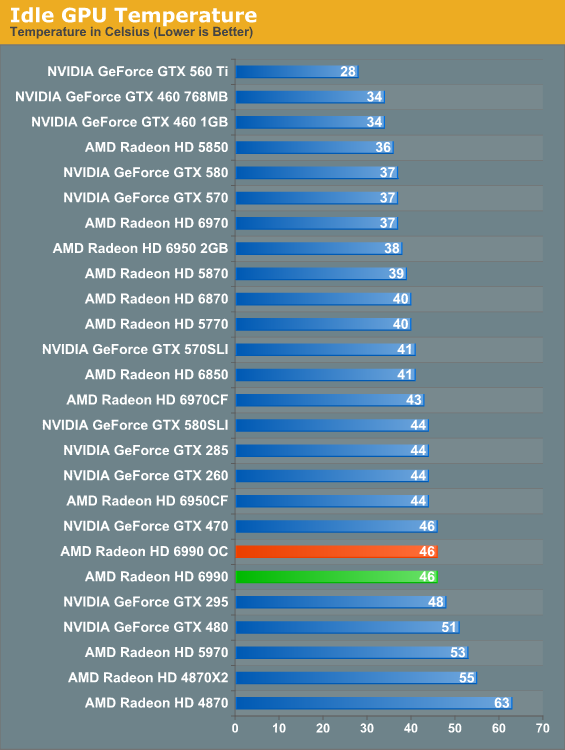

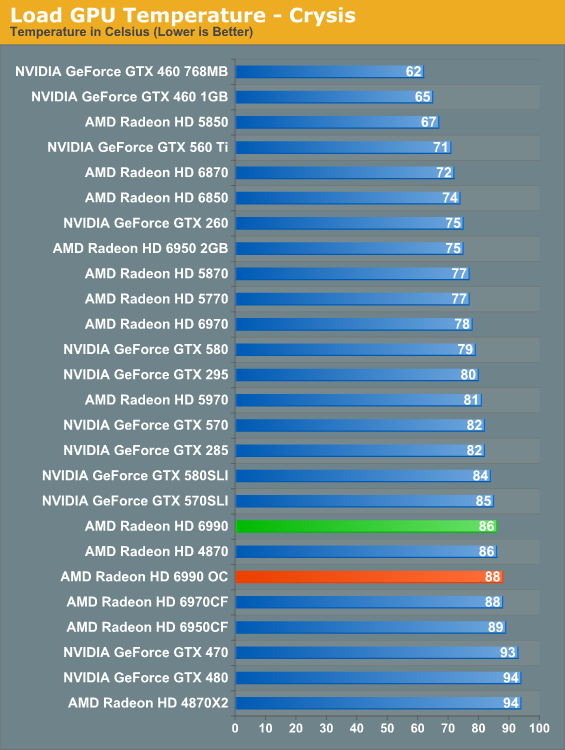

Along with the 6900 series’ improved idle TDP, AMD’s dual-exhaust cooler makes its mark on idle temperatures versus the 5970. At 46C the 6990 is warmer than our average card but not excessively so, and in the meantime it’s 7C cooler than the 5970 which has to contend with GPU2 being cooled with already heated air. A pair of 6900 cards in CF though is still going to beat the dual-exhaust cooler.

When the 5970 came out it was warmer than the 5870CF; the 6990 reverses this trend. At stock clocks the 6990 is a small but measurable 2C cooler than the 6970CF, which as a reminder we run in a “bad” CF configuration by having the cards directly next to each other. There is a noise tradeoff to discuss, but as far as temperatures are concerned these are perfectly reasonable. Even the 6990OC is only 2C warmer.

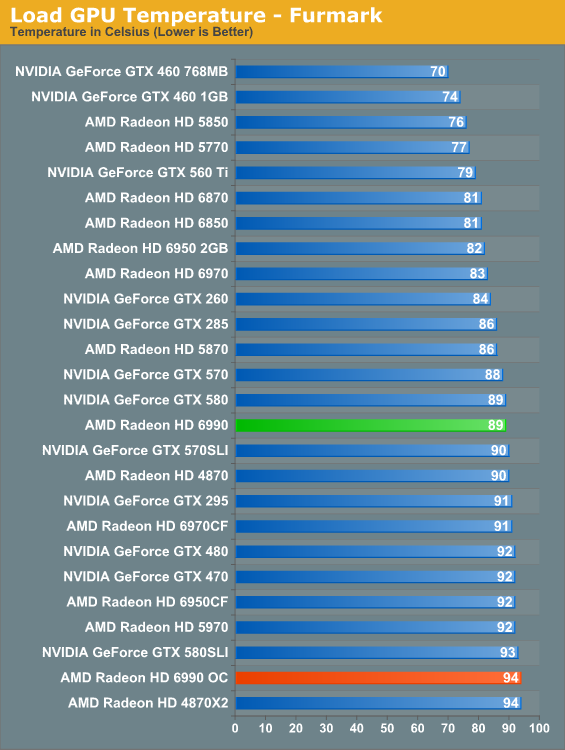

At stock clocks FurMark does not significantly change the picture. If anything it slightly improves things as PowerTune helps to keep the 6990 in the middle of the pack. Overclock however and the story changes. Without PowerTune to keep power consumption in check that 681W power consumption catches up to us in the form of 94C core temperatures. It’s only a 5C difference, but it’s as hot as we’re willing to let the 6990 get. Further overclocking on our test bed is out of the question.

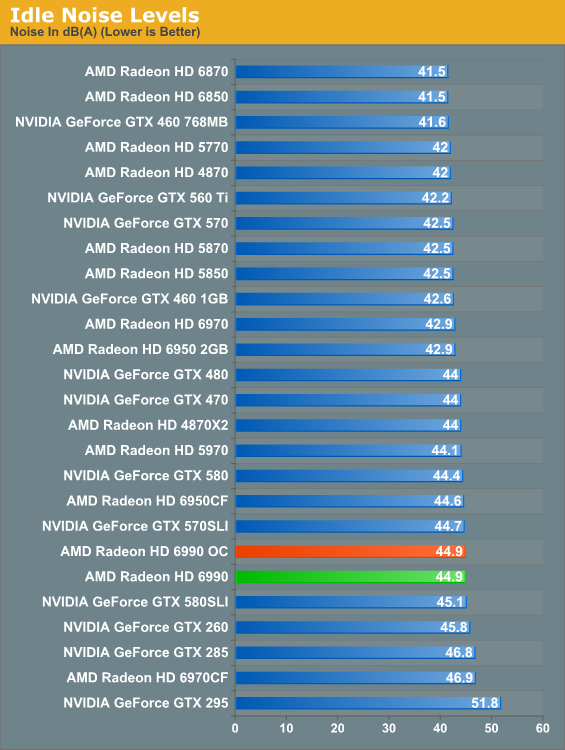

Finally there’s the matter of noise to contend with. At idle nothing is particularly surprising; the 6990 is an iota louder than the average card, presumably due to the dual-exhaust cooler.

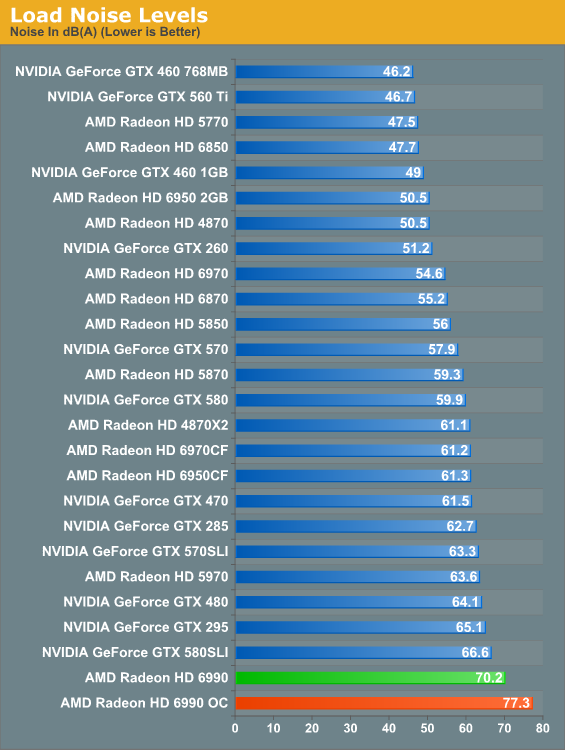

And here’s where it all catches up to us. The Radeon HD 5970 was a loud card, the GTX 580 SLI was even louder, but nothing tops the 6990. The laws of physics are a cruel master, and at some point all the smart engineering in the world won’t completely compensate for the fact that you need a lot of airflow to dissipate 375W of heat. There’s no way around the fact that the 6990 is an extremely loud card; and while games aren’t as bad as FurMark here, it’s still noticeably louder than everything else on a relative basis. Ideally the 6990 requires good airflow and good noise isolation, but the former makes the latter difficult to achieve. Water cooled 6990s will be worth their weight in gold.

130 Comments


Spazweasel - Tuesday, March 8, 2011

I've always viewed single-card dual-GPU cards as more of a packaging stunt than a product. They invariably are clocked a little lower than the single-GPU cards they're based upon, and short of a liquid cooling system are extremely noisy (unavoidable when you have twice as much heat that has to be dissipated by the same sized cooler as the single-GPU card). They also tend to not be a bargain price-wise; compare a dual-GPU card versus two of the single-GPU cards with the same GPU.

Personally, I would much rather have discrete GPUs and be able to cool them without the noise. I'll spend a little more for a full-sized case and a motherboard with the necessary layout (two slots between PCI-16x slots) rather than deal with the compromises of the extra-dense packaging. If someone else needs quad SLI or quad Crossfire, well, fine... to each their own. But if dual GPUs is the goal, I truly don't see any advantage of a dual-GPU card over dual single-GPU cards, and plenty of disadvantages.

Like I said... more of a stunt than a product. Cool that it exists, but less useful than advertised except for extremely narrow niches.

mino - Tuesday, March 8, 2011

"Even -2- years since the release of the original Crysis, “but can it run Crysis?” is still an important question, and for -3.5- years the answer was “no.”"

Umm, you sure bout both those time values?

:)

Nice review, BTW.

MrSpadge - Tuesday, March 8, 2011

"With a 375W TDP the 6990 should consume less power than 2x200W 6950CF, but in practice the 6950CF setup consumes 21W less. Part of this comes down to the greater CPU load the 6990 can create by allowing for higher framerates, but this doesn’t completely explain the disparity."

If it hasn't been mentioned before: guys, this is simple. The TDP for the HD6950 is just for the PowerTune limit. The "power draw under gaming" is specified at ~150 W, which is just what you'll find during gaming tests.

Furthermore Cayman is run at lower voltage (1.10 V) and clocks and with fewer units on HD6950, so it's only natural for 2 of these to consume less power than a HD6990. Summing it up one would expect 1.10^2/1.12^2 * 800/830 * 22/24 = 85.2% the power consumption of a Cayman on HD6990.

MrS
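MrSpadge's estimate can be reproduced with a few lines of Python. This is a minimal sketch that assumes the same simplified model the comment uses (power proportional to voltage squared, clock speed, and active unit count); it ignores leakage, memory, and board power, and the function name is illustrative.

```python
def relative_power(v, v_ref, clock_mhz, clock_ref_mhz, units, units_ref):
    """Estimate power relative to a reference chip, assuming P ~ V^2 * f * units.

    This is a first-order approximation only; static (leakage) power and
    non-GPU board components are not modeled.
    """
    return (v / v_ref) ** 2 * (clock_mhz / clock_ref_mhz) * (units / units_ref)


# Figures from the comment: one Cayman on the HD6950 vs. one on the HD6990.
ratio = relative_power(
    v=1.10, v_ref=1.12,                 # core voltage in volts
    clock_mhz=800, clock_ref_mhz=830,   # core clock in MHz
    units=22, units_ref=24,             # active unit counts as given in the comment
)

print(f"Estimated HD6950 GPU power relative to an HD6990 GPU: {ratio:.1%}")
```

Run as written, this gives roughly 85%, matching the figure in the comment.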

mino - Tuesday, March 8, 2011

You shall not hit them so hard next time. :)

Numbers tend to hurt one's ego badly if properly thrown.

geok1ng - Tuesday, March 8, 2011

The article points out that the 6990 runs much closer to 6950CF than 6970CF.

I assume that the author is talking about 2GB 6950, that can be shader unlocked, in a process much safer than flashing the card with a 6970 BIOS.

It would be interesting to see CF numbers for unlocked 6950s.

As it stands the 6990 is not a great product: it requires an expensive PSU and a big case full of fans, at a price point higher than similar CF setups.

Considering that there are ZERO enthusiast mobos that won't accept CF, the 6990 becomes a very hard sell.

Even more troubling is the lack of a DL-DVI adapter in the bundle, scaring away 30" owners, precisely the group of buyers most interested in this video card.

Why should a 30" owner step away from a 580m or SLI 580s, if the 6990 needs the same expensive PSU, the same BIG case full of fans, and a DL-DVI adapter that costs more than the price gap to an SLI mobo?

Thanny - Tuesday, March 8, 2011

This card looks very much like the XFX 4GB 5970 card. The GPU position and cooling setup are identical.

I'd be very interested to see a performance comparison with that card, which operates at 5870 clock speeds and has the same amount of graphics memory (which is not "frame buffer", for those who keep misusing that term).

JumpingJack - Wednesday, March 9, 2011

:) Yep, I wished they would actually make it right.

The frame buffer is the amount of memory to store the pixel and color depth info for a renderable frame of data, whereas graphics memory (or VRAM) is the total memory available for the card which consequently holds the frame buffer, command buffer, textures, etc etc. The frame buffer is just a small portion of the VRAM set aside and is the output target for the GPU. The frame buffer size is the same for every modern video card on the planet at fixed (same) resolution. I.e. a 1920x1200 res with 32 bit color depth has a frame buffer of ~9.2 MB (1920x1200x32 / 8); if double or triple buffered, multiply by 2 or 3.

Most every techno site misapplies the term "frame buffer", Anandtech, PCPer (big abuser), Techreport ... most everyone.
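The frame buffer arithmetic in JumpingJack's comment is easy to check with a short Python sketch. The ~9.2 MB figure corresponds to a 1920x1200 frame at 32 bits per pixel; the function name and the choice of decimal megabytes are assumptions made for illustration.

```python
def frame_buffer_bytes(width, height, bits_per_pixel=32, buffers=1):
    """Return the memory needed for `buffers` full frames, in bytes."""
    bytes_per_frame = width * height * bits_per_pixel // 8
    return bytes_per_frame * buffers


single = frame_buffer_bytes(1920, 1200)             # one frame
double = frame_buffer_bytes(1920, 1200, buffers=2)  # double buffered
triple = frame_buffer_bytes(1920, 1200, buffers=3)  # triple buffered

for label, size in (("single", single), ("double", double), ("triple", triple)):
    # Decimal megabytes (1 MB = 1,000,000 bytes), matching the ~9.2 MB figure.
    print(f"{label}: {size / 1_000_000:.1f} MB")
```

Even triple buffered, the frame buffer comes to under 30 MB, a small fraction of the multi-gigabyte graphics memory on these cards.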

Hrel - Wednesday, March 9, 2011

Anyone wanting to play at resolutions above 1080p should just buy two GTX560's for 500 bucks. Why waste the extra 200? There's no such thing as future proofing at these levels.

wellortech - Wednesday, March 9, 2011

If the 560s are as noisy as the 570, I think I would rather try a pair of 6950s.

HangFire - Wednesday, March 9, 2011

And you can't even bring yourself to mention Linux (non) support?

You do realize there are high end Linux workstation users, with CAD, custom software, and OpenCL development projects that need this information?