The Intel SSD 510 Review

by Anand Lal Shimpi on March 2, 2011 1:23 AM EST

Posted in IT Computing, Storage, SSDs, Intel, Intel SSD 510

Random Read/Write Speed

The four corners of SSD performance are random read, random write, sequential read and sequential write speed. Random accesses are generally small in size, while sequential accesses tend to be larger, which is why we use the same four Iometer tests in all of our reviews.

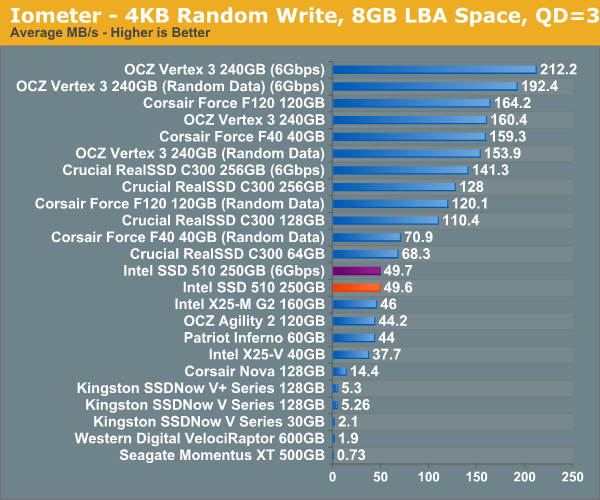

Our first test writes 4KB in a completely random pattern over an 8GB space of the drive to simulate the sort of random access that you'd see on an OS drive (even this is more stressful than a normal desktop user would see). I perform three concurrent IOs and run the test for 3 minutes. The reported results are the average MB/s over the entire run. We use both standard pseudo-randomly generated data for each write and fully random data to show you both the maximum and minimum performance offered by SandForce-based drives in these tests. The average performance of SF drives will likely be somewhere in between the two values for each drive you see in the graphs. For an understanding of why this matters, read our original SandForce article.
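To make the access pattern concrete, here is a minimal Python sketch of the workload described above. It is not Iometer and performs no disk I/O; it only generates 4KB-aligned offsets within an 8GB span, builds the two kinds of write buffers, and computes an average MB/s figure. The function names, the repeating-pattern buffer used as a stand-in for easily compressed data, and the 1MB = 10^6 bytes convention are all assumptions made for illustration.

```python
import os
import random

BLOCK_SIZE = 4 * 1024        # 4KB transfers, as in the test described above
SPAN_BYTES = 8 * 1024**3     # 8GB region of the drive


def random_offsets(count, span=SPAN_BYTES, block=BLOCK_SIZE, seed=None):
    """Return `count` block-aligned byte offsets chosen uniformly within `span`."""
    rng = random.Random(seed)
    blocks_in_span = span // block
    return [rng.randrange(blocks_in_span) * block for _ in range(count)]


def make_payload(incompressible, block=BLOCK_SIZE):
    """Build one write buffer.

    incompressible=True  -> fully random bytes (worst case for a compressing controller)
    incompressible=False -> short repeating pattern (hypothetical stand-in for
                            easily compressed data; not Iometer's actual generator)
    """
    if incompressible:
        return os.urandom(block)
    pattern = b"\x00\x01\x02\x03\x04\x05\x06\x07"
    return pattern * (block // len(pattern))


def average_mb_per_s(bytes_written, seconds):
    """Average throughput over the whole run, assuming 1MB = 10**6 bytes."""
    return bytes_written / seconds / 1_000_000
```

A real run would issue these writes with three requests in flight for 180 seconds and pass the total bytes written to `average_mb_per_s`.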

It's a bit unfortunate for Intel that we happen to start our performance analysis with a 4KB random write test in Iometer. At 49.7MB/s, the 510's random write performance is only marginally better than the X25-M G2's. The RealSSD C300 is faster, not to mention the SF-1200 based Corsair Force F120 and the SF-2200 based OCZ Vertex 3.

Although not depicted here, max write latency is significantly reduced compared to the X25-M G2. While the G2 would occasionally hit a ~900ms write operation, the 510 keeps the worst case latency to below 400ms. The Vertex 3 by comparison has a max write latency of anywhere from 60ms - 350ms depending on the type of data being written.

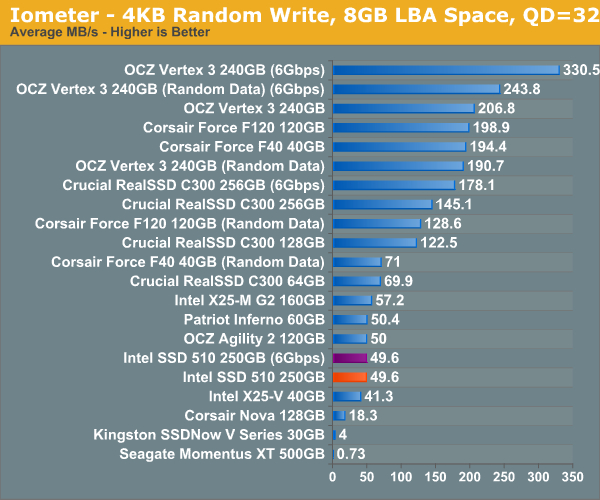

Many of you have asked for random write performance at higher queue depths. What I have below is our 4KB random write test performed at a queue depth of 32 instead of 3. While the vast majority of desktop usage models experience queue depths of 0 - 5, higher depths are possible in heavy I/O (and multi-user) workloads:

While the X25-M G2 scaled with queue depth in our random write test, the 510 does not. It looks like 50MB/s is the absolute highest performance we'll see for constrained 4KB random writes. Note that these numbers are for 4KB aligned transfers; performance actually drops to ~40MB/s if you perform sector aligned transfers (e.g. performance under Windows XP).
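One way to see why alignment matters is to count how many 4KB units a single 4KB transfer touches. The sketch below assumes 512-byte sectors and a 4KB mapping granularity inside the drive; the 510's actual internal page size is not stated here, so treat both constants as assumptions. The sector 63 example reflects the partition start offset commonly associated with Windows XP.

```python
SECTOR = 512   # assumed logical sector size in bytes
PAGE = 4096    # assumed internal mapping granularity in bytes


def pages_touched(offset, length=4096, page=PAGE):
    """Number of `page`-sized units overlapped by a transfer starting at `offset`."""
    first = offset // page
    last = (offset + length - 1) // page
    return last - first + 1


# A 4KB-aligned transfer stays within a single 4KB unit.
aligned = pages_touched(0)

# A transfer that starts on a sector boundary that is not a multiple of 4KB
# (here sector 63) straddles two 4KB units.
misaligned = pages_touched(63 * SECTOR)
```

Under these assumptions the misaligned write forces the drive to update two units instead of one, which is consistent with the drop from ~50MB/s to ~40MB/s noted above, although the exact penalty depends on the controller.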

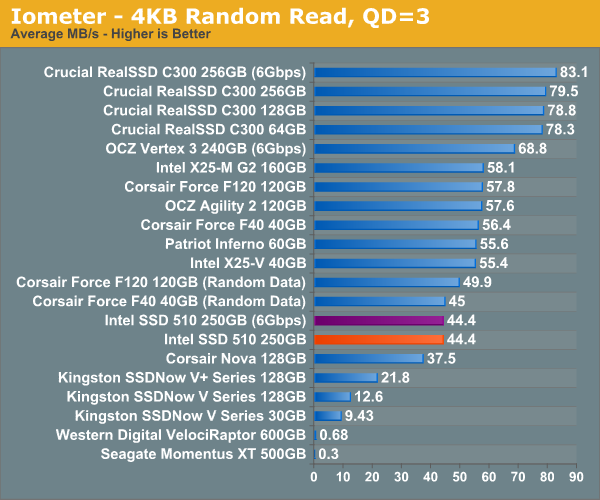

Random read performance is just as disappointing. The X25-M G2 took random read performance seriously but the 510 is less than 20% faster than the Indilinx based Corsair Nova. When I said the Intel SSD 510's random performance is decidedly last-generation, I meant it.

128 Comments

Squuiid - Friday, March 4, 2011 - link

+1

They were the 1st of this next gen to be available, yet NOBODY has reviewed them.

Based on the 2nd Gen Marvell controller I believe, à la C400.

Luke212 - Friday, March 4, 2011 - link

Anand, I am looking to implement SSDs in application servers and I need to know how they go in RAID 1 over time. No one seems to test this! So I am stuck with magnetic drives!!

sean.crees - Friday, March 11, 2011 - link

Anyone else notice the Samsung 470 near or at the top of most benchmarks on a 3Gbps controller? Is this the SSD in current MacBook Pros? I haven't seen a review posted to Anandtech about this specific device.

daidaloss - Tuesday, March 15, 2011 - link

I'm curious as to how this SSD stacks up when compared to this unit: the SATA2 DDR2 HyperDrive5 from http://www.hyperossystems.co.uk/.

Maybe sometime in the future, Anand will consider this RAM drive.

tygrus - Wednesday, May 11, 2011 - link

Not specific to Intel 510 SSD:

Sequential performance after several full disk GB rewrites?

The LBA remapping for wear levelling must make more of the disk look random (not sequential) after every block has been re-written several times. It's a torture test to see how it can handle reading large files that have been spread over several non-sequential NAND blocks. Or does it not matter as much because the controller can optimise access to several NAND dies at once? Does it only remap 512KB at a time, or do the 512KB blocks have non-sequential 4KB LBAs written to them?

Does SSD performance approach random R/W performance after long-term heavy use?

gaffe - Tuesday, October 11, 2011 - link

Just an anonymous tip. I happen to know this data is wildly inaccurate because my friend is a reliability engineer at a major company.

WHY DON'T YOU DO A SMALL RELIABILITY TEST OF YOUR OWN TO SEE FOR YOURSELF HOW UNLIKELY IT IS THAT THIS DATA IS ACCURATE.

It seems you have tested probably 20 SSDs for your reviews. So, how many of them have failed on you during testing? How many during the course of the past 3 years? What's the failure rate average across all manufacturers?

Even though manufacturers probably send you their best tested units for review, and your sample size is small, etc. I am willing to bet you REAL MONEY that the failure rate will be more than 3% even in a sample size of just 20.

How about we buy 20 SSDs today and in 3 years see who is right, loser buys em all, winner gets em (you can keep the failed ones)?

Any takers?

gaffe - Tuesday, October 11, 2011 - link

Oh and P.S.

You forgot to mention above that failure rates are generally PER YEAR. So that's a 3% chance it fails EACH YEAR. And it's still wrong by double or triple (it's closer to 9%).

gaffe - Tuesday, October 11, 2011 - link

Sorry to keep piling on, but it bothers me so much that this inaccurate data is out there and people are believing it that I also want to mention this data does not even say which models were tested. These are probably all enterprise grade drives, which do not even apply to the consumers who are reading this article, and, as I said above, it's STILL INACCURATE!