The last time we discussed CUDA and Tesla in depth was in September of 2010. At the time NVIDIA had recently launched their lineup of Fermi-powered Tesla products, and was using the occasion to announce version 3.2 of their CUDA GPGPU toolchain. And although discussions of the GPU industry’s fast pace normally center on NVIDIA’s massive consumer GPU business, the Tesla and Quadro businesses are not to be underestimated. An aggressive 6-month refresh schedule, it seems, is good not just for consumer products but for the professional side too.

Even against the backdrop of a 6-month refresh schedule, quite a bit has changed in the intervening period. NVIDIA’s Parallel Nsight, which we first discussed in depth only back in September, has gone free, with NVIDIA realizing that charging for the software wasn’t going to sell as many GPUs and that no one likes dealing with software licensing. Meanwhile the first (and thus far only) Fermi card for the Mac was launched in the form of a Quadro card, helping NVIDIA go after the all-important niche of *nix programmers working on Mac desktops. Even the financial side of things is showing some change: NVIDIA has just closed out Fiscal Year 2011 with nearly $100 million in Tesla sales, which, at around 2.8% of NVIDIA’s revenue, is the highest Tesla revenue has ever been. Surprisingly enough, the only thing we haven’t seen is a Tesla refresh. We had GF110 pegged as an obvious upgrade for the Tesla line, which under GF100 continues to ship with only 448 SPs enabled to help meet the necessary 225W power envelope.

Meanwhile the CUDA team has been hard at work on the successor to CUDA 3.2, which brings us to today’s announcement: NVIDIA is announcing CUDA 4.0, the next full version of the toolchain. As is customary for CUDA given its long QA cycle, NVIDIA is making their formal announcement well before the final version ships. The first release candidate will be available to registered developers on March 4th, and based on NVIDIA’s previous CUDA releases we’d expect the final version to follow a couple of months later.

CUDA 4.0 ends up being an interesting release as it breaks with NVIDIA’s previous release schedules somewhat. Previous CUDA releases were timed with the launch of hardware: CUDA 1.0 was released to go with G80/G9x (albeit nearly a year after they launched), CUDA 2.0 was released for GT200 in 2008, and CUDA 3.0 was released for Fermi in 2010. In the case of CUDA 4.0 there’s no new hardware to talk about at the moment, so it’s the first independent software-only major CUDA release. I’d expect that NVIDIA will still be on CUDA 4.x by the time Kepler launches, but that’s still several months out.

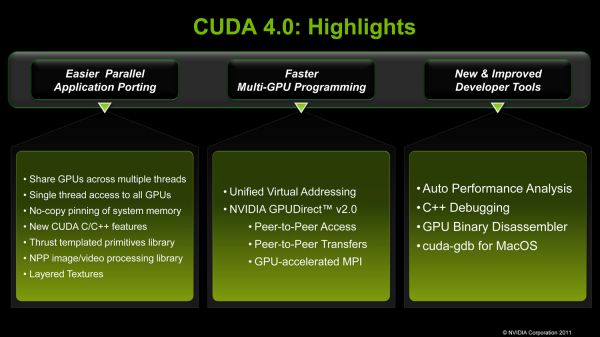

So what’s new in CUDA 4.0? As an independent software release, its biggest focus is on the multi-GPU GPGPU performance of existing Fermi products. This is the next logical step for the company, as previous CUDA releases have steadily drilled down: the basic CUDA framework was suited to embarrassingly parallel tasks that didn’t require communication between GPUs, and CUDA 3.x introduced GPUDirect, giving 3rd party devices direct access to CUDA memory. CUDA 4.0 is the next step on that long path, enabling multiple GPUs within the same system/node to work together more closely by making it easier for GPUs to access each other’s memory.

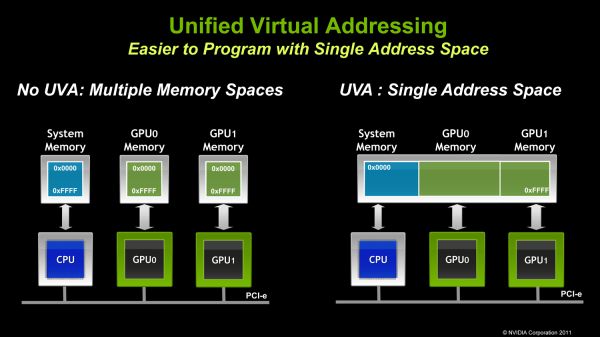

Specifically, NVIDIA is doing a few things here. On the software side NVIDIA is introducing a new unified virtual address space mode (aptly named Unified Virtual Addressing, or UVA), which puts the memory of the CPU and every GPU into a single shared address space. Prior to this, the CPU and each GPU used their own virtual address spaces, which required a number of additional steps and careful tracking on the part of CUDA software to copy data structures between them. This would seem to be riskier on the driver side, which now has to keep GPUs and CPUs from stomping on each other (hence the long QA cycle), but for CUDA developers the benefit is straightforward: memory management gets easier.
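To make the idea concrete, here is a toy C++ model of a unified address space. It is a sketch under our own assumptions, not how NVIDIA’s driver actually lays out memory: each device (the CPU or a GPU) gets its own non-overlapping window of one 64-bit address space, so a bare pointer value is enough to tell which memory it points into. That property is what lets CUDA 4.0 infer a copy’s direction (the `cudaMemcpyDefault` copy kind) or report where a pointer lives (`cudaPointerGetAttributes`). The class, window size, and function names below are hypothetical.

```cpp
#include <cstddef>
#include <cstdint>
#include <optional>
#include <stdexcept>
#include <vector>

// Hypothetical toy model, NOT NVIDIA's real layout: one 64-bit virtual
// address space split into fixed, non-overlapping windows, one per device.
// Device -1 is the CPU; devices 0..num_gpus-1 are GPUs.
class UnifiedAddressSpace {
public:
    static constexpr std::uint64_t kWindow = 1ull << 40;  // 1 TiB per device (arbitrary)

    explicit UnifiedAddressSpace(int num_gpus)
        : num_gpus_(num_gpus), cursor_(static_cast<std::size_t>(num_gpus) + 1, 0) {}

    // First address of the window owned by `device`; address 0 stays unused (null).
    static std::uint64_t base(int device) {
        return static_cast<std::uint64_t>(device + 2) * kWindow;
    }

    // Bump-allocate `bytes` inside the owning device's window.
    std::uint64_t allocate(int device, std::uint64_t bytes) {
        std::uint64_t& cursor = cursor_.at(static_cast<std::size_t>(device + 1));
        if (bytes > kWindow - cursor) throw std::runtime_error("window exhausted");
        std::uint64_t addr = base(device) + cursor;
        cursor += bytes;
        return addr;
    }

    // Recover the owning device from the address alone (what UVA makes possible).
    std::optional<int> owner(std::uint64_t addr) const {
        if (addr < base(-1)) return std::nullopt;
        int device = static_cast<int>(addr / kWindow) - 2;
        if (device >= num_gpus_) return std::nullopt;
        return device;
    }

private:
    int num_gpus_;
    std::vector<std::uint64_t> cursor_;
};

enum class CopyKind { HostToHost, HostToDevice, DeviceToHost, DeviceToDevice };

// Roughly what a "default" copy kind can infer once every pointer is unified:
// the caller passes only dst/src, and the direction falls out of the addresses.
CopyKind infer_copy_kind(const UnifiedAddressSpace& uas, std::uint64_t dst, std::uint64_t src) {
    bool dst_on_gpu = uas.owner(dst).value() >= 0;  // .value() throws on unknown pointers
    bool src_on_gpu = uas.owner(src).value() >= 0;
    if (src_on_gpu) return dst_on_gpu ? CopyKind::DeviceToDevice : CopyKind::DeviceToHost;
    return dst_on_gpu ? CopyKind::HostToDevice : CopyKind::HostToHost;
}
```

The design choice that matters is the disjoint windows: without them, the same numeric address could mean different memory on different devices, and the programmer would have to state the copy direction explicitly, as with the pre-UVA `cudaMemcpyHostToDevice`-style copy kinds.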

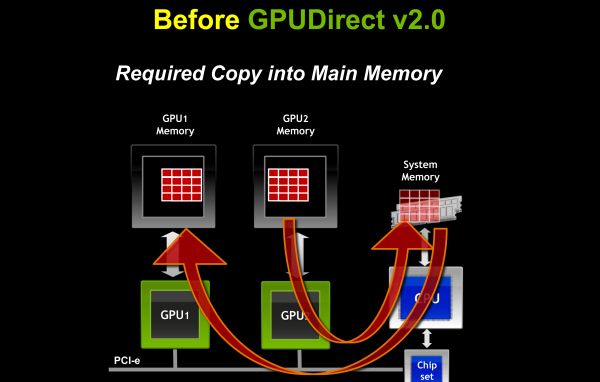

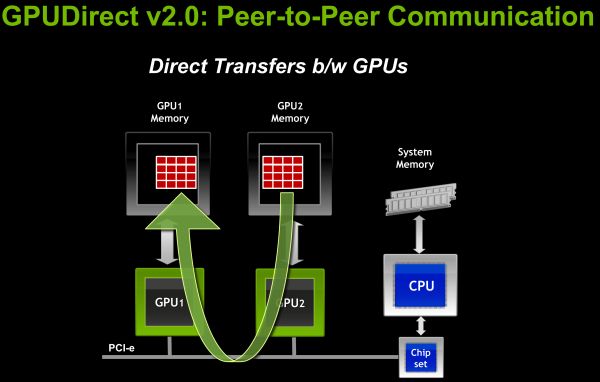

Meanwhile on the hardware side NVIDIA is introducing GPUDirect 2.0. While GPUDirect 1.0 gave 3rd party devices direct memory access, it was primarily intended for network/InfiniBand communication; GPUs within a node were still isolated in most cases, so data structures had to be copied to system RAM before any additional GPUs could access them. GPUDirect 2.0 resolves this by allowing GPUs within a node to directly access each other’s memory without a system memory copy first. And while system memory is by no means slow, this is still much faster: with fully fed PCIe 2.0 x16 slots, each GPU gets 8GB/sec of low-latency, full-duplex bandwidth to use between the CPU and other GPUs. From our impressions we’d categorize GPUDirect 2.0 as very NUMA-like (Non-Uniform Memory Access), with an important distinction between local and remote memory, since PCIe bandwidth is only a fraction of the speed of local memory: 8GB/sec versus 148GB/sec for a Tesla card, for example.

The addition of UVA on the software side and GPUDirect 2.0 on the hardware side are NVIDIA’s primary tactics for improving multi-GPU performance. PCIe’s limited bandwidth means that GPU-to-GPU communication will not approach CPU-to-CPU communication speeds in the near future, so SMP-like operation is still some time off, but it should be fast enough to let developers tackle new classes of problems that were too slow without UVA/GPUDirect.
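A back-of-the-envelope C++ model shows why both GPUDirect 2.0 and the local/remote gap matter. It uses the figures quoted above (8GB/sec per direction over PCIe, 148GB/sec for a Tesla card’s local memory), assumes the staged copy’s two PCIe hops run back to back rather than pipelined, and ignores latency and driver overhead. The function names are hypothetical; in the CUDA 4.0 runtime the direct path corresponds to calls such as `cudaDeviceEnablePeerAccess` and `cudaMemcpyPeer`.

```cpp
// Bandwidth figures taken from the text; everything else is a simplifying assumption.
constexpr double kPcieGBps  = 8.0;    // PCIe 2.0 x16, per direction
constexpr double kLocalGBps = 148.0;  // Tesla on-board memory bandwidth

// Pre-GPUDirect 2.0: GPU0 -> system RAM -> GPU1, i.e. two PCIe transfers.
// Assumes the two hops are not overlapped (no pipelining).
double staged_copy_ms(double gigabytes) {
    return 2.0 * gigabytes / kPcieGBps * 1000.0;
}

// GPUDirect 2.0 peer-to-peer: one PCIe transfer straight into the other GPU.
double peer_copy_ms(double gigabytes) {
    return gigabytes / kPcieGBps * 1000.0;
}

// The owning GPU reading the same data from its own memory.
double local_read_ms(double gigabytes) {
    return gigabytes / kLocalGBps * 1000.0;
}

// How many times slower remote (PCIe) access is than local memory.
double remote_penalty() {
    return kLocalGBps / kPcieGBps;
}
```

For a 1GB buffer this model gives 250ms for the staged copy versus 125ms peer-to-peer, while the owning GPU could stream the same data from local memory in under 7ms. Direct access halves the copy time, but remote memory stays roughly 18.5x slower than local memory, which is why this is NUMA-like rather than SMP-like.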

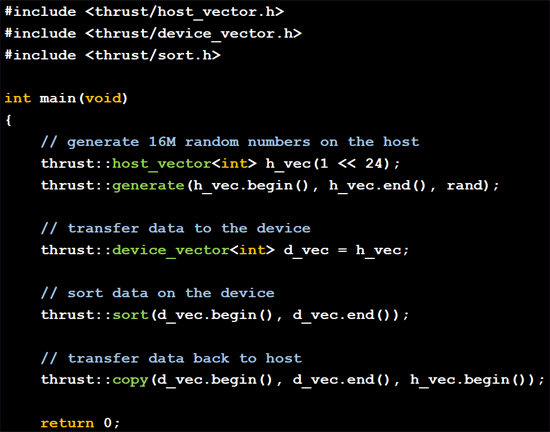

Along with multi-GPU performance, NVIDIA is of course giving considerable focus to single-GPU and overall performance. CUDA 4.0 follows up on CUDA 3.2’s additional libraries with yet another set of performance-optimized libraries. Thrust, an open source CUDA template library that mimics the C++ Standard Template Library (STL), is being integrated into CUDA proper. Thrust has been available for a couple of years now as an external library that NVIDIA developed as a research project, and is now being promoted to a member of the CUDA family. C++ programmers used to the STL stand to gain the most, as Thrust is nearly identical and can automatically handle assigning work to GPUs or CPUs as necessary.
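For readers who haven’t used Thrust, the sketch below is ordinary host C++ using the STL. The comments show roughly how the same steps look with Thrust’s `thrust::device_vector`, `thrust::sort`, and `thrust::reduce`, which run on the GPU when handed device iterators. The function name is hypothetical, and the Thrust lines are illustrative comments rather than tested code.

```cpp
#include <algorithm>
#include <numeric>
#include <utility>
#include <vector>

// Plain STL: sort a vector and sum it on the CPU.
// A Thrust version would look nearly identical, e.g.:
//   thrust::device_vector<int> d(h.begin(), h.end()); // copy to the GPU
//   thrust::sort(d.begin(), d.end());                 // GPU sort
//   int sum = thrust::reduce(d.begin(), d.end());     // GPU reduction
//   thrust::copy(d.begin(), d.end(), h.begin());      // copy back
std::pair<std::vector<int>, long long> sort_and_sum(std::vector<int> h) {
    std::sort(h.begin(), h.end());
    long long sum = std::accumulate(h.begin(), h.end(), 0LL);
    return {std::move(h), sum};
}
```

The point of the comparison is that the container-plus-algorithm structure carries over unchanged; what changes is mainly the container type, which tells Thrust where the work should run.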

CUDA C++ is also being improved with C++ features that were absent under CUDA 3.x: virtual functions are now supported, along with the new and delete operators for dynamic memory allocation. NVIDIA noted that with CUDA 4.0 they’re shifting to working on developer requests, and both of these features were highly requested. We also asked NVIDIA what C++ adoption by developers had been like (C++ support being an important part of the Fermi hardware), but unfortunately NVIDIA doesn’t have the means to precisely track which languages developers are actually using. Still, it sounds like adding C++ was an appropriate choice for the company.
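As a quick illustration of the two new features together, the hypothetical classes below combine virtual dispatch with `new`/`delete`. This is standard C++ and compiles as host code; our assumption is that under CUDA 4.0 the same pattern would be written with the member functions and the helper marked `__device__` and run inside a kernel, with the objects created on the GPU rather than copied over from the host, since, as we understand it, an object’s virtual table pointer is only valid on the side that constructed it.

```cpp
// Hypothetical example classes. In CUDA 4.0 device code, each member function
// (and total_area) would additionally carry the __device__ qualifier.
struct Shape {
    virtual ~Shape() = default;
    virtual double area() const = 0;
};

struct Square : Shape {
    double side;
    explicit Square(double s) : side(s) {}
    double area() const override { return side * side; }
};

struct Rect : Shape {
    double w, h;
    Rect(double width, double height) : w(width), h(height) {}
    double area() const override { return w * h; }
};

// Allocates shapes dynamically and sums their areas through virtual calls.
double total_area(int count) {
    double total = 0.0;
    for (int i = 0; i < count; ++i) {
        Shape* shape = (i % 2 == 0) ? static_cast<Shape*>(new Square(2.0))
                                    : static_cast<Shape*>(new Rect(1.0, 3.0));
        total += shape->area();  // resolved at run time via the vtable
        delete shape;            // virtual destructor frees the right type
    }
    return total;
}
```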

Finally, the last set of improvements NVIDIA is focusing on involves the developer tools themselves. Coming back again to the Mac/*nix market, NVIDIA has added CUDA debugging support to Mac OS X, so *nix CUDA developers working on Macs will now be able to debug their code right on their machines. Meanwhile NVIDIA’s Visual Profiler performance profiling tool is getting an upgrade of its own: previously it could identify bottlenecks in code, and now it can also offer hints on how to improve performance at those bottlenecks. Lastly, the CUDA toolkit will now include a binary disassembler for analyzing the output of the CUDA compiler.

Wrapping things up, as we mentioned before, the first release candidate of CUDA 4.0 will be available to registered developers on March 4th. NVIDIA doesn’t have a committed date for the release version, but we expect it to be available a couple of months later based on NVIDIA’s previous CUDA releases.

44 Comments


moozoo - Monday, February 28, 2011

and everyone uses Windows. Or did I miss a DX for Linux and Mac OS announcement... For those that require the feature "works on platforms other than Windows", DX is a massive fail.

ET - Tuesday, March 1, 2011

Wine includes DX compatibility.

ET - Tuesday, March 1, 2011

It's not. OpenGL has often been behind DX, but it keeps catching up. OpenGL 4.0 was released quite a few months after DX11, but it did catch up, and OpenGL (currently at 4.1) offers some features not available in DX11. That's not something new, actually. OpenGL has always had some features not supported by Direct3D.

So while OpenGL will probably never be at the front line of any new 3D generation, it's not "way behind DX now".

habibo - Monday, February 28, 2011

NVIDIA has been one of the primary supporters of OpenCL since its inception. They're always the first with drivers and continue to support OpenCL today.

They continue to develop CUDA to give developers access to new hardware features sooner than they would otherwise have them with OpenCL, which, like all open standards, suffers from the "design by committee" and lowest common denominator problems. Furthermore, CUDA and OpenCL have different programming models. Some developers prefer one over the other, so there will possibly always be a demand for both languages. There are many languages for programming CPUs; I'm unclear as to why having more than one language for programming GPUs is heresy. To claim that "NVIDIA is far behind OpenCL" simply shows a shocking ignorance of the GPU computing landscape.

moozoo - Monday, February 28, 2011

I hate to break it to you, but Nvidia basically pulled the plug on all their OpenCL work once they were beaten by AMD to release OpenCL 1.1 drivers.

Their OpenCL 1.1 has been stuck in beta for 8 months now.

http://forums.nvidia.com/index.php?showtopic=19374...

My guess is that they transferred all their software development resources to Parallel Nsight and, apparently, CUDA 4.0.

habibo - Monday, February 28, 2011

You're not breaking anything to me. :-)

Khronos Group certified NVIDIA's OpenCL 1.1 drivers as the first conformant implementation back in July:

http://www.khronos.org/adopters/conformant-product...

Since they were the first available OpenCL 1.1 drivers that were certified conformant, I'm not sure where you got the idea that AMD beat them to it. Is it because AMD "released" theirs and NVIDIA's were just "pre-release"? As for why NVIDIA does not consider these drivers more than pre-release after successfully passing all of the Khronos conformance tests, I have no idea. It certainly seems odd that NVIDIA would not officially release the drivers.

You're right, though: NVIDIA invests far more heavily in CUDA than in OpenCL because CUDA makes them money and OpenCL does not. This has nothing to do with proprietary vs open, however. It has to do with the fact that no one uses OpenCL. If OpenCL had even the modest adoption rate of OpenGL, I'm sure NVIDIA would invest in it the way they do with OpenGL.

Namarrgon - Wednesday, March 2, 2011

True enough, if by "available" you mean "can only be downloaded by registered developers who have GTX 465 hardware or earlier".

Fact is, after 8 months I still can't use OpenCL 1.1 features in my visual fx app because none of the 90% of my customers who use nVidia hardware can actually get a driver that supports it. Not to mention that many of them have cards that aren't supported by that 8-month-old developer-release driver. What's the use of being "first" if it's on paper only?

Since 10% of my customers do use ATI cards, CUDA is not an option for me. OpenCL has just as much potential to sell cards for them as CUDA, probably more, but this ongoing "delay" to release final drivers is looking suspiciously deliberate, despite nVidia's claim to be open & API-agnostic.

B3an - Monday, February 28, 2011

I wondered how long it would be before morons like yourself start commenting about "openness".

All you people have one thing in common - you have absolutely no idea what you're talking about. Most of you tools will even be making comments like this on OS's that are open, and happily using proprietary software and hardware.

raddude9 - Tuesday, March 1, 2011

Wow, bitter.

What in my comment makes you think that I don't know what I'm talking about? Please point it out. I in fact do know about the level of openness of the OS's and tools I use.

ET - Tuesday, March 1, 2011

I agree and disagree. NVIDIA is catering to the high performance crowd and CUDA users will definitely appreciate this update. A GPGPU programmer will find this CUDA version a lot easier to use than OpenCL, so NVIDIA is doing a good job here.

On the other hand I agree that for the consumer market NVIDIA is deliberately working against the use of AMD graphics cards alongside NVIDIA ones, which is annoying.