AMD’s GTX 560 Ti Counter-Offensive: Radeon HD 6950 1GB & XFX’s Radeon HD 6870 Black Edition

by Ryan Smith on January 25, 2011 12:20 PM EST

Power, Temperature, & Noise

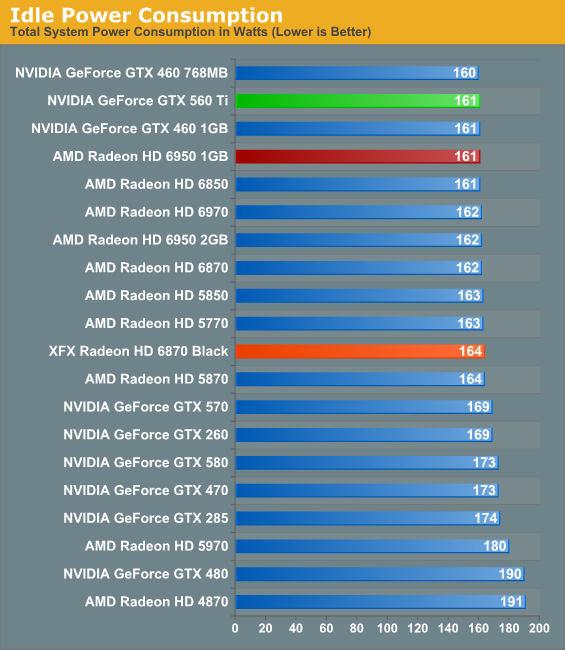

As was the case with gaming performance, we’ll keep our running commentary thin here. The Radeon HD 6950 1GB is virtually identical to the 2GB card, so other than a few watts power difference (which can easily be explained by being an engineering sample) the two are equals. It’s the XFX Radeon HD 6870 Black Edition that has caught our attention.

Radeon HD 6800/6900 Series Load Voltage

| Ref 6870 | XFX 6870 | Ref 6950 2GB | Ref 6950 1GB |
| --- | --- | --- | --- |
| 1.172V | 1.172V | 1.1V | 1.1V |

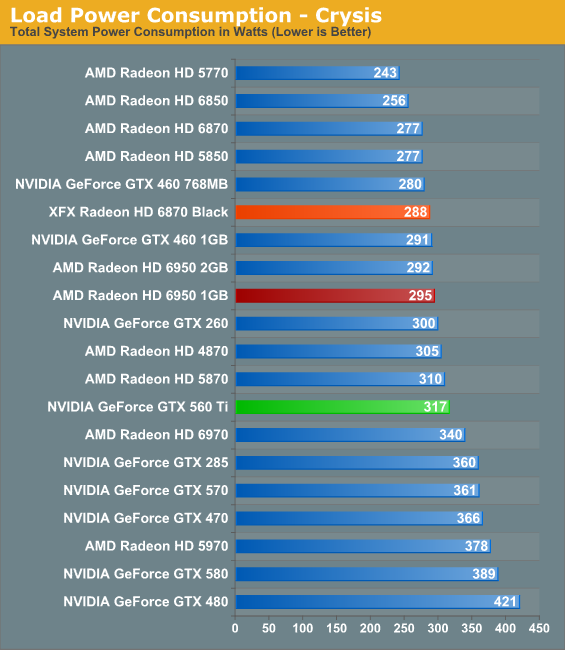

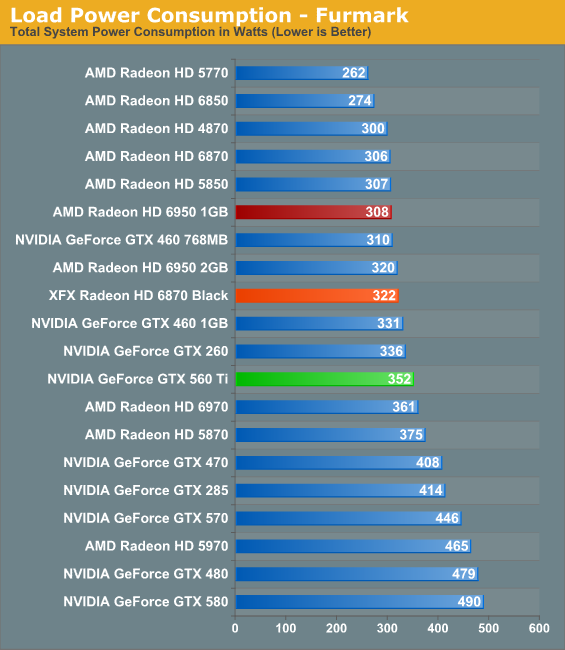

While the XFX 6870 has the same load voltage as the reference 6870, between the change in cooler and the higher core and memory frequencies, power usage still goes up. Under Crysis the increase is 11W, and under FurMark it expands to 16W. Unfortunately this factory overclock has wiped out much of the 6870’s low power edge versus the 6950, and as a result the two end up being very close. In practice the XFX card’s power consumption under load is nearly identical to that of the GTX 460 1GB, albeit with much better gaming performance.

Meanwhile this is one of the few times we’ll see a difference between the 1GB and 2GB 6950. At idle and under Crysis the two are nearly identical, but under FurMark the 1GB reduces power consumption by some 12W even with PowerTune in effect. We believe that this is due to the higher operating voltage of the 2Gb GDDR5 modules AMD is using on the 2GB card.

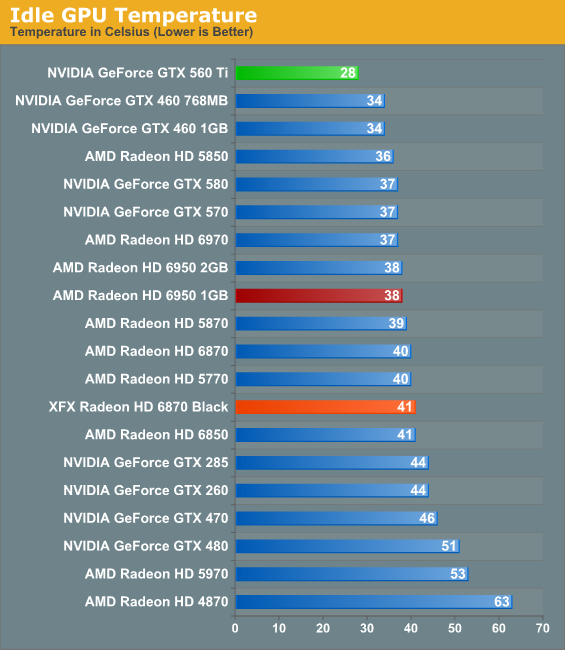

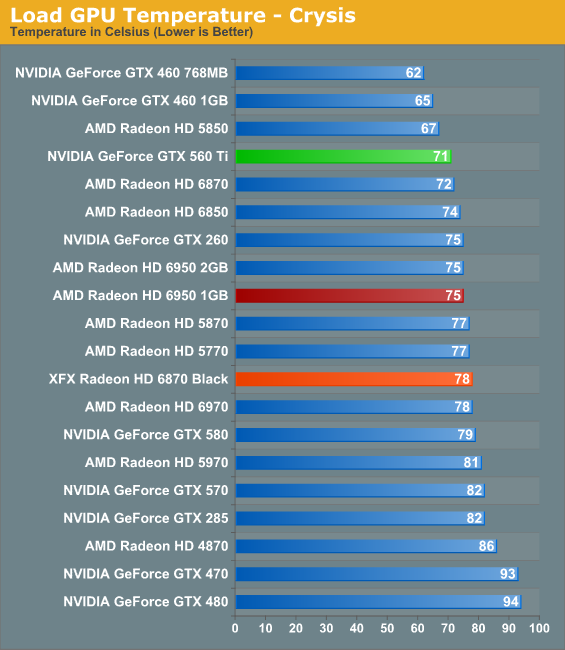

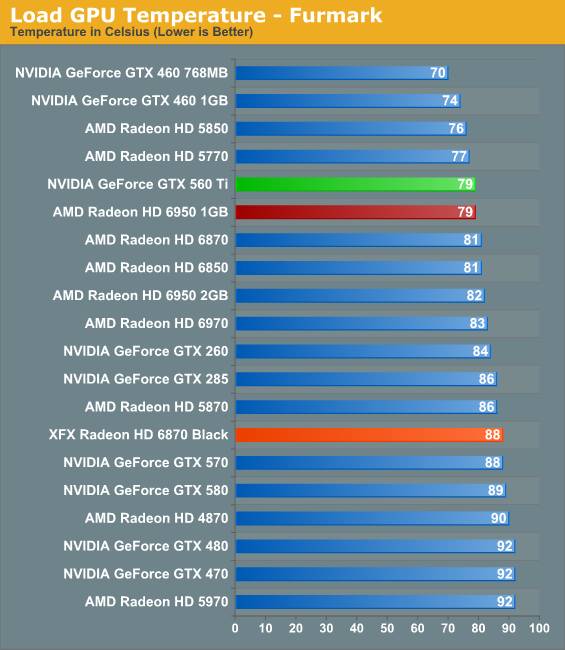

As far as temperatures go, both cards are in the middle of the pack. The vapor chamber cooler on the 6900 series already gives it a notable leg up over most cards, including the XFX 6870. At 41C the XFX card is a bit warm at idle, while 78C under load is normal for most cards of this class. The 6950 1GB and 2GB perform identically, even with the power consumption difference between the two.

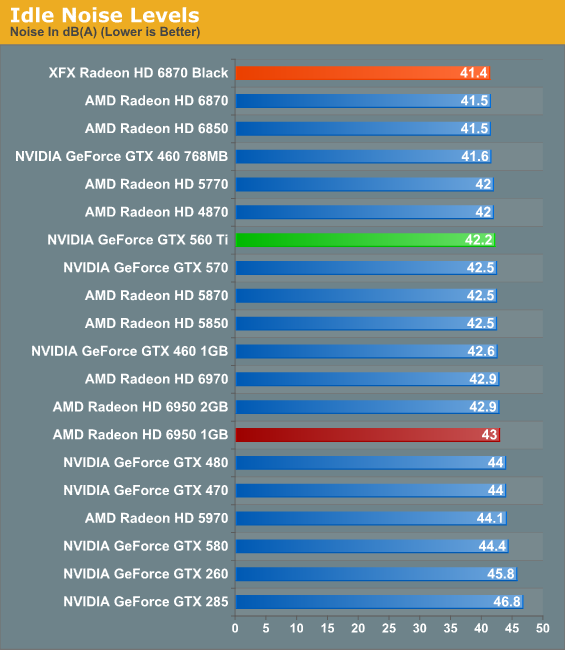

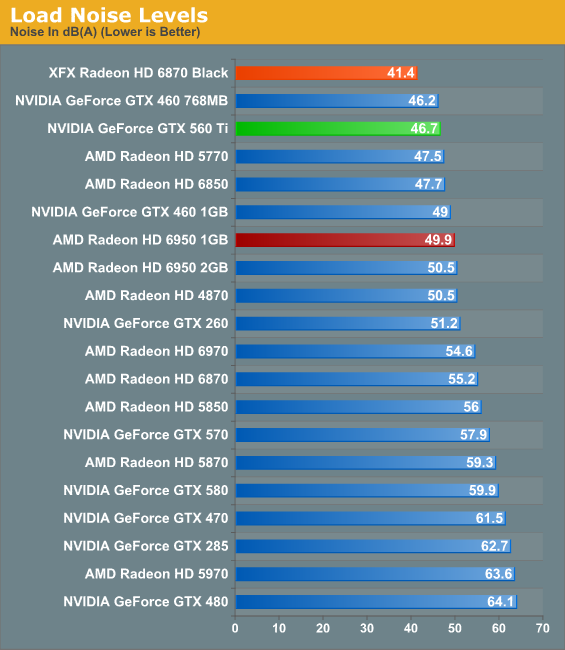

Last but certainly not least we have our noise testing, and this is the point where the XFX 6870 caught our eye. The reference 6870 was an unremarkable card when it came to noise: it didn’t use a particularly advanced cooling design, and coupled with the use of a blower it ended up being louder than a number of cards, including the vapor chamber-equipped Radeon HD 6970. The XFX 6870 reverses this fortune and then some due to XFX’s well-designed open-air cooler. At idle it edges out our other cards by a mere 0.1dB, but the real story is at load. And no, that’s not a typo in the load noise chart: the XFX Radeon HD 6870 Black Edition really is that quiet.

In fact at 41.4dB under load, the XFX 6870 is for all intents and purposes a silent card in our GPU testbed. Under load the fans do rev up, but even when doing so the card stays below the noise floor of our testbed. Compared to the reference 6870 we’re looking at a difference of just shy of 14dB, a feat that is beyond remarkable. With the same warning as we attach to the GTX 460 and GTX 560 (you need adequate case cooling to make an open-air card work), the XFX Radeon HD 6870 Black Edition may very well be the fastest actively cooled quiet card on the market.

Meanwhile for the Radeon HD 6950 1GB and 2GB, we’re once again left with results that are nearly indistinguishable. Under load our 1GB card ended up being 0.6dB quieter, an imperceptible difference.
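To put those decibel figures in perspective, here is a minimal Python sketch that converts a level difference in dB into linear ratios. It assumes the chart readings are ordinary sound pressure levels and ignores any frequency weighting. The function names are ours for illustration, and the 14dB input is a rounded stand-in for the "just shy of 14dB" gap quoted above, not an exact chart value.

```python
def pressure_ratio(delta_db: float) -> float:
    """Sound-pressure ratio for a level difference in dB (20*log10 convention)."""
    return 10 ** (delta_db / 20)


def power_ratio(delta_db: float) -> float:
    """Acoustic power ratio for a level difference in dB (10*log10 convention)."""
    return 10 ** (delta_db / 10)


# Level differences taken from the text; 14.0 is an approximation (assumption).
comparisons = [
    ("XFX 6870 vs. reference 6870, load", 14.0),
    ("6950 1GB vs. 6950 2GB, load", 0.6),
]

for label, delta in comparisons:
    print(f"{label}: {delta} dB -> "
          f"{pressure_ratio(delta):.2f}x pressure, {power_ratio(delta):.2f}x power")
```

Under these assumptions a 14dB gap works out to roughly 5x the sound pressure and about 25x the acoustic power, while 0.6dB is only around a 7% change in pressure, which is consistent with calling the latter imperceptible.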

111 Comments

7Enigma - Tuesday, January 25, 2011

Here's the point. There is no measurable difference with it on or not from a framerate perspective. So in this case it doesn't matter. That should tell you that the only possible difference in this instance would be a possible WORSENING of picture quality since the GPU wars are #1 about framerate and #2 about everything else. I'm sure a later article will delve into what the purpose of this setting is for but right now it clearly has no benefit from the test suite that was chosen.

I agree with you though that I would have liked a slightly more detailed description of what it is supposed to do...

For instance is there any power consumption (and thus noise) differences with it on vs. off?

Ryan Smith - Tuesday, January 25, 2011

For the time being it's necessary that we use Use Application Setting so that newer results are consistent with our existing body of work. As this feature did not exist prior to the 11.1a drivers, using it would impact our results by changing the test parameters - previously it wasn't possible to cap tessellation factors like this so we didn't run our tests with such a limitation.

As we rebuild our benchmark suite every 6 months, everything is up for reevaluation at that time. We may or may not continue to disable this feature, but for the time being it's necessary for consistent testing.

Dark Heretic - Wednesday, January 26, 2011

Thanks for the reply Ryan, that's a very valid point on keeping the testing parameters consistent with current benchmark results.

Would it be possible to actually leave the drivers at default settings for both Nvidia and AMD in the next benchmark suite? I know there will be some inconsistent variations between both sets of drivers, but it would allow for a more accurate picture on both hardware and driver level (as intended by Nvidia / AMD when setting defaults).

I use both Nvidia and AMD cards, and do find differences between picture quality / performance from both sides of the fence. However, I also tend to leave drivers at default settings to allow both Nvidia and AMD the benefit of knowing what works best with their hardware on a driver level. I think it would allow for a more "real world" set of benchmark results.

@B3an, perhaps you should have used the phrase "lacking in cognitive function", it's much more polite. You'll have to forgive the oversight of not thinking about the current set of benchmarks overall as Ryan has politely pointed out.

B3an - Wednesday, January 26, 2011

Your post is simply retarded for lack of a better word.

Ryan is completely right in disabling this feature, even though it has no effect on the results (yet) in the current drivers. And it should always be disabled in the future.

The WHOLE point of articles like this is to get the results as fair as possible. If you're testing a game and it looks different and uses different settings on one card to another, how is that remotely fair? What is wrong with you?? Bizarre logic.

It would be the exact same thing as if AMD were to disable AA by default in all games even if the game settings were set to use AA, and then having the nVidia card use AA in the game tests while the AMD card did not. The results would be absolutely useless, no one would know which card is actually faster.

prdola0 - Thursday, January 27, 2011

Exactly. We should compare apples-to-apples. And let's not forget about the FP16 Demotion "optimization" in the AMD drivers that reduces the render target width from R16G16B16A16 to R11G11B10, effectively reducing bandwidth from 64 bits to 32 bits at the expense of quality. All this when the Catalyst AI is turned on. AMD claims it doesn't have any effect on the quality, but multiple sources already confirmed that it is easily visible without much effort in some titles, while in others it is not. However, it affects performance by up to 17%. Just google "fp16 demotion" and you will see plenty of articles about it.

burner1980 - Tuesday, January 25, 2011

Thanks for not listening to your readers.

Why do you have to include an apples-to-oranges comparison again?

Is it so hard to test non-OC vs. non-OC and OC vs. OC?

The article itself is fine, but please stop this practice.

Proposal for another review: Compare ALL current factory stock graphics card models with their highest "reasonable" overclock against each other. Which value does the customer get when taking OC into (buying) consideration?

james.jwb - Tuesday, January 25, 2011

quite a good idea if done correctly. Sort of 460's and above would be nice to see.

AnnonymousCoward - Thursday, January 27, 2011

Apparently the model number is very important to you. What if every card above 1MHz was called OC? Then you wouldn't want to consider them. But the 6970@880MHz and 6950@800MHz are fine! Maybe you should focus on price, performance, and power, instead of the model name or color of the plastic.

I'm going to start my own comments complaint campaign: Don't review cards that contain any blue in the plastic! Apples to apples, people.

AmdInside - Tuesday, January 25, 2011

Can someone tell me where to find a 6950 for ~$279? Sorry but after rebates do not count.

Spoelie - Tuesday, January 25, 2011

If you look at the numbers, the 6870BE is more of a competitor than the article text would make you believe - in the games where the nvidia cards do not completely trounce the competition.

Look at the 1920x1200 charts of the following games and tell me the 6870BE is outclassed:

*crysis warhead

*metro

*battlefield (except waterfall? what is the point of that benchmark btw)

*stalker

*mass effect2

*wolfenstein

If you now look at the remaining games where the NVIDIA card owns:

*hawx (rather inconsequential at these framerates)

*civ5

*battleforge

*dirt2

You'll notice in those games that the 6950 is just as outclassed. So you're better off with an nvidia card either way.

It all depends on the games that you pick, but a blanket statement that 6870BE does not compete is not correct either.