AMD’s GTX 560 Ti Counter-Offensive: Radeon HD 6950 1GB & XFX’s Radeon HD 6870 Black Edition

by Ryan Smith on January 25, 2011 12:20 PM EST

After nearly a year and a half as AnandTech’s senior GPU editor, and more late-night GPU launches than I care to count, there’s a very specific pattern I’ve picked up on: the GPU market may be competitive, but it’s the $200-$300 range that really brings out the insanity. I’m not sure if it’s the volume, the profit margins, or just the desire to be seen as affordable, but AMD and NVIDIA seem to pull out all the stops to one-up each other whenever either side plans on launching a new video card in this price range.

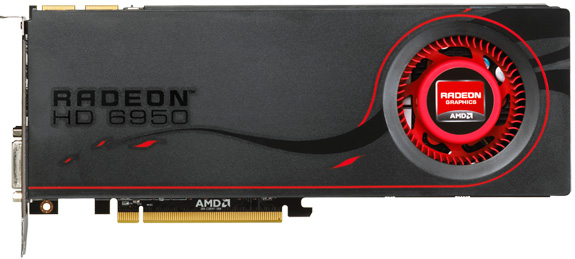

Today was originally supposed to be about the newly released GeForce GTX 560 Ti – NVIDIA’s new GF114-based $250 video card. Much as was the case with the launch of AMD’s Radeon HD 6800 series, however, AMD is itching to spoil NVIDIA’s launch with their own push. Furthermore, they intend to do so on two fronts: directly above the GTX 560 Ti at $259 is the Radeon HD 6950 1GB, and somewhere below it is one of many factory overclocked Radeon HD 6870 cards, in our case an XFX Radeon HD 6870 Black Edition. The Radeon HD 6950 1GB is effectively the GTX 560 Ti’s direct competition, while the overclocked 6870 serves as the price spoiler.

It wasn’t always meant to be this way, and indeed 5 days ago things were quite different. But before we get too far, let’s quickly discuss today’s cards.

| | AMD Radeon HD 6970 | AMD Radeon HD 6950 2GB | AMD Radeon HD 6950 1GB | XFX Radeon HD 6870 Black | AMD Radeon HD 6870 |
|---|---|---|---|---|---|
| Stream Processors | 1536 | 1408 | 1408 | 1120 | 1120 |
| Texture Units | 96 | 88 | 88 | 56 | 56 |
| ROPs | 32 | 32 | 32 | 32 | 32 |
| Core Clock | 880MHz | 800MHz | 800MHz | 940MHz | 900MHz |
| Memory Clock | 1.375GHz (5.5GHz effective) GDDR5 | 1.25GHz (5.0GHz effective) GDDR5 | 1.25GHz (5.0GHz effective) GDDR5 | 1.15GHz (4.6GHz effective) GDDR5 | 1.05GHz (4.2GHz effective) GDDR5 |
| Memory Bus Width | 256-bit | 256-bit | 256-bit | 256-bit | 256-bit |
| Frame Buffer | 2GB | 2GB | 1GB | 1GB | 1GB |
| FP64 | 1/4 | 1/4 | 1/4 | N/A | N/A |
| Transistor Count | 2.64B | 2.64B | 2.64B | 1.7B | 1.7B |
| Manufacturing Process | TSMC 40nm | TSMC 40nm | TSMC 40nm | TSMC 40nm | TSMC 40nm |
| Price Point | $369 | ~$279 | $259 | $229 | ~$219 |
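For readers who want to turn the memory rows of the table into a single number, peak theoretical memory bandwidth is the effective data rate multiplied by the bus width, divided by 8 to convert bits to bytes. The following is a minimal sketch using the table's figures; the `CARDS` dictionary and the `peak_bandwidth_gbs` function are illustrative names of our own, not anything from AMD's tooling.

```python
# Peak theoretical memory bandwidth:
#   effective data rate (Gbps per pin) * bus width (bits) / 8 bits per byte
# Clock and bus figures come from the spec table; all names here are illustrative.

CARDS = {
    "Radeon HD 6970": (5.5, 256),
    "Radeon HD 6950 2GB": (5.0, 256),
    "Radeon HD 6950 1GB": (5.0, 256),
    "XFX Radeon HD 6870 Black": (4.6, 256),
    "Radeon HD 6870": (4.2, 256),
}


def peak_bandwidth_gbs(effective_gbps, bus_width_bits):
    """Return peak theoretical memory bandwidth in GB/s."""
    return effective_gbps * bus_width_bits / 8


if __name__ == "__main__":
    for name, (rate, width) in CARDS.items():
        print(f"{name}: {peak_bandwidth_gbs(rate, width):.1f} GB/s")
```

By this arithmetic both 6950 variants land at 160 GB/s, which fits the point that the 1GB card gives up capacity rather than bandwidth.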

Back when the Radeon HD 6950 launched, AMD told us to expect 1GB cards sometime in the near future as a value option. Because the 6900 series is using fairly new 2Gb GDDR5, such chips are still in short supply and cost more than the very common and very available 1Gb variety. It’s not a massive difference once you add up the bill of materials on a video card, but for the card manufacturers, if they can save $10 on RAM then that’s $10 they can mark down a card and snag that many more sales. Furthermore we’re not quite to the point where 2GB is essential in the sub-$300 market, where 2560x1600 monitors are rare, so the performance penalty isn’t a major concern. As a result it was only a matter of time until 1GB 6900 series cards hit the market, to fill in the gap until 2Gb GDDR5 came down in price.

For the Radeon HD 6950 1GB, today is finally that day. Truth be told it’s actually a bit anticlimactic – the reference 6950 1GB is virtually identical to the reference 6950 2GB. It’s the same PCB attached to the same vapor chamber cooler with the same power and heat characteristics. There is one and only one difference: the 1GB card uses eight 1Gb GDDR5 chips, and the 2GB card uses eight 2Gb GDDR5 chips. Everything else is equal, and indeed when the 6950 is not RAM limited even the performance is equal.
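The gigabit-versus-gigabyte distinction is easy to trip over, so here is a short sketch of the capacity arithmetic: chip density is quoted in gigabits (Gb), while the frame buffer is quoted in gigabytes (GB). The function name `frame_buffer_gb` is ours, chosen purely for illustration.

```python
# Frame buffer capacity from chip count and per-chip density.
# Density is in gigabits (Gb); dividing by 8 converts the total to gigabytes (GB).
# The function name is illustrative only.

def frame_buffer_gb(chip_count, chip_density_gbit):
    """Return total memory capacity in GB."""
    return chip_count * chip_density_gbit / 8


if __name__ == "__main__":
    print("6950 1GB:", frame_buffer_gb(8, 1), "GB")  # eight 1Gb chips
    print("6950 2GB:", frame_buffer_gb(8, 2), "GB")  # eight 2Gb chips
```

Since the chip count is the same on both cards, only the density changes, which is why the same PCB can serve both.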

The second card we’re taking a quick look at is the XFX Radeon HD 6870 Black Edition, the obligatory factory overclocked Radeon HD 6870. Utilizing XFX’s open-air custom HSF, it’s clocked at 940MHz core and 1150MHz (4.6Gbps data rate) memory, representing a 40MHz (about 4%) core overclock and a 100MHz (nearly 10%) memory overclock. Truth be told it’s not much of an overclock, and if it wasn’t for the cooler it wouldn’t be a very remarkable card as far as factory overclocking goes; for that reason it’s almost a footnote today. But it wasn’t meant to be, and that’s where our story begins.
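The overclock percentages quoted above are simple ratios against the reference 6870 clocks of 900MHz core and 1050MHz memory. As a quick sanity check, a sketch along these lines reproduces them; `overclock_pct` is a hypothetical helper name.

```python
# Factory overclock expressed as a percentage over the reference clock.
# Reference 6870 clocks: 900MHz core, 1050MHz memory (from the spec table).
# The helper name is illustrative only.

def overclock_pct(stock_mhz, overclocked_mhz):
    """Return the clock increase as a percentage of the stock clock."""
    return (overclocked_mhz - stock_mhz) / stock_mhz * 100


if __name__ == "__main__":
    print(f"Core:   {overclock_pct(900, 940):.1f}%")
    print(f"Memory: {overclock_pct(1050, 1150):.1f}%")
```

The core figure works out to a little over 4% and the memory figure to roughly 9.5%, which is in line with the "not much of an overclock" assessment.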

111 Comments


rdriskill - Tuesday, January 25, 2011

Given that it is a lot easier to find a 1920 x 1080 monitor now than it is to find a 1920 x 1200 monitor, would that resolution make more sense to list in these kinds of comparisons? I realise it wouldn't make much of a difference, but it is kind of strange to not see what, at least in my area, is the most common native resolution.

james.jwb - Tuesday, January 25, 2011

wouldn't mind seeing 27" res include at the high end (2560x1440) as up there pushes the cards much harder and could make all the difference between playable and unplayable. I realize this is more work though :)

Ryan Smith - Tuesday, January 25, 2011

As 16:9 monitors have 90% of the resolution of 16:10 monitors, the performance is very similar. We may very well have to switch from 19x12 to 19x10 because 19x12 monitors are becoming so rare, but there's not a lot of benefit in running both resolutions.

The same goes for 25x14 vs. 25x16. Though in that case, 25x16 monitors aren't going anywhere.

Makaveli - Tuesday, January 25, 2011

Great review.

As for the complaints GTFO, is it somehow affecting your manhood that there is an overclocked card in the review?

Some of you really need to get a life!

ctbaars - Tuesday, January 25, 2011

Hey! Don't you talk to Becky that way ....

silverblue - Tuesday, January 25, 2011

It's overclocked, sure, but it's an official AMD product line. If AMD had named it the 6880, I don't think anyone would've questioned it really.

Shadowmaster625 - Tuesday, January 25, 2011

The 6870 has 56 texture units and the 6950 has 88 , or 57% more. Yet if you add up all the scores of each you find that the 6950 is only 8% faster on average. This implies a wasted 45% increase in SPs and/or texture units (which one?), as well as about 800 million wasted transistors. Clearly AMD needed to add more ROPs to the 6950. Also, since the memory clock is faster on the 6950, this implies even more wasted transistors. If both cards had the same exact memory bandwidth, they might very well only be 4% apart in performance! AMD's gpu clearly responds much more favorably to an increase in memory bandwidth than it does to increased texture units. It really looks like they're going off the wheels and into the weeds. What they need is to increase memory bandwidth to 216G/s, and increase their ROP-to-SIMD ratio to around 2:1.

Yes I know about VLIW4... but where is the performance? Improvements should be seen by now. Like what Nvidia did with Civ 5. I'm not seeing anything like that from AMD and we should have been seeing that by now, in spades.

B3an - Wednesday, January 26, 2011

....I like how you've completely missed out the fact that the 6870 is clocked 100MHz higher on the core, and the 6870 Black is 140MHz higher. You list all these other factors, and memory speeds, but dont even mention or realise that the 6870/Black have considerably higher core clocks than the 6950.

Shadowmaster625 - Wednesday, January 26, 2011

It is probably clocked higher because it has almost a billion fewer transistors. Which begs the question.... what the hell are all those extra transistors there for if they do not improve performance?

DarkHeretic - Tuesday, January 25, 2011

This is my first post, i've been reading Anand for at least a year, and this concerned me enough to actually create a user and post.

"For NVIDIA cards all tests were done with default driver settings unless otherwise noted. As for AMD cards, we are disabling their new AMD Optimized tessellation setting in favor of using application settings (note that this doesn’t actually have a performance impact at this time), everything else is default unless otherwise noted."

While i read your concerns about where to draw the line on driver optimisation Ryan, i disagree with your choice to disable select features from one set of drivers to the next. How many PC users play around with these settings apart from the enthusiasts among us striving for extra performance or quality?

Surely it would be far fairer for testing to leave drivers at default settings when benchmarking hardware and / or new sets of drivers? Essentially driver profiles have been tweaking performance for a while now from both AMD and Nvidia, so where to draw the line on altering the testing methodology in "tweaking drivers" to suit?

I'll admit, regardless of whether disabling a feature makes a difference to the results or not, it actually made me stop reading the rest of the review as from my own stance the results have been skewed. No two sets of drivers from AMD or Nvidia will ever be equal (i hope), however deliberately disabling features meant for the benefit of the end users, just seems completely the wrong direction to take.

As you are concerned about where AMD is taking their driver features in this instance, equally i find myself concerned about where you are taking your testing methodology.

I hope you can understand my concerns on this and leave drivers as intended in the future to allow a more neutral review.

Regards