Intel’s Sandy Bridge i7-2820QM: Upheaval in the Mobile Landscape

by Jarred Walton on January 3, 2011 12:00 AM EST

Posted in: Laptops, Intel, Sandy Bridge, Compal

All the Performance, and Good Battery Life As Well!

We’ve just finished showing that CPU and GPU performance has, for the most part, more than doubled compared to last year’s Arrandale offerings. That’s great news, but what happens to battery life? We’ve got 35W TDP Arrandale parts compared to a 45W TDP Sandy Bridge quad-core; doesn’t that mean battery life will decrease by around 25%? The answer is happily no; as we’ve pointed out in the past, TDP isn’t really a useful measurement of power requirements. All the TDP represents in this case is the maximum amount of power Sandy Bridge should draw. So worst-case battery life under full load might drop, but the real question is what happens under typical workloads.
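To make the "around 25%" worry concrete, here is a minimal sketch of the naive estimate, assuming (incorrectly, as the text argues) that average power draw scales linearly with TDP. The function name is hypothetical; only the 35W and 45W figures come from the article.

```python
# Naive estimate only: assumes average draw scales with TDP, which the
# article argues is NOT how real workloads behave.
ARRANDALE_TDP_W = 35.0     # from the article
SANDY_BRIDGE_TDP_W = 45.0  # from the article


def naive_runtime_change(old_tdp_w: float, new_tdp_w: float) -> float:
    """Fractional change in runtime if power draw scaled linearly with TDP."""
    # Runtime = capacity / power, so the runtime ratio is old_power / new_power.
    return old_tdp_w / new_tdp_w - 1.0


change = naive_runtime_change(ARRANDALE_TDP_W, SANDY_BRIDGE_TDP_W)
print(f"Naive runtime change: {change:.1%}")
```

Under that assumption the drop works out to a little over a fifth, which is where the rough 25% figure comes from. It only describes a sustained full-load scenario, not typical use.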

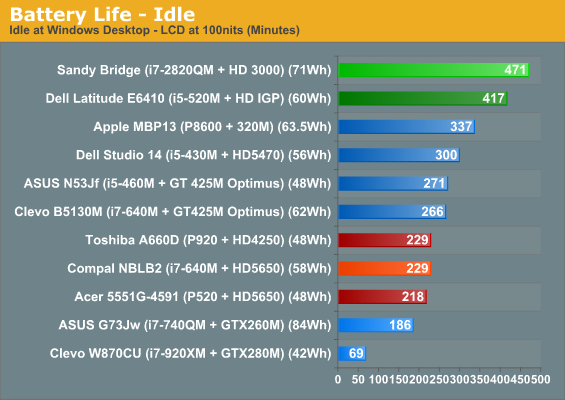

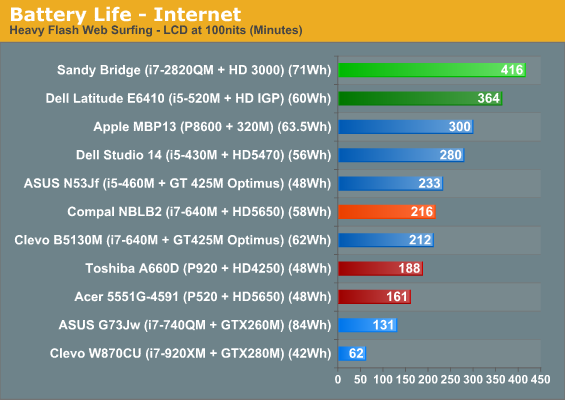

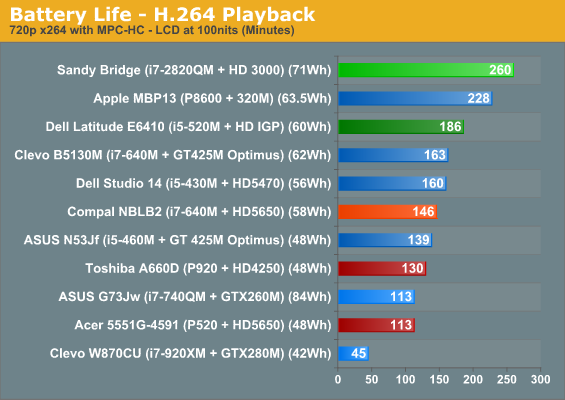

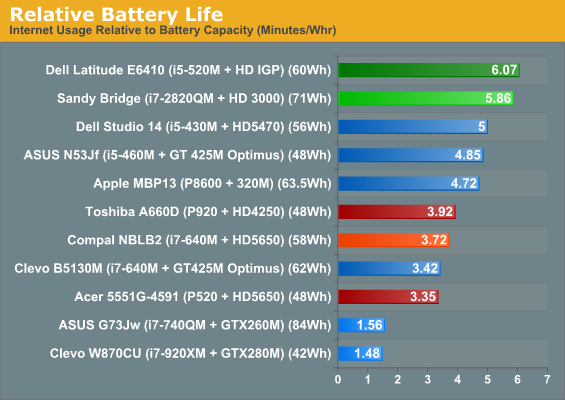

Intel’s power gating and variable clock speeds are put to good use, and the resulting battery life is nothing short of exceptional compared to previous generation products. We’ve seen ULV and Atom netbooks and ultraportables get battery life into the 8+ hour range, but such designs have always required serious compromise in the performance department. SNB certainly won’t beat out Atom for pure battery life, but that doesn’t mean it’s a power hog. Our Compal test system comes with a 71Wh battery, which is larger than what we’ve seen in many 15.6” and smaller designs but still reasonable for a 17.3” chassis. Here are the results of our standard battery life testing.

Yes, those figures are accurate. Best-case, running at 100 nits, quad-core Sandy Bridge still lasted nearly eight hours on a single charge! What’s more interesting is that our standard Internet battery life test that loads four pages with Flash ads every sixty seconds still checks in just shy of seven hours. Finally, H.264 playback also comes in at the top of our charts, providing more than four hours of demanding video playback. If 240 minutes of content off your HDD/SSD isn’t enough, we were also able to watch a Blu-ray disc and still get 220 minutes of 35Mbit VLC playback. Wow!
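One way to see how far typical draw sits below the 45W TDP is to back out the average power implied by each runtime. The sketch below is a rough illustration, assuming the full rated 71Wh is usable and rounding the runtimes from the prose ("nearly eight hours" is treated as 480 minutes, "just shy of seven hours" as 420), so the real draw is slightly higher than shown. Note this is whole-system power (LCD, storage, chipset), not CPU power alone.

```python
BATTERY_WH = 71.0  # Compal test system battery, from the article
TDP_W = 45.0       # quad-core Sandy Bridge TDP, from the article

# Approximate runtimes in minutes, rounded from the figures quoted in the text.
runtimes_min = {
    "idle": 480,      # "nearly eight hours" (rounded up, an assumption)
    "internet": 420,  # "just shy of seven hours" (rounded up, an assumption)
    "h264": 240,
    "bluray": 220,
}


def average_draw_w(capacity_wh: float, runtime_min: float) -> float:
    """Average system power (W) needed to drain capacity_wh in runtime_min."""
    return capacity_wh / (runtime_min / 60.0)


draws = {name: average_draw_w(BATTERY_WH, m) for name, m in runtimes_min.items()}
for name, watts in draws.items():
    print(f"{name:9s} ~{watts:5.1f} W ({watts / TDP_W:.0%} of CPU TDP)")
```

Even the Blu-ray case implies an average system draw below 20W, and idle comes out at roughly 9W, which is why the TDP figure says so little about battery life under these workloads.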

So Sandy Bridge comes out on the top of the above charts, but we didn’t include some of the other long battery life alternatives. Just to put things in perspective, ASUS’ U30JC—with an SSD and an 84Wh battery—has long been our king for matching reasonable performance with long battery life. It managed 588 minutes idle, 476 minutes Internet, and 254 minutes H.264 playback. That’s 25% more idle life, but only 14% better Internet and actually slightly lower H.264 battery life, and you need to factor in the 18% higher capacity battery and 13.3” (versus 17.3”) LCD.
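The capacity adjustment mentioned above can be sketched as follows. This is a minimal illustration using only the ratios and capacities quoted in the text; the helper name is hypothetical, and it deliberately ignores the LCD size difference, which would further favor the smaller U30JC.

```python
U30JC_WH = 84.0   # ASUS U30JC battery, from the article
COMPAL_WH = 71.0  # Sandy Bridge Compal system battery, from the article

# U30JC runtime relative to the Sandy Bridge system, as quoted in the text.
u30jc_runtime_ratio = {"idle": 1.25, "internet": 1.14}

capacity_ratio = U30JC_WH / COMPAL_WH  # the "18% higher capacity battery"


def per_wh_ratio(runtime_ratio: float, cap_ratio: float) -> float:
    """Runtime per Wh of the U30JC relative to the Sandy Bridge system."""
    return runtime_ratio / cap_ratio


normalized = {k: per_wh_ratio(v, capacity_ratio) for k, v in u30jc_runtime_ratio.items()}

# Absolute efficiency for the U30JC, in minutes of runtime per Wh.
u30jc_min_per_wh = {
    "idle": 588 / U30JC_WH,
    "internet": 476 / U30JC_WH,
    "h264": 254 / U30JC_WH,
}

for test, ratio in normalized.items():
    print(f"{test:9s} U30JC per-Wh runtime vs. SNB: {ratio:.2f}x")
```

Once capacity is factored out, the U30JC's idle advantage shrinks to a few percent and the Internet result tips slightly in favor of the Sandy Bridge system.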

We have to wonder just how small of a form factor manufacturers can manage to cram the quad-core Sandy Bridge into. Idle and low usage power requirements are clearly very good, but with maximum TDP still at 45W the chassis needs to be able to handle the heat. We’d really love to see some 14” designs with quad-core CPUs, and the icing on the cake would be sticking a reasonably fast discrete GPU with graphics switching technology into the case as well. Intel doesn’t have any LV/ULV quad-core parts listed—yet!—so we may have to wait for ultraportable quad-core laptops, but certainly 15.6” designs should be able to combine SNB with reasonably fast Optimus GPUs to provide an optimal blend of performance and mobility.

66 Comments


skywalker9952 - Monday, January 3, 2011 - link

For your CPU specific benchmarks you annotate the CPU and GPU. I believe the HDD or SSD plays a much larger role in those benchmarks than a GPU. Would it not be more appropriate to annotate the storage device used? Were all of the CPUs in the comparison paired with SSDs? If they weren't, how much would that affect the benchmarks?

JarredWalton - Monday, January 3, 2011 - link

The SSD is a huge benefit to PCMark, and since this is laptop testing I can't just use the same image on each system. Anand covers the desktop side of things, but I include PCMark mostly for the curious. I could try and put which SSD/HDD each notebook used, but then the text gets to be too long and the graph looks silly. Heh.

For the record, the SNB notebook has a 160GB Intel G2 SSD. The desktop uses a 120GB Vertex 2 (SF-1200). W870CU is an 80GB Intel G1 SSD. The remaining laptops all use HDDs, mostly Seagate Momentus 7200.4 I think.

Macpod - Tuesday, January 4, 2011 - link

the synthetics benchmarks are all run at turbo frequencies. the scores from the 2.3ghz 2820qm is almost the same as the 3.4ghz i7 2600k. this is because the 2820qm is running at 3.1ghz under cinebench.

no one knows how long this turbo frequency lasts. maybe just enough to finish cinebench!

this review should be re done

Althernai - Tuesday, January 4, 2011 - link

It probably lasts forever given decent cooling so the review is accurate, but there is something funny going on here: the score for the 2820QM is 20393 while the score in the 2600K review is 22875. This would be consistent with a difference between CPUs running at 3.4GHz and 3.1GHz, but why doesn't the 2600K Turbo up to 3.8GHz? The claim is that it can be effortlessly overclocked to 4.4GHz so we know the thermal headroom is there.

JarredWalton - Tuesday, January 4, 2011 - link

If you do continual heavy-duty CPU stuff on the 2820QM, the overall score drops about 10% on later runs in Cinebench and x264 encoding. I mentioned this in the text: the CPU starts at 3.1GHz for about 10 seconds, then drops to 3.0GHz for another 20s or so, then 2.9 for a bit and eventually settles in at 2.7GHz after 55 seconds (give or take). If you're in a hotter testing environment, things would get worse; conversely, if you have a notebook with better cooling, it should run closer to the maximum Turbo speeds more often.

Macpod, disabling Turbo is the last thing I would do for this sort of chip. What would be the point, other than to show that if you limit clock speeds, performance will go down (along with power use)? But you're right, the whole review should be redone because I didn't mention enough that heavy loads will eventually drop performance about 10%. (Or did you miss page 10: "Performance and Power Investigated"?)

lucinski - Tuesday, January 4, 2011 - link

Just like any other low-end GPU (integrated or otherwise) I believe most users would rely on the HD3000 just for undemanding games in the category of which I would mention Civilization IV and V or FIFA / PES 11. This goes to say that I would very much like to see how the new Intel graphics fares in these games, should they be available in the test lab of course.

I am not necessarily worried about the raw performance, clearly the HD3000 has the capacity to deliver. Instead, the driver maturity may come out as an obstacle. Firstly one has to consider the fact that Intel traditionally has problems with GPU driver design (relative to their competitors). Secondly, should at one point Intel be able to repair (some of) the rendering issues mentioned in this article or elsewhere, notebook producers still take their sweet time before supplying users with new driver versions.

In this context I am genuinely concerned about the HD3000 goodness. The old GMA HD + Radeon 5470 combination still seems tempting. Strictly referring to the gaming aspect I honestly prefer reliability and a few FPS' missing rather than the aforementioned risks.

NestoJR - Tuesday, January 4, 2011 - link

So, when Apple starts putting these in Macbooks, I'd assume the battery life will easily eclipse 10 hours under light usage, maybe 6 hours under medium usage ??? I'm no fanboy but I'll be in line for that ! My Dell XPS M1530's 9-cell battery just died, I can wait a few months =]

JarredWalton - Tuesday, January 4, 2011 - link

I'm definitely interested in seeing what Apple can do with Sandy Bridge! Of course, they might not use the quad-core chips in anything smaller than the MBP 17, if history holds true. And maybe the MBP13 will finally make the jump to Arrandale? ;-)

heffeque - Wednesday, January 5, 2011 - link

Yeah... Saying that the nVidia 320M is consistently slower than the HD3000 when comparing a CPU from 2008 and a CPU from 2011...

Great job comparing GPUs! (sic)

A more intelligent thing to say would have been: a 2008 CPU (P8600) with an nVidia 320M is consistently slightly slower than a 2011 CPU (i7-2820QM) with HD3000, don't you think?

That would make more sense.

Wolfpup - Wednesday, January 5, 2011 - link

That's the only thing I care about with these-and as far as I'm aware, the jump isn't anything special. It's FAR from the "tock" it supposedly is, going by earlier Anandtech data. (In fact the "tick/tock" thing seems to have broken down after just one set of products...)

This sounds like it is a big advantage for me...but only because Intel refused to produce quad core CPUs at 32nm, so these by default run quite a bit faster than the last gen chips.

Otherwise it sounds like they're wasting 114 million transistors that I want spent on the CPU-whether it's more cache, more, more functional units, another core (if that's possible in 114 million transistors) etc.

I absolutely do NOT want Intel's garbage, incompatible graphics. I do NOT want the additional complexity, performance hit, and software complexity of Optimus or the like. I want a real GPU, functioning as a real GPU, with Intel's garbage completely shut off at all times.

I hope we'll see that in mid range and high end notebooks, or I'm going to be very disappointed.