The Sandy Bridge Review: Intel Core i7-2600K, i5-2500K and Core i3-2100 Tested

by Anand Lal Shimpi on January 3, 2011 12:01 AM EST

Resolution Scaling with Intel HD Graphics 3000

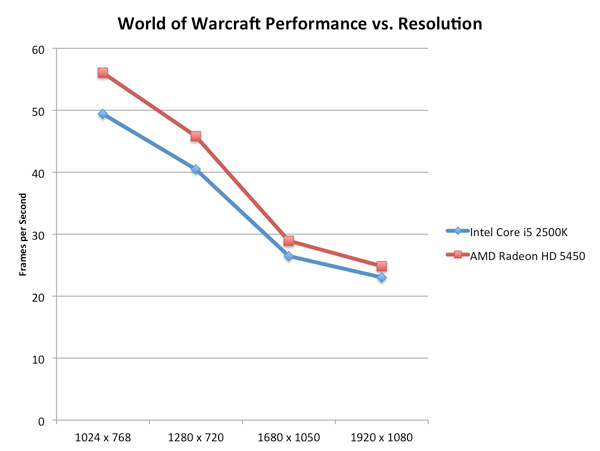

All of our tests on the previous page were done at 1024x768, but how much of a hit do you really get when you push higher resolutions? Does the gap widen between a discrete GPU and Intel's HD Graphics as you increase resolution?

On the contrary: low-end GPUs run into memory bandwidth limitations just as quickly as (if not more quickly than) Intel's integrated graphics. Spend about $70 and you'll see a wider gap, but if you pit Intel's HD Graphics 3000 against a Radeon HD 5450, the two actually get closer in performance as resolution increases, at least in memory bandwidth bound scenarios:

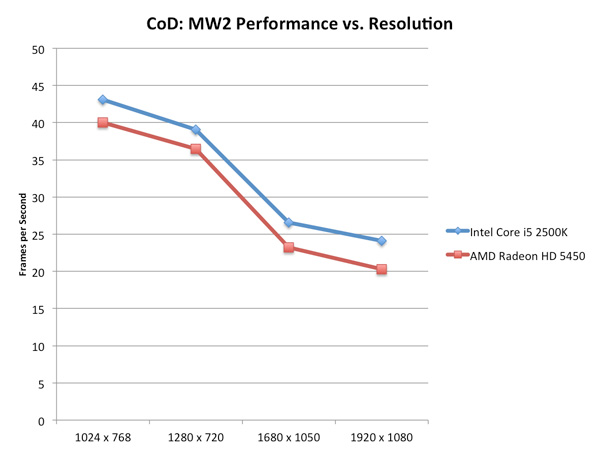

Call of Duty: Modern Warfare 2 stresses compute a bit more at higher resolutions and thus the performance gap widens rather than closes:

For the most part, at low quality settings, Intel's HD Graphics 3000 scales with resolution similarly to a low-end discrete GPU.

Graphics Quality Scaling

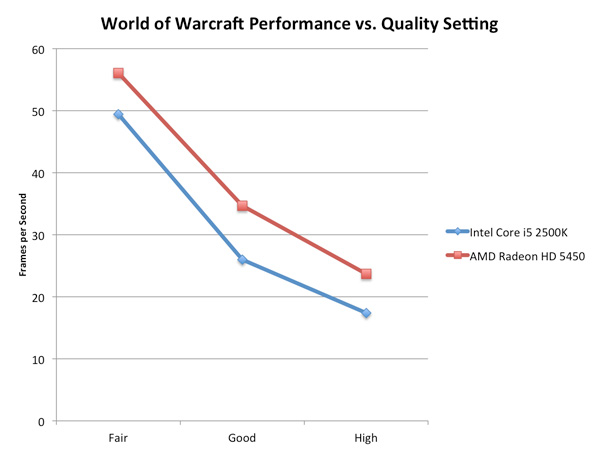

The biggest issue with integrated and any sort of low-end graphics is that you have to run games at absurdly low quality settings to avoid dropping below smooth frame rates. The impact of going to higher quality settings is much greater on Intel's HD Graphics 3000 than on a discrete card, as you can see in the chart below.

The performance gap between the two is actually at its widest at WoW's "Good" quality settings. Moving beyond that, however, shrinks the gap a bit as the Radeon HD 5450 runs into memory bandwidth/compute bottlenecks of its own.

283 Comments


karlostomy - Thursday, January 6, 2011 - link

what the hell is the point of posting gaming scores at resolutions that no one will be playing at?

If i am not mistaken, the graphics cards in the test are:

eVGA GeForce GTX 280 (Vista 64)

ATI Radeon HD 5870 (Windows 7)

MSI GeForce GTX 580 (Windows 7)

So then, with a sandybridge processor, these resolutions are irrelevant.

1080p or above should be standard resolution for modern setup reviews.

Why, Anand, have you posted irrelevant resolutions for the hardware tested?

dananski - Thursday, January 6, 2011 - link

Games are usually limited in fps by the level of graphics, so processor speed doesn't make much of a difference unless you turn the graphics detail right down and use an overkill graphics card. As the point of this page was to review the CPU power, it's more representative to use low resolutions so that the CPU is the limiting factor.

If you did this set of charts for gaming at 2560x1600 with full AA & max quality, all the processors would be stuck at about the same rate because the graphics card is the limiting factor.

I expect Civ 5 would be an exception to this because it has really counter-intuitive performance.

omelet - Tuesday, January 11, 2011 - link

For almost any game, the resolution will not affect the stress on the CPU. It is no harder for a CPU to play the game at 2560x1600 than it is to play at 1024x768, so to ensure that the benchmark is CPU-limited, low resolutions are chosen.

For instance, the i5 2500k gets ~65fps in the Starcraft test, which is run at 1024x768. The i5 2500k would also be capable of ~65fps at 2560x1600, but your graphics card might not be at that resolution.

Since this is a review for a CPU, not for graphics cards, the lower resolution is used, so we know what the limitation is for just the CPU. If you want to know what resolution you can play at, look at graphics card reviews.
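The reasoning in the comments above can be sketched as a simple bottleneck model, in which the observed frame rate is whichever of the CPU or GPU limits is lower. This is only a rough illustration, not how the review's numbers were produced: the ~65 fps CPU figure is the one quoted above, while the GPU limits per resolution and the `observed_fps` helper are hypothetical values and names chosen for the example.

```python
def observed_fps(cpu_fps_cap: float, gpu_fps_cap: float) -> float:
    """Simplified model: the slower of the two components sets the frame rate."""
    return min(cpu_fps_cap, gpu_fps_cap)


# ~65 fps is the i5 2500K Starcraft figure quoted in the comment above.
CPU_CAP = 65.0

# Hypothetical GPU limits per resolution (assumed values, not measured data).
gpu_caps = {"1024x768": 200.0, "2560x1600": 40.0}

for resolution, gpu_cap in gpu_caps.items():
    fps = observed_fps(CPU_CAP, gpu_cap)
    limiter = "CPU" if CPU_CAP <= gpu_cap else "GPU"
    print(f"{resolution}: {fps:.0f} fps ({limiter}-limited)")
```

Under these assumed numbers, the low resolution exposes the CPU's limit, while the high resolution hides it behind the graphics card, which is why a CPU review benchmarks at the lower setting.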

Tom - Sunday, January 30, 2011 - link

Which is why the tests have limited real world value. Skewing the tests to maximize the cpu differences makes new cpus look impressive, but it doesn't show the reality that the new cpu isn't needed in the real world for most games.

Oyster - Monday, January 3, 2011 - link

Maybe I missed this in the review, Anand, but can you please confirm that SB and SB-E will require quad-channel memory? Additionally, will it be possible to run dual-channel memory on these new motherboards? I guess I want to save money because I already have 8GB of dual-channel RAM :).

Thanks for the great review!

CharonPDX - Monday, January 3, 2011 - link

You can confirm it from the photos of it only using two DIMMs in photo.

JumpingJack - Monday, January 3, 2011 - link

This has been discussed in great detail. The i7, i3, and i5 2XXX series is dual channel. The rumor mill is abound with SB-E having quad channel, but I don't recall seeing anything official from Intel on this point.

8steve8 - Monday, January 3, 2011 - link

the K processors have the much better IGP and a variable multiplier, but to use the improved IGP you need an H67 chipset, which doesn't support changing the multiplier?

ViRGE - Monday, January 3, 2011 - link

CPU Multiplier: Yes, H67 cannot change the CPU multiplier

GPU Multiplier: No, even H67 can change the GPU multiplier

mczak - Monday, January 3, 2011 - link

I wonder why though? Is this just officially? I can't really see a good technical reason why CPU OC would work with P67 but not H67 - it is just turbo going up some more steps after all. Maybe board manufacturers can find a way around that?

Or is this not really linked to the chipset but rather if the IGP is enabled (which after all also is linked to turbo)?