The Sandy Bridge Review: Intel Core i7-2600K, i5-2500K and Core i3-2100 Tested

by Anand Lal Shimpi on January 3, 2011 12:01 AM EST

Resolution Scaling with Intel HD Graphics 3000

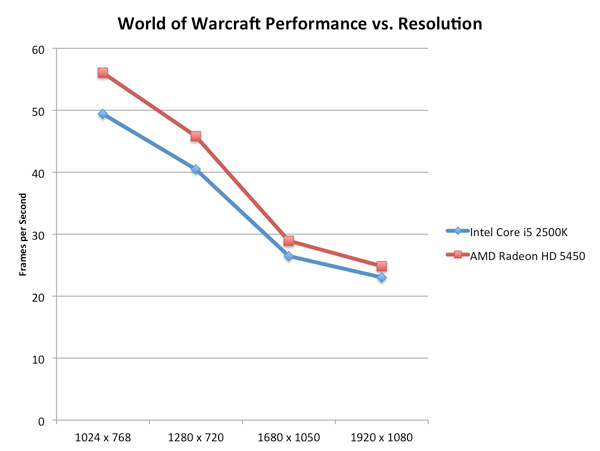

All of our tests on the previous page were done at 1024x768, but how much of a hit do you really get when you push higher resolutions? Does the gap widen between a discrete GPU and Intel's HD Graphics as you increase resolution?

On the contrary: low-end GPUs run into memory bandwidth limitations just as quickly as (if not more quickly than) Intel's integrated graphics. Spend about $70 and you'll see a wider gap, but if you pit Intel's HD Graphics 3000 against a Radeon HD 5450, the two actually get closer in performance as resolution increases, at least in memory bandwidth bound scenarios:

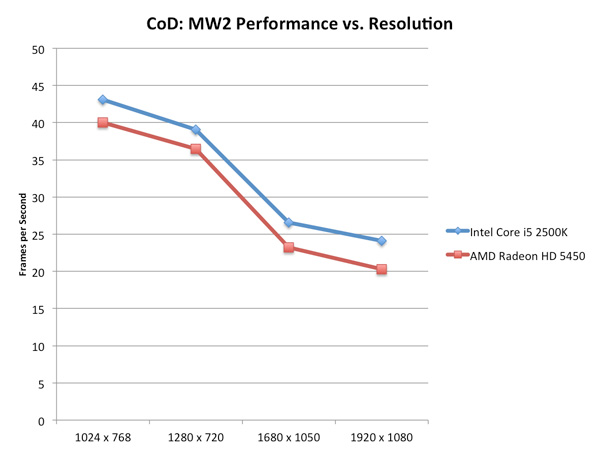

Call of Duty: Modern Warfare 2 stresses compute a bit more at higher resolutions and thus the performance gap widens rather than closes:

For the most part, at low quality settings, Intel's HD Graphics 3000 scales with resolution similarly to a low-end discrete GPU.
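To put the resolution steps in perspective, here is a minimal sketch of how much more work each step asks of the GPU in raw pixel terms. The 1024x768 baseline comes from the previous page; the other two resolutions are illustrative assumptions and not necessarily the ones charted here. The helper name `pixel_ratio` is made up for this example.

```python
# Pixel counts relative to the 1024x768 baseline used on the previous page.
# The higher resolutions below are illustrative assumptions, not necessarily
# the exact ones charted in this review.
BASELINE = (1024, 768)
RESOLUTIONS = [(1024, 768), (1280, 1024), (1680, 1050)]


def pixel_ratio(resolution, baseline=BASELINE):
    """Return how many times more pixels `resolution` has than `baseline`."""
    width, height = resolution
    base_width, base_height = baseline
    return (width * height) / (base_width * base_height)


for width, height in RESOLUTIONS:
    ratio = pixel_ratio((width, height))
    print(f"{width}x{height}: {width * height:,} pixels, {ratio:.2f}x baseline")
```

Pixel count is only a rough proxy. Frame rate rarely falls in exact proportion to it, which is why the measured charts matter more than the arithmetic.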

Graphics Quality Scaling

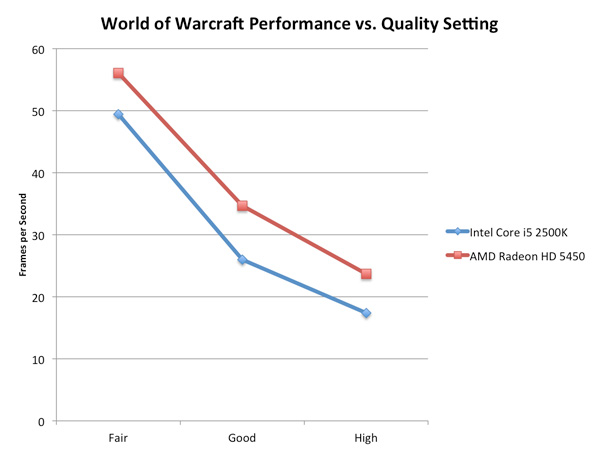

The biggest issue with integrated graphics, and with low-end graphics of any sort, is that you have to run games at absurdly low quality settings to keep frame rates smooth. The impact of going to higher quality settings is much greater on Intel's HD Graphics 3000 than on a discrete card, as you can see in the chart below.

The performance gap between the two is actually at its widest at WoW's "Good" quality settings. Moving beyond that, however, shrinks the gap a bit as the Radeon HD 5450 runs into memory bandwidth/compute bottlenecks of its own.

283 Comments


vol7ron - Monday, January 3, 2011 - link

I'm also curious if there will be a hybrid P/H type mobo that will allow for OC'ing all components.

sviola - Monday, January 3, 2011 - link

Yes. There will be a Z series to be released in the 2Q11.

dacipher - Monday, January 3, 2011 - link

The Core i5-2500K was just what i was looking for. Performance/ Price is where it needs to be and overclocking should be a breeze.

vol7ron - Monday, January 3, 2011 - link

I agree.

"As an added bonus, both K-series SKUs get Intel’s HD Graphics 3000, while the non-K series SKUs are left with the lower HD Graphics 2000 GPU."

Doesn't it seem like Intel has this backwards? For me, I'd think to put the 3000 on the lesser performing CPUs. Users will probably have their own graphics to use with the unlocked procs, whereas the limit-locked ones will more likely be used in HTPC-like machines.

DanNeely - Monday, January 3, 2011 - link

This seems odd to me unless they're having yield problems with the GPU portion of their desktop chips. That doesn't seem too likely though because you'd expect the mobile version to have the same problem but they're all 12 EU parts. Perhaps they're binning more aggressively on TDP, and only had enough chips that met target with all 12 EUs to offer them at the top of the chart.

dananski - Monday, January 3, 2011 - link

I agree with both of you. This should be the ultimate upgrade for my E8400, but I can't help thinking they could've made it even better if they'd used the die space for more CPU and less graphics and video decode. The Quick Sync feature would be awesome if it could work while you're using a discrete card, but for most people who have discrete graphics, this and the HD Graphics 3000 are a complete waste of transistors. I suppose they're power gated off so the thermal headroom could maybe be used for overclocking.

JE_Delta - Monday, January 3, 2011 - link

WOW........Great review guys!

vol7ron - Monday, January 3, 2011 - link

Great review, but does anyone know how often 1 active core is used? I know this is a matter of subjection, but if you're running an anti-virus and have a bunch of standard services running in the background, are you likely to use only one core when idling?

What should I advise people, as consumers, to really pay attention to? I know when playing games such as Counter-Strike or Battlefield: Bad Company 2, my C2D maxes out at 100%, I assume both cores are being used to achieve the 100% utilization. I'd imagine that in this age, hardly ever will there be a time to use just one core; probably 2 cores at idle.

I would think that the 3-core figures are where the real noticeable impact is, especially in turbo, when gaming/browsing. Does anyone have any more perceived input on this?

dualsmp - Monday, January 3, 2011 - link

What resolution is tested under Gaming Performance on pg. 20?

johnlewis - Monday, January 3, 2011 - link

According to Bench, it looks like he used 1680×1050 for L4D, Fallout 3, Far Cry 2, Crysis Warhead, Dragon Age Origins, and Dawn of War 2, and 1024×768 for StarCraft 2. I couldn't find the tested resolution for World of Warcraft or Civilization V. I don't know why he didn't list the resolutions anywhere in the article or the graphs themselves, however.