AMD's Radeon HD 6970 & Radeon HD 6950: Paving The Future For AMD

by Ryan Smith on December 15, 2010 12:01 AM EST

Cayman: The Last 32nm Castaway

With the launch of the Barts GPU and the 6800 series, we touched on the fact that AMD was counting on the 32nm process to give them a half-node shrink to take them into 2011. When TSMC fell behind schedule on the 40nm process, and then on the 32nm process before canceling it outright, AMD had to start moving on plans for a new generation of 40nm products instead.

The 32nm predecessor of Barts was among the earlier projects to be sent to 40nm. This was due to the fact that before 32nm was even canceled, TSMC’s pricing was going to make 32nm more expensive per transistor than 40nm, a problem for a mid-range part where AMD has specific margins they’d like to hit. Had Barts been made on the 32nm process as projected, it would have been more expensive to make than on the 40nm process, even though the 32nm version would be smaller. Thus 32nm was uneconomical for gaming GPUs, and Barts was moved to the 40nm process.

Cayman on the other hand was going to be a high-end part. Certainly being uneconomical is undesirable, but high-end parts carry high margins, especially if they can be sold in the professional market as compute products (just ask NVIDIA). As such, while Barts went to 40nm, Cayman’s predecessor stayed on the 32nm process until the very end. The Cayman team did begin planning to move back to 40nm before TSMC officially canceled the 32nm process, but if AMD had a choice at the time they would have rather had Cayman on the 32nm process.

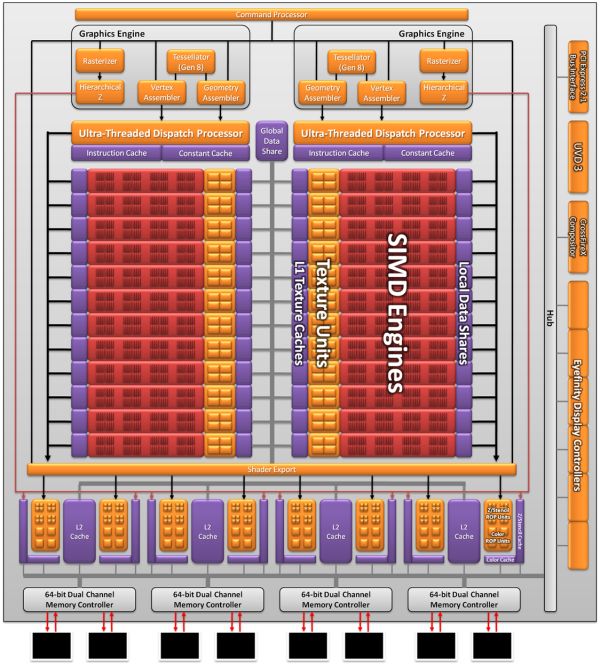

As a result the Cayman we’re seeing today is not what AMD originally envisioned as a 32nm part. AMD won’t tell us everything that they had to give up to create the 40nm Cayman (there have to be a few surprises for 28nm) but we do know a few things. First and foremost was size; AMD’s small die strategy is not dead, but getting the boot from the 32nm process does take the wind out of it. At 389mm² Cayman is the largest AMD GPU since the disastrous R600, and well off the sub-300mm² size that the small die strategy dictates. In terms of efficient usage of space though AMD is doing quite well; Cayman has 2.64 billion transistors, 500 million more than Cypress. AMD was able to pack 23% more transistors in only 16% more space.
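The transistor and die-size comparison above can be checked with a few lines of arithmetic. This is a rough sketch rather than anything from AMD: the Cayman figures and the "500 million more than Cypress" gap come from the text, while the Cypress die area of 334mm² is an assumption (the commonly cited figure, not stated in this section), and all variable and function names are illustrative.

```python
# Cayman figures quoted in the article.
CAYMAN_TRANSISTORS_M = 2640   # 2.64 billion, expressed in millions
CAYMAN_AREA_MM2 = 389

# Cypress transistor count is derived from "500 million more than Cypress".
CYPRESS_TRANSISTORS_M = CAYMAN_TRANSISTORS_M - 500
# ASSUMPTION: Cypress die area is not given in this section; 334 mm^2 is the
# commonly cited figure and is used here only for illustration.
CYPRESS_AREA_MM2 = 334


def percent_increase(new, old):
    """Return how much larger `new` is than `old`, in percent."""
    return (new / old - 1.0) * 100.0


transistor_growth = percent_increase(CAYMAN_TRANSISTORS_M, CYPRESS_TRANSISTORS_M)
area_growth = percent_increase(CAYMAN_AREA_MM2, CYPRESS_AREA_MM2)

# Density in millions of transistors per square millimetre.
cayman_density = CAYMAN_TRANSISTORS_M / CAYMAN_AREA_MM2
cypress_density = CYPRESS_TRANSISTORS_M / CYPRESS_AREA_MM2

print(f"Transistor growth: {transistor_growth:.1f}%")
print(f"Die area growth:   {area_growth:.1f}%")
print(f"Density: {cypress_density:.2f} -> {cayman_density:.2f} Mtransistors/mm^2")
```

Under these inputs the transistor count grows faster than the die area, which is the sense in which the 40nm Cayman uses its space more efficiently than Cypress did.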

Even then, just reaching that die size is a compromise between features and production costs. AMD didn’t simply settle for a larger GPU; they also had to give up some things to keep it from being even larger. SIMDs were on the chopping block; 32nm Cayman would have had more SIMDs for more performance. Features were also lost, and this is where AMD is keeping mum. We know PCI Express 3.0 functionality was scheduled for the 32nm part, but AMD had to give up their PCIe 3.0 controller for a smaller 2.1 controller to help make up the die size difference. This in all honesty may have worked out better for them: PCIe 3.0 ended up being delayed until November, so suitable motherboards are still at least months away.

The end result is that Cayman as we know it is a compromise to make it happen on 40nm. AMD got their new VLIW4 architecture, but they had to give up performance and an unknown number of features to get there. On the flip side this will make 28nm all the more interesting, as we’ll get to see many of the features that were supposed to make it for 2010 but never arrived.

168 Comments


Ryan Smith - Wednesday, December 15, 2010 - link

Exactly the same as on Cypress.

L2: 128KB per ROP block (so 512KB)

L1: 8KB per SIMD

LDS: 32KB per SIMD

GDS: 64KB

http://images.anandtech.com/doci/4061/MidLevelView...

I don't have the register file size readily available.

DanNeely - Wednesday, December 15, 2010 - link

How likely is the decrease from 2 to 1 operations per clock to affect real world applications?

yeraldin37 - Wednesday, December 15, 2010 - link

My current cards are running at 870Mhz(GPU) and 1100Mhz(clock), faster than stock 5870, those benchmarks for new 6970 are really disappointing, I was seriously expecting to get a single 6970 for Christmas to replace my 5850OC CF cards and make room for additional cards or even have a free pcie to plug my gtx460 for physx capability. I was going to be happy to get at least 80% of my current 5850CF setup from new 6970. what a joke! I will not make any move and wait for upcoming next generation 28nm amd GPU's. We have to be fair and mention all great efforts from AMD team to bring new technology to newest radeon cards, however not enough performance for die hard gamers. If gtx 580 were 20% cheaper I might consider to buy one, I personally never ever pay more than $400 for one(1) video card.

Nfarce - Wednesday, December 15, 2010 - link

Reading Tom's Hardware they essentially slam AMD's marketing these cards as a 570-580 beater. Guru3D is also less than friendly. Interestingly, *both* sites have benches showing the 570 and 580 beating the 6950 and 6970 commandingly. What's up with that exactly?

fausto412 - Wednesday, December 15, 2010 - link

it's called AMD didn't deliver on the hype...they deserve to get slammed.

medi01 - Wednesday, December 15, 2010 - link

AMD delivers cards with better performance/price ratio that also consume less power. How come there is a reason to "slam", eh?

zst3250 - Friday, December 31, 2010 - link

Off yourself cretin, preferably by getting your cranium kicked in.

Mr Perfect - Thursday, December 16, 2010 - link

Wait, is Tom's reputable again? Haven't read that site since the Athlon XP was new....

AnnonymousCoward - Wednesday, December 15, 2010 - link

As a 30" owner and gamer, I would never run at 2560x1600 with AA enabled if that causes <60fps. I'd disable AA. Who wouldn't value framerate over AA? So when the fps is <60, please compare cards at 2560x1600 without AA, so that I'm able to apply the results to a purchase decision.

SimpJee - Wednesday, December 15, 2010 - link

Greetings, also a 30'' gamer. If you see the FPS above 30 with AA enabled, you can assume it will be (much) higher without it enabled so what's the point in actually having the author bench it without AA? Plus, anything above 30 FPS is just icing on the cake as far as I'm concerned.