10G Ethernet: More Than a Big Pipe

by Johan De Gelas on November 24, 2010 2:34 PM EST

Posted in: IT Computing, Networking, 10G Ethernet

Cleaning Up the Cable Mess

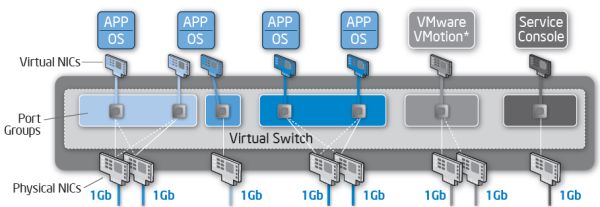

Between 6 and 12 I/O cables on one server is quite a bit. You end up with a complicated NIC configuration as illustrated in this VMware/Intel white paper.

The white paper does not consider the storage I/O, but if you use two FC cards, you are adding another 14W (7W x 2) and two cables. So if we take such a heavy consolidated server as an example you end up with about:

- 10 I/O Cables (without KVM and Server management)

- 2 quad-port NICs x 5W + 2 FC cards x 7W = 24W

24W is not enormous, but in reality this is the best case. Dual socket servers typically need between 200 and 350W, quad socket servers about 250 to 500W in total. So the I/O power consumption is about 5 to 15% of the total power consumption.
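The arithmetic above can be checked with a few lines of Python. This is a minimal sketch that uses only the figures quoted in the article (5W per quad-port NIC, 7W per FC card, 200 to 500W per server). The function names are illustrative, and it assumes one cable per port and a single port per FC card.

```python
# Figures quoted in the article; everything else here is illustrative.
QUAD_PORT_NIC_W = 5   # watts per quad-port GbE NIC
FC_CARD_W = 7         # watts per FC card
PORTS_PER_NIC = 4     # quad-port NIC, assuming one cable per port
PORTS_PER_FC = 1      # assumption: single-port FC cards


def io_power_watts(nics: int = 2, fc_cards: int = 2) -> int:
    """Total power drawn by the I/O cards, in watts."""
    return nics * QUAD_PORT_NIC_W + fc_cards * FC_CARD_W


def io_cables(nics: int = 2, fc_cards: int = 2) -> int:
    """Number of I/O cables, excluding KVM and server management."""
    return nics * PORTS_PER_NIC + fc_cards * PORTS_PER_FC


def io_share_percent(io_watts: float, server_watts: float) -> float:
    """I/O power as a percentage of total server power."""
    return 100.0 * io_watts / server_watts


io_w = io_power_watts()
print(f"I/O cables: {io_cables()}, I/O power: {io_w}W")

# Server power ranges quoted in the article.
for label, total_w in [
    ("dual socket, 200W", 200),
    ("dual socket, 350W", 350),
    ("quad socket, 250W", 250),
    ("quad socket, 500W", 500),
]:
    print(f"{label}: {io_share_percent(io_w, total_w):.1f}% of total power")
```

With the 24W best case, the share works out to roughly 5% of a 500W quad socket server and 12% of a 200W dual socket server. The upper end of the range quoted in the article presumably allows for configurations that draw more than this best case.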

The 10 I/O cables are a bigger problem. The more ports and cables, the higher the chances are that something gets badly configured and the harder it gets to troubleshoot. It does not take much imagination to see that this kind of cabling might waste a lot of sysadmin time and thus money.

The biggest problem is of course cost. Fibre Channel cabling is not cheap, but it is nothing compared to the huge cost of FC HBAs, FC switches, and SFPs. Cabling up to eight Ethernet cables per server is not cheap either: while the cable cost is negligible, someone has to do the pulling and plugging and that task isn't free.

38 Comments

Kahlow - Friday, November 26, 2010

Great article! The argument between fiber and 10gig E is interesting, but from what I have seen it is so application and workload dependent that you would need a 100-page review to figure out which medium is better for which workload. Also, in most cases your disk arrays are the real bottleneck, and maxing out your 10gig E or your FC isn't the issue.

It is good to have a reference point though and to see what 10gig translates to under testing.

Thanks for the review,

JohanAnandtech - Friday, November 26, 2010

Thanks. I agree that it highly depends on the workload. However, there are lots and lots of smaller setups out there that are now using unnecessarily complicated and expensive configurations (several physically separated GbE and FC networks). One of our objectives was to show that there is an alternative. As many readers have confirmed, dual 10GbE can be a great solution if you're not running some massive databases.

pablo906 - Friday, November 26, 2010

It's free and you can get it up and running in no time. It's gaining a tremendous number of users because of the recent Virtual Desktop licensing program Citrix pushed. You could double your XenApp (MetaFrame Presentation Server) license count and upgrade them to XenDesktop for a very low price, cheaper than buying additional XenApp licenses. I know of at least 10 very large organizations that are testing XenDesktop and preparing rollouts right now. What gives? VMware is not the only hypervisor out there.

wilber67 - Sunday, November 28, 2010

Am I missing something in some of the comments? Many are discussing FCoE, and I do not believe any of the NICs tested were CNAs, just 10GE NICs.

FCoE requires a CNA (Converged Network Adapter). Also, you cannot connect them to a garden-variety 10GE switch and use FCoE. And don't forget that you cannot route FCoE.

gdahlm - Sunday, November 28, 2010

You can use software initiators on switches which support 802.3X flow control. Many web managed switches do support 802.3X, as do most 10GE adapters. I am unsure how that would affect performance in a virtualized shared environment, as I believe it pauses at the port level.

If your workload is not storage or network bound it would work, but I am betting that when you hit that hard knee in your performance curve, things get ugly pretty quickly.

DyCeLL - Sunday, December 5, 2010

Too bad HP Virtual Connect couldn't be tested (a blade option). It splits the 10Gb NICs into a maximum of 8 NICs for the blades. It can do this for fiber and Ethernet.

Check: http://h18004.www1.hp.com/products/blades/virtualc...

James5mith - Friday, February 18, 2011

I still think that 40Gbps Infiniband is the best solution. By far it seems to be the best $/Gbps ratio of any of the platforms. Not to mention it can pass pretty much any traffic type you want.

saah - Thursday, March 24, 2011

I loved the article. I just remembered that VMware recently published official drivers for ESX4: http://downloads.vmware.com/d/details/esx4x_intel_...

The ixgbe version is 3.1.17.1.

Since the post says that it "enables support for products based on the Intel 82598 and 82599 10 Gigabit Ethernet Controllers," I would like to see the test redone with an 82599-based card and recent drivers.

Would it be feasible?