ZFS - Building, Testing, and Benchmarking

by Matt Breitbach on October 5, 2010 4:33 PM EST

Posted in: IT Computing, Linux, NAS, Nexenta, ZFS

Benchmarks

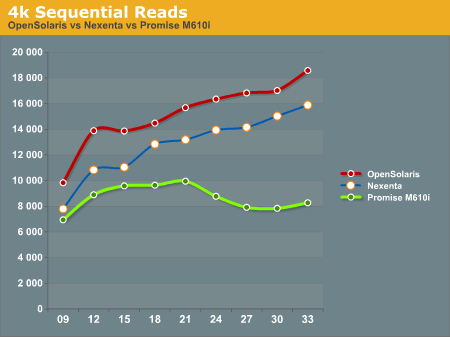

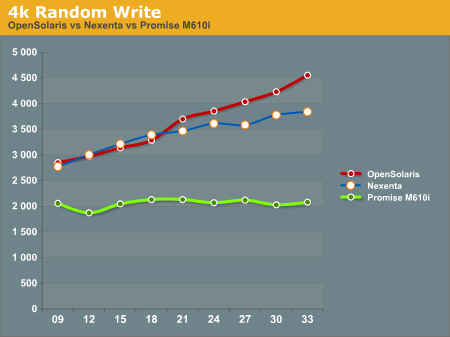

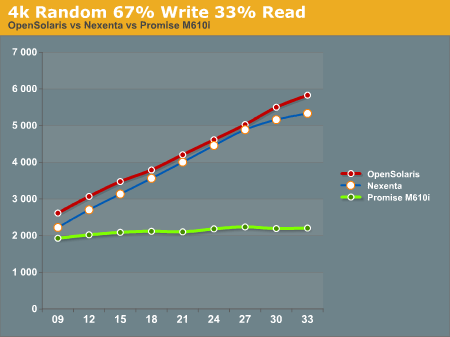

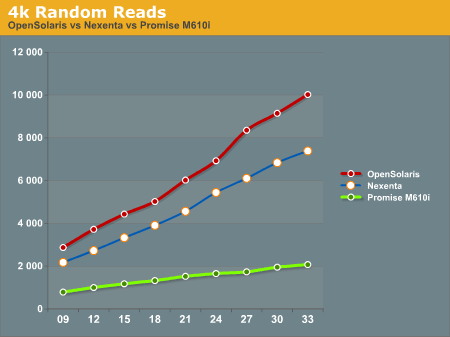

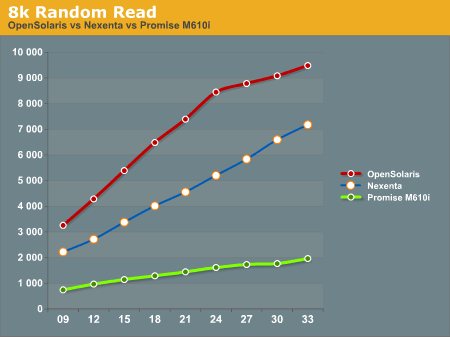

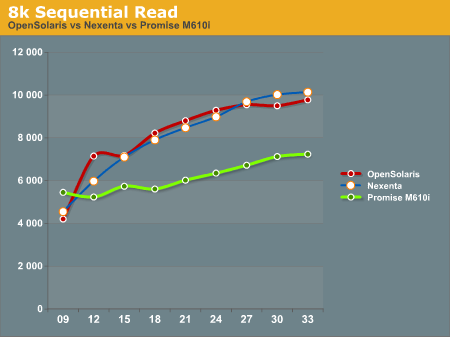

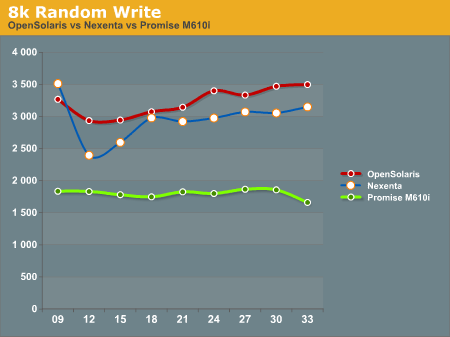

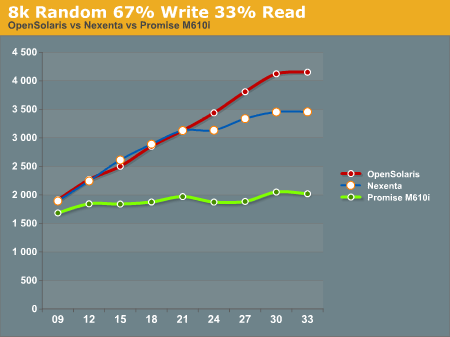

After running our tests on the ZFS system (under both Nexenta and OpenSolaris) and the Promise M610i, we came up with the following results. All graphs show IOPS on the Y-axis and disk queue length on the X-axis.
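Because the graphs report IOPS at block sizes from 4k to 32k, it can help to convert a reading into throughput before comparing across tests. The sketch below shows only the arithmetic; the function name and the sample readings are hypothetical and are not taken from our results.

```python
def iops_to_mib_per_s(iops: float, block_size_kib: int) -> float:
    """Convert an IOPS figure at a fixed block size to throughput in MiB/s."""
    return iops * block_size_kib / 1024


# Hypothetical (block size in KiB, IOPS) readings, NOT values from the graphs.
samples = [(4, 20000), (8, 15000), (16, 9000), (32, 5000)]

for block_size_kib, iops in samples:
    throughput = iops_to_mib_per_s(iops, block_size_kib)
    print(f"{block_size_kib:>2}k @ {iops:>6} IOPS = {throughput:7.1f} MiB/s")
```

A lower IOPS number at a larger block size can still mean more data moved per second, which is worth keeping in mind when reading the 16k and 32k graphs.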

In the 4k Sequential Read test, we see that the OpenSolaris and Nexenta systems both outperform the Promise M610i by a significant margin as the disk queue increases. This is a direct effect of the L2ARC cache. Interestingly enough, the OpenSolaris and Nexenta systems trend identically, but the Nexenta system is measurably slower than the OpenSolaris system. We are unsure why, as they are running on the same hardware and the build of Nexenta we ran was based on the same build of OpenSolaris that we tested. We contacted Nexenta about this performance gap, but they did not have an explanation. One hypothesis is that the Nexenta software uses more memory for things like the web GUI, leaving less ARC available to the Nexenta solution than to a regular OpenSolaris solution.

In the 4k Random Write test, again the OpenSolaris and Nexenta systems come out ahead of the Promise M610i. The Promise box is nearly flat, an indicator that it reaches the limits of its hardware quite quickly. The OpenSolaris and Nexenta systems write faster as the disk queue increases. This seems to indicate a better re-ordering of data to make the writes more sequential on the disks.
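To illustrate the general idea of re-ordering, here is a toy sketch that sorts a queue of pending writes by offset and merges contiguous ones into longer runs. It is not how ZFS is implemented (ZFS batches writes into transaction groups and allocates space copy-on-write); the function name and the queue contents are made up for illustration. It only shows why a deeper queue gives a storage system more opportunity to turn random writes into sequential ones.

```python
def coalesce_writes(writes):
    """Sort queued (offset, length) writes and merge contiguous ones into runs."""
    runs = []
    for offset, length in sorted(writes):
        if runs and runs[-1][0] + runs[-1][1] == offset:
            # This write starts exactly where the previous run ends: extend it.
            runs[-1] = (runs[-1][0], runs[-1][1] + length)
        else:
            # Not contiguous with the previous run: start a new one.
            runs.append((offset, length))
    return runs


# Hypothetical queue of eight 4k writes (offsets in KiB), arriving in random order.
queued = [(40, 4), (0, 4), (44, 4), (8, 4), (4, 4), (100, 4), (48, 4), (104, 4)]

runs = coalesce_writes(queued)
print(f"{len(queued)} queued writes become {len(runs)} sequential runs: {runs}")
```

With only one or two writes in the queue there is almost nothing to merge, which is consistent with the systems looking similar at the lowest queue depths.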

The 4k 67% Write 33% Read test again gives the edge to the OpenSolaris and Nexenta systems, while the Promise M610i is nearly flat lined. This is most likely a result of both re-ordering writes and the very effective L2ARC caching.

4k Random Reads again come out in favor of the OpenSolaris and Nexenta systems. While the Promise M610i does increase its performance as the disk queue increases, it's nowhere near the levels of performance that the OpenSolaris and Nexenta systems can deliver with their L2ARC caching.

8k Random Reads indicate a similar trend to the 4k Random Reads with the OpenSolaris and Nexenta systems outperforming the Promise M610i. Again, we see the OpenSolaris and Nexenta systems trending very similarly but with the OpenSolaris system significantly outperforming the Nexenta system.

8k Sequential reads have the OpenSolaris and Nexenta systems trailing at the first data point, and then running away from the Promise M610i at higher disk queues. It's interesting to note that the Nexenta system outperforms the OpenSolaris system at several of the data points in this test.

8k Random writes play out like most of the other tests we've seen with the OpenSolaris and Nexenta systems taking top honors, with the Promise M610i trailing. Again, OpenSolaris beats out Nexenta on the same hardware.

8k Random 67% Write 33% Read again favors the OpenSolaris and Nexenta systems, with the Promise M610i trailing. While the OpenSolaris and Nexenta systems start off nearly identical for the first 5 data points, at a disk queue of 24 or higher the OpenSolaris system steals the show.

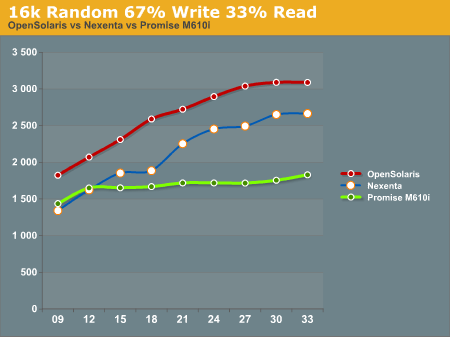

16k Random 67% Write 33% Read gives us a show that we're familiar with. OpenSolaris and Nexenta both soundly beat the Promise M610i at higher disk queues. Again we see the pattern of the OpenSolaris and Nexenta systems trending nearly identically, but the OpenSolaris system outperforming the Nexenta system at all data points.

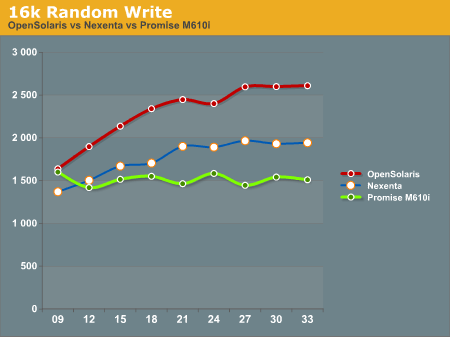

16k Random write shows the Promise M610i starting off faster than the Nexenta system and nearly on par with the OpenSolaris system, but quickly flattening out. The Nexenta box again trends higher, but cannot keep up with the OpenSolaris system.

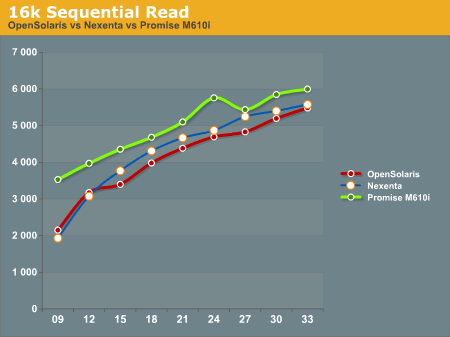

The 16k Sequential Read test is the first test in which the Promise M610i outperforms OpenSolaris and Nexenta at all data points. The OpenSolaris system and the Nexenta system both trend upwards at the same rate, but cannot catch the M610i.

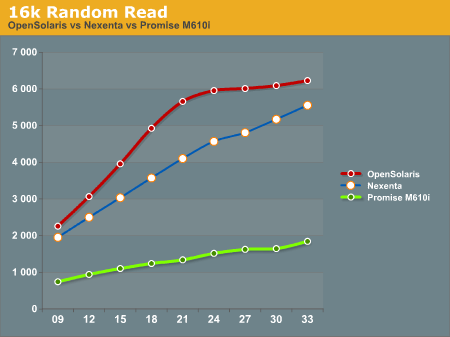

The 16k Random Read test goes back to the same pattern that we've been seeing, with the OpenSolaris and Nexenta systems running away from the Promise M610i. Again we see the OpenSolaris system take top honors with the Nexenta system trending similarly, but never reaching the performance metrics seen on the OpenSolaris system.

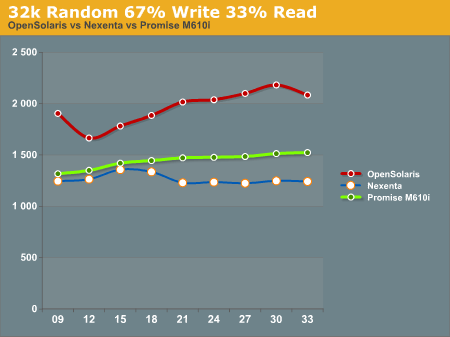

32k Random 67% Write 33% read has the OpenSolaris system on top, with the Promise M610i in second place, and the Nexenta system trailing everything. We're not really sure what to make of this, as we expected the Nexenta system to follow similar patterns to what we had seen before.

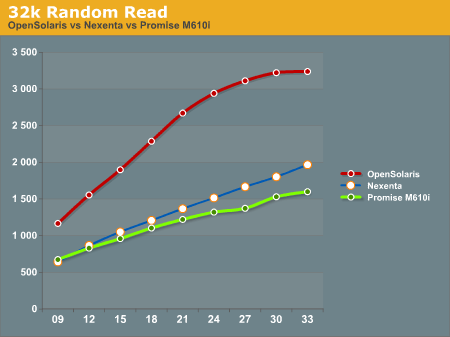

32k Random Read has the OpenSolaris system running away from everything else. On this test the Nexenta system and the Promise M610i are very similar, with the Nexenta system edging out the Promise M610i at the highest queue depths.

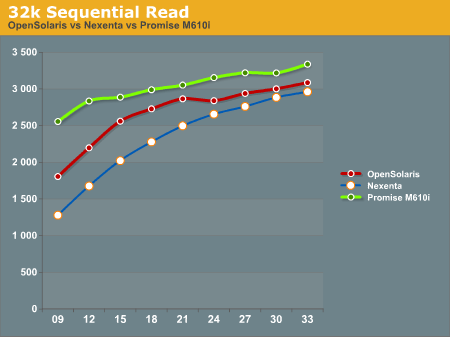

32k Sequential Reads proved to be a strong point for the Promise M610i. It outperformed the OpenSolaris and Nexenta systems at all data points. Clearly there is something in the Promise M610i that helps it excel at 32k Sequential Reads.

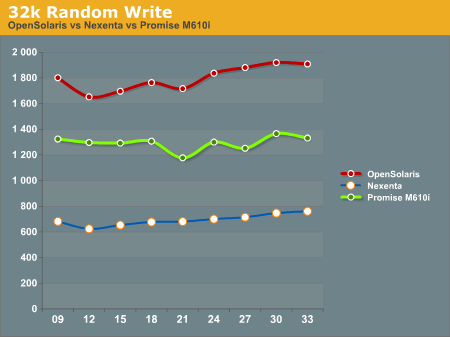

32k random writes have the OpenSolaris system on top again, with the Promise M610i in second place, and the Nexenta system trailing far behind. All of the graphs trend similarly, with little dips and rises, but not ever moving much from the initial reading.

After all the tests were done, we had to sit down and take a hard look at the results and try to formulate some ideas about how to interpret this data. We will discuss this in our conclusion.

102 Comments


MGSsancho - Tuesday, October 5, 2010 - link

I haven't tried this myself yet, but how about using 8 KB blocks and jumbo frames on your network? Possibly lower throughput from padding to fill the 9 KB frame, in exchange for lower latency? I have no idea, as this is just a theory. Dudes in the #opensolaris IRC channel have always recommended 128K or 64K depending on the data.

solori - Wednesday, October 20, 2010 - link

One easy way to check this would be to export the pool from OpenSolaris and directly import it to NexentaStor and re-test. I think you'll find that the differences - as your benchmarks describe - are more linked to write caching at the disk level than partition alignment.

NexentaStor is focused on data integrity, and tunes for that very conservatively. Since SATA disks are used in your system, NexentaStor will typically disable disk write cache (write hit) and OpenSolaris may typically disable device cache flush operations (write benefit). These two feature differences can provide the benchmark differences you're seeing.

Also, some "workstation" tuning includes the disabling of ZIL (performance benefit). This is possible - but not recommended - in NexentaStor but has the side effect of risking application data integrity. Disabling the ZIL (in the absence of SLOG) will result in synchronous writes being committed only with transaction group commits - similar performance to having a very fast SLOG (lots of ARC space helpful too).

fmatthew5876 - Tuesday, October 5, 2010 - link

I'd be very interested to see how FreeBSD ZFS benchmark results would compare to Nexenta and OpenSolaris.

mbreitba - Tuesday, October 5, 2010 - link

We have benchmarked FreeNAS's implementation of ZFS on the same hardware, and the performance was abysmal. We've considered looking into the latest releases of FreeBSD but have not completed any of that testing yet.

jms703 - Tuesday, October 5, 2010 - link

Have you benchmarked FreeBSD 8.1? There were a huge number of performance fixes in 8.1.

Also, when was this article written? OpenSolaris was killed by Sun on August 13th, 2010.

mbreitba - Tuesday, October 5, 2010 - link

There was a lot of work on this article just prior to the official announcement. The development of the Illumos foundation and subsequent OpenIndiana has been so rapidly paced that we wanted to get this article out the door before diving into OpenIndiana and any other OpenSolaris derivatives. We will probably add more content at a later date talking about the demise of OpenSolaris and the open source alternatives that have started popping up.

MGSsancho - Tuesday, October 5, 2010 - link

Not to mention that projects like illumos are currently not recommended for production; they are currently meant only as a base for other distros (OpenIndiana). Then there is Solaris 11, due soon. I'll try out the express version when it's released.

cdillon - Tuesday, October 5, 2010 - link

FreeNAS 0.7.x is still using FreeBSD 7.x, and the ZFS code is a bit dated. FreeBSD 8.x has newer ZFS code (v15). Hopefully very soon FreeBSD 9.x will have the latest ZFS code (v24).

piroroadkill - Tuesday, October 5, 2010 - link

This is relevant to my interests, and I've been toying with the idea of setting up a ZFS based server for a while.

It's nice to see the features it can use when you have the hardware for it.

cgaspar - Tuesday, October 5, 2010 - link

You say that all writes go to a log in ZFS. That's just not true. Only synchronous writes below a certain size go into the log (either built into the pool, or a dedicated log device). All writes are held in memory in a transaction group, and that transaction group is written to the main pool at least every 10 seconds by default (in OpenSolaris - it used to be 30 seconds, and still is in Solaris 10 U9). That's tunable, and commits will happen more frequently if required, based on available ARC and data churn rate. Note that _all_ writes go into the transaction group - the log is only ever used if the box crashes after a synchronous write and before the txg commits.

Now for the caution - you have chosen SSDs for your SLOG that don't have a backup power source for their on board caches. If you suffer power loss, you may lose data. Several SLC SSDs have recently been released that have a supercapacitor or other power source sufficient to write cache data to flash on power loss, but the current Intel lineup doesn't have it. I believe the next generation Intel SSDs will.